OpenClaw local models most often fail because of a streaming protocol bug (GitHub #5769) that breaks tool calling for Ollama models. There is no config switch for it yet: the current workaround is patching OpenClaw's source to disable streaming when tools are present. The other failures come from model discovery timeouts, context windows under 64K, WSL2 and remote-host networking, misrouted Ollama calls, and models that are too small for agent work. Below are all five failure modes and their fixes.

The streaming bug, the tool calling trap, and the context window lie. Real fixes from 50+ GitHub issues.

The model worked perfectly in the terminal. I'd just watched Ollama respond to "hello" in under two seconds. Clean JSON response. Model loaded. Everything fine.

Then I opened the OpenClaw dashboard. Typed the same message. Watched the typing indicator spin. And spin. And spin.

No response. No error message. No log entry that made sense. Just silence.

The model works. OpenClaw doesn't see it. What is happening?

If you've searched "OpenClaw local model not working" or "OpenClaw Ollama setup fails," you've found the right article. I spent two weeks digging through 50+ GitHub issues, Discord threads, and community reports to figure out exactly why local models break in OpenClaw and what to do about each failure mode.

There are five distinct ways it fails. Each one has a different fix. And one of them is a fundamental architectural limitation that no config change will solve.

The #1 failure: Tool calling silently breaks with streaming

This is the bug that burns the most people. It's documented in GitHub Issue #5769, and it affects every Ollama model configured through OpenClaw.

Here's what happens: OpenClaw always sends stream: true when making model calls. This is fine for cloud providers like Anthropic and OpenAI. But Ollama's streaming implementation doesn't properly emit tool_calls delta chunks. When a local model decides to call a tool (exec, web_search, browser, file read), the streaming response returns empty content with finish_reason: "stop", losing the tool call entirely.

The result: your agent can chat, but it can't do anything. No file reading. No web searches. No shell commands. No skill execution. It just produces narrative text describing what it would do, instead of actually doing it.

This is a known Ollama limitation tracked in their own issues (ollama/ollama#9632 and ollama/ollama#12557). It affects Mistral, Qwen, and most other local models.
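If you want to confirm the symptom rather than guess from the UI, you can inspect the reply after the streamed chunks are merged. The sketch below is a heuristic, not OpenClaw code: the `AssembledReply` shape and the `looksLikeDroppedToolCall` name are assumptions made up for illustration, modeled on the symptom described above (tools offered, empty content, finish_reason "stop", no tool calls).

```typescript
// Hypothetical shape of a reply after all streamed chunks are merged.
// Field names are assumptions for illustration, not OpenClaw's types.
interface AssembledReply {
  content: string;
  finishReason: string;
  toolCalls: unknown[];
}

// Heuristic: tools were offered, yet the reply came back empty with
// finish_reason "stop" and no tool calls. That matches the symptom of
// a tool call lost during streaming.
function looksLikeDroppedToolCall(reply: AssembledReply, toolsOffered: number): boolean {
  return (
    toolsOffered > 0 &&
    reply.toolCalls.length === 0 &&
    reply.content.trim() === "" &&
    reply.finishReason === "stop"
  );
}
```

This only catches the empty-reply case. A model that narrates what it would do, without calling anything, returns non-empty content and needs a human eye.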

If your OpenClaw agent talks about using tools instead of actually using them, you've hit the streaming + tool calling bug. It's not your config. It's an architectural mismatch.

The current workaround requires modifying OpenClaw's source code to disable streaming when tools are present. The community has proposed a config option (stream: false per provider), but it hasn't been merged yet. The suggested fix looks like this:

const shouldStream = !(context.tools?.length && isOllamaProvider(model))
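To make that one-liner easier to reason about, here is a self-contained sketch of the same decision. The `ModelRef` and `CallContext` shapes and the body of `isOllamaProvider` are my assumptions; the proposal only names the helper and OpenClaw's real types may differ.

```typescript
// Minimal stand-ins for OpenClaw's internal types (assumed shapes).
interface ModelRef {
  provider: string; // e.g. "ollama", "anthropic"
}

interface CallContext {
  tools?: unknown[];
}

// Hypothetical helper: the proposal names it but does not show its body.
function isOllamaProvider(model: ModelRef): boolean {
  return model.provider.toLowerCase() === "ollama";
}

// Stream unless the call carries tools AND targets Ollama.
function shouldStream(context: CallContext, model: ModelRef): boolean {
  const hasTools = (context.tools?.length ?? 0) > 0;
  return !(hasTools && isOllamaProvider(model));
}
```

The point of the rule is that plain chat through Ollama keeps streaming, and cloud providers are untouched. Only the tools-plus-Ollama combination falls back to a single non-streamed response.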

Until this lands in a release, local models through Ollama are effectively limited to chat-only interactions. They can't perform agent actions. Which means they can't do most of what makes OpenClaw useful.

The #2 failure: "No response" in the dashboard (but Ollama works fine)

This one shows up in Issues #7791, #29120, and #31577. The pattern is identical every time:

You run ollama run qwen3:8b in the terminal. It responds instantly. You open the OpenClaw dashboard or TUI. You type a message. The typing indicator appears. No response ever comes. CPU usage spikes to 50%. Ollama loads the model into memory. But nothing reaches the UI.

The root cause is usually one of three things:

Model discovery timeout. OpenClaw tries to auto-discover Ollama models on startup. If Ollama is slow to respond (common on Windows WSL2 setups or when the model isn't pre-loaded), discovery times out silently. Your gateway starts, but it can't actually talk to the model. Check your logs for: Failed to discover Ollama models: TimeoutError.

Context window mismatch. OpenClaw recommends at least 64K token context for agent operations. Many local models default to much less. A 3B model like Qwen2.5:3b with 32K context will choke on OpenClaw's system prompts, which are larger than most people realize. The gateway doesn't tell you this. It just hangs.

WSL2 networking. If you're running OpenClaw in WSL2 and Ollama on the Windows host (or vice versa), 127.0.0.1 doesn't always resolve correctly across the boundary. Issue #29120 documents this exact scenario. The fix: use the WSL2 IP address from hostname -I instead of localhost.
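The three causes above can be turned into a quick checklist. This is a sketch under stated assumptions: the `Symptoms` fields are values you would collect by hand, the cause labels are invented for this example, and "64K" is read as 65,536 tokens.

```typescript
// Inputs gathered by hand; all names here are illustrative.
interface Symptoms {
  gatewayLogLines: string[];
  modelContextTokens: number; // context length the model actually runs with
  baseUrl: string; // Ollama URL from your OpenClaw config
  crossesWsl2Boundary: boolean; // OpenClaw and Ollama on opposite sides of WSL2
}

const MIN_CONTEXT_TOKENS = 64 * 1024; // "64K" read as 65,536; an assumption

function suspectedCauses(s: Symptoms): string[] {
  const causes: string[] = [];
  if (s.gatewayLogLines.some((line) => line.includes("Failed to discover Ollama models"))) {
    causes.push("discovery-failed");
  }
  if (s.modelContextTokens < MIN_CONTEXT_TOKENS) {
    causes.push("context-too-small");
  }
  const usesLoopback = /\/\/(127\.0\.0\.1|localhost)(:|\/|$)/.test(s.baseUrl);
  if (s.crossesWsl2Boundary && usesLoopback) {
    causes.push("wsl2-loopback");
  }
  return causes;
}
```

More than one cause can apply at once, which is why the function returns a list instead of stopping at the first match.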

For more context on how OpenClaw's agent architecture actually works and why it needs such large context windows, our explainer covers the system prompt structure and gateway model.

The #3 failure: Ollama models not detected by OpenClaw

Issue #22913 captures this perfectly. You have five models loaded in Ollama. ollama list shows them all. But openclaw models list only shows your API-based providers. The local models are invisible.

This happens because OpenClaw's model scanning prioritizes API providers. When Ollama model discovery fails (timeout, connection issue, or just a race condition during startup), OpenClaw doesn't retry. It silently falls back to whatever API models are configured.

The fix depends on your setup:

If discovery fails on startup, try pre-loading your model with ollama run model_name in a separate terminal before starting the OpenClaw gateway.

If using a remote Ollama server (different machine), make sure the baseUrl in your config points to the correct IP and port. Issue #14053 documents how http://127.0.0.1:11434 fails when Ollama runs on a different host, even though curl to the same URL works fine. Use the actual network IP.

If on WSL2, bind Ollama to 0.0.0.0 instead of localhost: OLLAMA_HOST=0.0.0.0:11434 ollama serve.
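Before changing anything, it helps to know exactly which models are invisible. Ollama's GET /api/tags endpoint returns the local models as a `models` array with a `name` field per entry. The sketch below compares that list against whatever `openclaw models list` printed. How OpenClaw names Ollama models (I assume an optional `ollama/` prefix) is a guess, so adjust the normalization to what you actually see.

```typescript
// The part of Ollama's GET /api/tags response this check needs.
interface OllamaTags {
  models: { name: string }[];
}

// Returns Ollama model names that OpenClaw does not list.
// The "ollama/" prefix handling is an assumption about OpenClaw's naming.
function invisibleModels(tags: OllamaTags, openclawModels: string[]): string[] {
  const seen = new Set(openclawModels.map((m) => m.replace(/^ollama\//, "")));
  return tags.models.map((m) => m.name).filter((name) => !seen.has(name));
}
```

If every Ollama model comes back as invisible, discovery failed outright. If only some do, look at naming mismatches first.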

The #4 failure: OpenClaw calls the Ollama CLI instead of the API

This one is genuinely bizarre. Issue #11283 documents it.

You configure Ollama as a remote provider with a baseUrl pointing to a GPU server. OpenClaw should make API calls to that endpoint. Instead, it tries to execute ollama run model_name as a shell command on the local machine. Since Ollama isn't installed locally, it fails.

The agent log shows it clearly: the model generates a toolCall to exec with the command ollama run llama3:8b "Hello from Llama 3 8B". It's treating Ollama as a CLI tool rather than an API provider.

This happens when OpenClaw's model routing falls back to a cloud model (usually Claude) and that cloud model tries to be "helpful" by executing Ollama commands. The fix: make sure your config explicitly defines the Ollama model in the models.providers section with api: "ollama" and that the model is listed in the models array. Don't rely on auto-discovery for remote Ollama.
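Here is a rough way to sanity-check that explicit definition. The config shape is an assumption built only from the keys named above (models.providers, api, baseUrl, models). The `ollama` provider key and the `id` field on model entries are hypothetical, and OpenClaw's real schema may differ.

```typescript
// Assumed config shape, built only from the keys named in the text.
interface ProviderConfig {
  api?: string;
  baseUrl?: string;
  models?: { id: string }[]; // "id" is a hypothetical field name
}

interface OpenClawConfig {
  models?: { providers?: Record<string, ProviderConfig> };
}

// Lists what is missing from an explicit Ollama provider definition.
function checkOllamaProvider(config: OpenClawConfig, modelId: string): string[] {
  const provider = config.models?.providers?.["ollama"];
  if (!provider) return ["no models.providers.ollama entry"];
  const problems: string[] = [];
  if (provider.api !== "ollama") problems.push('api is not "ollama"');
  if (!provider.baseUrl) problems.push("baseUrl is missing");
  if (!(provider.models ?? []).some((m) => m.id === modelId)) {
    problems.push(`model ${modelId} is not in the models array`);
  }
  return problems;
}
```

An empty result means the provider is defined explicitly, so routing has no reason to fall back to a cloud model for that model name.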

If you want to see the full Ollama configuration process and the common failure modes in action, this community walkthrough covers setup, debugging, and the workarounds for the most frequent issues.

Watch on YouTube: OpenClaw with Ollama Local Models Setup and Troubleshooting (Community content)

The #5 failure: The model just isn't smart enough

Here's the one nobody wants to hear.

Even if you fix every configuration issue, most local models under 30B parameters can't reliably perform agent tasks. They can chat. They can answer questions. But OpenClaw agents need to make multi-step decisions, call tools with precise syntax, maintain context over long conversations, and follow complex system prompts.

Community benchmarks from OpenClaw's GitHub discussions are consistent: models under 30B parameters frequently fail on tool use and reasoning. The tool calling format needs to be exact. One misformatted JSON response and the skill execution fails silently.
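To see how little it takes, here is a sketch of a strict tool-call check that reports why a call was rejected instead of dropping it silently. The `ToolCall` shape (a name plus an arguments object) is an assumption for illustration, not OpenClaw's internal format.

```typescript
// Assumed tool-call shape: a tool name plus an arguments object.
interface ToolCall {
  name: string;
  arguments: Record<string, unknown>;
}

type ParseResult =
  | { ok: true; call: ToolCall }
  | { ok: false; error: string };

// Parse a model's raw tool-call text and say why it was rejected.
function parseToolCall(raw: string): ParseResult {
  let value: unknown;
  try {
    value = JSON.parse(raw);
  } catch {
    return { ok: false, error: "not valid JSON" };
  }
  if (typeof value !== "object" || value === null || Array.isArray(value)) {
    return { ok: false, error: "not a JSON object" };
  }
  const obj = value as Record<string, unknown>;
  const name = obj["name"];
  if (typeof name !== "string" || name === "") {
    return { ok: false, error: "missing tool name" };
  }
  const args = obj["arguments"];
  if (typeof args !== "object" || args === null || Array.isArray(args)) {
    return { ok: false, error: "arguments must be an object" };
  }
  return { ok: true, call: { name, arguments: args as Record<string, unknown> } };
}
```

A single missing closing brace is enough to land in the "not valid JSON" branch, which is the kind of slip small models make often.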

The models that work best locally (according to community reports):

glm-4.7-flash (requires ~25GB VRAM): strong reasoning and code generation, called "huge bang for the buck" by multiple users.

qwen3-coder-30b: good for code-heavy agent workflows, requires significant hardware.

hermes-2-pro and mistral:7b: recommended by Ollama's own docs for tool calling, but limited reasoning depth.

For anything under 8B parameters, expect frequent tool call failures, context loss, and hallucinated skill executions. These models are fine for simple chat. They're not fine for autonomous agent operations.

Local models work for chat. They mostly don't work for agent actions. That's not a config problem. It's a capability gap.

If you don't want to deal with model compatibility issues, tool calling bugs, or hardware requirements, Better Claw supports 28+ cloud providers with BYOK and zero configuration. $19/month per agent. Point it at Claude, GPT, DeepSeek, or Gemini and your agent works in 60 seconds. No Ollama debugging required.

The cheap cloud alternative that changes the math

Here's the part that shifted my thinking.

I spent a week debugging local Ollama issues because I wanted to avoid API costs. The appeal of $0/month is strong. But the reality is: you're paying in time, frustration, and missing features instead of money.

Meanwhile, cloud providers in 2026 have gotten absurdly cheap:

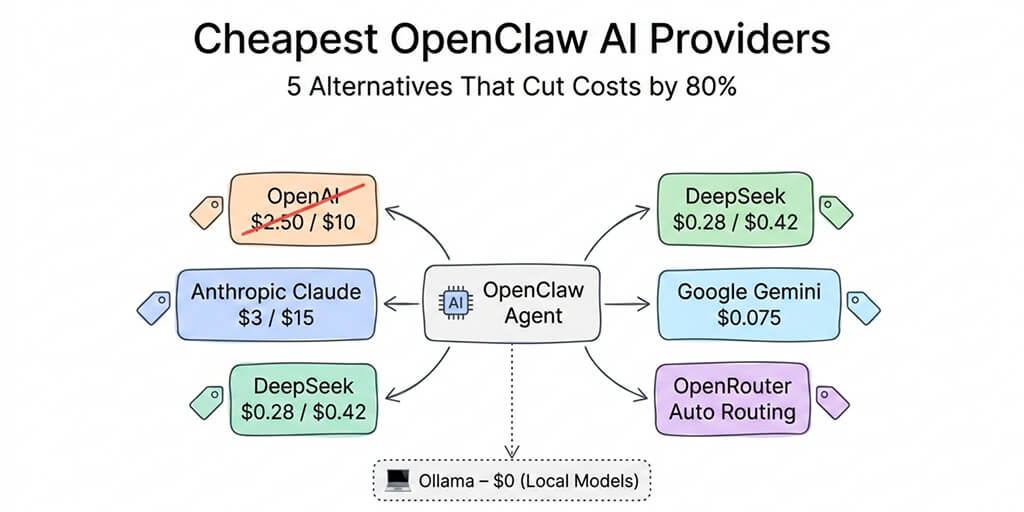

Google Gemini 2.5 Flash: free tier with 1,500 requests/day. DeepSeek V3.2: $0.28/$0.42 per million input/output tokens. Claude Haiku 4.5: $1/$5 per million input/output tokens.

A full month of moderate agent usage on DeepSeek costs $3-8. On Gemini's free tier, it costs nothing. These providers have reliable tool calling, large context windows, and no streaming bugs.
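The arithmetic behind that range is simple enough to check yourself. In this sketch the paired prices are read as input/output rates per million tokens, and the monthly workload of 10M input and 5M output tokens is an assumption I picked to stand in for "moderate" usage. Substitute your own numbers.

```typescript
// Prices in USD per million tokens, read as input/output.
interface Pricing {
  inputPerMillion: number;
  outputPerMillion: number;
}

const deepseek: Pricing = { inputPerMillion: 0.28, outputPerMillion: 0.42 };
const haiku: Pricing = { inputPerMillion: 1, outputPerMillion: 5 };

// Monthly cost in USD, rounded to cents.
function monthlyCost(p: Pricing, inputTokens: number, outputTokens: number): number {
  const usd =
    (inputTokens / 1e6) * p.inputPerMillion +
    (outputTokens / 1e6) * p.outputPerMillion;
  return Math.round(usd * 100) / 100;
}

// Assumed workload: 10M input and 5M output tokens per month.
const deepseekCost = monthlyCost(deepseek, 10e6, 5e6); // 2.80 + 2.10
const haikuCost = monthlyCost(haiku, 10e6, 5e6); // 10 + 25
```

Under that assumed workload DeepSeek lands inside the $3-8 range, while the same traffic on Claude Haiku 4.5 costs several times more.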

The real costs of running OpenClaw on cheap cloud models are often less than what you'd spend on electricity keeping a GPU-capable machine running 24/7 for local inference.

For a full comparison of Gemini vs local models for OpenClaw, our cost guide covers the exact math.

When local models actually make sense

I'm not going to pretend local models are always wrong. They make sense in specific scenarios:

Privacy-first deployments where no data can leave your network. Government, healthcare, legal work where data sovereignty is non-negotiable.

Experimentation and learning where you want to understand how OpenClaw works without any API commitment.

Offline environments where internet access is unreliable or unavailable.

Supplementary use as a heartbeat or sub-agent model while keeping a cloud provider for primary interactions. This hybrid approach saves money on automated operations while maintaining quality for interactions that matter. Our model routing guide covers how to set this up.
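The hybrid idea boils down to one routing rule. The sketch below is illustrative only: `TaskKind`, `Route`, and `routeTask` are names I made up, and OpenClaw's actual routing is configured differently.

```typescript
type TaskKind = "heartbeat" | "sub-agent" | "primary";

interface Route {
  provider: "ollama" | "cloud";
  reason: string;
}

// Illustrative rule, not OpenClaw's router: background work goes local,
// and anything that needs tools stays on the cloud provider.
function routeTask(kind: TaskKind, needsTools: boolean): Route {
  if (needsTools) {
    return { provider: "cloud", reason: "tool calls are unreliable through Ollama streaming" };
  }
  if (kind === "heartbeat" || kind === "sub-agent") {
    return { provider: "ollama", reason: "chat-only background work" };
  }
  return { provider: "cloud", reason: "primary interactions" };
}
```

Checking `needsTools` first is deliberate. A sub-agent that has to run a shell command still goes to the cloud, because the streaming bug makes local tool calls unreliable.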

For anything else (personal productivity, business automation, customer-facing agents), cloud providers offer better reliability, better tool calling, and surprisingly competitive pricing.

The honest takeaway

OpenClaw with local models is not a "just works" experience in 2026. The tool calling streaming bug alone means your agent can't perform most useful actions. The discovery issues, context window mismatches, and WSL2 networking problems add layers of frustration on top.

The community is working on fixes. The streaming issue has proposed patches. Model capabilities improve every few months. Local-first OpenClaw will get better.

But right now, the fastest path to a working OpenClaw agent is a cheap cloud provider. Or even better, a managed platform that handles provider configuration, model routing, and infrastructure entirely.

If you've been fighting Ollama configs and silent failures, give Better Claw a try. $19/month per agent, BYOK with any of the 28+ supported providers, and your first agent deploys in 60 seconds. No streaming bugs. No discovery timeouts. No context window mismatches. Just an agent that works.

Frequently Asked Questions

Why is my OpenClaw local model not working?

The most common cause is the tool calling streaming bug (GitHub Issue #5769). OpenClaw sends stream: true to all providers, but Ollama's streaming implementation drops tool call responses. This means your local model can chat but can't execute tools, skills, or actions. Other causes include model discovery timeouts, context window mismatches (OpenClaw needs 64K+ tokens), and WSL2 networking issues. Check your gateway logs for "Failed to discover Ollama models" or "fetch failed" messages.

How does Ollama compare to cloud providers for OpenClaw?

Ollama gives you zero API costs and full privacy, but local models under 30B parameters struggle with reliable tool calling, multi-step reasoning, and long-context accuracy. Cloud providers like Claude Sonnet ($3/$15 per million tokens) and DeepSeek ($0.28/$0.42) offer reliable tool calling, larger context windows, and consistent performance. Gemini 2.5 Flash has a free tier with 1,500 requests/day. For production agents, cloud providers are significantly more reliable.

How do I fix OpenClaw Ollama tool calling?

The core issue is OpenClaw's stream: true default breaking Ollama's tool call responses. The community workaround requires modifying OpenClaw's source to disable streaming when tools are present for Ollama providers. Until this is merged into a release, the practical fix is to use Ollama for chat-only tasks and a cloud provider for tool-dependent operations. Set Ollama as your heartbeat model and a cloud model as your primary.

Is it worth running OpenClaw on local models to save money?

Depends on your definition of "worth." You save $3-15/month in API costs (what cheap cloud providers charge) but spend hours debugging streaming bugs, discovery issues, and tool calling failures. Local models require 16GB+ RAM for 8B models and a GPU for anything larger. For privacy-first requirements, local models are essential. For cost savings alone, DeepSeek at $0.28/$0.42 per million input/output tokens or Gemini's free tier are cheaper than the electricity for 24/7 local inference.

Which local models work best with OpenClaw?

Community reports recommend glm-4.7-flash (~25GB VRAM, strong reasoning), qwen3-coder-30b (good for code tasks), and hermes-2-pro or mistral:7b (Ollama's recommended tool calling models). Models under 8B parameters frequently fail on agent tasks due to inadequate tool calling capability and limited context windows. For reliable local agent operations, plan for 30B+ models with at least 64K context.

Related Reading

- OpenClaw Ollama "Fetch Failed" Fix — Specific Ollama connection error troubleshooting

- "Model Does Not Support Tools" Fix — Tool calling failures with local models

- OpenClaw Local Model Hardware Requirements — RAM, GPU, and storage specs for local inference

- OpenClaw Memory Fix Guide — Memory issues that compound with local model limitations

- OpenClaw Not Working: Every Fix in One Guide — Master troubleshooting guide for all common errors