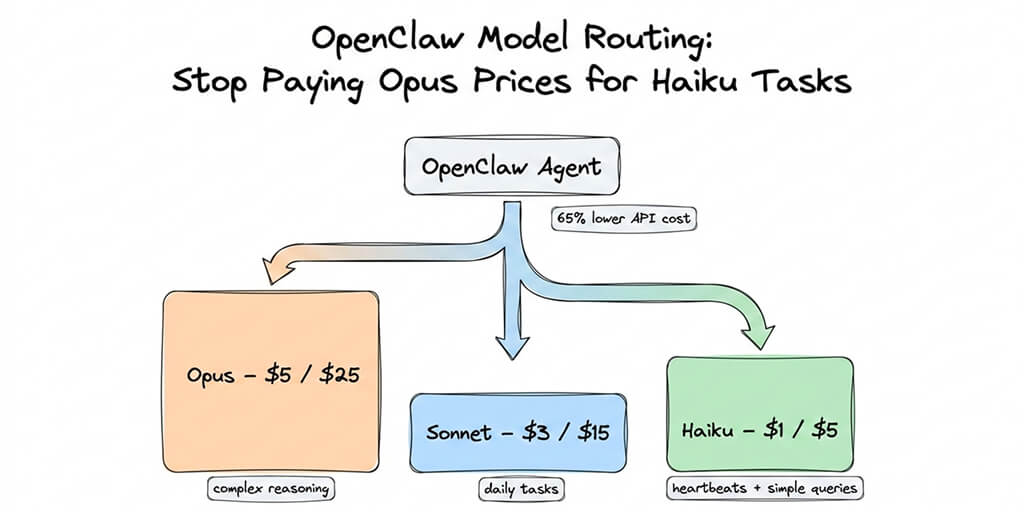

The one config change that cut our OpenClaw API bill by 65%. Here's exactly how to set it up.

I opened my Anthropic dashboard on a Monday morning and stared at the number. $214. One week. One agent.

The agent was doing good work. Morning briefings. Calendar management. Email triage. Research tasks. Cron jobs running every 30 minutes to check for updates.

But $214 in seven days? For a personal AI assistant?

I dug into the usage logs. And that's when I saw it.

Every single task, from a complex research synthesis to a simple "are you still there?" heartbeat check, was routing through Claude Opus at $5 per million input tokens and $25 per million output tokens. My agent was using a $25/million-token model to check the weather.

That's like hiring a neurosurgeon to take your temperature.

Here's what nobody tells you about OpenClaw model routing: by default, everything goes to your primary model. Every heartbeat. Every sub-agent. Every quick calendar lookup. If your primary is Opus, you're paying Opus rates for tasks that Haiku could handle in its sleep.

One config change later, my weekly bill dropped to $74. Same agent. Same quality on the tasks that mattered. 65% savings.

This is that config change.

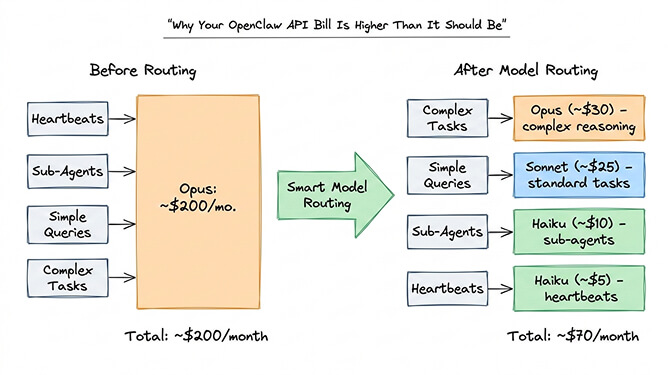

Why your OpenClaw API bill is higher than it should be

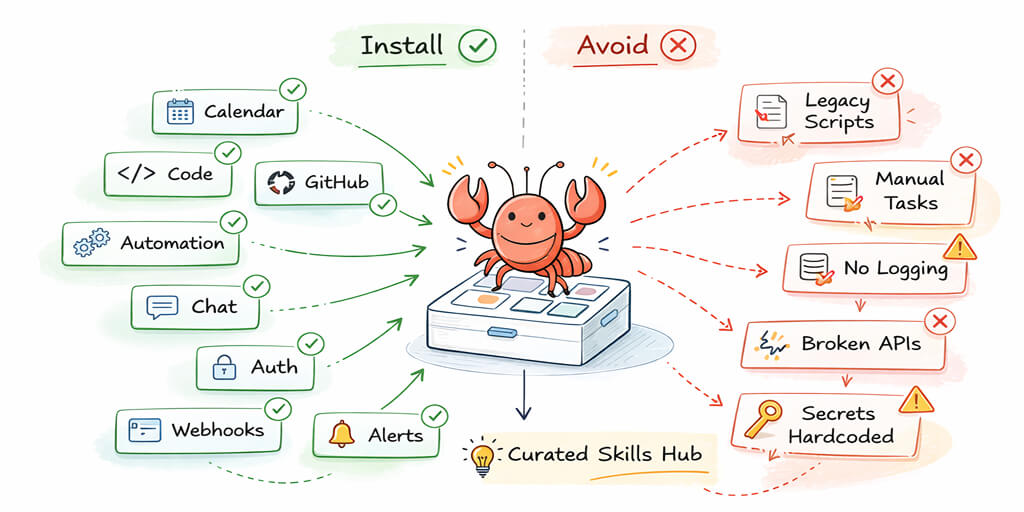

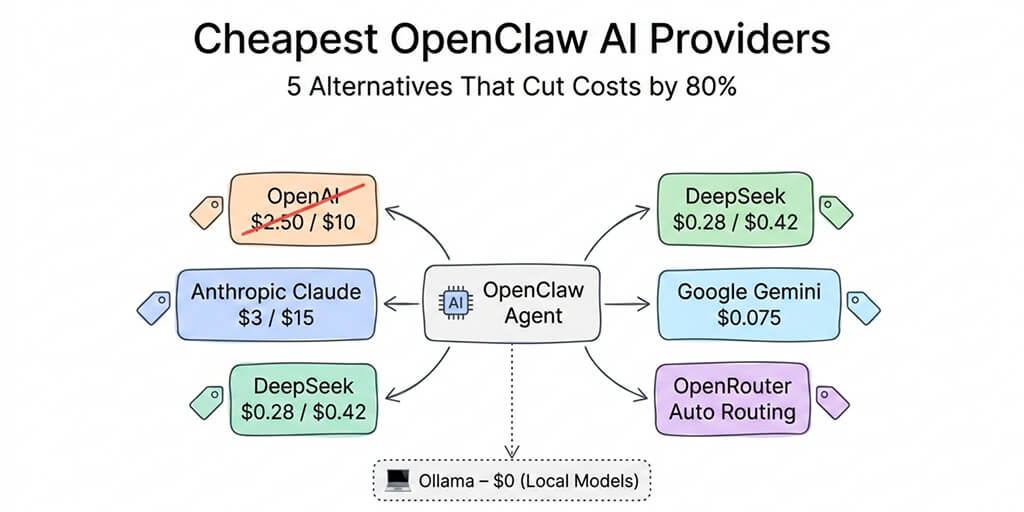

OpenClaw supports 28+ AI model providers. Claude, GPT, Gemini, DeepSeek, Mistral, local models through Ollama. The framework is genuinely model-agnostic.

But the default behavior is anything but smart about how it uses them.

When you set up OpenClaw, you pick a primary model. Most people choose something powerful: Claude Opus 4.6, GPT-4o, or Claude Sonnet 4.5. Makes sense. You want your agent to be capable.

The problem is that OpenClaw sends everything to that model.

Heartbeats are the biggest offender. These are periodic "are you still there?" checks that run every 30 minutes by default. They use your primary model. That's 48 Opus calls per day doing nothing more than confirming your agent is alive.

Sub-agents make it worse. When your main agent spawns parallel workers (researching multiple topics, checking multiple inboxes), each sub-agent defaults to the primary model too.

Simple queries round it out. "What's on my calendar today?" does not need Opus-level reasoning. But it gets Opus-level pricing.

One community member built a calculator to show the impact. For a light user (24 heartbeats per day, 20 sub-agent tasks, 10 queries), running everything through Opus costs roughly $200 per month. With smart routing, the same workload drops to about $70.

The viral Medium post "I Spent $178 on AI Agents in a Week" captured this exact pain. Most of that spend was waste.

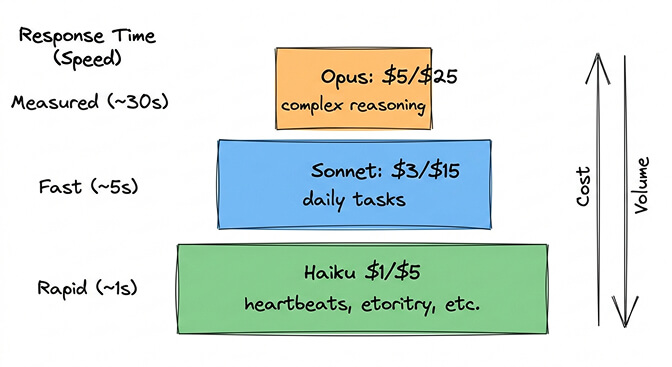

The model pricing math you need to know

Before we touch config files, here's the pricing reality for 2026.

Claude's current lineup (per million tokens, input/output):

Opus 4.6: $5 / $25. The flagship. Best reasoning, best for complex multi-step tasks.

Sonnet 4.6: $3 / $15. The workhorse. Matches or exceeds previous Opus quality for most tasks.

Haiku 4.5: $1 / $5. The sprinter. Fast, cheap, genuinely capable for straightforward work.

The output token pricing is where the real money goes. Opus output costs 5x what Haiku output costs. And agents generate a lot of output tokens, especially with extended thinking enabled.
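
To make that concrete, here's a minimal sketch of the per-call arithmetic. The prices come straight from the list above; the token counts in the example call are made-up numbers for illustration, not measurements from a real agent.

```python
# USD per million tokens as (input, output), copied from the pricing list above.
PRICES = {
    "opus": (5.00, 25.00),
    "sonnet": (3.00, 15.00),
    "haiku": (1.00, 5.00),
}


def call_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of a single API call."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical call: 3,000 tokens in, 1,000 tokens out.
for model in PRICES:
    print(f"{model}: ${call_cost(model, 3_000, 1_000):.4f}")
```

Note that in this example the 1,000 output tokens on Opus cost more than the 3,000 input tokens, which is why trimming output matters as much as trimming context.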

The right model for each task isn't always the smartest model. It's the cheapest model that does the job well enough.

For a deeper dive into how these costs compound across different OpenClaw usage patterns, we wrote a full breakdown of OpenClaw API costs with specific monthly projections.

OpenRouter makes the comparison even starker. DeepSeek V3.2 runs at $0.28 per million input tokens. Gemini Flash sits around $0.075. These aren't as capable as Claude for complex reasoning, but for a heartbeat check? More than sufficient.

How OpenClaw model routing actually works

OpenClaw's model configuration lives in ~/.openclaw/openclaw.json. The key section looks like this:

    {
      "agent": {
        "model": {
          "primary": "anthropic/claude-opus-4-6"
        },
        "models": {
          "anthropic/claude-opus-4-6": { "alias": "opus" },
          "anthropic/claude-sonnet-4-6": { "alias": "sonnet" },
          "anthropic/claude-haiku-4-5": { "alias": "haiku" }
        }
      }
    }

This gives you three things:

A primary model for complex reasoning (Opus).

Named aliases so you can switch mid-conversation with /model sonnet or /model haiku.

A model allowlist that restricts what's available.

But this alone doesn't route tasks intelligently. Everything still defaults to primary.

Here's where it gets interesting.

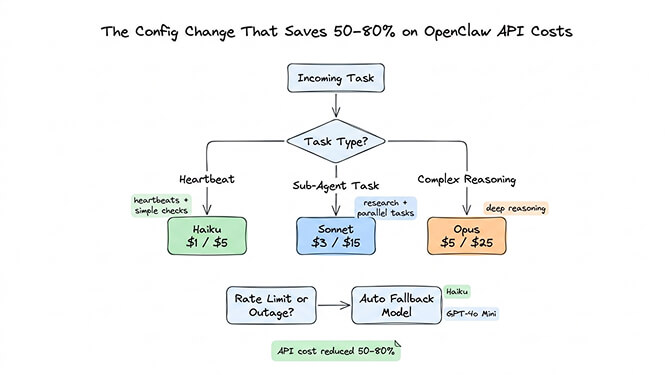

The config change that saves 50-80%

OpenClaw supports setting different models for different task types. The VelvetShark community guide documented this clearly, and here's the practical version.

In your openclaw.json, you can specify models for heartbeats, sub-agents, and the primary reasoning model separately:

    {
      "agent": {
        "model": {
          "primary": "anthropic/claude-opus-4-6",
          "heartbeat": "anthropic/claude-haiku-4-5",
          "subagent": "anthropic/claude-sonnet-4-6"
        }
      }
    }

That's it. Three lines.

Heartbeats now run on Haiku at $1/$5 instead of Opus at $5/$25. Sub-agents use Sonnet (plenty capable for parallel research tasks) at $3/$15. Only your primary interactions, the ones where you actually want top-tier reasoning, use Opus.
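
If you want to estimate what this does for your own workload, a rough sketch like the one below can help. The 48 heartbeats per day matches the 30-minute default; every other call count and token count here is an assumption I picked for illustration, so swap in numbers from your own usage logs.

```python
# USD per million tokens as (input, output), from the pricing section.
PRICES = {
    "opus": (5.00, 25.00),
    "sonnet": (3.00, 15.00),
    "haiku": (1.00, 5.00),
}

# role -> (calls per day, input tokens per call, output tokens per call).
# Only the 48 heartbeats/day comes from the article; the rest is assumed.
WORKLOAD = {
    "heartbeat": (48, 2_000, 100),
    "subagent": (20, 8_000, 1_500),
    "primary": (10, 6_000, 2_000),
}

# Everything on Opus versus the three-line routing config.
UNROUTED = {role: "opus" for role in WORKLOAD}
ROUTED = {"primary": "opus", "heartbeat": "haiku", "subagent": "sonnet"}


def daily_cost(routing: dict) -> float:
    """Sum the daily USD cost of the workload under a role -> model routing."""
    total = 0.0
    for role, (calls, tokens_in, tokens_out) in WORKLOAD.items():
        price_in, price_out = PRICES[routing[role]]
        total += calls * (tokens_in * price_in + tokens_out * price_out) / 1_000_000
    return total


before = daily_cost(UNROUTED)
after = daily_cost(ROUTED)
print(f"unrouted: ${before:.2f}/day, routed: ${after:.2f}/day")
print(f"saving: {100 * (1 - after / before):.0f}%")
```

The saving depends heavily on the mix. The larger the share of your traffic that is heartbeats and sub-agents, the more routing helps; if most of your spend is primary conversations on Opus, moving the primary model is what moves the bill.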

For a more aggressive setup, you can route the primary model to Sonnet and only escalate to Opus manually:

    {
      "agent": {
        "model": {
          "primary": "anthropic/claude-sonnet-4-6",
          "heartbeat": "anthropic/claude-haiku-4-5",
          "subagent": "anthropic/claude-haiku-4-5"
        },
        "models": {
          "anthropic/claude-opus-4-6": { "alias": "opus" },
          "anthropic/claude-sonnet-4-6": { "alias": "sonnet" },
          "anthropic/claude-haiku-4-5": { "alias": "haiku" }
        }
      }
    }

Now your default is Sonnet (which, in 2026, is genuinely excellent for 90% of tasks). When you need Opus for something complex, you type /model opus, handle the task, then /model sonnet to switch back.
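
A typo in a model reference is easy to miss in a config like this. The sketch below is a small sanity check you could run before restarting: it flags any role under agent.model whose model isn't listed under agent.models. The helper name is mine, and it assumes the allowlist is meant to cover role models too, which I haven't confirmed against OpenClaw's own validation.

```python
import json


def unlisted_role_models(config: dict) -> dict:
    """Return {role: model_ref} for roles whose model is missing from agent.models."""
    agent = config.get("agent", {})
    roles = agent.get("model", {})
    allowlist = agent.get("models", {})
    return {
        role: ref
        for role, ref in roles.items()
        # Skip non-string entries such as a "fallbacks" list.
        if isinstance(ref, str) and ref not in allowlist
    }


# The aggressive setup from above, inlined so the example is self-contained.
config = json.loads("""
{
  "agent": {
    "model": {
      "primary": "anthropic/claude-sonnet-4-6",
      "heartbeat": "anthropic/claude-haiku-4-5",
      "subagent": "anthropic/claude-haiku-4-5"
    },
    "models": {
      "anthropic/claude-opus-4-6": { "alias": "opus" },
      "anthropic/claude-sonnet-4-6": { "alias": "sonnet" },
      "anthropic/claude-haiku-4-5": { "alias": "haiku" }
    }
  }
}
""")

problems = unlisted_role_models(config)
print(problems if problems else "all role models are in the allowlist")
```

To check your real file, replace the inline string with the contents of ~/.openclaw/openclaw.json.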

Model fallbacks add another layer of savings. If your primary model hits rate limits or has an outage, OpenClaw can automatically fall back to a cheaper alternative instead of failing:

    {
      "agent": {
        "model": {
          "primary": "anthropic/claude-sonnet-4-6",
          "fallbacks": ["anthropic/claude-haiku-4-5", "openai/gpt-4o-mini"]
        }
      }
    }
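
Conceptually, a fallback chain is just "try each model in order until one answers." The sketch below illustrates that idea only; it is not OpenClaw's actual implementation, and ModelUnavailable, complete_with_fallbacks, and fake_call are hypothetical names standing in for a real client.

```python
from typing import Callable, Sequence


class ModelUnavailable(Exception):
    """Raised by the (hypothetical) client when a model is rate-limited or down."""


def complete_with_fallbacks(
    prompt: str,
    primary: str,
    fallbacks: Sequence[str],
    call_model: Callable[[str, str], str],
) -> tuple:
    """Try the primary model, then each fallback in order; return (model_used, reply)."""
    last_error = None
    for model in [primary, *fallbacks]:
        try:
            return model, call_model(model, prompt)
        except ModelUnavailable as error:
            last_error = error  # remember why this model failed, then try the next one
    raise RuntimeError("all configured models failed") from last_error


def fake_call(model: str, prompt: str) -> str:
    """Stand-in client that pretends Sonnet is currently rate-limited."""
    if model == "anthropic/claude-sonnet-4-6":
        raise ModelUnavailable("rate limited")
    return f"[{model}] ok"


used, reply = complete_with_fallbacks(
    "ping",
    primary="anthropic/claude-sonnet-4-6",
    fallbacks=["anthropic/claude-haiku-4-5", "openai/gpt-4o-mini"],
    call_model=fake_call,
)
print(used, reply)
```

Order matters: the first fallback that responds handles the request, so list them in the order you'd actually prefer.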

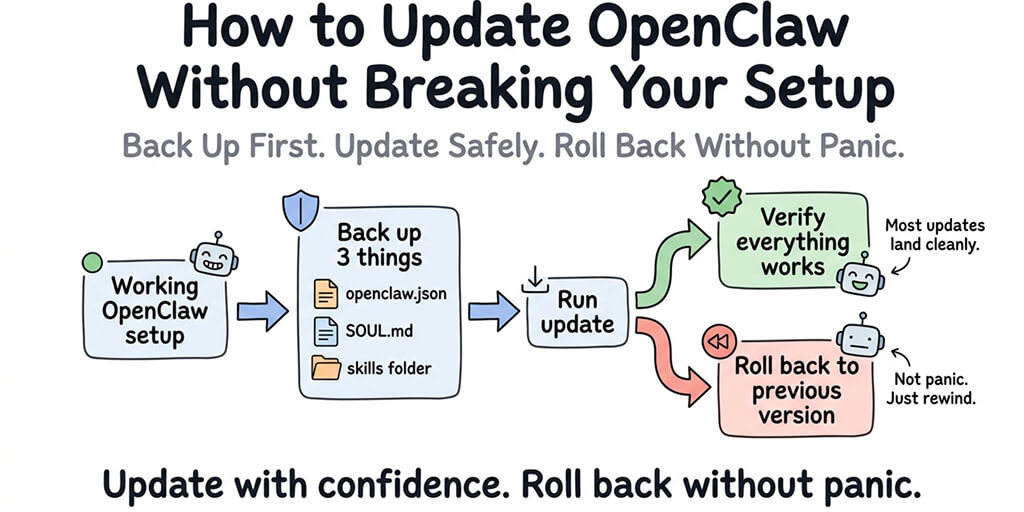

Edit the file, save, restart your gateway. Done.

The OpenRouter shortcut (for people who hate config files)

If editing JSON feels like too much, OpenRouter offers a shortcut that works surprisingly well.

Set your model to openrouter/openrouter/auto and OpenRouter's routing engine will automatically select the most cost-effective model based on the complexity of each prompt. Simple queries go to cheap models. Complex reasoning tasks get routed to capable ones.

It's not as precise as manual routing. You give up some control. But for someone who just wants lower bills without touching config files, it works.

The full setup through OpenRouter is straightforward: get an API key from openrouter.ai, run openclaw onboard and select OpenRouter as your provider, or set it manually with one command.

If you want to see the full model routing configuration in action, including the OpenRouter auto-routing approach and manual fallback setup, this community tutorial walks through the entire process with real usage numbers and cost comparisons.

Watch on YouTube: OpenClaw Multi-Model Setup and Cost Optimization (Community content)

The context window trap (and how it eats your budget)

Here's a cost problem that model routing alone won't fix.

OpenClaw has a known issue where cron jobs accumulate context indefinitely. A task scheduled to "check emails every 5 minutes" gradually builds a context window that grows with every execution. What starts as a 2,000-token prompt eventually balloons to 100,000 tokens.

At Opus pricing ($5 per million input tokens), that escalation turns a task that costs a cent or two into a $0.50 task, since 100,000 input tokens alone cost $0.50. Even at 50 runs a day, that's $25 daily on a single cron job.
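
Here's a rough sketch of how that growth adds up over a day, and what a hard cap does to it. The 2,000-token start, the 50 runs, and the Opus input price are from the text above; the linear growth of 2,000 tokens per run is my assumption (real growth depends on what each run appends), and output tokens are ignored.

```python
OPUS_INPUT_PRICE = 5.00  # USD per million input tokens


def input_cost(context_tokens: int) -> float:
    """USD cost of sending one context of the given size to Opus."""
    return context_tokens * OPUS_INPUT_PRICE / 1_000_000


def day_cost(start_tokens: int, growth_per_run: int, runs: int, cap: int = None) -> float:
    """Total input cost over a day when context grows by a fixed amount each run.

    If cap is given, the billed context never exceeds it.
    """
    total = 0.0
    context = start_tokens
    for _ in range(runs):
        billed = context if cap is None else min(context, cap)
        total += input_cost(billed)
        context += growth_per_run
    return total


print(f"one run at 100k tokens: ${input_cost(100_000):.2f}")
print(f"day, uncapped:          ${day_cost(2_000, 2_000, 50):.2f}")
print(f"day, capped at 4,000:   ${day_cost(2_000, 2_000, 50, cap=4_000):.2f}")
```

Under these assumptions the first uncapped day costs $12.75 in input tokens and ends with a 100,000-token context, so every following day runs at the full $25. With a 4,000-token cap the same day costs $0.99.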

The fix requires setting hard limits in your skill configurations:

    {
      "maxContextTokens": 4000,
      "maxIterations": 10
    }

We documented this memory bug and its workarounds in detail. It's one of those things that no setup guide mentions until you've already been burned.

Also: set spending caps. OpenRouter lets you configure daily limits. If you're using Anthropic directly, monitor your dashboard religiously. A recursive agent loop can drain hundreds of dollars overnight. One community member reported a $37 burn in six hours from a single runaway research task.
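
If you wrap your own scripts or skills around model calls, a tiny in-process guard is one way to approximate a spending cap. This is a hypothetical sketch, not an OpenClaw, OpenRouter, or Anthropic feature; DailyBudget and BudgetExceeded are names I made up, and the $5 limit and $0.50 per-call cost are illustrative.

```python
class BudgetExceeded(Exception):
    """Raised when a call would push spend past the daily limit."""


class DailyBudget:
    """Hypothetical in-process spend tracker; not a provider-side control."""

    def __init__(self, limit_usd: float) -> None:
        self.limit_usd = limit_usd
        self.spent_usd = 0.0

    def charge(self, cost_usd: float) -> None:
        """Record one call's cost, or refuse it if it would exceed the limit."""
        if self.spent_usd + cost_usd > self.limit_usd:
            raise BudgetExceeded(
                f"${self.spent_usd:.2f} spent, ${cost_usd:.2f} more would exceed "
                f"${self.limit_usd:.2f}"
            )
        self.spent_usd += cost_usd


# Simulate a runaway loop where every iteration costs $0.50.
budget = DailyBudget(limit_usd=5.00)
calls_made = 0
try:
    while True:
        budget.charge(0.50)
        calls_made += 1
except BudgetExceeded as stop:
    print(f"paused after {calls_made} calls: {stop}")
```

A guard like this only sees the calls that pass through it, so treat it as a backstop alongside provider-side limits, not a replacement for them.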

If you'd rather not think about any of this and want model routing handled automatically, with built-in spending controls and anomaly detection that pauses your agent before costs spiral, Better Claw handles all of it at $19/month per agent. BYOK, 60-second deploy, and you never have to edit a JSON config file again.

Which model for which task (the practical cheat sheet)

After months of running OpenClaw with various model configurations, here's what actually works:

Use Opus for: Complex multi-step reasoning. Writing that needs to be genuinely good. Code architecture decisions. Anything where you'd want a senior engineer's opinion, not an intern's.

Use Sonnet for: Daily driver tasks. Email drafting. Calendar management. Research summaries. Content generation. 90% of what most people use their agent for. Sonnet 4.6 in 2026 is remarkably capable.

Use Haiku for: Heartbeats. Simple lookups. Quick classifications. Sub-agent tasks that are narrow and well-defined. High-frequency, low-complexity operations.

Use DeepSeek/Gemini Flash for: Extreme cost optimization on tasks where quality tolerance is high. Heartbeats. Status checks. Data parsing.
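
If you script around your agent, the cheat sheet can be written down as a simple lookup. This is only an illustration of the mapping above: the task-type labels are hypothetical names I chose, and OpenClaw does not read a table like this.

```python
OPUS = "anthropic/claude-opus-4-6"
SONNET = "anthropic/claude-sonnet-4-6"
HAIKU = "anthropic/claude-haiku-4-5"

# Hypothetical task labels mapped to the model the cheat sheet suggests.
MODEL_FOR_TASK = {
    "complex_reasoning": OPUS,
    "code_architecture": OPUS,
    "email_drafting": SONNET,
    "calendar_management": SONNET,
    "research_summary": SONNET,
    "content_generation": SONNET,
    "heartbeat": HAIKU,
    "simple_lookup": HAIKU,
    "classification": HAIKU,
    "narrow_subagent": HAIKU,
}

# Unknown task types fall back to the daily driver, not the most expensive model.
DEFAULT_MODEL = SONNET


def pick_model(task_type: str) -> str:
    """Return the suggested model for a task type, defaulting to Sonnet."""
    return MODEL_FOR_TASK.get(task_type, DEFAULT_MODEL)


for task in ("heartbeat", "code_architecture", "something_new"):
    print(f"{task}: {pick_model(task)}")
```

Defaulting to Sonnet is a design choice: a new, unclassified task gets a capable model without silently landing on Opus pricing.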

Smart routing isn't about always using the cheapest model. It's about never using an expensive model where a cheap one would do.

For ideas on high-value tasks worth running through Opus, our guide to the best OpenClaw use cases covers the workflows where premium model access actually pays for itself.

What this means for the future of agent costs

Here's the thing I keep thinking about.

AI model pricing has been falling dramatically. Opus 4.5 at $5/$25 is 67% cheaper than Opus 4.1 was at $15/$75. Sonnet 4.6 delivers quality that would have required Opus just months ago. Haiku keeps getting better.

The trend line is clear: the models get cheaper and smarter. But agent usage keeps going up. More tasks, more automations, more cron jobs, more sub-agents. The savings from better pricing get eaten by expanded workloads.

Smart model routing isn't a one-time optimization. It's a habit. Every time you add a new skill or schedule a new cron job, ask yourself: does this need Opus? Or is Sonnet (or Haiku) enough?

That question, asked consistently, is the difference between a $50/month agent and a $200/month one.

OpenClaw is one of the most exciting projects I've worked with. 230,000+ GitHub stars for good reason. The ability to text your AI assistant on WhatsApp and have it handle real work across your entire digital life is genuinely transformative. But running it affordably requires understanding how OpenClaw works under the hood and making intentional choices, especially around model selection.

If you've been running your agent on a single model and wincing at the bill, try the three-line config change above. You'll see results within 24 hours.

And if you'd rather skip the config file entirely and get automatic model routing, anomaly-based cost controls, and zero-config deployment, give Better Claw a try. It's $19/month per agent, BYOK, and your first agent deploys in about 60 seconds. We handle the infrastructure and the optimization. You focus on building workflows that are actually worth the tokens.

Frequently Asked Questions

What is OpenClaw model routing and why does it matter?

OpenClaw model routing is the practice of assigning different AI models to different task types within your agent. By default, OpenClaw sends every request (heartbeats, sub-agents, simple queries, and complex reasoning) to your primary model. Routing lets you assign cheap models like Haiku ($1/$5 per million tokens) to low-complexity tasks while reserving expensive models like Opus ($5/$25) for tasks that genuinely need them. This typically reduces API costs by 50-80%.

How does Claude Opus compare to Sonnet for OpenClaw agent tasks?

Claude Opus 4.6 ($5/$25 per million tokens) excels at complex multi-step reasoning, code architecture, and nuanced analysis. Claude Sonnet 4.6 ($3/$15) handles 90% of typical agent tasks (email, calendar, research, content generation) at roughly the same quality level. For most OpenClaw users, Sonnet as the primary model with Opus available for manual escalation offers the best cost-to-quality ratio.

How do I set up multi-model routing in OpenClaw?

Edit your ~/.openclaw/openclaw.json file and set separate models for "primary," "heartbeat," and "subagent" fields within the agent.model section. For example, set primary to Sonnet, heartbeat to Haiku, and subagent to Haiku. Save the file and restart your gateway. You can also switch models mid-conversation by typing /model opus or /model haiku in your chat.

How much does OpenClaw cost per month with smart model routing?

API costs with smart routing typically run $40-80/month depending on usage, compared to $150-200/month without routing. The VPS or hosting cost is separate ($5-29/month depending on your approach). Better Claw at $19/month includes zero-config deployment and built-in cost optimization. In all cases, you bring your own API keys, so the model token costs are the same regardless of hosting.

Is it safe to use cheaper models like Haiku for OpenClaw sub-agents?

Yes, for well-scoped tasks. Haiku 4.5 is genuinely capable for narrow, well-defined operations like lookups, status checks, and data parsing. The key is matching model capability to task complexity. Don't use Haiku for tasks requiring complex reasoning or multi-step planning. Set maxIterations and maxContextTokens limits in your skill configs to prevent runaway costs regardless of which model you use.