Everything happening under the hood when you text your AI agent at 2 AM, and why understanding it matters more than you think.

It was a Tuesday night, and I was watching my OpenClaw agent reply to a Slack message, browse a competitor's pricing page, summarize the findings, and drop the results into our team channel.

Nobody asked it to do the last part. It just... did.

That was the moment I stopped thinking of OpenClaw as a chatbot and started thinking of it as infrastructure. But here's the thing: I had no idea how any of it actually worked.

And if you're like most of the 150,000+ developers who've starred OpenClaw on GitHub this year, you probably don't either. You installed it, followed a tutorial, maybe got it talking through Telegram. But what's actually happening between the moment you send "check my calendar" and the moment it replies with your schedule?

That's what this article is about.

Not another setup guide. Not a feature list copy-pasted from the README. We're going to open the hood and look at the engine. Every layer. Every decision. And by the end, you'll understand not just how OpenClaw works, but why it works the way it does, and where things get complicated when you're running it yourself.

The Gateway: OpenClaw's Nervous System

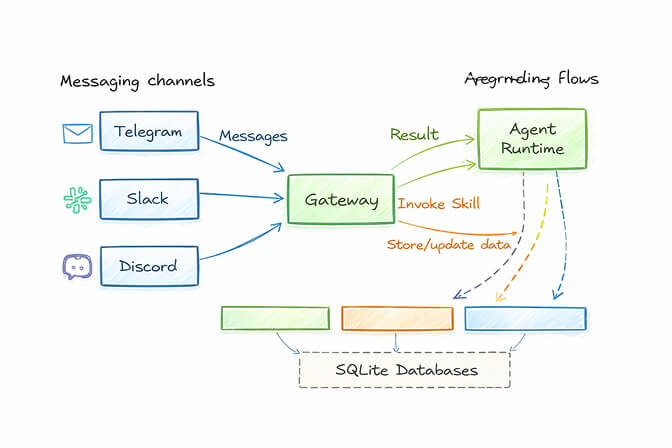

Everything in OpenClaw flows through a single process called the Gateway.

Think of it as air traffic control. Every message from every chat platform, every heartbeat ping, every tool execution, every session state change goes through this one process. It runs as a background daemon on your machine (systemd on Linux, LaunchAgent on macOS) and binds to a local WebSocket at ws://127.0.0.1:18789.

The Gateway handles four critical jobs:

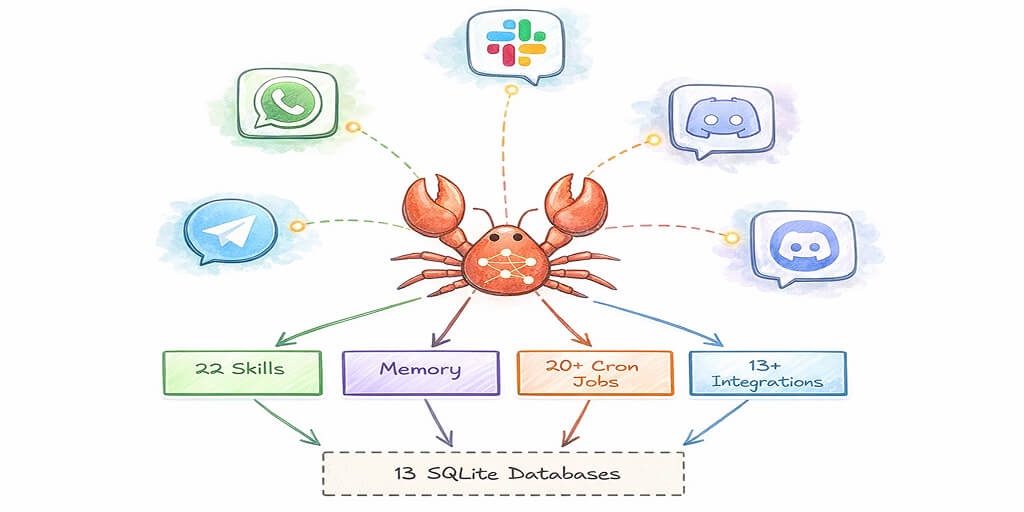

Routing. When a message arrives from WhatsApp, Telegram, Discord, or any of the 15+ supported channels, the Gateway figures out which agent session should handle it. If you're running multi-agent routing, different channels or even different contacts can go to completely isolated agent instances with their own workspaces and models.
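To make the routing idea concrete, here is a minimal sketch of a lookup that prefers a contact-specific rule over a channel-wide one. The `Route` and `Router` names, the rule shape, and the agent IDs are all assumptions for illustration, not OpenClaw's actual config or API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Route:
    channel: str               # e.g. "slack"
    contact: Optional[str]     # None means "any contact on this channel"
    agent_id: str              # hypothetical name of an isolated agent instance


@dataclass
class Router:
    routes: list = field(default_factory=list)
    default_agent: str = "main"

    def resolve(self, channel: str, contact: str) -> str:
        # An exact (channel, contact) rule wins over a channel-wide rule.
        for route in self.routes:
            if route.channel == channel and route.contact == contact:
                return route.agent_id
        for route in self.routes:
            if route.channel == channel and route.contact is None:
                return route.agent_id
        return self.default_agent


router = Router(routes=[
    Route("slack", None, "work"),
    Route("whatsapp", "+15550100", "family"),
])
print(router.resolve("slack", "U123"))
print(router.resolve("whatsapp", "+15550100"))
print(router.resolve("telegram", "alice"))
```

Anything that matches no rule falls through to a default agent, which is roughly what a single-agent setup looks like.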

Session management. Each conversation gets its own session. The Gateway tracks who's talking, what context has been loaded, and what tools are available. It's the reason your agent remembers what you said yesterday.

Authentication. After early security incidents where users left their gateways exposed to the internet with no auth, OpenClaw permanently removed the auth: none option. Now every instance requires token or password authentication. The Gateway enforces this.

Heartbeat orchestration. This is the part that makes OpenClaw feel alive. Every 30 minutes (configurable), the Gateway triggers a heartbeat. The agent reads a checklist from HEARTBEAT.md in your workspace, decides if anything needs attention, and either acts or silently reports back. This is how your agent sends you a morning briefing without being asked.
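A rough sketch of the mechanical half of a heartbeat: checking whether the interval has elapsed and reading open items from a checklist. The `- [ ]` checkbox syntax is my assumption about what a HEARTBEAT.md could look like, and in the real system the model, not a parser, decides what needs attention.

```python
from datetime import datetime, timedelta

HEARTBEAT_INTERVAL = timedelta(minutes=30)  # the default mentioned above


def heartbeat_due(last_run: datetime, now: datetime,
                  interval: timedelta = HEARTBEAT_INTERVAL) -> bool:
    """True once at least one full interval has passed since the last run."""
    return now - last_run >= interval


def open_items(checklist_md: str) -> list:
    """Return the text of unchecked '- [ ]' lines (assumed checklist format)."""
    items = []
    for line in checklist_md.splitlines():
        stripped = line.strip()
        if stripped.startswith("- [ ]"):
            items.append(stripped[len("- [ ]"):].strip())
    return items


sample = """\
- [x] Rotate API keys
- [ ] Send morning briefing
- [ ] Check calendar for conflicts
"""

last = datetime(2025, 1, 1, 8, 0)
now = datetime(2025, 1, 1, 8, 30)
if heartbeat_due(last, now):
    print(open_items(sample) or "nothing needs attention")
```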

Here's the part nobody tells you: the Gateway is a single point of failure. If it crashes, every connected channel goes silent. If it's misconfigured, your agent is either unreachable or, worse, reachable by the wrong people. This is fine when you're tinkering on a weekend project. It's less fine when you're running an agent that handles client communications.
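If you self-host, the cheapest mitigation is a liveness check you can run from cron or a monitoring script. The sketch below only tests whether something accepts TCP connections on the Gateway's local port. It does not speak the Gateway's WebSocket protocol or verify auth, so treat a passing check as "the process is listening", nothing more.

```python
import socket


def gateway_port_open(host: str = "127.0.0.1", port: int = 18789,
                      timeout: float = 1.0) -> bool:
    """True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, timeouts, and unreachable hosts.
        return False


print("gateway reachable" if gateway_port_open() else "gateway not listening")
```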

The Agent Loop: Input, Think, Act, Repeat

Once the Gateway routes a message to the right session, the Agent Runtime takes over. This is where the intelligence lives.

OpenClaw uses a pattern called the ReAct loop (Reason + Act). If you've worked with any modern agent framework, you've seen this before. But OpenClaw's implementation has some specific wrinkles worth understanding.

Here's the flow:

- Context assembly. Before the LLM sees your message, OpenClaw assembles a large context. It packs in your system instructions (the "Soul" file), conversation history, relevant memories, tool schemas, active skills, and any workspace-specific rules. This is why most serious OpenClaw deployments use a frontier model like Claude or GPT-4: the context load is substantial.

- LLM inference. The assembled context goes to whichever model you've configured. OpenClaw is fully model-agnostic. You set providers in openclaw.json, and the Gateway routes requests with auth rotation and fallback chains that use exponential backoff.

- Tool execution. If the model decides it needs to take action (browse a webpage, run a shell command, check your calendar), it outputs a tool call. The runtime executes it and feeds the result back.

- Loop or reply. The model looks at the tool result and decides: do I need more information, or am I ready to respond? This loop can run multiple cycles for complex tasks.
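The four steps above can be sketched as a small loop. This is a toy under stated assumptions: `fake_model` stands in for the real LLM call, the `TOOLS` registry and message dictionaries are hypothetical shapes, and the step limit is my own addition so the loop always terminates.

```python
from typing import Callable

# Hypothetical tool registry: tool name -> function from string to string.
TOOLS: dict[str, Callable[[str], str]] = {
    "calendar": lambda query: "10:00 standup, 14:00 design review",
}


def fake_model(context: list) -> dict:
    """Stand-in for the LLM: requests the calendar tool once, then replies."""
    tool_results = [m for m in context if m["role"] == "tool"]
    if not tool_results:
        return {"type": "tool_call", "name": "calendar", "input": "today"}
    return {"type": "reply", "text": f"Your schedule: {tool_results[-1]['content']}"}


def run_agent(user_message: str, model=fake_model, max_steps: int = 5) -> str:
    # 1. Context assembly (history, memories, and tool schemas would go here too).
    context = [
        {"role": "system", "content": "You are a helpful agent."},
        {"role": "user", "content": user_message},
    ]
    for _ in range(max_steps):
        decision = model(context)                  # 2. LLM inference
        if decision["type"] == "reply":            # 4. ready to respond
            return decision["text"]
        result = TOOLS[decision["name"]](decision["input"])   # 3. tool execution
        context.append({"role": "tool", "content": result})   # feed the result back
    return "Stopped: step limit reached."


print(run_agent("check my calendar"))
```

The model never touches the calendar directly. It only emits a request, and the runtime decides whether and how to execute it, which is exactly where permissions and sandboxing hook in.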

The key insight: OpenClaw isn't a chatbot that sometimes uses tools. It's an orchestration engine that happens to communicate through chat. The messaging interface is just the surface. Underneath, it's running the same agent patterns you'd find in any enterprise automation framework.
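One detail from the inference step deserves its own sketch: fallback chains with exponential backoff. The version below is a generic pattern, not OpenClaw's code. The `ProviderError` class, the provider tuples, and the retry counts are all assumptions, and the `sleep` parameter is injectable so the example runs instantly.

```python
import time


class ProviderError(Exception):
    """Hypothetical error raised when a provider call fails."""


def call_with_fallback(providers, prompt, retries=3, base_delay=0.5, sleep=time.sleep):
    """Try providers in order; retry each one with doubling delays before moving on."""
    last_error = None
    for name, call in providers:
        for attempt in range(retries):
            try:
                return name, call(prompt)
            except ProviderError as err:
                last_error = err
                if attempt < retries - 1:
                    sleep(base_delay * 2 ** attempt)   # 0.5s, then 1s, then 2s, ...
    raise RuntimeError("all providers failed") from last_error


def flaky(prompt):
    raise ProviderError("rate limited")


def stable(prompt):
    return f"echo: {prompt}"


delays = []  # record the waits instead of actually sleeping
print(call_with_fallback([("primary", flaky), ("backup", stable)], "hi", sleep=delays.append))
print(delays)
```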

But here's where it gets messy.

Skills, Memory, and the "It's All Just Files" Philosophy

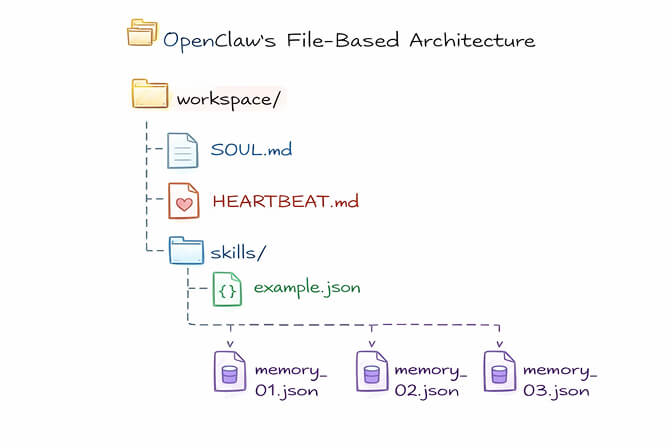

One of the boldest design decisions in OpenClaw is that everything is a file on disk.

Your agent's personality? A Markdown file called SOUL.md. Its memory? Markdown files in your workspace. Skills? YAML and Markdown files in a skills folder. Heartbeat rules? HEARTBEAT.md. Tool permissions? Configuration files you can open in any text editor.

This is both OpenClaw's greatest strength and its biggest operational headache.

The strength: You can version control your entire agent with Git. You can inspect every decision, every memory, every skill in a text editor. There's nothing hidden. For developers who value transparency, this is deeply satisfying.

The headache: You're now responsible for managing a growing pile of files across potentially multiple agent workspaces. Memory files need pruning. Skills need updating. Configuration drift between what's in your files and what the agent is actually doing becomes a real problem over time.
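Because everything is a file, drift is at least detectable. Here is a minimal sketch that fingerprints a workspace and reports which files changed between two snapshots. Git gives you the same answer if the workspace is a repository. The file extensions and the temporary workspace layout are assumptions for the demo.

```python
import hashlib
import tempfile
from pathlib import Path

TRACKED_SUFFIXES = {".md", ".yaml", ".yml", ".json"}  # assumed file types


def fingerprint(workspace: Path) -> dict:
    """Map each tracked file (path relative to the workspace) to its SHA-256 digest."""
    digests = {}
    for path in sorted(workspace.rglob("*")):
        if path.is_file() and path.suffix in TRACKED_SUFFIXES:
            digests[str(path.relative_to(workspace))] = hashlib.sha256(path.read_bytes()).hexdigest()
    return digests


def drift(before: dict, after: dict) -> list:
    """Names of files that were added, removed, or modified between snapshots."""
    return sorted(name for name in before.keys() | after.keys()
                  if before.get(name) != after.get(name))


with tempfile.TemporaryDirectory() as tmp:
    ws = Path(tmp)
    (ws / "SOUL.md").write_text("Be concise.\n")
    before = fingerprint(ws)
    (ws / "SOUL.md").write_text("Be concise and friendly.\n")    # modified
    (ws / "HEARTBEAT.md").write_text("- [ ] Morning briefing\n")  # added
    changed = drift(before, fingerprint(ws))

print(changed)
```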

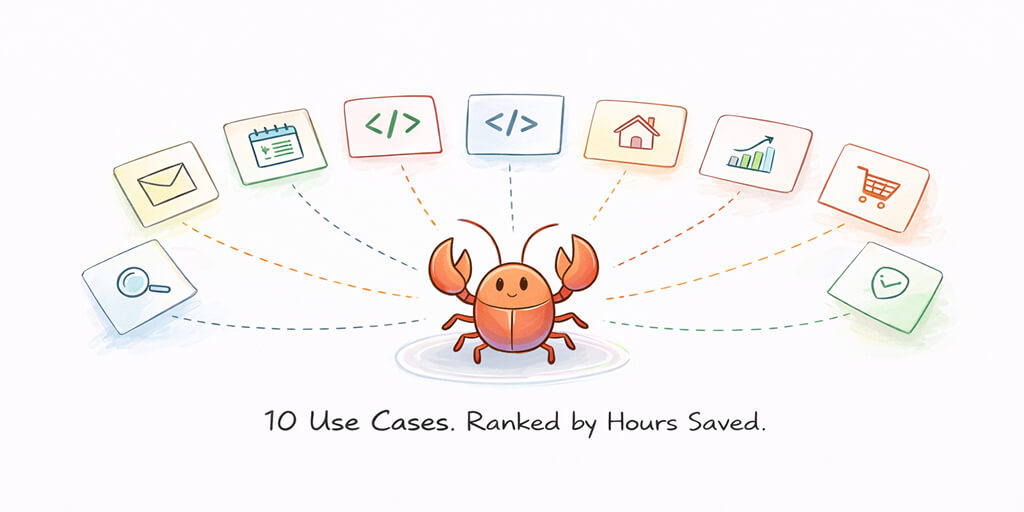

The skills system is particularly interesting. Skills are modular capability packages that expand what your agent can do. Browse the web. Control your email. Manage GitHub repos. There are over 1,700 skills on ClawHub, the community registry. Installing one is a terminal command away.

But stay with me here: the skill registry has had security problems. Cisco's AI security research team found a third-party skill that performed data exfiltration and prompt injection without user awareness. The registry lacks the kind of vetting you'd expect from, say, npm or the VS Code marketplace. You're essentially giving code execution privileges to community-contributed packages with minimal review.

The memory system works through local Markdown files that get compacted when context runs low. It's persistent and inspectable, but it's also fragile. Developers have reported agents "forgetting" important context, which led community members like Nat Eliason to build elaborate three-layer memory systems just to make retention reliable.
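To see why compaction can feel like forgetting, consider this deliberately naive sketch. It keeps the newest entries that fit a budget and folds everything older into a single summary line. The word-count budget and the placeholder summary are assumptions of mine. OpenClaw's actual compaction logic isn't described here, and a real system would summarize with a model call.

```python
def compact(entries: list, budget_words: int, summarize=None) -> list:
    """Keep the newest entries within the budget; collapse older ones into one line."""
    summarize = summarize or (lambda old: f"[summary of {len(old)} older entries]")
    kept, used = [], 0
    for entry in reversed(entries):            # walk from newest to oldest
        words = len(entry.split())
        if used + words > budget_words:
            break
        kept.append(entry)
        used += words
    kept.reverse()                             # restore chronological order
    older = entries[: len(entries) - len(kept)]
    return ([summarize(older)] if older else []) + kept


memory = [
    "User prefers morning briefings at 7am",
    "Competitor pricing page checked on Tuesday",
    "Team channel is #growth",
]
print(compact(memory, budget_words=10))
```

With a budget of ten words, the oldest entry (the briefing preference) is the one that gets collapsed. If the summary drops that detail, the agent has effectively forgotten it.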

Multi-Channel: One Agent, Every Platform

This is the feature that made OpenClaw go viral.

Your single agent instance can simultaneously connect to WhatsApp, Telegram, Slack, Discord, Signal, iMessage (via BlueBubbles), Microsoft Teams, Google Chat, Matrix, and WebChat. Each platform gets its own adapter that normalizes messages into a common format before handing them to the Gateway.

The practical implication: you text your agent from WhatsApp while commuting, switch to Slack at your desk, and the agent maintains context across both. Same session. Same memory. Different interfaces.

Each adapter handles platform-specific quirks. WhatsApp uses QR code pairing through the Baileys library. Discord and Slack use bot tokens. iMessage requires a separate BlueBubbles server. The adapters abstract all of this away, so the agent runtime doesn't need to know which platform it's talking to.
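The adapter idea is easy to sketch. Each platform gets a small function that turns its payload into one shared shape. The `Message` type and function names are hypothetical, and the payloads are trimmed-down versions of a Telegram Bot API update and a Slack message event, not OpenClaw's internal format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Message:
    """Hypothetical common format handed to the Gateway."""
    channel: str
    sender: str
    text: str


def from_telegram(update: dict) -> Message:
    msg = update["message"]
    return Message("telegram", str(msg["from"]["id"]), msg["text"])


def from_slack(event: dict) -> Message:
    return Message("slack", event["user"], event["text"])


ADAPTERS = {"telegram": from_telegram, "slack": from_slack}


def normalize(channel: str, payload: dict) -> Message:
    """Dispatch to the adapter for this channel; unknown channels raise KeyError."""
    return ADAPTERS[channel](payload)


print(normalize("telegram", {"message": {"from": {"id": 42}, "text": "check my calendar"}}))
print(normalize("slack", {"type": "message", "user": "U123", "text": "check my calendar"}))
```

Everything downstream of `normalize` sees the same three fields, which is why the runtime never needs to care where a message came from.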

The real-world problem: Setting up even three channels means configuring three separate authentication flows, managing three sets of credentials, and debugging three different failure modes. I've seen developers spend an entire weekend just getting WhatsApp + Telegram + Slack working together reliably.

And that's on a good day.

The Security Question Nobody Can Ignore

Let's be direct about this. OpenClaw asks for a level of system access that would make any security engineer nervous.

It can read and write files. Run shell commands. Control your browser. Access your email, calendar, and messaging accounts. And if you're running it with root-level execution privileges (which many tutorials don't warn against), a compromised agent has full control of your machine.

Prompt injection is the biggest threat. Because OpenClaw processes messages from external sources (group chats, forwarded content, even scraped web pages), a malicious prompt embedded in that data can hijack the agent's behavior. CrowdStrike published a detailed analysis of this attack surface, noting that successful injections can leak sensitive data or hijack the agent's tools entirely.

OpenClaw's maintainers have been responsive. They removed the dangerous auth: none option, added DM pairing codes for unknown senders, and shipped the openclaw doctor command to audit your security configuration. But as one of OpenClaw's own maintainers warned in Discord: "If you can't understand how to run a command line, this is far too dangerous of a project for you to use safely."
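The pairing-code idea is worth seeing in miniature. In the sketch below, a message from an unknown sender is held back and a one-time code is issued. Only after the owner approves that code do the sender's messages get delivered. The `PairingGate` class, the six-digit format, and the return strings are assumptions of mine, not OpenClaw's actual flow.

```python
import secrets


class PairingGate:
    """Hypothetical gate that blocks unknown senders until the owner approves them."""

    def __init__(self):
        self.approved = set()
        self.pending = {}          # pairing code -> sender awaiting approval

    def on_message(self, sender: str) -> str:
        if sender in self.approved:
            return "deliver"
        code = f"{secrets.randbelow(10**6):06d}"   # random six-digit code
        self.pending[code] = sender
        return f"pairing required: {code}"

    def approve(self, code: str) -> bool:
        """Called by the owner; returns False for unknown or already-used codes."""
        sender = self.pending.pop(code, None)
        if sender is None:
            return False
        self.approved.add(sender)
        return True


gate = PairingGate()
first = gate.on_message("stranger")
code = first.split(": ")[1]
print(gate.approve("not-a-code"))    # wrong code is rejected
print(gate.approve(code))            # owner approves the real code
print(gate.on_message("stranger"))   # the sender is now allowed through
```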

That's an honest assessment. And it's why the question of where and how you deploy OpenClaw matters as much as understanding how the framework works.

Watch: OpenClaw Full Tutorial for Beginners (freeCodeCamp)

If you're a visual learner, this 55-minute course walks through everything from installation and the Gateway concept to Docker-based sandboxing and skill management. It's the most thorough free video walkthrough available, and a perfect companion to the architecture breakdown we've covered here.

Watch on YouTube: OpenClaw Full Tutorial for Beginners (Community content from freeCodeCamp.org)

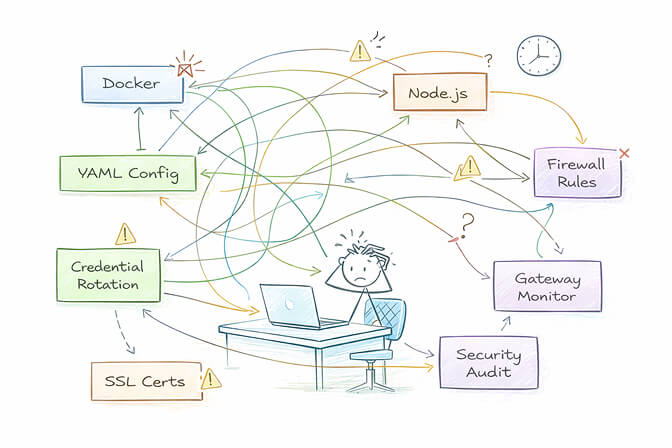

Where Self-Hosting Starts to Hurt

Understanding how OpenClaw works is one thing. Running it reliably is another.

Here's what the architecture overview doesn't tell you: you need a machine running 24/7. You need to manage Node.js versions, update OpenClaw without breaking your config, handle credential rotation for every connected platform, monitor the Gateway process, and have a plan for when things break at 3 AM. And if you want proper security, you need Docker sandboxing, firewall rules, non-root execution, and regular audits.

For developers who enjoy infrastructure, this is a fun weekend. For everyone else, it's a full-time ops job.

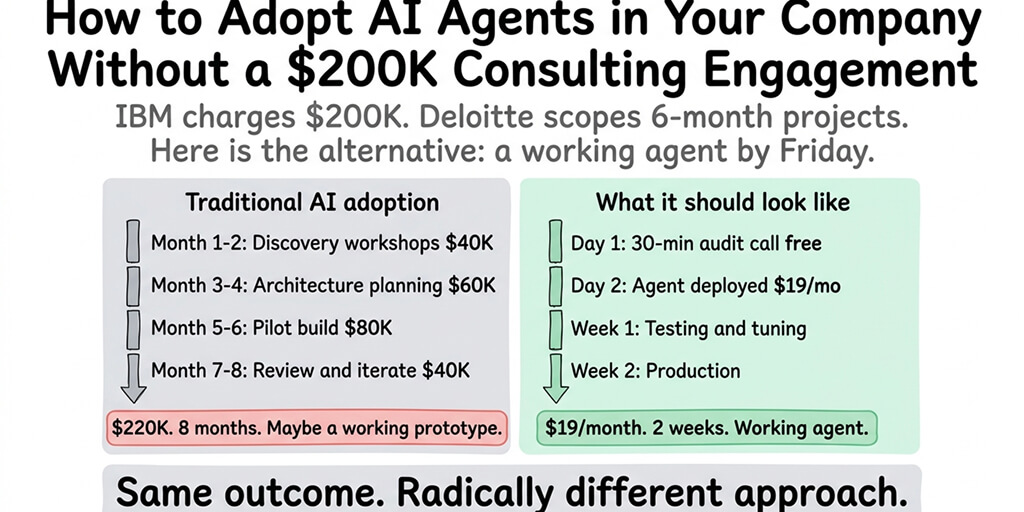

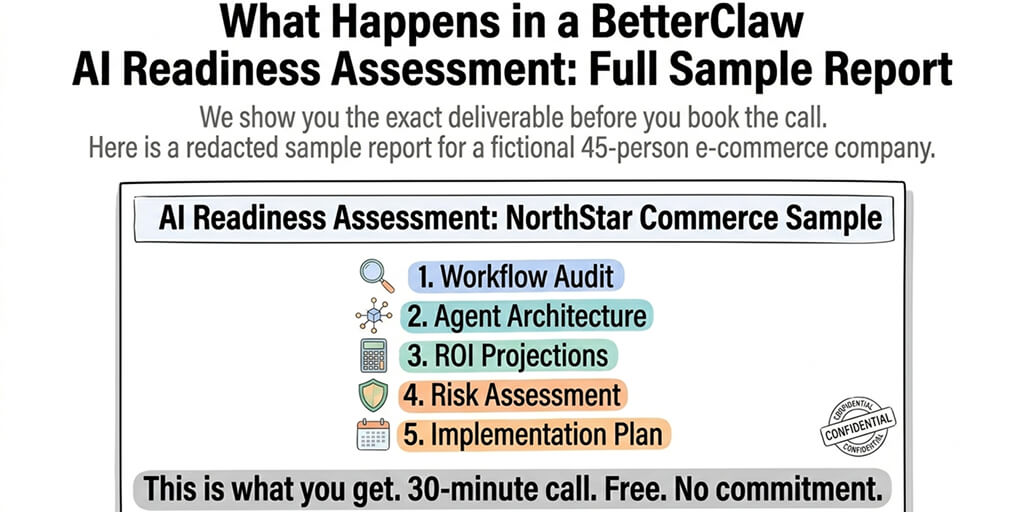

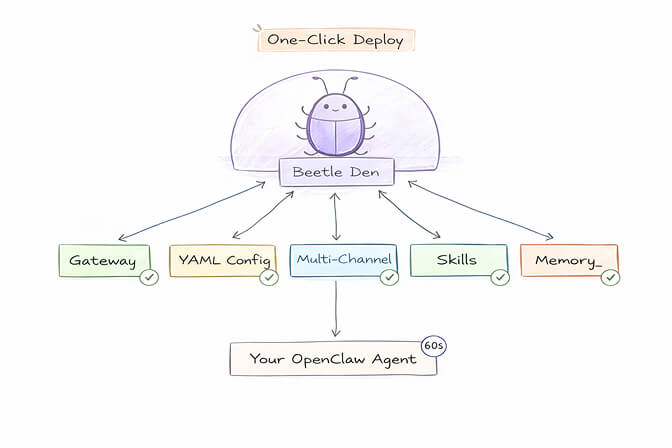

This Is Exactly the Problem We Built BetterClaw to Solve

BetterClaw is a managed deployment platform for OpenClaw. You get everything we've discussed in this article (the Gateway, the agent loop, the multi-channel connections, the skills, the memory) without any of the infrastructure work. One-click deploy. Docker-sandboxed execution with AES-256 encryption. Persistent memory with hybrid vector and keyword search. Real-time health monitoring that auto-pauses your agent if something goes wrong.

Sixty seconds from sign-up to a running agent. $19/month. Bring your own API keys.

The Big Picture: Why Architecture Matters

If you've made it this far, you understand something that most OpenClaw users don't: this isn't just a chatbot. It's a distributed system with a control plane, an execution runtime, persistent state, multi-channel I/O, and a modular capability layer.

That architecture is powerful. It's also the reason OpenClaw is one of the most interesting open-source projects in years. Peter Steinberger built something that makes the patterns behind every serious AI agent framework tangible and inspectable. Understanding OpenClaw's architecture means understanding how AI agents will work everywhere.

But understanding the architecture also means understanding the operational cost. Every layer we discussed (the Gateway, the agent loop, the file-based memory, the multi-channel adapters, the security surface) is something you either manage yourself or let someone else manage for you.

The best developers I know are the ones who understand the engine and know when it makes sense to let someone else change the oil.

If you want to tinker and learn, self-host OpenClaw. The codebase is beautiful and the community is incredible. If you want a production-grade OpenClaw agent running reliably across your team's chat channels without the infrastructure overhead, give BetterClaw a try. Deploy in 60 seconds. We handle the rest.

Either way, now you know what's happening under the hood. And that makes all the difference.

Frequently Asked Questions

What is OpenClaw and how does the OpenClaw framework work?

OpenClaw is an open-source AI agent framework created by Peter Steinberger that runs on your own machine and connects to messaging apps like WhatsApp, Telegram, Slack, and Discord. It works by routing messages through a central Gateway process to an LLM-powered agent runtime that can reason, use tools, and take real-world actions. Unlike traditional chatbots, OpenClaw operates as a persistent daemon with memory, scheduled heartbeats, and modular skills.

How does OpenClaw compare to ChatGPT or Claude's web interface?

ChatGPT and Claude's web interface are cloud-hosted chatbots that respond to prompts in a browser. OpenClaw is a self-hosted agent framework that can take autonomous actions: running shell commands, controlling browsers, managing files, and executing scheduled tasks. The biggest difference is that OpenClaw connects to your existing messaging apps and persists between sessions, while web-based AI tools require you to open a new tab, where that context is lost.

How do I deploy OpenClaw without managing Docker and infrastructure?

The fastest way is to use a managed platform like BetterClaw, which handles all infrastructure, Docker sandboxing, security configuration, and multi-channel setup for you. You get a running OpenClaw agent in under 60 seconds with no YAML files, no terminal commands, and no server management. It costs $19/month per agent with BYOK (bring your own API keys).

Is OpenClaw safe and secure enough for business use?

OpenClaw requires careful security configuration for business use. The framework asks for broad system permissions, and prompt injection is a known vulnerability. Best practices include running OpenClaw in a Docker sandbox, using token-based authentication, scoping file access, running the openclaw doctor security audit, and never using it with root privileges. Managed platforms like BetterClaw include enterprise-grade security (sandboxed execution, AES-256 encryption, workspace scoping) by default.

How much does it cost to run an OpenClaw AI agent?

Self-hosting OpenClaw is free (it's open source under the MIT license), but you'll pay for LLM API usage, which varies by model and usage. A moderate-use agent running Claude or GPT-4 typically costs $30-100/month in API fees alone, plus the cost of a VPS or dedicated machine ($5-50/month). Managed options like BetterClaw add $19/month per agent but remove the infrastructure cost and the time spent managing it.
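Adding up the ranges quoted above gives a quick comparison. This assumes the managed fee replaces the VPS line rather than stacking on top of it, and it leaves out the value of your own time.

```python
def monthly_range(*parts):
    """Sum (low, high) dollar ranges into one (low, high) total."""
    return sum(p[0] for p in parts), sum(p[1] for p in parts)


API_FEES = (30, 100)   # LLM usage for a moderate-use agent
VPS = (5, 50)          # self-hosted machine
MANAGED = (19, 19)     # flat per-agent fee

self_hosted = monthly_range(API_FEES, VPS)
managed = monthly_range(API_FEES, MANAGED)
print(f"self-hosted: ${self_hosted[0]}-{self_hosted[1]}/month")
print(f"managed:     ${managed[0]}-{managed[1]}/month")
```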