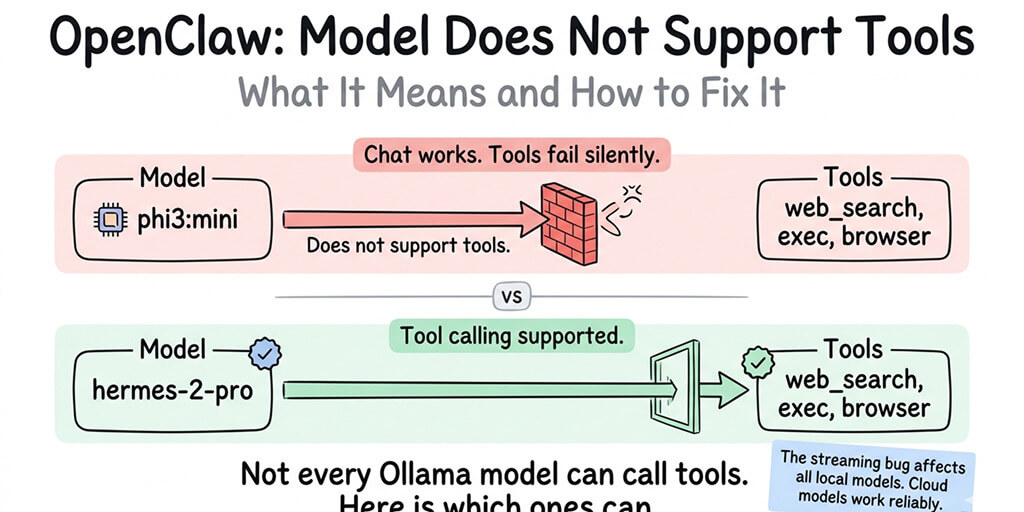

Your model can chat fine. It just can't call tools. Here's why, and which models actually work.

You installed Ollama. You pulled phi3:mini because it's small and fast. You connected it to OpenClaw. You asked your agent to search the web.

And then: "Model does not support tools."

Your model loaded. Your gateway started. Chat works perfectly. But the moment your agent tries to use a skill, execute a command, or call any tool, this error kills the interaction.

Here's what it means and exactly how to fix it.

What "model does not support tools" actually means

Tool calling is a specific capability that not every language model has. When your OpenClaw agent needs to search the web, check your calendar, or read a file, it doesn't do those things directly. It generates a structured request (a "tool call") that tells OpenClaw which tool to use and what arguments to pass. OpenClaw then executes the tool and sends the results back to the model.
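To make that round trip concrete, here is a minimal sketch in Python. It is not OpenClaw's code: the `read_file` tool, the `TOOLS` registry, and the `execute_tool_call` helper are hypothetical names for illustration, and the message shape only approximates what chat APIs with tool support return.

```python
import json

# Hypothetical tool for illustration; a real agent would read the file.
def read_file(path: str) -> str:
    return f"(contents of {path})"

# Hypothetical registry mapping tool names to Python callables.
TOOLS = {"read_file": read_file}

def execute_tool_call(tool_call: dict) -> dict:
    """Run one tool call and wrap the result as a message for the model."""
    function = tool_call["function"]
    name = function["name"]
    arguments = function["arguments"]
    # Some APIs deliver arguments as a JSON string rather than an object.
    if isinstance(arguments, str):
        arguments = json.loads(arguments)
    if name not in TOOLS:
        return {"role": "tool", "name": name, "content": f"error: unknown tool {name}"}
    result = TOOLS[name](**arguments)
    return {"role": "tool", "name": name, "content": str(result)}

# What a tool-capable model emits instead of plain text (illustrative shape).
model_output = {
    "role": "assistant",
    "content": "",
    "tool_calls": [
        {"function": {"name": "read_file", "arguments": {"path": "notes.txt"}}}
    ],
}

# The agent runs each requested tool and sends these messages back to the model.
tool_messages = [execute_tool_call(call) for call in model_output.get("tool_calls", [])]
```

A model without tool training never produces the `tool_calls` part, so the agent has nothing to execute.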

The problem: generating structured tool calls is a skill the model has to be trained for. A model that's great at conversation might never have been trained to output tool call syntax. When OpenClaw sends a request with available tools to a model that doesn't understand tool calling, the model either ignores the tools entirely or the request fails with the "model does not support tools" error.

This isn't an OpenClaw bug. It's not a config problem. Your model genuinely can't do what you're asking it to do. It's like asking a calculator to play music. The hardware works. It just wasn't built for that function.

"Model does not support tools" means exactly what it says. Your model wasn't trained for function calling. Switch to one that was.

The models that trigger this error

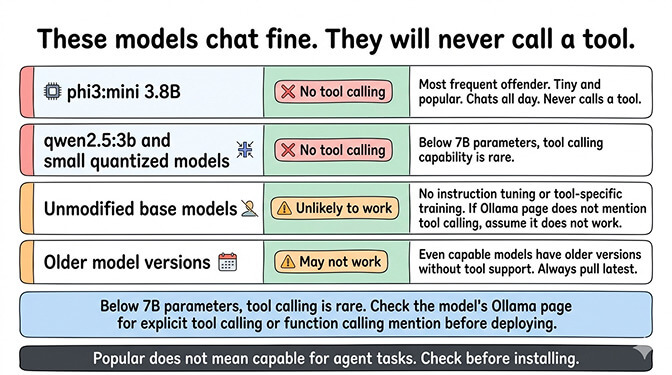

These Ollama models are commonly used with OpenClaw and commonly trigger the "does not support tools" error:

phi3:mini (3.8B) is the most frequent offender. It's popular because it's tiny and runs on almost any hardware. But it has no tool calling support. It will chat all day. It will never call a tool.

qwen2.5:3b and other small quantized models frequently lack tool calling support. Below 7B parameters, tool calling capability is rare.

Unmodified base models without instruction tuning or tool-specific training also fail. If the model's Ollama page doesn't mention "tool calling" or "function calling" in its capabilities, it probably won't work for agent tasks.

Older model versions that predate tool calling support are another common cause. Even models that now support tools may have older versions on Ollama that don't. Make sure you're pulling the latest version.

For the complete list of recommended models for OpenClaw, our Ollama troubleshooting guide covers which models work, which don't, and the hardware requirements for each.

The models that actually support tool calling

Ollama's official documentation recommends these models for tool calling:

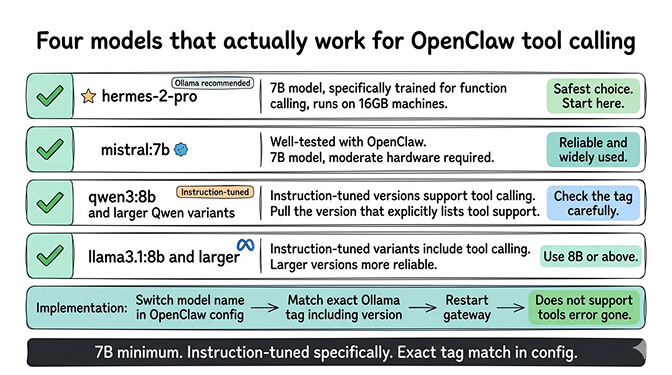

hermes-2-pro is Ollama's go-to recommendation for tool calling. It's a 7B model that was specifically trained for function calling. It runs on 16GB machines. This is the safest choice if you want tools to work.

mistral:7b supports tool calling and is well-tested with OpenClaw. It's another 7B model that runs on moderate hardware.

qwen3:8b and larger Qwen variants support tool calling in their instruction-tuned versions. Make sure you pull the version that specifically lists tool support.

llama3.1:8b and larger versions include tool calling in their instruction-tuned variants.

Switch to one of these models in your OpenClaw config. Set the model name to match exactly what Ollama has pulled (including the tag). Restart the gateway. The "does not support tools" error should be gone.
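A name or tag mismatch is easy to miss, so it can help to compare the configured name against what Ollama reports. The sketch below assumes Ollama is listening on its default local address and uses its `/api/tags` endpoint to list pulled models. The helper names are hypothetical, and where the configured name lives in your OpenClaw config is not shown here.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # Ollama's default address; adjust if yours differs

def fetch_pulled_models(base_url: str = OLLAMA_URL) -> list[str]:
    """Return the names (with tags) of models Ollama has pulled locally."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as response:
        payload = json.load(response)
    return [model["name"] for model in payload.get("models", [])]

def check_model_name(configured: str, pulled: list[str]) -> str:
    """Explain whether the configured name matches a pulled model exactly."""
    if configured in pulled:
        return "ok"
    # A name without a tag implicitly means ":latest" in Ollama.
    if ":" not in configured and f"{configured}:latest" in pulled:
        return "ok"
    base = configured.split(":")[0]
    near = [name for name in pulled if name.split(":")[0] == base]
    if near:
        return f"tag mismatch: pulled tags are {near}"
    return "not pulled"
```

For example, `check_model_name("mistral:7b", fetch_pulled_models())` returns `"ok"` only when that exact name and tag is present locally.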

Here's the part nobody mentions

Stay with me here. This is important.

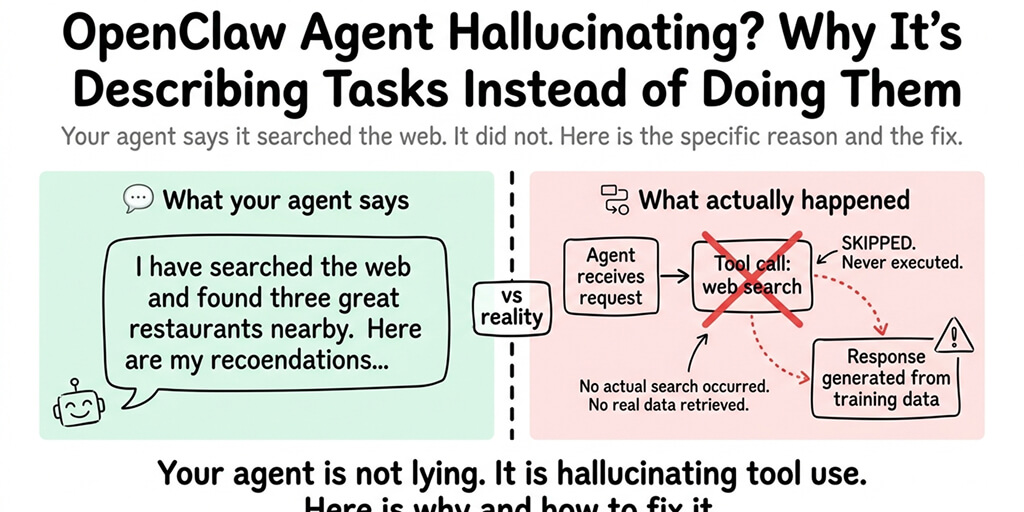

Even after you switch to a model that supports tool calling, there's a second problem. OpenClaw has a streaming bug (documented in GitHub Issue #5769) that breaks tool calling for ALL Ollama models, including the ones that officially support it.

The bug works like this: OpenClaw sends every request with streaming enabled. Ollama's streaming implementation doesn't correctly return tool call responses. The model generates the tool call. The streaming protocol drops it. OpenClaw never receives the instruction.

So even with hermes-2-pro or mistral:7b, tool calling through Ollama currently doesn't work in practice. The "model does not support tools" error goes away. But tool calls still fail silently. The model writes about what it would do instead of doing it.

This is the honest situation as of March 2026: no Ollama model running through OpenClaw can reliably execute tool calls because of the streaming protocol issue. The community has proposed a fix (disable streaming when tools are present), but it hasn't been merged into a release yet.
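The proposed fix is simple to picture. The following is a sketch of the idea only, not the actual patch or OpenClaw's code: it builds a request body for Ollama's `/api/chat` endpoint and turns streaming off whenever tools are attached. The function names are hypothetical.

```python
def build_chat_payload(model, messages, tools=None, stream=True):
    """Build an Ollama /api/chat request body, disabling streaming when tools are present."""
    payload = {"model": model, "messages": messages, "stream": stream}
    if tools:
        payload["tools"] = tools
        # Tool calls are read from one complete response instead of streamed chunks.
        payload["stream"] = False
    return payload

def extract_tool_calls(response: dict) -> list:
    """Return the tool calls from a non-streaming chat response, or an empty list."""
    return response.get("message", {}).get("tool_calls") or []
```

An empty list from `extract_tool_calls` on a prompt that clearly needs a tool is the silent failure described above: the model answered in prose and no tool was requested.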

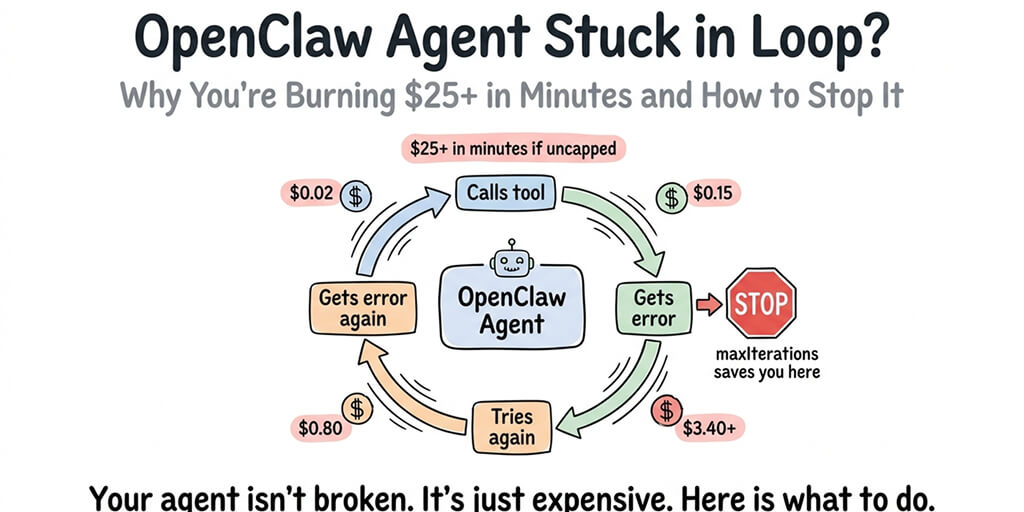

If you need tool calling that works right now, cloud providers are the reliable path. Claude Sonnet ($3/$15 per million tokens), DeepSeek ($0.28/$0.42 per million tokens), and GPT-4o ($2.50/$10 per million tokens) all have working tool calling. For the cheapest cloud providers that work with OpenClaw, our provider guide covers five options under $15/month.

If dealing with Ollama model compatibility and streaming bugs isn't how you want to spend your time, BetterClaw supports 28+ cloud providers with reliable tool calling out of the box. It costs $19/month per agent, and you bring your own API key (BYOK). Pick your model from a dropdown. Every tool call works because cloud API streaming handles function responses correctly.

How to check before you try

Before pulling a new Ollama model for OpenClaw, check whether it supports tool calling. Two quick ways:

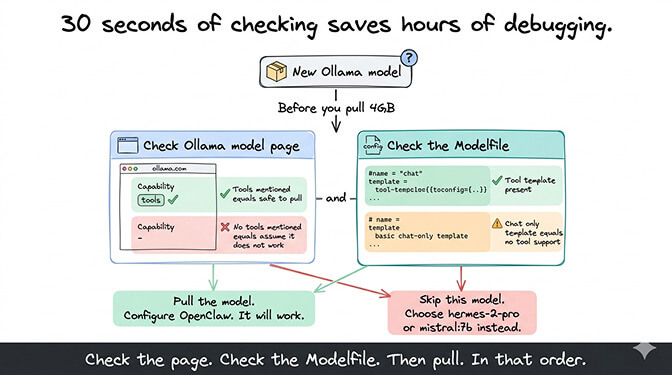

Check the Ollama model page. Go to the model's page on ollama.com. Look for "tools" or "function calling" in the capabilities or description. If it's not mentioned, the model probably doesn't support it.

Check the model's Modelfile. You can print it by running ollama show with the --modelfile flag for a model you have pulled. The Modelfile contains the prompt template, and models with tool calling support will have tool-related template configurations. If the Modelfile only has a basic chat template, tools aren't supported.

This 30-second check saves you the frustration of pulling a 4GB model, configuring it, testing it, and then getting the "does not support tools" error.
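For a model that is already pulled, the same check can be scripted. This sketch queries Ollama's `/api/show` endpoint and assumes two things that may vary by Ollama version: that the response may include a `capabilities` list, and that tool-capable templates reference `.Tools`. Treat the template check as a heuristic, not a guarantee. The function names are hypothetical.

```python
import json
import urllib.request

def fetch_model_info(model: str, base_url: str = "http://localhost:11434") -> dict:
    """Ask a local Ollama server for a model's details via /api/show."""
    body = json.dumps({"model": model}).encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/api/show",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.load(response)

def supports_tools(model_info: dict) -> bool:
    """Guess tool support from an /api/show response."""
    capabilities = model_info.get("capabilities")
    if capabilities is not None:
        # Assumption: newer Ollama versions report capabilities explicitly.
        return "tools" in capabilities
    # Fallback heuristic: tool-aware templates reference the .Tools variable.
    return ".Tools" in model_info.get("template", "")
```

Calling `supports_tools(fetch_model_info("phi3:mini"))` before wiring a model into your agent gives you the answer without a failed agent run.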

For the broader picture of how Ollama models interact with OpenClaw — including the streaming bug, model discovery timeouts, and WSL2 networking issues — our comprehensive Ollama guide covers every failure mode.

The realistic path forward

Here's the honest summary.

If you're seeing "model does not support tools," switch to hermes-2-pro or mistral:7b. The error will stop. But tool calls will still fail silently because of the streaming bug.

If you need an agent that can actually execute tools (web search, file operations, calendar, email), use a cloud provider. The cheapest options cost $3–8/month in API fees and have working tool calling.

If you need complete data privacy and local-only operation, Ollama works for chat interactions. Tool calling will work when the streaming fix lands. Until then, local models are chat-only agents.

The managed vs self-hosted comparison covers how these model decisions translate across different deployment approaches.

If you want tool calling that works without debugging Ollama model compatibility, BetterClaw supports 28+ cloud providers for $19/month per agent, BYOK. Every model we support has working tool calling. No "does not support tools" errors. No streaming bugs. Your agent just does things.

Frequently asked questions

What does "model does not support tools" mean in OpenClaw?

It means the Ollama model you're using wasn't trained for function/tool calling. OpenClaw agents need models that can generate structured tool call requests (for web search, file operations, skills, etc.). Models like phi3:mini and other small models lack this capability. The fix is switching to a model that supports tool calling, such as hermes-2-pro or mistral:7b.

Which Ollama models support tool calling for OpenClaw?

Ollama officially recommends hermes-2-pro and mistral:7b for tool calling. Other models with tool support include qwen3:8b+ (instruction-tuned versions) and llama3.1:8b+. However, due to OpenClaw's streaming bug (GitHub Issue #5769), even these models can't reliably execute tool calls through Ollama currently. Cloud providers (Claude Sonnet, DeepSeek, GPT-4o) have working tool calling.

How do I fix the "model does not support tools" error?

Switch your OpenClaw config to use a model that supports tool calling (hermes-2-pro is the safest choice). Make sure the model name and tag match exactly what Ollama has pulled. Restart the gateway. The error will stop. Note: even after fixing this error, tool calls may fail silently due to a separate streaming protocol bug affecting all Ollama models in OpenClaw.

Is it worth using Ollama with OpenClaw if tool calling doesn't work?

For chat-only interactions (conversations, Q&A, advice), Ollama works well and provides complete data privacy at zero API cost. For agent tasks requiring tool execution (web search, file operations, calendar, email), cloud APIs are more reliable and surprisingly cheap (DeepSeek at $3–8/month, Gemini Flash free tier). The hybrid approach — Ollama for heartbeats and private chat, cloud for tool-dependent tasks — gives you the best of both.
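The hybrid split can be expressed as a small routing rule. This is a hypothetical sketch, not an OpenClaw feature: the `choose_backend` function and the backend labels are made up for illustration, and how you wire the result into your setup depends on your configuration.

```python
def choose_backend(needs_tools: bool, private: bool) -> str:
    """Pick where a task should run under the hybrid approach."""
    if private:
        # Private data stays local, even though local tool calls are unreliable.
        return "ollama"
    # Tool-dependent work goes to a cloud provider; plain chat stays local.
    return "cloud" if needs_tools else "ollama"
```

Heartbeats and private chat map to `choose_backend(False, True)`, while a web search task with no privacy constraint maps to `choose_backend(True, False)`.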

Will the Ollama tool calling issue be fixed in OpenClaw?

The community has proposed a fix: disable streaming when tools are present in the request. The patch is straightforward and the community supports it. As of March 2026, it hasn't been merged into a release. When it lands, models with native tool calling support (hermes-2-pro, mistral:7b) should work correctly. The "model does not support tools" error for models without tool training will remain regardless of the streaming fix.

Related Reading

- OpenClaw Ollama "Fetch Failed" Fix — Connection errors between OpenClaw and Ollama

- OpenClaw Local Model Not Working: Complete Fix Guide — All local model issues in one guide

- OpenClaw Ollama Guide: Complete Setup — Full Ollama integration from scratch

- Cheapest OpenClaw AI Providers — Cloud alternatives when local models fall short