The "free AI agent" dream has a hardware price tag. Here's the honest breakdown of what runs, what struggles, and what's not worth the electricity.

A developer in our community bought a used RTX 3090 specifically to run local models with OpenClaw. He spent $650 on the GPU, $80 on a new power supply to handle it, and a weekend installing everything. Ollama loaded. The model ran. He typed "hello" and got a response in under a second.

Then he asked the agent to search the web and summarize the results. Nothing happened. The model wrote a paragraph about how it would search the web if it could. No actual web search. No tool execution.

He'd spent $730 and a weekend to build an expensive chatbot that couldn't perform agent tasks. The hardware worked perfectly. The problem was a fundamental limitation of the OpenClaw local model setup that he didn't know about: streaming breaks tool calling for every model served through Ollama.

This guide covers the actual hardware requirements for running local models with OpenClaw, what those local models can and can't do once you have the hardware, and whether the total cost of ownership actually saves money compared to cloud APIs.

The hardware floor: what you need at minimum

Running Ollama with OpenClaw requires more resources than most people expect. The bottleneck isn't OpenClaw itself (it runs fine on minimal hardware). It's the local model that needs serious compute.

RAM is the primary constraint. Local models load entirely into memory. A 7B parameter model (the smallest useful size) needs roughly 4-8GB of RAM just for the model weights. Add OpenClaw's own memory footprint, the operating system, and any other services, and you need 16GB minimum. For anything larger than 7B parameters, 32GB is the practical floor.
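Where does the 4-8GB figure come from? Weight memory is roughly the parameter count times the bytes stored per parameter, so a 4-bit quantized 7B model lands near 3.5GB and an 8-bit one near 7GB. Here is a minimal Python sketch of that arithmetic. It assumes decimal gigabytes and ignores the KV cache and runtime overhead, so the real footprint is somewhat higher:

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Rough memory needed for model weights alone, in decimal GB.

    parameters x (bits per weight / 8) bytes. Ignores the KV cache,
    runtime buffers, and quantization metadata, so real usage is higher.
    """
    bytes_per_weight = bits_per_weight / 8
    # (params_billion * 1e9) params * bytes_per_weight / 1e9 bytes per GB
    return params_billion * bytes_per_weight


if __name__ == "__main__":
    for params in (7, 14, 30):
        for bits in (4, 8):
            gb = weight_memory_gb(params, bits)
            print(f"{params}B at {bits}-bit: ~{gb:.1f} GB of weights")
```

At 4-bit, the same arithmetic puts a 30B model near 15GB of weights before any context is allocated.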

VRAM matters more than RAM if you have a GPU. Running models on a dedicated GPU is dramatically faster than CPU inference. An NVIDIA RTX 3060 with 12GB VRAM can run 7B models comfortably. An RTX 3090 or 4090 with 24GB VRAM can handle models up to about 30B parameters. The community-recommended glm-4.7-flash model needs roughly 25GB of VRAM, which puts it at the top tier and slightly beyond even a 24GB card.

Apple Silicon changes the math. M1/M2/M3/M4 Macs with unified memory handle local models surprisingly well because the GPU and CPU share the same memory pool. A Mac Mini M4 with 24GB unified memory runs 7B-14B models smoothly. A Mac Studio M2 Ultra with 64GB+ unified memory runs the larger models that give the best results.

CPU inference works but is painfully slow. If you don't have a dedicated GPU or Apple Silicon, Ollama falls back to CPU inference. A 7B model on a modern CPU generates maybe 2-5 tokens per second, while cloud APIs typically return a complete response in 1-2 seconds. CPU inference makes the agent feel like it's thinking underwater.
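To see why 2-5 tokens per second feels so slow, convert the rate into wall-clock time. A short Python sketch, assuming a hypothetical 300-token reply and ignoring prompt processing:

```python
def generation_seconds(reply_tokens: int, tokens_per_second: float) -> float:
    """Time to generate a reply at a steady decode rate.

    Ignores prompt processing, which on a CPU adds its own delay before
    the first token appears, so real waits are longer.
    """
    return reply_tokens / tokens_per_second


if __name__ == "__main__":
    reply_tokens = 300  # hypothetical length of a typical agent reply
    for rate in (2, 5):  # the CPU range quoted above, tokens per second
        secs = generation_seconds(reply_tokens, rate)
        print(f"{reply_tokens} tokens at {rate} tok/s: {secs:.0f} s")
```

At those rates a 300-token reply takes one to two and a half minutes, versus the second or two a cloud API needs.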

For the complete breakdown of how local models interact with OpenClaw and the five most common failure modes, our troubleshooting guide covers each issue with specific fixes.

The models worth running locally (and the ones that aren't)

Not all local models perform equally with OpenClaw. The community has tested extensively, and the consensus is clear.

Models that work well for chat

glm-4.7-flash is the community favorite. Multiple users in GitHub Discussion #2936 call it "huge bang for the buck." Strong reasoning and code generation. The catch: it needs roughly 25GB of VRAM, which means an RTX 4090 or a Mac with 32GB+ unified memory. Even the 4090's 24GB falls slightly short, so expect a small part of the model to run from system RAM; on smaller cards, that share grows.

qwen3-coder-30b performs well for code-heavy conversations. Requires significant hardware (24GB+ RAM for quantized versions). Good for developers who want a local coding assistant.

hermes-2-pro and mistral:7b are Ollama's official recommendations for models with native tool calling support. They're lightweight enough to run on 16GB machines. They're also the models most likely to work properly when the streaming fix eventually lands in OpenClaw.

Models to avoid

Anything under 7B parameters. Models like phi-3-mini (3.8B) and qwen2.5:3b technically run but produce unreliable results for agent tasks. Context tracking degrades quickly. Instructions get ignored or misinterpreted. Not worth the electricity.

Oversized models on insufficient hardware. If your hardware forces heavy quantization (Q2 or Q3), the model quality drops dramatically. You're better off running a smaller model at higher quality than a large model at extreme quantization.

Ollama's own OpenClaw integration docs recommend setting the context window to at least 64K tokens. Many popular models default to much less. Configure this explicitly to avoid the agent running out of context mid-conversation.
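How you set this inside OpenClaw depends on its provider settings, which the sketch below doesn't assume. At the Ollama level, the knob is the `num_ctx` option on each request (or a `PARAMETER num_ctx` line in a Modelfile). A minimal Python sketch using only the standard library, assuming Ollama's default address of `localhost:11434` and using `mistral:7b` as an example model:

```python
import json
import urllib.request

OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"  # Ollama's default local address


def chat_payload(model: str, prompt: str, num_ctx: int = 65536) -> dict:
    """Build an Ollama /api/chat request body with an explicit context window."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        # num_ctx is Ollama's context-length option, in tokens (65536 = 64K)
        "options": {"num_ctx": num_ctx},
        "stream": False,
    }


def send(payload: dict, url: str = OLLAMA_CHAT_URL) -> dict:
    """POST the payload to a running Ollama server and return the parsed reply."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=300) as resp:
        return json.loads(resp.read())


if __name__ == "__main__":
    # Print the request body only; call send() when an Ollama server is running.
    print(json.dumps(chat_payload("mistral:7b", "hello"), indent=2))
```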

For guidance on choosing the right model for your agent's specific tasks, our model comparison covers cost-per-task data across local and cloud providers.

The elephant in the room: tool calling doesn't work

Here's what most discussions of OpenClaw local model hardware leave out.

You can build the most powerful local inference setup imaginable. RTX 4090. 128GB RAM. Fastest SSD. Perfect Ollama configuration. And your agent still can't perform actions.

The reason is documented in GitHub Issue #5769: OpenClaw sends all model requests with streaming enabled. Ollama's streaming implementation doesn't correctly return tool call responses. The model decides to call a tool (web search, file read, shell command), generates the tool call, but the streaming protocol drops it. OpenClaw never receives the instruction.

The result: your local agent can have conversations but can't execute tools. No web searches. No file operations. No calendar checks. No email skills. No browser automation. The model writes about what it would do instead of doing it.

This affects every model running through Ollama on OpenClaw. The community has proposed a fix (disabling streaming when tools are present), but as of March 2026, it hasn't been merged into a release.
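The proposed fix is easy to picture at the request level. Below is a minimal Python sketch against Ollama's `/api/chat` request format, not OpenClaw's actual code: `build_chat_request`, `extract_tool_calls`, and the `web_search` tool are hypothetical names, and the sample response only illustrates the shape a non-streaming reply takes when the model calls a tool.

```python
from typing import Optional

# Illustrative tool definition in Ollama's /api/chat "tools" format.
# The tool name and schema are hypothetical.
WEB_SEARCH_TOOL = {
    "type": "function",
    "function": {
        "name": "web_search",
        "description": "Search the web and return result snippets",
        "parameters": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
    },
}


def build_chat_request(model: str, messages: list, tools: Optional[list] = None) -> dict:
    """Build an /api/chat body, turning streaming off whenever tools are attached."""
    payload: dict = {"model": model, "messages": messages}
    if tools:
        payload["tools"] = tools
    # The proposed workaround: stream plain chat, but never stream tool requests,
    # so the tool call arrives intact in a single response object.
    payload["stream"] = not tools
    return payload


def extract_tool_calls(response: dict) -> list:
    """Return the tool calls from a non-streaming /api/chat response, if any."""
    return response.get("message", {}).get("tool_calls") or []


if __name__ == "__main__":
    req = build_chat_request(
        "mistral:7b",
        [{"role": "user", "content": "Search for Ollama release notes"}],
        tools=[WEB_SEARCH_TOOL],
    )
    print(req["stream"])  # False: tools are present
    # Illustrative response shape (not captured from a real run)
    sample = {
        "message": {
            "role": "assistant",
            "content": "",
            "tool_calls": [
                {"function": {"name": "web_search",
                              "arguments": {"query": "Ollama release notes"}}}
            ],
        },
        "done": True,
    }
    for call in extract_tool_calls(sample):
        print(call["function"]["name"], call["function"]["arguments"])
```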

Building expensive local hardware for OpenClaw tool calling is like buying a race car for a track that isn't built yet. The hardware will work eventually. But right now, local models are limited to chat-only interactions.

The real cost of "free" local models

The appeal of local models is zero API costs. But "zero API costs" and "zero cost" are very different things.

Let's do the actual math.

Hardware cost. A Mac Mini M4 with 24GB unified memory costs around $600. An RTX 4090 costs $1,600-2,000. A used RTX 3090 runs $500-700. Add a power supply upgrade ($80-120) if your existing PSU can't handle the GPU.

Electricity. A Mac Mini M4 running 24/7 adds roughly $3-5/month to your power bill. A desktop with an RTX 4090 under load draws significantly more, roughly $15-30/month depending on electricity rates and inference frequency.

Your time. The initial setup takes 2-4 hours for someone comfortable with the command line. Ongoing maintenance (model updates, Ollama updates, troubleshooting WSL2 networking issues, resolving model discovery timeouts) adds 1-3 hours per month.

Hardware depreciation. That $600 Mac Mini depreciates. That $1,600 GPU depreciates faster. Over two years, you're losing $25-65/month in hardware value.

Total monthly cost of local model ownership: $30-100/month when you factor in hardware amortization, electricity, and time.
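The total is just the recurring costs added together. A minimal Python sketch, assuming straight-line depreciation to zero over two years and a hypothetical $10/hour value on your maintenance time (substitute your own rate):

```python
def local_monthly_cost(
    hardware_usd: float,
    electricity_usd_per_month: float,
    maintenance_hours_per_month: float,
    hourly_rate_usd: float,
    amortization_months: int = 24,
) -> float:
    """Monthly cost of owning local inference hardware.

    Straight-line depreciation to zero over amortization_months, plus
    electricity, plus maintenance time valued at hourly_rate_usd.
    """
    depreciation = hardware_usd / amortization_months
    time_cost = maintenance_hours_per_month * hourly_rate_usd
    return depreciation + electricity_usd_per_month + time_cost


if __name__ == "__main__":
    # Inputs from the ranges above; the $10/hour time value is a hypothetical placeholder.
    mac_mini = local_monthly_cost(600, 4, 1, hourly_rate_usd=10)
    rtx_4090 = local_monthly_cost(1600, 15, 1, hourly_rate_usd=10)
    print(f"Mac Mini M4: ${mac_mini:.2f}/month")
    print(f"RTX 4090 desktop: ${rtx_4090:.2f}/month")
```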

Meanwhile, cloud APIs in 2026 are absurdly cheap. DeepSeek V3.2 costs $0.28/$0.42 per million input/output tokens, which works out to $3-8/month for a moderately active agent. Gemini 2.5 Flash offers 1,500 free requests per day. Claude Haiku runs $1/$5 per million input/output tokens, typically $5-10/month for moderate usage.

And critically: cloud providers have working tool calling. Your agent can actually do things.
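API cost is tokens times price, computed separately for input and output because providers price them differently. A Python sketch, assuming a hypothetical month of 10 million input and 2 million output tokens (agent traffic tends to be input-heavy because the prompt and context are resent with each call):

```python
def api_monthly_cost(
    input_mtok: float,
    output_mtok: float,
    usd_per_m_input: float,
    usd_per_m_output: float,
) -> float:
    """Monthly API spend: millions of tokens times the per-million price, per direction."""
    return input_mtok * usd_per_m_input + output_mtok * usd_per_m_output


if __name__ == "__main__":
    # Hypothetical month: 10M input tokens, 2M output tokens
    deepseek = api_monthly_cost(10, 2, 0.28, 0.42)
    print(f"DeepSeek V3.2: ${deepseek:.2f}/month")
```

With those volumes DeepSeek V3.2 comes to about $3.64. Plug in your own token counts from your provider's usage dashboard to get a real figure.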

For the full comparison of which cloud providers cost what for OpenClaw, our provider guide covers five alternatives that are cheaper than most people expect.

When local hardware genuinely makes sense

I've just spent several paragraphs explaining why local models cost more and do less than cloud APIs. Let me be fair about the three scenarios where the hardware investment is justified.

Complete data sovereignty

If your data absolutely cannot leave your network, local models are the only option. Government agencies, defense contractors, healthcare organizations with strict HIPAA requirements, legal firms handling privileged communications. In these environments, compliance rules can forbid sending data to any third-party API at all.

For these use cases, the tool calling limitation is a real constraint, but conversational interaction with sensitive data is still valuable. A local agent that can discuss classified documents or answer questions about patient records without any data leaving the building is worth the hardware cost.

Air-gapped and offline environments

No internet means no API calls. Period. If you need an AI assistant in a facility without reliable connectivity (remote installations, secure facilities, maritime environments, some manufacturing floors), local models are the only path.

Hybrid heartbeat routing

This is the practical compromise that makes the most financial sense. Use a local Ollama model for heartbeats (the 48 daily status checks, one every 30 minutes, that consume tokens on cloud providers) and route everything else to a cloud model that has working tool calling.

Heartbeats don't require tool calling. They're simple status pings. Running them locally saves $4-15/month depending on which cloud model would otherwise handle them. Set the heartbeat model to your local Ollama instance and the primary model to a cloud provider like Claude Sonnet or DeepSeek.
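The routing rule itself is simple: look up the task type and fall back to the cloud model. A minimal Python sketch of that logic; the `ModelRoute` table, the task names, and the model identifiers are hypothetical placeholders rather than OpenClaw's configuration format, and the 30-minute interval is simply what 48 checks a day works out to:

```python
from dataclasses import dataclass

HEARTBEAT_INTERVAL_MINUTES = 30
HEARTBEATS_PER_DAY = 24 * 60 // HEARTBEAT_INTERVAL_MINUTES  # 48


@dataclass(frozen=True)
class ModelRoute:
    provider: str
    model: str


# Hypothetical routing table, not OpenClaw's configuration schema.
ROUTES = {
    "heartbeat": ModelRoute("ollama", "mistral:7b"),  # no tools needed
    "default": ModelRoute("anthropic", "claude-sonnet"),  # placeholder id; working tool calling
}


def pick_route(task_kind: str) -> ModelRoute:
    """Send heartbeats to the local model and everything else to the cloud."""
    return ROUTES.get(task_kind, ROUTES["default"])


def monthly_heartbeat_tokens(tokens_per_heartbeat: int, days: int = 30) -> int:
    """Tokens the heartbeat model consumes per month (the cloud spend you avoid)."""
    return HEARTBEATS_PER_DAY * days * tokens_per_heartbeat


if __name__ == "__main__":
    print(pick_route("heartbeat"))
    print(pick_route("web_search"))
    # 2,000 tokens per heartbeat is a hypothetical figure
    print(monthly_heartbeat_tokens(2000))  # 2880000
```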

For the full model routing configuration including the hybrid local/cloud approach, our routing guide covers the setup pattern.

If managing local hardware, cloud APIs, and model routing configuration feels like more infrastructure work than your agent is worth, BetterClaw handles model routing across 28+ providers with a dashboard dropdown. $19/month per agent, BYOK. Pick your models. Set your limits. Deploy in 60 seconds. No hardware to buy, no Ollama to debug, no streaming bugs to work around.

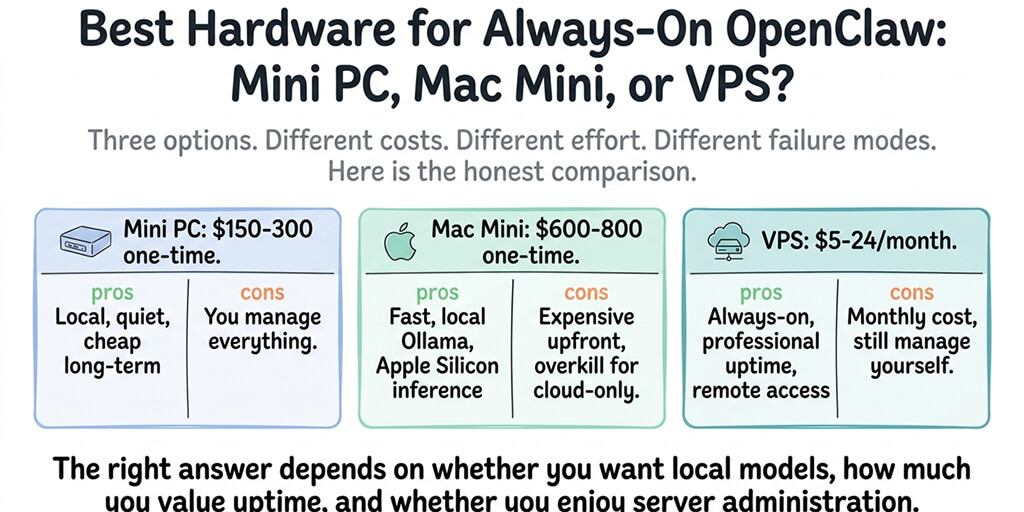

The hardware buying guide (if you're still committed)

If your use case genuinely requires local models, here's what to buy at each budget level.

Budget tier ($600-800). Mac Mini M4 with 24GB unified memory. Runs 7B-14B models at decent speed. Quiet. Low power consumption. The best value for local OpenClaw. Handles chat interactions and hybrid heartbeat routing without issue.

Mid-range tier ($1,200-1,500). Used RTX 3090 (24GB VRAM) in an existing desktop, or a Mac Mini M4 Pro with 48GB unified memory. Runs models up to 30B parameters. Better reasoning quality, faster inference. Good enough for the heavier local models.

Power user tier ($2,500-4,000). Mac Studio M2 Ultra with 64GB+ unified memory, or a workstation with an RTX 4090. Runs qwen3-coder-30b at full speed; glm-4.7-flash's roughly 25GB footprint fits the Mac Studio's memory pool but slightly overflows the 4090's 24GB of VRAM. This is what the community builders running five-agent setups use.

What not to buy. Don't rent a cloud GPU instance (Lambda Labs, Vast.ai) to run Ollama with OpenClaw. At typical rates of $0.50-3.00/hour, an instance left running 24/7 adds up to $360-2,160/month. That's 10-100x more expensive than cloud API costs. GPU instances make sense for training models. They make no sense for an always-on agent's inference.
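The monthly range assumes the instance stays up around the clock, as an always-on agent's model server would. A quick Python check of the arithmetic, assuming a 30-day month:

```python
HOURS_PER_MONTH = 24 * 30  # 720 hours: the instance is left running around the clock


def instance_monthly_cost(usd_per_hour: float) -> float:
    """Monthly bill for a rented GPU instance that is never shut down."""
    return usd_per_hour * HOURS_PER_MONTH


if __name__ == "__main__":
    for rate in (0.50, 3.00):
        print(f"${rate:.2f}/hour -> ${instance_monthly_cost(rate):,.0f}/month")
```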

The future: when local models will actually work

The streaming plus tool calling bug will get fixed. The proposed patch is straightforward. The community wants it. It's a matter of when, not if.

When it lands, the best local models (glm-4.7-flash, qwen3-coder-30b, hermes-2-pro) will become genuinely useful for agent tasks. Tool calling will work. Skills will execute. The gap between local and cloud will narrow significantly for tasks that don't require frontier-level reasoning.

But "narrowing" isn't "closing." Cloud models like Claude Sonnet and GPT-4o will still outperform local models on complex multi-step reasoning, long-context accuracy, and prompt injection resistance. The hardware requirements for running competitive local models (25GB+ VRAM, 64GB+ RAM for larger models) put them out of reach for most users.

The practical future is hybrid. Cloud for the tasks that need it. Local for privacy-sensitive conversations and heartbeat cost savings. OpenClaw's model routing architecture already supports this split. The tooling just needs to catch up.

For now, if you need an agent that can act (not just talk), cloud providers are the reliable path. If you need complete privacy for conversational AI, local hardware works today within the chat-only limitation.

Our managed vs self-hosted comparison covers how these choices translate across deployment options, including what BetterClaw handles versus what you manage yourself.

If you want an agent that works with any cloud provider, supports 15+ chat platforms, and deploys without buying hardware or debugging Ollama, give BetterClaw a try. $19/month per agent, BYOK with 28+ providers. 60-second deploy. Docker-sandboxed execution. Your agent runs on infrastructure that's already optimized. You focus on what the agent does, not what it runs on.

Frequently Asked Questions

What hardware do I need to run local models with OpenClaw?

At minimum, 16GB RAM and a modern CPU for 7B parameter models (chat only, slow inference). For a usable experience, 32GB RAM plus, ideally, an NVIDIA GPU with 12GB+ VRAM, or 24GB unified memory on Apple Silicon. For the best local models (glm-4.7-flash, qwen3-coder-30b), you need 24GB+ VRAM (an RTX 4090, which is still slightly short of glm-4.7-flash's roughly 25GB) or 64GB+ unified memory (Mac Studio M2 Ultra). Ollama recommends a context window of at least 64K tokens for OpenClaw compatibility.

How does running local models compare to cloud APIs for OpenClaw?

Local models cost $30-100/month when you factor in hardware depreciation, electricity, and maintenance time. Cloud APIs like DeepSeek ($0.28/$0.42 per million input/output tokens) cost $3-15/month for the same usage level. The critical difference: cloud APIs have working tool calling, meaning your agent can perform actions. Local models through Ollama currently can only handle conversations due to a streaming protocol bug (GitHub Issue #5769).

How do I set up Ollama with OpenClaw?

Install Ollama and pull your chosen model. Pre-load the model before starting OpenClaw to avoid discovery timeouts. Configure your OpenClaw settings with the Ollama provider, setting the context window to at least 64K tokens. Start the gateway and test with a simple message. If you're on WSL2, use the actual network IP instead of localhost. Expect chat to work and tool calling to fail. Total setup time: 2-4 hours for the first attempt.
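The pre-loading step can be scripted against Ollama's HTTP API: a `/api/generate` request that names a model but carries no prompt loads it into memory without generating anything. A minimal Python sketch using only the standard library; the 30-minute `keep_alive`, the 600-second timeout, and `mistral:7b` are example values, and the `host` argument is where the WSL2 network IP would go:

```python
import json
import urllib.request


def ollama_base_url(host: str = "localhost", port: int = 11434) -> str:
    """Ollama's HTTP address; under WSL2, pass the machine's network IP as host."""
    return f"http://{host}:{port}"


def preload_payload(model: str, keep_alive: str = "30m") -> dict:
    """An /api/generate body with no prompt, which asks Ollama to load the model."""
    # keep_alive controls how long the model stays in memory after loading.
    return {"model": model, "keep_alive": keep_alive, "stream": False}


def preload(model: str, host: str = "localhost") -> dict:
    """Load the model before starting OpenClaw, so discovery doesn't wait on it."""
    req = urllib.request.Request(
        ollama_base_url(host) + "/api/generate",
        data=json.dumps(preload_payload(model)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    # Loading a large model from disk can take minutes, hence the generous timeout.
    with urllib.request.urlopen(req, timeout=600) as resp:
        return json.loads(resp.read())


if __name__ == "__main__":
    # No network call here; run preload("mistral:7b") once Ollama is up.
    print(ollama_base_url())
    print(json.dumps(preload_payload("mistral:7b")))
```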

Is running OpenClaw locally cheaper than cloud APIs?

Usually not. A Mac Mini M4 ($600) depreciates roughly $25/month over two years. Add $3-5/month electricity. Add 1-3 hours/month maintenance. Total: $30-40/month for a machine that can only handle chat, not tool calling. DeepSeek via API costs $3-8/month with full agent capabilities. The exception: if you already own suitable hardware and need data sovereignty for compliance reasons, the marginal cost of running Ollama is just electricity and time.

Can I use both local and cloud models with the same OpenClaw agent?

Yes. OpenClaw's model routing supports hybrid configurations. The most practical setup: route heartbeats (48 daily status checks) to your local Ollama model to save $4-15/month on cloud token costs, and route all other tasks to a cloud provider like Claude Sonnet or DeepSeek that has working tool calling. This gives you cost savings on heartbeats and full agent functionality for everything else.