Your agent says the task is done. It isn't. It invents files that don't exist, reports prices that are wrong, and confidently completes work it never started. Here are 5 fixes ranked by impact.

A developer on the OpenClaw Discord posted this three weeks ago: "I have been facing a lot of hallucination issues from OpenClaw where it keeps saying things are done but are really not. I can see it starting to ignore some of my questionings. It's starting to run away from problems."

Another developer on DEV.to shared something worse: his agent told him a £716 visa cost £70,000. Not a rounding error. A fabrication that would have broken his entire budget if he hadn't caught it.

The agent doesn't know it's wrong. That's the terrifying part.

OpenClaw agent hallucination isn't an unfixable model problem. It's a context management problem, an instruction problem, and a verification problem. Here are five fixes ranked by how much they actually reduce hallucination in practice.

Fix 1: Replace "black box" skills with AGENTS.md index entries (biggest impact)

The breakthrough: A developer on DEV.to described spending weeks building complex "Research Skills" to force his agent to be accurate. The skills became black boxes. He pushed a button, the AI disappeared into a script, and came back with wrong answers.

Then he read research from Vercel's AI team: separating project knowledge into indices (markdown) and skills (executable code) dramatically reduces hallucination.

Why it works: When the agent has structured reference material in AGENTS.md (a knowledge index), it looks up facts instead of generating them. When it has only a skill (executable code), it guesses at inputs and invents outputs it thinks the skill should produce.

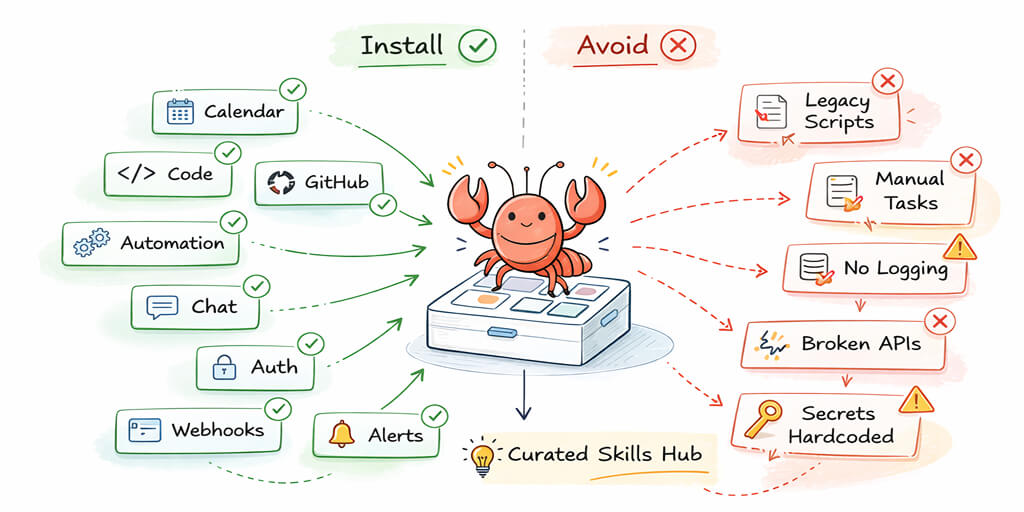

The fix: Delete complex skills that wrap simple lookups. Replace them with index entries in AGENTS.md. If your agent needs to know your pricing, put the pricing in AGENTS.md. Don't make it call a skill that fetches pricing from an API. The index is cheaper (no tool call), faster (no round-trip), and more accurate (no fabrication of missing API fields).

The index vs skill rule: If the information is static and known, put it in AGENTS.md as an index entry. If the action requires executing something (sending email, calling an API, writing files), keep it as a skill. Most hallucinations happen when skills are used for lookups that should be index entries.
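To make "looking up instead of generating" concrete, here is a minimal Python sketch. It assumes a hypothetical AGENTS.md layout with `## Section` headings and `- key: value` bullets; that layout is an illustration, not a format OpenClaw defines, and `parse_index` and `lookup` are hypothetical helpers rather than OpenClaw APIs. The behavior that matters is the miss: the lookup returns an explicit "not in index" marker instead of a plausible-looking guess.

```python
import re

# Hypothetical AGENTS.md excerpt. The "## Section" + "- key: value" layout is an
# illustrative assumption, not a format OpenClaw defines.
AGENTS_MD = """\
# AGENTS.md

## Pricing
- pro plan: $19/month per agent
- free tier: 1 agent, BYOK

## Actions (skills)
- send email: use the email skill
"""


def parse_index(markdown: str) -> dict:
    """Turn '## Section' headings and '- key: value' bullets into nested dicts."""
    index = {}
    section = None
    for line in markdown.splitlines():
        heading = re.match(r"^##\s+(.+)$", line)
        if heading:
            section = heading.group(1).strip().lower()
            index[section] = {}
            continue
        entry = re.match(r"^-\s+([^:]+):\s*(.+)$", line)
        if entry and section is not None:
            index[section][entry.group(1).strip().lower()] = entry.group(2).strip()
    return index


def lookup(index: dict, section: str, key: str) -> str:
    """Return the stored fact verbatim, or an explicit miss instead of a guess."""
    value = index.get(section.lower(), {}).get(key.lower())
    return value if value is not None else f"NOT IN INDEX: {section}/{key}"


if __name__ == "__main__":
    idx = parse_index(AGENTS_MD)
    print(lookup(idx, "Pricing", "pro plan"))    # $19/month per agent
    print(lookup(idx, "Pricing", "enterprise"))  # NOT IN INDEX: Pricing/enterprise
```

Keeping actions under their own heading mirrors the rule: the index records that a skill exists, while the skill itself does the executing.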

Fix 2: Trim your context window (the hidden cause of hallucination)

Here's what nobody tells you about agent hallucination.

Context bloat causes hallucination. When your system prompt is 15,000+ tokens (AGENTS.md + SOUL.md + MEMORY.md + 23 tool schemas), the model's attention is spread across too much information. It starts confusing details from one section with details from another. It "remembers" instructions that don't exist because it's interpolating between overlapping context.

Research supports this: models show a "lost-in-the-middle" effect. They use information at the beginning and end of a long context more reliably than information in the middle. If your agent's critical instructions are buried in the middle of a 10,000-token SOUL.md, the model doesn't reliably attend to them.

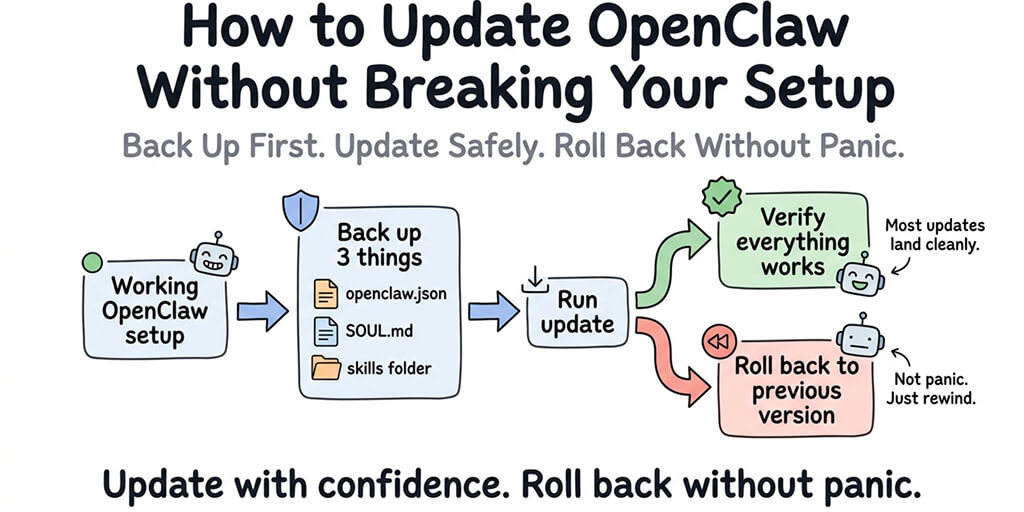

The fix: Keep AGENTS.md + SOUL.md + MEMORY.md under 5,000 tokens combined. Set contextTokens: 50000 to prevent unbounded context growth. Start new sessions regularly with /new to clear accumulated context. Our complete context optimization guide covers the token math and specific trim targets.
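A quick way to check the 5,000-token target is to estimate the size of the three workspace files. This Python sketch uses the common rule of thumb of roughly four characters per token for English text; that ratio is an assumption, not your model's actual tokenizer, so treat the numbers as estimates and leave headroom. The `"."` passed to `load_workspace` is a placeholder: point it at wherever your agent's workspace files live.

```python
from pathlib import Path

# Assumption: ~4 characters per token for English prose. Real tokenizers vary
# by model, so this only approximates what the model will actually count.
CHARS_PER_TOKEN = 4
WORKSPACE_BUDGET = 5_000  # combined target for AGENTS.md + SOUL.md + MEMORY.md
WORKSPACE_FILES = ("AGENTS.md", "SOUL.md", "MEMORY.md")


def estimate_tokens(text: str) -> int:
    """Ceiling of len(text) / CHARS_PER_TOKEN."""
    return -(-len(text) // CHARS_PER_TOKEN)


def check_budget(texts: dict, budget: int = WORKSPACE_BUDGET):
    """Return (total, per-file estimates, whether the total fits the budget)."""
    per_file = {name: estimate_tokens(text) for name, text in texts.items()}
    total = sum(per_file.values())
    return total, per_file, total <= budget


def load_workspace(directory: str) -> dict:
    """Read whichever of the three files exist in the given directory."""
    texts = {}
    for name in WORKSPACE_FILES:
        path = Path(directory) / name
        if path.is_file():
            texts[name] = path.read_text(encoding="utf-8")
    return texts


if __name__ == "__main__":
    total, per_file, fits = check_budget(load_workspace("."))  # placeholder path
    print(f"~{total} tokens (budget {WORKSPACE_BUDGET}): {'OK' if fits else 'TRIM'}")
    # Largest files first, since they are the best trim targets.
    for name, n in sorted(per_file.items(), key=lambda kv: kv[1], reverse=True):
        print(f"  {name}: ~{n} tokens")
```

This only covers the three workspace files; tool schemas also consume context and are not counted here.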

Fix 3: Add verification instructions to your SOUL.md

The problem: By default, OpenClaw agents don't verify their own work. The agent says "I've sent the email" without checking whether the email tool returned a success response. It says "I've created the file" without confirming the file exists.

The fix: Add explicit verification rules to your SOUL.md:

"After completing any task, verify the result before reporting. If you used a tool, check the tool's response for errors. If you created a file, read it back to confirm. If you sent a message, confirm delivery. Never report a task as complete without verification evidence."

Why this works: Claude Opus 4.7 introduced self-verification behavior ("devises ways to verify its own outputs before reporting back"). But this behavior isn't activated by default in OpenClaw. You need to explicitly instruct the agent to verify. Other models (GPT-5.5, DeepSeek V4) don't self-verify at all without instructions.
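The verification rule can be illustrated outside the agent. This Python sketch shows the "check the response, then read it back" pattern for file creation; `write_file_tool` is a hypothetical stand-in for whatever file tool your agent calls, not an OpenClaw function. The design choice that matters is that a DONE report is produced only after independent evidence (the read-back) agrees with the tool's claim.

```python
import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass
class ToolResult:
    ok: bool
    detail: str


def write_file_tool(path: Path, content: str) -> ToolResult:
    """Hypothetical file tool: reports errors in its result instead of raising."""
    try:
        path.write_text(content, encoding="utf-8")
        return ToolResult(ok=True, detail=f"wrote {len(content)} chars")
    except OSError as exc:
        return ToolResult(ok=False, detail=str(exc))


def create_file_and_report(path: Path, content: str) -> str:
    result = write_file_tool(path, content)
    # Rule 1: check the tool's response for errors.
    if not result.ok:
        return f"FAILED: tool error: {result.detail}"
    # Rule 2: read the file back; the tool's "ok" alone is not evidence.
    if not path.is_file():
        return f"FAILED: {path} does not exist after write"
    if path.read_text(encoding="utf-8") != content:
        return f"FAILED: {path} content differs from what was written"
    # Only now report completion, with the evidence stated.
    return f"DONE: {path} exists, {len(content)} chars verified by read-back"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        print(create_file_and_report(Path(tmp) / "notes.txt", "hello"))
    print(create_file_and_report(Path("/no/such/dir/notes.txt"), "hello"))
```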

For more on agent configuration, our OpenClaw best practices guide covers SOUL.md patterns that reduce both hallucination and token waste.

Fix 4: Use the right model for the task (model mismatch causes hallucination)

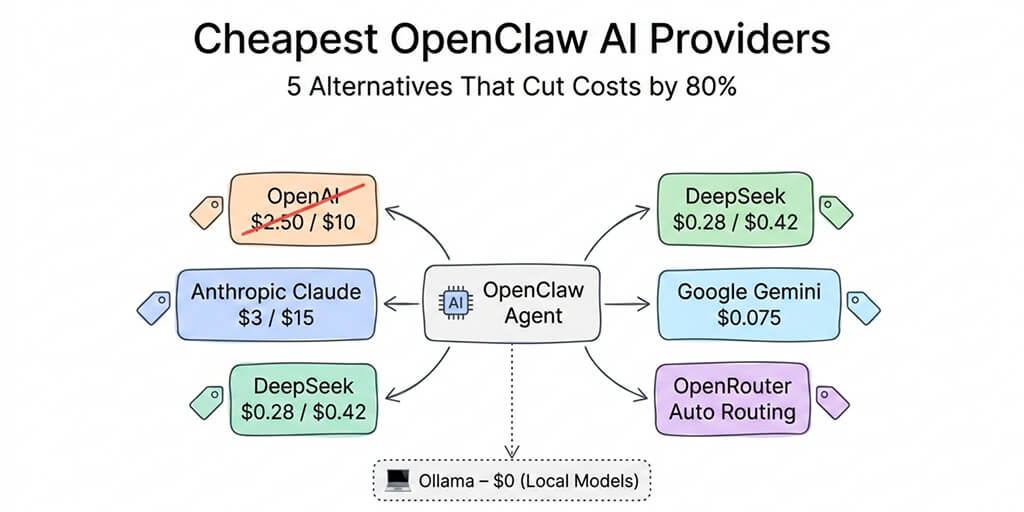

The problem: Different models hallucinate at different rates on different tasks. Using a cheap model for complex reasoning produces more fabrication. Using a powerful model for simple tasks wastes money without reducing hallucination.

The pattern from real usage:

- DeepSeek V4 Flash ($0.14/M tokens): Good for routine Q&A, FAQ, scheduling. Hallucinates on multi-step research, number-heavy tasks, and anything requiring precise recall of specific facts.

- Claude Sonnet 4.6 ($3/$15/M tokens): The sweet spot for most agent tasks. Low hallucination rate on structured tasks. Good instruction following.

- Claude Opus 4.7 ($5/$25/M tokens): Lowest hallucination rate overall, especially on complex multi-step tasks. Self-verification behavior reduces "says done but isn't" errors. But its new tokenizer produces 12-35% more tokens for the same text, which increases cost.
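To see what the tokenizer change means in dollars, here is a small Python cost calculator using the input/output prices per million tokens listed above. The workload (10M input and 1M output tokens per month) is a made-up example, and the sketch assumes the 12-35% increase applies equally to input and output tokens, measured against counts from the older tokenizer; your actual increase depends on your text.

```python
def cost_usd(input_tokens: int, output_tokens: int,
             input_price_per_m: float, output_price_per_m: float,
             token_inflation: float = 0.0) -> float:
    """Dollar cost for a workload; token_inflation=0.12 means 12% more tokens."""
    factor = 1.0 + token_inflation
    total = (input_tokens * factor * input_price_per_m
             + output_tokens * factor * output_price_per_m) / 1_000_000
    return round(total, 2)


if __name__ == "__main__":
    # Hypothetical monthly workload, counted with the older tokenizer.
    inp, out = 10_000_000, 1_000_000
    print("Sonnet 4.6:", cost_usd(inp, out, 3, 15))              # 45.0
    print("Opus 4.7, no increase:", cost_usd(inp, out, 5, 25))   # 75.0
    print("Opus 4.7, +12%:", cost_usd(inp, out, 5, 25, 0.12))    # 84.0
    print("Opus 4.7, +35%:", cost_usd(inp, out, 5, 25, 0.35))    # 101.25
```

On that hypothetical workload, the tokenizer change adds roughly $9 to $26 per month on top of a $75 Opus baseline, which is the trade-off to weigh against its lower hallucination rate.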

The fix: Route complex, high-stakes tasks (research, financial data, client communications) to Opus or Sonnet. Route routine tasks (FAQ, scheduling, simple Q&A) to V4 Flash. Don't use one model for everything.
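Routing can start as a simple lookup from task type to model. This Python sketch is illustrative only: the task categories come from the fix above, but the model strings are placeholders (use the exact model IDs your provider expects), `route` is a hypothetical helper rather than an OpenClaw setting, and this version picks Opus for the high-stakes tier. Unrecognized tasks default to the Sonnet tier instead of the cheapest model, so a misclassified task errs toward accuracy rather than cost.

```python
from enum import Enum


class Tier(Enum):
    ROUTINE = "routine"
    STANDARD = "standard"
    HIGH_STAKES = "high_stakes"


# Placeholder model names; substitute the IDs your provider actually uses.
MODEL_FOR_TIER = {
    Tier.ROUTINE: "deepseek-v4-flash",
    Tier.STANDARD: "claude-sonnet-4.6",
    Tier.HIGH_STAKES: "claude-opus-4.7",
}

HIGH_STAKES_TASKS = {"research", "financial_data", "client_communication"}
ROUTINE_TASKS = {"faq", "scheduling", "simple_qa"}


def classify(task_type: str) -> Tier:
    task = task_type.strip().lower()
    if task in HIGH_STAKES_TASKS:
        return Tier.HIGH_STAKES
    if task in ROUTINE_TASKS:
        return Tier.ROUTINE
    # Unknown work goes to the middle tier, not the cheapest one.
    return Tier.STANDARD


def route(task_type: str) -> str:
    return MODEL_FOR_TIER[classify(task_type)]


if __name__ == "__main__":
    for task in ("FAQ", "financial_data", "summarize_meeting"):
        print(task, "->", route(task))
```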

If managing model routing, context limits, verification instructions, and AGENTS.md structure sounds like more agent tuning than you want, BetterClaw's smart context management reduces hallucination at the platform level. We don't inject bloated workspace files. We optimize context delivery. We monitor for anomalous outputs and auto-pause when the agent loops. Free tier with 1 agent and BYOK. $19/month per agent for Pro.

Fix 5: Constrain tool calling (stop the hallucination loop)

The problem: Sometimes the agent hallucinates tool calls. It calls a tool with invented parameters, gets an error, then hallucinates a "fix" by calling the tool again with different invented parameters. This loops until the model hits its token limit or the session stalls.

The BetterClaw blog documented this as one of the 10 most common OpenClaw errors: "agent stuck in loop, hallucinating tool use."

The fix: Set maxToolCalls in your agent config to limit tool calls per turn (5-10 is reasonable). Add to your SOUL.md: "If a tool call fails twice, stop and report the error. Do not retry with guessed parameters." Enable OpenClaw's health monitoring to detect and pause looping agents.
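The two limits in this fix, a per-turn cap and "stop after two failures", can be sketched as a guard around tool execution. In this Python sketch, `run_turn`, `send_email`, and the default of 8 calls are hypothetical (8 is simply a value inside the 5-10 range above); it is not OpenClaw's `maxToolCalls` implementation. The list of proposed calls stands in for what the model would request one at a time, and failures are counted per tool name, which is one reasonable reading of "fails twice".

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class TurnOutcome:
    status: str   # "done", "stopped_failures", or "stopped_cap"
    calls: int    # tool calls actually attempted this turn
    message: str


def send_email(to: str, body: str) -> str:
    """Hypothetical tool stub; invented parameter names raise TypeError."""
    return f"sent to {to}"


TOOLS = {"send_email": send_email}


def run_turn(proposed_calls, tools, max_tool_calls=8, max_failures_per_tool=2):
    failures = Counter()
    made = 0
    for tool_name, args in proposed_calls:
        if made >= max_tool_calls:
            return TurnOutcome("stopped_cap", made,
                               f"hit the limit of {max_tool_calls} tool calls this turn")
        made += 1
        tool = tools.get(tool_name)
        try:
            if tool is None:
                raise LookupError(f"unknown tool {tool_name!r}")
            tool(**args)
        except Exception as exc:  # a production agent would catch narrower errors
            failures[tool_name] += 1
            if failures[tool_name] >= max_failures_per_tool:
                # Stop and report instead of retrying with guessed parameters.
                return TurnOutcome("stopped_failures", made,
                                   f"{tool_name} failed {failures[tool_name]} times; "
                                   f"last error: {exc}")
    return TurnOutcome("done", made, "all proposed calls completed")


if __name__ == "__main__":
    # The model keeps inventing parameter names instead of using `to`.
    guessed = [
        ("send_email", {"recipient": "a@example.com", "body": "hi"}),
        ("send_email", {"email_to": "a@example.com", "body": "hi"}),
        ("send_email", {"address": "a@example.com", "body": "hi"}),
    ]
    print(run_turn(guessed, TOOLS))  # stops after the second failure
```

Returning a structured outcome gives the agent a concrete error to report to the user, which is what the SOUL.md rule asks for, instead of a third guessed retry.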

The honest take (hallucination is a feature, not a bug)

Here's the uncomfortable truth.

Hallucination is what makes these models useful. The ability that lets the model generate creative responses, draft emails in your tone, and solve problems it hasn't seen before is the same ability that lets it invent files that don't exist and report tasks it didn't complete.

You can't eliminate hallucination without eliminating creativity. What you can do is constrain it: give the model facts instead of making it guess (AGENTS.md index), limit how much context it juggles (trim workspace files), force it to verify its work (SOUL.md instructions), match the model to the task (routing), and stop it from looping (tool call limits).

The agents that work best in production aren't the ones that never hallucinate. They're the ones whose operators built the guardrails to catch hallucination before it matters.

If you want those guardrails built into the platform, give BetterClaw a try. Free tier with 1 agent and BYOK. $19/month per agent for Pro. Smart context management. Anomaly detection. Auto-pause on loops. The agent still uses an LLM. But the platform catches the moments when the LLM loses the plot.

Frequently Asked Questions

Why does my OpenClaw agent hallucinate?

Five main causes: bloated context (15,000+ token system prompts cause the model to lose focus), vague instructions (no verification requirements in SOUL.md), wrong model for the task (cheap models hallucinate more on complex tasks), black box skills that should be AGENTS.md index entries, and unconstrained tool calling (the agent loops on failed tool calls with invented parameters). Each cause has a specific fix.

How do I stop my OpenClaw agent from saying tasks are done when they aren't?

Add explicit verification instructions to your SOUL.md: "After completing any task, verify the result before reporting. Check tool responses for errors. Read back files you created. Confirm message delivery. Never report a task as complete without evidence." Claude Opus 4.7 has built-in self-verification behavior, but other models need explicit instructions.

Does the AI model affect OpenClaw hallucination rate?

Yes, significantly. Claude Opus 4.7 has the lowest hallucination rate and built-in self-verification. Claude Sonnet 4.6 is the best value for low-hallucination agent tasks. DeepSeek V4 Flash hallucinates more on complex reasoning but is fine for routine Q&A. Route complex tasks to better models. Don't use one model for everything.

Can I reduce hallucination without switching models?

Yes. Three non-model fixes work immediately: trim workspace files to under 5,000 tokens combined (reduces context confusion), replace complex skills with AGENTS.md index entries (gives the model facts instead of making it guess), and add verification instructions to SOUL.md (forces the agent to check its work). These three fixes reduce hallucination noticeably regardless of model.

Does BetterClaw reduce agent hallucination compared to self-hosted OpenClaw?

BetterClaw's smart context management reduces the context bloat that causes hallucination. The platform doesn't inject 15,000+ tokens of overhead on every call. Anomaly detection catches looping agents (hallucinating tool use) and auto-pauses them. Verified skills eliminate the black-box skill problem. The model still hallucinates sometimes (all LLMs do), but the platform reduces the structural causes.

Related Reading

- OpenClaw Agent Hallucinating? Why It's Describing Tasks Instead of Doing Them: The diagnostic-first companion piece on tool-call failure modes

- Stop Making Your OpenClaw Agent Re-Read Files on Every Message: How to trim AGENTS.md, SOUL.md, and MEMORY.md token bloat

- OpenClaw Best Practices: SOUL.md patterns that reduce hallucination

- OpenClaw Agent Stuck in Loop: Detect and break tool-call retry loops

- 10 Most Common OpenClaw Errors: Quick-reference index of failure modes

- "Model Does Not Support Tools" Fix: Tool-calling compatibility by model

- OpenClaw Context Window Reached: Fix the bloat that causes "lost-in-the-middle"