Your agent gets smarter with every message. It also gets more expensive and eventually crashes. Here's the config that prevents both.

Message 1 worked perfectly. Message 10 was slightly slower. Message 25 cost four times as much as message 1. Message 38 crashed with "context window reached."

My agent just got stupider as it got smarter.

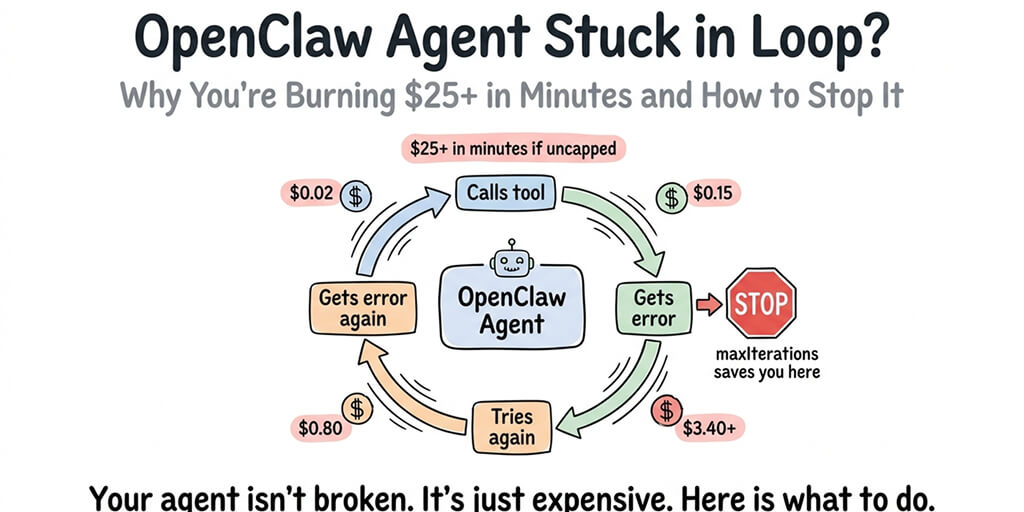

This is the most common error OpenClaw users hit, and the most expensive one to ignore. The context window error doesn't just stop your agent. It means every message leading up to the crash was progressively more expensive, because OpenClaw sends the entire conversation history with every request.

Here's what's actually happening, why the defaults cause it, and the four settings that prevent it.

What "context window reached" actually means

Every AI model has a maximum input size. Claude Sonnet: 200K tokens. GPT-5: 128K tokens. DeepSeek V3: 64K tokens. When the total input (system prompt + conversation history + memory + skill descriptions) exceeds this limit, the model can't process the request.

Here's what nobody tells you about OpenClaw's default behavior.

OpenClaw sends the entire conversation history with every request. Message 1 sends your system prompt plus the first message. Message 2 sends your system prompt plus messages 1 and 2. Message 30 sends your system prompt plus all 30 messages. Each message also includes the model's response, which is often longer than the user's message.

By message 30, a single request contains 20,000-30,000 tokens of input. At Claude Sonnet's $3 per million input tokens, that's $0.06-0.09 per message. At message 1, the same request cost $0.007. You're paying 10x more per message by the end of a conversation than at the beginning.
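To see how the per-request bill climbs, here is a minimal sketch of the arithmetic. The 2,000-token overhead and 800 tokens per exchange are illustrative assumptions, not measured OpenClaw values, and only input tokens are counted.

```python
PRICE_PER_MILLION_INPUT = 3.00  # USD, the Claude Sonnet input price quoted above


def input_tokens(message_number: int, overhead: int = 2_000, per_exchange: int = 800) -> int:
    """Tokens sent with request number `message_number` when nothing is trimmed.

    overhead: assumed system prompt + skills + memory (hypothetical figure).
    per_exchange: assumed user message + model reply (hypothetical figure).
    """
    return overhead + message_number * per_exchange


def request_cost(tokens: int, price_per_million: float = PRICE_PER_MILLION_INPUT) -> float:
    """Input cost in USD for a single request of `tokens` tokens."""
    return tokens * price_per_million / 1_000_000


for n in (1, 10, 25, 30):
    tokens = input_tokens(n)
    print(f"message {n:>2}: {tokens:>6} input tokens, ${request_cost(tokens):.4f}")
```

Plug in your own overhead and average exchange size; the shape of the curve matters more than the exact figures.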

The "context window reached" error is the moment the accumulated history exceeds the model's limit. But the cost damage starts much earlier.

The context window error is the visible crash. The invisible cost is the 20 messages before the crash where each request got progressively more expensive. By the time the error appears, you've already overpaid by 5-10x.

The four settings that prevent it (in order of impact)

Fix 1: Set maxContextTokens (prevents the crash entirely)

What it does: Caps how many tokens OpenClaw includes in each request. When the conversation exceeds this limit, older messages are dropped from the context (but stay in the log).

Recommended value: 6,000-8,000 tokens for most agent tasks. This gives the model enough context for multi-turn conversations without approaching any model's limit.

Why not set it to the model's maximum? Because you pay for every token in the context. Setting maxContextTokens to 200,000 means "send everything every time." Setting it to 8,000 means "send the system prompt plus the last 5-8 exchanges." The quality difference is minimal for most agent tasks. The cost difference is enormous.
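Conceptually, a context cap behaves like a sliding window over the transcript. The sketch below is not OpenClaw's implementation; `trim_history` and `estimate_tokens` are hypothetical helpers, and the 4-characters-per-token estimate is a rough heuristic rather than a real tokenizer.

```python
from typing import Callable


def estimate_tokens(text: str) -> int:
    """Rough heuristic: about 4 characters per token for English text."""
    return max(1, len(text) // 4)


def trim_history(
    system_prompt: str,
    history: list[str],
    max_context_tokens: int,
    count: Callable[[str], int] = estimate_tokens,
) -> list[str]:
    """Keep the system prompt plus the newest messages that fit in the budget."""
    budget = max_context_tokens - count(system_prompt)
    kept: list[str] = []
    for message in reversed(history):  # walk from newest to oldest
        cost = count(message)
        if cost > budget:
            break  # this message and everything older is left out of the request
        kept.append(message)
        budget -= cost
    kept.reverse()  # restore chronological order
    return [system_prompt] + kept
```

The full transcript is untouched; only the list passed to the model shrinks, which is why older messages can stay in the log while no longer costing tokens.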

Our provider guide covers the full set of token-saving configurations for OpenClaw cost optimization.

Fix 2: Use /new to reset the conversation buffer

What it does: The /new command starts a fresh conversation context. The agent's persistent memory (MEMORY.md, facts it's learned about you, preferences) carries over. The message history resets to zero.

When to use it: After completing a task. Before starting a different topic. Every 20-25 messages as a habit. The agent remembers who you are and what it knows about you. It just doesn't carry the full transcript of the last 25 messages.

The community rule of thumb: "Use /new like you'd close a browser tab. When you're done with a task, open a fresh context."
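If it helps to picture what /new keeps and what it drops, here is a toy model. The `Session` class and its fields are hypothetical and only mirror the behavior described above; they are not OpenClaw internals.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    # Persistent facts (the MEMORY.md role in this toy model); survives resets.
    memory: dict[str, str] = field(default_factory=dict)
    # Transcript that would be sent with every request; cleared by new().
    history: list[str] = field(default_factory=list)

    def add_message(self, text: str) -> None:
        self.history.append(text)

    def new(self) -> None:
        """Mimic the effect of /new: drop the transcript, keep persistent memory."""
        self.history.clear()


session = Session(memory={"name": "Ada"})
for i in range(25):
    session.add_message(f"message {i}")
session.new()
print(len(session.history), session.memory)
```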

Fix 3: Route heartbeats to a cheap model

What it does: OpenClaw sends 48 heartbeats per day (one every 30 minutes). Each heartbeat carries the full system prompt and recent conversation context. By default, heartbeats use the same model as conversation messages.

The fix: Route heartbeats to Haiku ($1/$5 per million tokens) or Gemini Flash (free). Heartbeats check for pending tasks and scheduled actions. They don't need Claude Sonnet's reasoning quality. The cheapest model that can read a task queue is sufficient.

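Cost impact: 48 heartbeats/day with a 4,000-token context is 192,000 input tokens per day, or about 5.76M per 30-day month. On Claude Sonnet ($3 per million input tokens) that is approximately $17.28/month; on Haiku ($1 per million) approximately $5.76/month. Monthly saving: about $11.52 from this one setting, before counting output tokens.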
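The heartbeat arithmetic is easy to rerun with your own numbers. This is a minimal sketch that counts input tokens only and assumes a 30-day month; `monthly_heartbeat_cost` is a hypothetical helper, and the result scales directly with whatever context size you plug in.

```python
def monthly_heartbeat_cost(
    heartbeats_per_day: int,
    context_tokens: int,
    price_per_million: float,
    days: int = 30,
) -> float:
    """Input-token cost in USD of heartbeats over `days` days."""
    tokens_per_month = heartbeats_per_day * context_tokens * days
    return tokens_per_month * price_per_million / 1_000_000


# Prices per million input tokens as quoted in this post.
sonnet = monthly_heartbeat_cost(48, 4_000, 3.00)
haiku = monthly_heartbeat_cost(48, 4_000, 1.00)
print(f"Sonnet: ${sonnet:.2f}/month, Haiku: ${haiku:.2f}/month, saving: ${sonnet - haiku:.2f}")
```

Because heartbeats also carry your system prompt, trimming the context they send lowers this number on either model.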

Fix 4: Trim your SOUL.md and skill descriptions

What it does: Your system prompt (SOUL.md) and active skill descriptions are included in every request. A 3,000-token SOUL.md means 3,000 tokens of overhead on every single message, including heartbeats.

The fix: Keep your SOUL.md under 1,000 tokens (roughly 750 words). Remove verbose instructions that the model doesn't need repeated every message. Move reference information to the memory system (searchable, loaded on demand) instead of the system prompt (loaded every time).

Skill descriptions add up fast. Each installed skill adds its description to every request. Five skills with 500-token descriptions each add 2,500 tokens of overhead to every message. Uninstall skills you're not actively using.

The total overhead formula: System prompt + skill descriptions + memory injection = tokens sent before your actual message. Keep this under 3,000 tokens total. The rest of the context window is for your conversation.
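The overhead formula can be checked in a few lines. `RequestOverhead` is a hypothetical helper, and the 300-token memory injection is an assumed figure for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class RequestOverhead:
    soul_tokens: int                                      # system prompt (SOUL.md)
    skill_tokens: list[int] = field(default_factory=list)  # one entry per installed skill
    memory_tokens: int = 0                                # memory injected into the request

    def total(self) -> int:
        return self.soul_tokens + sum(self.skill_tokens) + self.memory_tokens

    def within_budget(self, budget: int = 3_000) -> bool:
        return self.total() <= budget


bloated = RequestOverhead(soul_tokens=3_000, skill_tokens=[500] * 5, memory_tokens=300)
lean = RequestOverhead(soul_tokens=1_000, skill_tokens=[500] * 3, memory_tokens=300)
print("bloated:", bloated.total(), bloated.within_budget())
print("lean:   ", lean.total(), lean.within_budget())
```

In this example, trimming SOUL.md to 1,000 tokens and dropping two unused skills is what brings the total under the 3,000-token target.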

If managing maxContextTokens, heartbeat routing, SOUL.md size, and skill overhead sounds like more optimization than you want to handle manually, BetterClaw's smart context management does all four automatically. The platform manages what goes into each request so you don't hit context limits or pay for bloated contexts. Free tier with 1 agent and BYOK. Pro is $19/month per agent, up to 25 agents. The context window error doesn't happen because the context is managed by the platform, not by your config files.

The cost math that makes this urgent

Here's why this error matters beyond the crash itself.

Default OpenClaw (no optimization): A 30-message conversation on Claude Sonnet costs approximately $1.20-1.80 in API fees. The first 10 messages cost $0.15. The last 10 messages cost $0.70. The context grows linearly, so the cost of each message grows linearly with it, and the running total for the conversation grows roughly quadratically.

Optimized OpenClaw (maxContextTokens at 8,000, heartbeats on Haiku): The same 30-message conversation costs approximately $0.25-0.35. Every message after the context cap costs the same as every other message because the context size is constant.
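The difference between the two modes can be sketched as a sum over requests. The overhead and per-exchange sizes are illustrative assumptions and only input tokens are counted, so treat the output as a way to see the shape of the two curves rather than a reproduction of the estimates above.

```python
from typing import Optional

PRICE_PER_MILLION_INPUT = 3.00  # USD, Claude Sonnet input price quoted in this post


def request_tokens(
    n: int, overhead: int = 2_000, per_exchange: int = 800, cap: Optional[int] = None
) -> int:
    """Input tokens for request n; `cap` plays the role of maxContextTokens."""
    tokens = overhead + n * per_exchange  # assumed sizes, not measured values
    return tokens if cap is None else min(tokens, cap)


def conversation_cost(messages: int, cap: Optional[int] = None) -> float:
    """Total input cost in USD across a whole conversation."""
    total_tokens = sum(request_tokens(n, cap=cap) for n in range(1, messages + 1))
    return total_tokens * PRICE_PER_MILLION_INPUT / 1_000_000


print(f"uncapped, 30 messages:     ${conversation_cost(30):.2f}")
print(f"capped at 8,000 tokens:    ${conversation_cost(30, cap=8_000):.2f}")
```

Without a cap, every extra message makes all later requests larger; with a cap, each request stops growing once the limit is reached, so the total grows linearly with message count.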

Monthly difference (moderate usage, 50 messages/day): Default: $40-87/month. Optimized: $10-20/month. The viral "$178 in one week" Medium post was the direct result of running OpenClaw with default settings. The user wasn't doing anything unusual. The defaults were doing it for them.

Our best practices post covers the complete optimization playbook.

The pattern behind every OpenClaw cost problem

Here's the honest take.

The context window error and the cost spiral are the same problem. Both are caused by sending too much data with every request. The error is the hard crash (context exceeds model limit). The cost spiral is the soft crash (context doesn't exceed the limit but costs more than it should).

The fix for both is the same: cap the context size, route housekeeping to cheap models, keep the system prompt lean, and reset conversations when tasks are complete. These four settings take 5 minutes to configure and save 60-80% on monthly API costs.

If you want those four settings managed automatically, give BetterClaw a try. Free tier with 1 agent and BYOK. Pro is $19/month per agent, up to 25 agents. Smart context management prevents both the error and the cost spiral. 60-second deploy. The context window just works because the platform manages it. You manage the conversations.

Frequently Asked Questions

What causes the OpenClaw "context window reached" error?

OpenClaw sends the entire conversation history with every request. As the conversation grows, the total input (system prompt + conversation + memory + skill descriptions) eventually exceeds the model's maximum context window (200K for Claude, 128K for GPT-5, 64K for DeepSeek). The fix: set maxContextTokens to 6,000-8,000 to cap context size per request.

How do I reduce OpenClaw context window usage?

Four fixes in order of impact: set maxContextTokens to 6,000-8,000 (caps context per request), use /new every 20-25 messages (resets conversation buffer), route heartbeats to Haiku or Gemini Flash (stops 48 daily heartbeats from burning premium tokens), and keep SOUL.md under 1,000 tokens (reduces per-request overhead).

Does the context window error cause data loss?

No. The error stops the current request but doesn't delete conversation history or memory files. Your MEMORY.md, persistent facts, and conversation logs are preserved. Use /new to reset the conversation buffer and continue. The agent retains its persistent memory across resets.

How much does the context window problem cost per month?

With default settings and moderate usage (50 messages/day on Claude Sonnet): $40-87/month. With maxContextTokens at 8,000, heartbeats on Haiku, and SOUL.md under 1,000 tokens: $10-20/month. The difference is configuration, not usage. The viral "$178 in one week" Medium post was caused by default context settings, not heavy usage.

Does BetterClaw have the context window error?

No. BetterClaw's smart context management automatically controls what's included in each request. The platform manages context size, routes housekeeping to efficient models, and keeps per-request overhead low. The context window error doesn't occur because the conditions that cause it are handled at the platform level. Free tier with 1 agent and BYOK. Pro is $19/month per agent, up to 25 agents.