Your AGENTS.md, SOUL.md, and MEMORY.md are injected into every API call. At 10,000 tokens combined, that's 10K tokens of overhead before you've even said hello. Here's how to cut it by 60%.

One user reported their OpenClaw session context hitting 56-58% of a 400K-token window. That's roughly 230,000 tokens being resent with every single message. Not because the conversation was long. Because the workspace files were bloated.

The conversation was 20 messages. The overhead was 230,000 tokens.

Here's what nobody tells you about OpenClaw's architecture: your AGENTS.md, SOUL.md, MEMORY.md, tool schemas, skill descriptions, and runtime metadata are rebuilt and injected into the system prompt on every API call. Not once per session. Every message. Every heartbeat. Every tool call response.

The base system prompt alone contributes approximately 15,000 tokens before you've typed anything. That's 23 tool definitions with their schemas, your workspace files, skill descriptions, self-update instructions, time and runtime metadata, and safety headers.

At Opus 4.7 pricing ($5 per million input tokens), 15,000 tokens of overhead on every message costs about $0.075 per message. Send 50 messages a day? That's $3.75/day in pure overhead, or roughly $112/month over 30 days, on tokens that have nothing to do with your actual conversations.
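If you want to plug in your own numbers, the arithmetic above can be sketched in a few lines of Python. The price, token count, and message volume are the figures quoted in this article; the function name and the 30-day month are assumptions made for illustration.

```python
def overhead_cost(tokens_per_call: int, price_per_million: float, calls: int = 1) -> float:
    """Dollar cost of re-sending `tokens_per_call` input tokens on each of `calls` API calls."""
    return tokens_per_call * calls * price_per_million / 1_000_000

PRICE_PER_MILLION = 5.00       # input price quoted above, in dollars
SYSTEM_PROMPT_TOKENS = 15_000  # base system prompt size quoted above
MESSAGES_PER_DAY = 50

per_message = overhead_cost(SYSTEM_PROMPT_TOKENS, PRICE_PER_MILLION)
per_day = overhead_cost(SYSTEM_PROMPT_TOKENS, PRICE_PER_MILLION, MESSAGES_PER_DAY)
per_month = per_day * 30       # assumes a 30-day month

print(f"per message: ${per_message:.4f}")
print(f"per day:     ${per_day:.2f}")
print(f"per month:   ${per_month:.2f}")
```

Swap in your own model price and daily message count to see where you stand before you start trimming.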

Here's how to cut that by 60% in about 10 minutes.

The Three Files That Drain Your Tokens (and What to Do About Each)

OpenClaw injects several workspace files into every API call. These are the bootstrap files, capped by agents.defaults.bootstrapMaxChars (default: 12,000 characters per file) and agents.defaults.bootstrapTotalMaxChars (default: 60,000 characters total). Note that both caps count characters, not tokens.

AGENTS.md is usually the biggest offender. Community examples range from 200 lines (lean) to 420+ lines (bloated). A 420-line AGENTS.md can easily consume 5,000-8,000 tokens. Every. Single. Message.

The fix: Cut your AGENTS.md to under 100 lines. Remove verbose explanations. Remove examples the model doesn't need. Remove redundant instructions. The model is smart enough to infer intent from concise instructions. If your AGENTS.md tells the model how to format responses in 50 lines, replace it with 5.

SOUL.md defines your agent's personality and behavior. Most users write this as a creative essay. It should be a checklist.

The fix: Keep SOUL.md under 500 tokens. "You are a helpful assistant. Be concise. Use casual tone. Don't apologize unnecessarily." is about 20 tokens. A 3-paragraph personality essay is 500 tokens. The model performs identically on both.

MEMORY.md grows over time as you add facts about yourself. After months of use, it can reach 5,000+ tokens.

The fix: OpenClaw's official guidance is to keep MEMORY.md under 3,000 tokens. Review it monthly. Remove outdated facts. Consolidate redundant entries. Use the daily memory files (memory/*.md) for time-specific context that doesn't need to be in every prompt.

The optimization rule: AGENTS.md + SOUL.md + MEMORY.md combined should stay under 5,000 tokens. The default cap is 60,000 characters total (bootstrapTotalMaxChars), which is a ceiling, not a target. Most users are burning 10,000-15,000 tokens per message on these files alone. Cutting to 5,000 saves 5,000-10,000 tokens per message, per heartbeat, per tool call.
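A quick way to check where you stand is to estimate the token count of the three files. The sketch below is a rough audit, not an official OpenClaw tool: it assumes about 4 characters per token (a common heuristic for English text, not a real tokenizer) and assumes you run it from the directory that holds your workspace files.

```python
from pathlib import Path

CHARS_PER_TOKEN = 4       # rough heuristic (assumption), not a real tokenizer
COMBINED_BUDGET = 5_000   # combined target from the optimization rule

def estimate_tokens(text: str) -> int:
    """Approximate token count from character length."""
    return len(text) // CHARS_PER_TOKEN

def audit(files: dict[str, str], budget: int = COMBINED_BUDGET) -> tuple[dict[str, int], int, bool]:
    """Return per-file estimates, the combined total, and whether it fits the budget."""
    per_file = {name: estimate_tokens(body) for name, body in files.items()}
    total = sum(per_file.values())
    return per_file, total, total <= budget

if __name__ == "__main__":
    workspace = Path(".")  # assumption: run from the workspace directory
    names = ["AGENTS.md", "SOUL.md", "MEMORY.md"]
    contents = {n: (workspace / n).read_text(encoding="utf-8")
                for n in names if (workspace / n).exists()}
    per_file, total, ok = audit(contents)
    for name, tokens in per_file.items():
        print(f"{name}: ~{tokens} tokens")
    verdict = "within" if ok else "over"
    print(f"combined: ~{total} tokens ({verdict} the {COMBINED_BUDGET}-token target)")
```

Treat the result as a ballpark. If the estimate lands anywhere near the target, trim further rather than trusting the heuristic.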

For the complete guide to reducing OpenClaw costs, our cost optimization stack covers the other five token drains beyond workspace files.

The Tool Call Multiplication Problem (the Part Nobody Talks About)

But that's not even the real problem.

Here's what the workspace file optimization guides miss: tool calls multiply the overhead. When your agent calls a tool (file_read, run_bash, web_search), the tool result is added to the context. Then the model is called again to process the result. That second call includes the full system prompt again.

A single user message that triggers 5 tool calls means 6 API calls (original + 5 tool responses). Each one includes your full 15,000-token system prompt. That's 90,000 tokens of system prompt overhead for one user message.
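The multiplication is easy to model. The sketch below counts input tokens for a single user message, assuming each tool call adds one more API call that re-sends the system prompt plus every tool result so far. The function name and the 1,000-token tool result are hypothetical; the model ignores the user message and assistant replies to keep the focus on overhead.

```python
def input_tokens_for_turn(tool_calls: int,
                          system_prompt_tokens: int = 15_000,
                          tool_result_tokens: int = 0) -> int:
    """Total input tokens for one user message that triggers `tool_calls` tools.

    Call 0 is the original request; each later call re-sends the system
    prompt plus every tool result produced so far.
    """
    total = 0
    for call_index in range(tool_calls + 1):
        total += system_prompt_tokens + call_index * tool_result_tokens
    return total

# System prompt overhead only: 6 calls x 15,000 tokens.
print(input_tokens_for_turn(5))

# Same turn with hypothetical 1,000-token tool results piling up in context.
print(input_tokens_for_turn(5, tool_result_tokens=1_000))
```

The second number grows faster than the first because tool results accumulate, which is why trimming the system prompt and capping context both matter.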

Example from real OpenClaw usage: "Help me organize today's emails and create to-do items." This triggers: email skill to fetch list, analyze each email, determine priority, call Todoist skill to create tasks, generate summary. Five to ten API calls, each carrying the full context.

The fix: Two things. First, trim the workspace files (previous section). Second, set contextTokens to cap how much history accumulates between compactions:

agents.defaults.contextTokens: 50000

This limits the total context window to 50K tokens instead of letting it grow to the model's maximum (200K-1M). Smaller context = faster responses + lower cost per message. The trade-off: the agent forgets older conversation history sooner.

The Heartbeat Overhead (the Silent Drain Nobody Checks)

OpenClaw's heartbeat system fires every 55-60 minutes, 24/7. Each heartbeat is a full API call with the complete system prompt. That's 15,000 tokens minimum per heartbeat, even if the heartbeat does nothing.

The math: 24 heartbeats/day × 15,000 tokens = 360,000 tokens/day in heartbeat overhead alone. At Opus 4.7 pricing: $1.80/day just to keep the agent alive. That's $54/month before you've asked a single question.

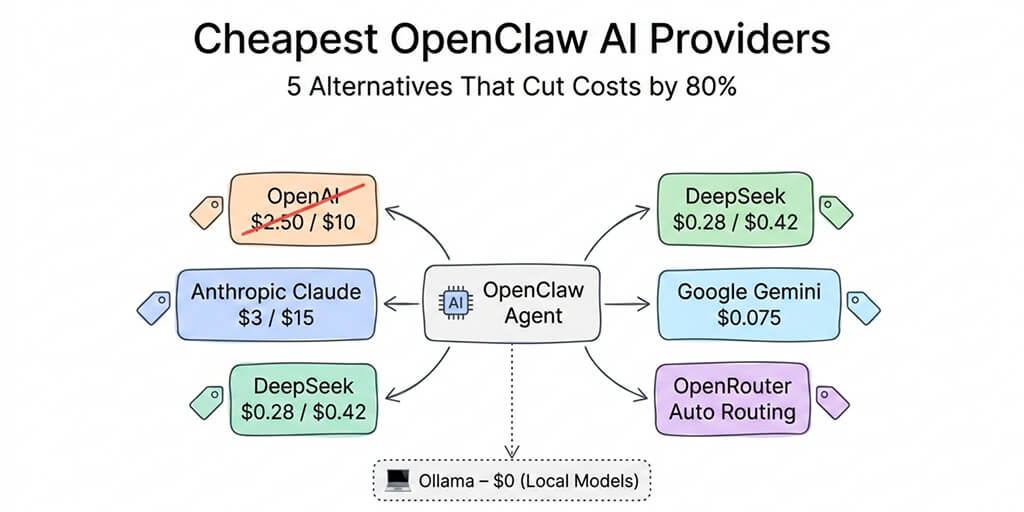

The fix: Route heartbeats to your cheapest model. The heartbeat doesn't need Opus-level reasoning to check if an email arrived. DeepSeek V4 Flash at $0.14/M tokens handles heartbeats perfectly. That drops the heartbeat cost from $54/month to $1.50/month. For the cheapest model options, our provider guide covers routing by task type.
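Here is the routing idea and the heartbeat math as a minimal sketch. This is not OpenClaw's routing code: the `route` function and the dictionary keys are illustrative labels (not real API model IDs), and the prices are the per-million input figures quoted in this article.

```python
# Input prices in dollars per million tokens, as quoted in this article.
# The keys are illustrative labels, not real API model IDs.
PRICES = {"opus": 5.00, "deepseek-v4-flash": 0.14}

HEARTBEATS_PER_DAY = 24
TOKENS_PER_HEARTBEAT = 15_000

def route(task_type: str, default_model: str = "opus") -> str:
    """Send heartbeats to the cheapest model; everything else to the default."""
    if task_type == "heartbeat":
        return min(PRICES, key=PRICES.get)
    return default_model

def monthly_heartbeat_cost(model: str, days: int = 30) -> float:
    """Monthly dollar cost of heartbeat overhead on the given model."""
    tokens_per_day = HEARTBEATS_PER_DAY * TOKENS_PER_HEARTBEAT
    return tokens_per_day * PRICES[model] / 1_000_000 * days

for model in ("opus", route("heartbeat")):
    print(f"{model}: ${monthly_heartbeat_cost(model):.2f}/month")
```

Add your own providers to the price table and the cheapest one is picked for heartbeats automatically.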

The Prompt Caching Trick (the 90% Discount Most Users Miss)

Here's what nobody tells you about Anthropic's prompt caching.

If your system prompt stays identical between API calls, Anthropic caches it and charges only 10% on subsequent calls. That 15,000-token system prompt costs $0.075 on the first call and $0.0075 on every cached call. A 90% discount on the overhead.
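To see what that discount does over a day, here is a sketch of the simplified model described above: the first call pays full price and every later call pays 10%. It assumes every call lands while the cache is still warm and ignores any surcharge a provider may apply when the cache is first written, so treat the result as an upper bound on savings.

```python
def overhead_with_cache(calls: int,
                        tokens: int = 15_000,
                        price_per_million: float = 5.00,
                        cached_fraction: float = 0.10) -> float:
    """Dollar cost of system prompt overhead when calls after the first hit the cache."""
    if calls <= 0:
        return 0.0
    full_price = tokens * price_per_million / 1_000_000
    return full_price + (calls - 1) * full_price * cached_fraction

def overhead_without_cache(calls: int,
                           tokens: int = 15_000,
                           price_per_million: float = 5.00) -> float:
    """Dollar cost when every call pays full price."""
    return max(calls, 0) * tokens * price_per_million / 1_000_000

cached = overhead_with_cache(50)
uncached = overhead_without_cache(50)
print(f"50 calls, uncached: ${uncached:.4f}")
print(f"50 calls, cached:   ${cached:.4f}")
print(f"saved: {100 * (1 - cached / uncached):.1f}%")
```

The saving approaches 90% as the number of cached calls grows, because the one full-price call matters less and less.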

But here's the catch: If you include dynamic content in your workspace files (timestamps, dates, changing metadata), the cache is invalidated on every call. Every call pays full price.

The fix: Keep workspace files 100% static. Don't include "Current Date: May 8, 2026" in your AGENTS.md. Let the model infer the date from conversation context, or inject it in the user message (not the system prompt). One user reported that removing a dynamic timestamp from their workspace file dropped their effective input cost by 80%.
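The difference between the two approaches can be illustrated with a fingerprint of the system prompt. This is only a stand-in for the idea of an identical prefix: the actual cache matching happens on the provider's side, and the helper names and sample prompt text below are hypothetical.

```python
import hashlib
from datetime import date

AGENTS_MD = "Be concise. Use a casual tone."  # stand-in for a static workspace file

def prefix_fingerprint(system_prompt: str) -> str:
    """Short hash of the system prompt; it changes whenever the prompt text changes."""
    return hashlib.sha256(system_prompt.encode("utf-8")).hexdigest()[:12]

def build_dynamic(today: date) -> tuple[str, str]:
    """Anti-pattern: the date lives in the system prompt, so the prefix changes daily."""
    system = f"{AGENTS_MD}\nCurrent Date: {today.isoformat()}"
    return system, "What's on my calendar?"

def build_static(today: date) -> tuple[str, str]:
    """Preferred: the system prompt stays fixed and the date rides in the user message."""
    user = f"[date: {today.isoformat()}] What's on my calendar?"
    return AGENTS_MD, user

day_one, day_two = date(2026, 5, 8), date(2026, 5, 9)
for label, build in (("dynamic", build_dynamic), ("static", build_static)):
    first = prefix_fingerprint(build(day_one)[0])
    second = prefix_fingerprint(build(day_two)[0])
    print(f"{label}: prefix unchanged across days = {first == second}")
```

The same logic applies to any changing value, not just dates: if it has to reach the model, put it in the message, not in the files that form the system prompt.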

For heartbeat caching: Set the heartbeat interval to just under your model's cache TTL. If the cache TTL is 60 minutes, set heartbeat to 55 minutes. The heartbeat keeps the cache warm, and every subsequent message benefits from cached pricing. The heartbeat pays for itself.

If managing workspace file sizes, context limits, heartbeat routing, prompt caching TTLs, and tool call multiplication sounds like more optimization work than you signed up for, BetterClaw's smart context management handles all of this at the platform level. We don't inject bloated workspace files on every call. We don't send full context with heartbeats. We optimize the token flow so you pay for conversations, not housekeeping. Free tier with 1 agent and BYOK. $19/month per agent for Pro.

The Real Savings (Before and After)

Here's a real scenario from the community. Before optimization:

AGENTS.md: 420 lines (8,000 tokens). SOUL.md: personality essay (3,000 tokens). MEMORY.md: 6 months of facts (4,000 tokens). Dynamic timestamp in workspace. Heartbeats on Opus. No context limit. Monthly cost: $166.

After applying the four fixes:

AGENTS.md: 80 lines (2,000 tokens). SOUL.md: checklist format (500 tokens). MEMORY.md: pruned to current facts (2,500 tokens). Static workspace files (caching active). Heartbeats on DeepSeek V4 Flash. contextTokens: 50000. Monthly cost: $27. Saved: $139/month.

One tech blogger reported dropping from $87/month to $27/month by applying just the top three optimizations (model routing, workspace trimming, and heartbeat routing).

The optimization that saves the most is the one you should do first: trim your AGENTS.md. Ten minutes of editing. Measurable savings on every message, every heartbeat, every tool call, from that point forward.

If you want the optimized context management without the manual work, give BetterClaw a try. Free tier. $19/month Pro. 28+ model providers. Smart context management that keeps tokens low on every call. The bootstrap overhead is our problem. The conversations are yours.

Frequently Asked Questions

What is OpenClaw AGENTS.md and why does it cost tokens?

AGENTS.md is one of several workspace files that OpenClaw injects into the system prompt on every API call. Along with SOUL.md, MEMORY.md, tool schemas, and skill descriptions, it forms the "bootstrap" context that the model reads before processing your message. The base system prompt contributes approximately 15,000 tokens per call. This is re-sent with every message, heartbeat, and tool call response.

How many tokens does the OpenClaw system prompt use?

Approximately 15,000 tokens before you've typed anything. This includes 23 tool definitions with schemas (~5,000 tokens), workspace files (AGENTS.md, SOUL.md, MEMORY.md, typically 5,000-15,000 tokens combined), skill descriptions, runtime metadata, and safety headers. The default bootstrap cap is 60,000 total characters, but most users hit 10,000-15,000 tokens.

How do I reduce my OpenClaw AGENTS.md token count?

Cut it to under 100 lines and 2,000 tokens. Remove verbose explanations (the model infers intent from concise instructions). Remove examples it doesn't need. Remove redundant formatting instructions. Keep SOUL.md under 500 tokens (checklist, not essay). Keep MEMORY.md under 3,000 tokens (prune monthly). Combined target: under 5,000 tokens for all three.

How much does OpenClaw context bloat actually cost?

At Opus 4.7 pricing ($5/M input tokens): 15,000 tokens of overhead per message costs $0.075. At 50 messages/day plus 24 heartbeats: approximately $5.55/day or $166/month in pure overhead. After optimization (5,000 tokens, heartbeats on V4 Flash, caching active): approximately $0.90/day or $27/month. Savings: $139/month.

Does BetterClaw have the same AGENTS.md token drain?

No. BetterClaw's smart context management doesn't inject bloated workspace files on every API call. The platform manages context at the infrastructure level, keeping token overhead minimal regardless of how much configuration or memory your agent has. This is one of the key technical differences between self-hosted OpenClaw and BetterClaw as a managed platform.