Your OpenClaw agent doesn't need GPT-4o for everything. Here are the providers that cost a fraction of the price and work just as well.

My OpenAI dashboard showed $147. Fourteen days. One agent.

I'd set up my OpenClaw instance on a Friday, pointed it at GPT-4o because that's what every tutorial recommended, and let it run. Morning briefings. Email triage. Calendar management. A few research tasks. Nothing exotic.

Two weeks later, $147. For an AI assistant that mostly checked my calendar and summarized emails.

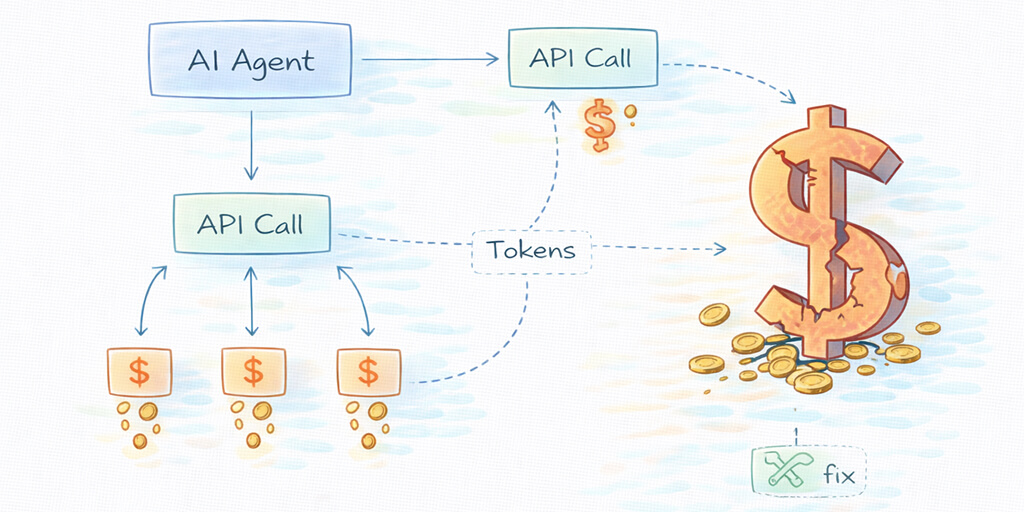

I pulled up the token logs and did the math. GPT-4o at $2.50 per million input tokens and $10 per million output tokens sounds reasonable in isolation. But OpenClaw agents are hungry. Heartbeats every 30 minutes. Sub-agents spawning for parallel tasks. Context windows that grow silently as cron jobs accumulate history.

The tokens add up. Fast.
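To make that concrete, here's a minimal sketch of the arithmetic for heartbeats alone. The GPT-4o prices are the ones quoted above; the per-heartbeat token counts (4,000 in, 200 out) are illustrative assumptions, not numbers pulled from my logs.

```python
# Rough monthly cost of heartbeats alone at GPT-4o list prices.
# Token counts per heartbeat are assumed for illustration only.

GPT4O_INPUT_PER_M = 2.50    # USD per million input tokens
GPT4O_OUTPUT_PER_M = 10.00  # USD per million output tokens


def call_cost(input_tokens: int, output_tokens: int,
              input_per_m: float, output_per_m: float) -> float:
    """Return the USD cost of a single model call."""
    return (input_tokens * input_per_m + output_tokens * output_per_m) / 1_000_000


def monthly_heartbeat_cost(interval_minutes: int = 30,
                           input_tokens: int = 4_000,
                           output_tokens: int = 200,
                           days: int = 30) -> float:
    """Cost of heartbeats fired every `interval_minutes` for `days` days."""
    heartbeats = (24 * 60 // interval_minutes) * days
    per_call = call_cost(input_tokens, output_tokens,
                         GPT4O_INPUT_PER_M, GPT4O_OUTPUT_PER_M)
    return heartbeats * per_call


print(f"${monthly_heartbeat_cost():.2f} per month")
```

Under those assumptions, heartbeats alone come to roughly $17 a month before the agent does any real work. Swap in your own token counts from the logs to see where your number lands.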

Here's the thing: the cheapest OpenClaw AI provider isn't always the worst one. In 2026, there are models that cost 90% less than GPT-4o and perform just as well for the kind of work most agents actually do. Some of them are better at tool calling. Some have larger context windows. One of them is literally free.

This is the guide I wish I'd read before handing OpenAI $147 for two weeks of calendar checks.

Why OpenAI is the default (and why that's costing you)

OpenAI is the default recommendation in most OpenClaw tutorials for a simple reason: familiarity. Everyone has an OpenAI account. The API is well-documented. GPT-4o is genuinely good.

But "good" and "cost-effective for an always-on agent" are very different things.

OpenClaw agents don't work like a ChatGPT conversation. They run continuously. They process heartbeats (periodic status checks) every 30 minutes using your primary model. They spawn sub-agents for parallel work. They execute skills that require multiple model calls per task.

A single browser automation task can consume 50-200+ steps, with each step using 500-2,000 tokens. At GPT-4o pricing, that's $0.50-2.00 per complex task. Run a few of those daily and your monthly bill climbs past $100 easily.

The viral Medium post "I Spent $178 on AI Agents in a Week" captured this pain perfectly. Most of that spend was GPT-4o running tasks that didn't need GPT-4o.

For a deeper look at where OpenClaw API costs actually come from (and how they compound faster than you'd expect), we wrote a complete breakdown of OpenClaw API costs with real monthly projections.

1. Anthropic Claude: The agent-first provider

Pricing: Haiku 4.5: $1/$5 | Sonnet 4.6: $3/$15 | Opus 4.6: $5/$25 (per million tokens, input/output)

Claude isn't cheaper than GPT-4o across the board. Sonnet at $3/$15 is actually more expensive per output token. But here's why it's on this list: Claude is better at the specific things OpenClaw agents need to do.

Tool calling reliability. Long-context accuracy. Prompt injection resistance. Multi-step instruction following. These are the areas where OpenClaw community benchmarks consistently rank Claude above GPT-4o.

The real savings come from Haiku 4.5 at $1/$5. That's 60% cheaper than GPT-4o on input and 50% cheaper on output. And for heartbeats, calendar lookups, simple queries, and sub-agent tasks, Haiku handles them beautifully.

The smart setup: Sonnet as your primary model, Haiku for heartbeats and sub-agents, Opus available via /model opus for complex reasoning when you need it. This tiered approach typically costs $40-70/month compared to $100-200 with GPT-4o for everything.
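Here's a rough sketch of what that tiering means for your average price. The task names, the `pick_model` helper, and the 70/25/5 token split are all hypothetical, chosen only to illustrate the idea; they are not OpenClaw configuration keys. The prices are the Claude input prices listed above.

```python
# Prices in USD per million input tokens, from the pricing line above.
INPUT_PRICE = {"haiku": 1.00, "sonnet": 3.00, "opus": 5.00}

# Hypothetical mapping of task types to model tiers (illustrative only).
TIER_BY_TASK = {
    "heartbeat": "haiku",
    "sub_agent": "haiku",
    "chat": "sonnet",
    "complex_reasoning": "opus",
}


def pick_model(task_type: str, default: str = "sonnet") -> str:
    """Return the tier for a task, falling back to the primary model."""
    return TIER_BY_TASK.get(task_type, default)


def blended_input_price(token_share: dict[str, float]) -> float:
    """Average input price when each tier handles the given share of tokens."""
    if abs(sum(token_share.values()) - 1.0) > 1e-9:
        raise ValueError("token shares must sum to 1")
    return sum(INPUT_PRICE[model] * share for model, share in token_share.items())


share = {"haiku": 0.70, "sonnet": 0.25, "opus": 0.05}  # assumed split
print(pick_model("heartbeat"))
print(f"${blended_input_price(share):.2f} per million input tokens")
```

With that assumed split, the blended input price works out to $1.70 per million tokens, against $2.50 when GPT-4o handles everything.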

Claude isn't the cheapest option. It's the option where you get the most capability per dollar on agent-specific tasks.

OpenClaw's founder, Peter Steinberger, recommended Anthropic models before joining OpenAI. That recommendation still holds for most serious agent workloads.

2. DeepSeek: The $0.28 option that actually works

Pricing: DeepSeek V3.2: $0.28/$0.42 per million tokens (input/output)

This is where the cost math gets wild.

DeepSeek V3.2 costs roughly 9x less than GPT-4o on input tokens and 24x less on output tokens. For an always-on OpenClaw agent, that difference compounds dramatically. A workload that costs $150/month on GPT-4o drops to approximately $15-20/month on DeepSeek.
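If you want to check the multipliers yourself, the arithmetic is short. This is a sketch using the prices quoted in this article; the assumption that 10% of the bill is output tokens is mine (agent workloads tend to be input-heavy), so adjust it to match your own logs.

```python
# Prices in USD per million tokens (input, output), as quoted in this article.
PRICES = {
    "gpt-4o": (2.50, 10.00),
    "deepseek-v3.2": (0.28, 0.42),
}


def price_ratio(baseline: str, candidate: str) -> tuple[float, float]:
    """How many times cheaper `candidate` is than `baseline` (input, output)."""
    base_in, base_out = PRICES[baseline]
    cand_in, cand_out = PRICES[candidate]
    return base_in / cand_in, base_out / cand_out


def repriced_bill(bill_usd: float, output_share: float,
                  baseline: str, candidate: str) -> float:
    """Re-price a bill, assuming `output_share` of the spend was output tokens."""
    in_ratio, out_ratio = price_ratio(baseline, candidate)
    input_part = bill_usd * (1 - output_share) / in_ratio
    output_part = bill_usd * output_share / out_ratio
    return input_part + output_part


in_ratio, out_ratio = price_ratio("gpt-4o", "deepseek-v3.2")
print(f"{in_ratio:.1f}x cheaper on input, {out_ratio:.1f}x cheaper on output")
print(f"${repriced_bill(150, 0.10, 'gpt-4o', 'deepseek-v3.2'):.2f} per month")
```

With a 10% output share, the $150 bill re-prices to $15.75. The more of your spend that goes to output tokens, the bigger the saving.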

And it's not a toy model. Community reports from the OpenClaw GitHub discussions consistently mention DeepSeek alongside Claude as the two providers that work best for agent tasks. It's particularly strong at code generation and debugging.

The tradeoffs are real though. DeepSeek's tool calling is less reliable than Claude's on complex multi-step chains. Context tracking over very long conversations can degrade. And if you're processing sensitive data, DeepSeek's API routes your data through Chinese infrastructure, which matters for some use cases.

For pure cost optimization on non-sensitive tasks, DeepSeek is hard to beat. Set it as your heartbeat and sub-agent model while keeping a more capable model as your primary, and your bill drops by 70-80%.

3. Google Gemini: Free tier that's surprisingly capable

Pricing: Gemini 2.5 Flash free tier: $0 (1,500 requests/day) | Paid: $0.075/$0.30 per million tokens

Yes, free. Google AI Studio offers a free tier for Gemini 2.5 Flash with 1,500 requests per day and a 1 million token context window. No credit card required.

For personal OpenClaw use (morning briefings, calendar management, basic research), the free tier is often enough. 1,500 requests per day is surprisingly generous for a single-user agent.
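A quick way to check whether your own setup fits is to count model requests per day. The schedule below is a made-up example (the task list and requests-per-run figures are assumptions), measured against the 1,500 requests/day limit quoted above.

```python
FREE_TIER_REQUESTS_PER_DAY = 1_500  # free-tier limit quoted above

# Assumed daily schedule: (label, model requests per run, runs per day).
SCHEDULE = [
    ("heartbeat every 30 min", 1, 48),
    ("email triage every 15 min", 3, 96),
    ("morning briefing", 10, 1),
    ("ad hoc research", 40, 3),
]


def daily_requests(schedule) -> int:
    """Total model requests per day across all scheduled tasks."""
    return sum(per_run * runs for _, per_run, runs in schedule)


used = daily_requests(SCHEDULE)
headroom = FREE_TIER_REQUESTS_PER_DAY - used
print(f"{used} requests/day, {headroom} left on the free tier")
```

This example schedule uses 466 requests a day, which leaves plenty of headroom. The number that eats the budget fastest is usually a high-frequency cron job, so start there if you're running close to the limit.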

Even the paid tier at $0.075 per million input tokens is absurdly cheap. That's 33x cheaper than GPT-4o. A moderate usage pattern that costs $100/month on OpenAI costs roughly $3 on Gemini Flash.

The limitation: Gemini's tool calling isn't as reliable as Claude or even GPT-4o for complex chains. It handles straightforward tasks well but can stumble on multi-step reasoning that requires precise instruction following.

Best used for: heartbeats, simple lookups, data parsing, and as a fallback model. Not recommended as your sole primary model for complex agent workflows.

To understand which tasks need a powerful model versus which tasks can run on something cheap, our guide to how OpenClaw works under the hood explains the agent architecture and where model calls actually happen.

4. OpenRouter: One API key, 200+ models, automatic routing

Pricing: Varies by model (typically 0-5% markup over direct provider pricing)

OpenRouter isn't a model provider. It's a routing layer. One API key gives you access to 200+ models across every major provider, and you can switch between them without managing separate API keys for each.

Here's why that matters for OpenClaw.

The /model command lets you switch models mid-conversation. With OpenRouter, you type /model deepseek/deepseek-v3.2 and you're on DeepSeek. /model anthropic/claude-sonnet-4.6 switches to Claude. No config file edits. No gateway restarts.

But the real savings feature is openrouter/auto. Set this as your model and OpenRouter automatically routes each request to the most cost-effective model based on the complexity of the prompt. Simple heartbeats go to cheap models. Complex reasoning gets routed to capable ones. You save money without manually managing model tiers.
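If you'd like to sanity-check your OpenRouter key with the auto router outside of OpenClaw first, something like this sketch works with only the Python standard library. It builds a request against OpenRouter's OpenAI-compatible chat completions endpoint without sending it; the API key and prompt are placeholders, and this is not how OpenClaw itself makes the call.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_auto_request(api_key: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat request routed by openrouter/auto."""
    payload = {
        "model": "openrouter/auto",
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder key: replace with a real one before sending.
request = build_auto_request("sk-or-placeholder", "Summarize today's calendar.")
print(request.get_method(), request.full_url)
print(json.loads(request.data)["model"])
# To actually send it: urllib.request.urlopen(request)
```

Once you send it with a real key, compare which model the response reports for a trivial prompt versus a hard one. That tells you whether auto-routing behaves the way you expect for your kind of workload.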

The tradeoff: a small markup on token prices (typically under 5%), and you're adding a routing layer which occasionally introduces latency. For most users, the convenience of one API key and automatic cost optimization is worth it.

If you don't want to think about model routing at all, and you'd rather have automatic cost optimization with zero configuration plus built-in anomaly detection that pauses your agent before costs spiral, Better Claw handles all of this at $19/month per agent. It's BYOK, deploys in 60 seconds, and you can point it at any of these providers.

5. Ollama (local models): $0 per month, forever

Pricing: $0 API cost. Hardware and electricity only.

Running models locally through Ollama eliminates API costs entirely. Llama 3.3 70B, Mistral, Qwen 2.5: they all run on your own machine (given enough RAM for the model size), fully private, with no token charges.

The math: A Mac Mini M4 with 16GB RAM runs 7-8B models at 15-20 tokens per second. That's fast enough for most agent tasks. Larger models (30B+) need more RAM or a dedicated GPU.
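To translate tokens per second into waiting time, here's a tiny sketch. The 300-token reply length is an assumption, and the calculation ignores prompt processing time, which adds to the total on long contexts.

```python
def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Time to generate a reply, ignoring prompt processing."""
    return output_tokens / tokens_per_second


REPLY_TOKENS = 300  # assumed length of a typical agent reply

for tps in (15, 20):
    seconds = generation_seconds(REPLY_TOKENS, tps)
    print(f"{tps} tok/s -> {seconds:.0f} s for a {REPLY_TOKENS}-token reply")
```

At those speeds a 300-token reply takes 15 to 20 seconds. That's fine for a background heartbeat, and noticeably slow if you're waiting on it in a chat window.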

For OpenClaw specifically, the hermes-2-pro and mistral:7b models are recommended for tool calling reliability. They're not Claude or GPT-4o, but for heartbeats, simple queries, and privacy-sensitive operations, they're genuinely useful.

The honest reality: local models in 2026 still can't match cloud providers on complex multi-step reasoning, long-context accuracy, or sophisticated tool use. The community consensus in OpenClaw's GitHub discussions is clear: local models work for experimentation and privacy-first setups, but cloud models are better for production agent workflows.

The sweet spot is hybrid: local models for heartbeats and simple tasks, cloud models for complex reasoning. OpenClaw supports this natively through its model routing configuration.

The provider nobody talks about: MiniMax

Quick honorable mention. MiniMax offers a $10/month plan with 100 prompts every 5 hours. Peter Steinberger himself recommended it during community discussions. It's not on the level of Opus, but community members describe it as "competent enough for most tasks."

For budget-conscious users who want a flat monthly rate instead of per-token billing, it's worth testing. The predictability alone can be valuable when you're worried about runaway agent costs.

The real problem isn't the provider. It's the architecture.

Here's what I've learned after months of optimizing OpenClaw costs across different providers.

Switching from GPT-4o to DeepSeek saves you money. Setting up model routing (different models for different task types) saves you more. But the biggest cost driver in OpenClaw isn't the per-token price. It's uncontrolled context growth.

Cron jobs accumulate context indefinitely. A task scheduled to check emails every 5 minutes can eventually carry 100,000 tokens of context into every run. What starts at $0.02 per execution can grow to $2.00 per execution, and that kind of 100x growth hits you whichever provider you use.
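Here's a simplified model of that growth. It assumes each run adds a fixed number of tokens to the context (1,000 to start, 350 more per run, both invented numbers) and that a cap simply limits what gets sent; it makes no claim about how OpenClaw actually trims history.

```python
from typing import Optional


def context_tokens(run: int, base_tokens: int, added_per_run: int,
                   max_context_tokens: Optional[int] = None) -> int:
    """Context size sent on a given run (0-indexed), optionally capped."""
    size = base_tokens + run * added_per_run
    if max_context_tokens is not None:
        size = min(size, max_context_tokens)
    return size


def total_input_tokens(runs: int, base_tokens: int, added_per_run: int,
                       max_context_tokens: Optional[int] = None) -> int:
    """Input tokens billed across all runs."""
    return sum(context_tokens(r, base_tokens, added_per_run, max_context_tokens)
               for r in range(runs))


RUNS_PER_DAY = 24 * 60 // 5   # one run every 5 minutes
PRICE_PER_M = 0.28            # DeepSeek V3.2 input price quoted in this article

uncapped = total_input_tokens(RUNS_PER_DAY, 1_000, 350)
capped = total_input_tokens(RUNS_PER_DAY, 1_000, 350, max_context_tokens=8_000)

print(f"uncapped: {uncapped:,} tokens, ${uncapped * PRICE_PER_M / 1e6:.2f}/day")
print(f"capped:   {capped:,} tokens, ${capped * PRICE_PER_M / 1e6:.2f}/day")
```

In this toy model the uncapped job sends about 14.8 million input tokens in a single day, versus about 2.2 million with an 8,000-token cap. That's on the cheapest paid provider in this list. The per-token price scales the bill, but the growth curve is the same everywhere.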

The memory compaction bug in OpenClaw makes this worse. Context compaction can kill active work mid-session, and the workarounds require manual token limits in every skill config.

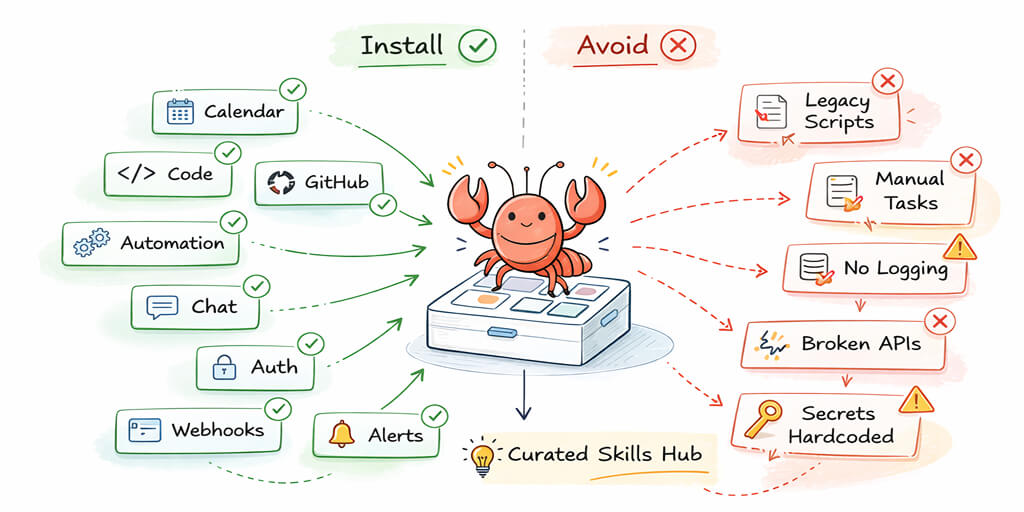

Set maxContextTokens and maxIterations in your skill configurations. Set daily spending caps on OpenRouter or your provider's dashboard. Monitor your token usage weekly. These operational habits matter more than which provider you choose.
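The idea behind those limits is easy to sketch in code. This is a generic guard, not OpenClaw's implementation: the `RunGuard` class, its method names, and the $0.03 step cost are hypothetical. It just shows how an iteration limit and a spending cap stop a loop that would otherwise run forever.

```python
class BudgetExceeded(RuntimeError):
    """Raised when the next step would break a configured limit."""


class RunGuard:
    """Tracks iterations and spend for one agent run (illustrative only)."""

    def __init__(self, max_iterations: int, daily_cap_usd: float) -> None:
        self.max_iterations = max_iterations  # same idea as maxIterations
        self.daily_cap_usd = daily_cap_usd    # same idea as a daily spending cap
        self.iterations = 0
        self.spent_usd = 0.0

    def charge(self, step_cost_usd: float) -> None:
        """Check limits first, then record one model call."""
        if self.iterations >= self.max_iterations:
            raise BudgetExceeded(f"stopped after {self.max_iterations} iterations")
        if self.spent_usd + step_cost_usd > self.daily_cap_usd:
            raise BudgetExceeded(f"daily cap of ${self.daily_cap_usd:.2f} reached")
        self.iterations += 1
        self.spent_usd += step_cost_usd


guard = RunGuard(max_iterations=25, daily_cap_usd=5.00)
try:
    while True:  # stand-in for a runaway agent loop
        guard.charge(step_cost_usd=0.03)
except BudgetExceeded as stop:
    print(stop)

print(f"{guard.iterations} steps, ${guard.spent_usd:.2f} spent")
```

Here the loop is cut off at 25 steps and $0.75 instead of running until someone notices. Checking the limits before recording the step means the guard never lets a call through that would exceed them.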

The cheapest provider in the world can't save you from a runaway agent loop burning tokens at 3 AM.

For a look at what tasks are worth running through a premium model versus which ones can safely run on the cheapest option available, our guide to the best OpenClaw use cases ranks workflows by complexity and cost.

Pick your fighter (a practical recommendation)

For most people reading this, here's what I'd actually recommend:

If you're just starting out: Gemini 2.5 Flash free tier. Zero risk. Learn how OpenClaw works without spending anything. Upgrade to a paid provider when you outgrow the free limits.

If you want the best quality-to-cost ratio: Claude Sonnet 4.6 as primary, Haiku 4.5 for heartbeats and sub-agents. This is what most serious OpenClaw users run. Expect $40-70/month.

If cost is the priority: DeepSeek V3.2 for everything except complex reasoning. Use Claude or GPT-4o on-demand via /model for the hard stuff. Expect $15-30/month.

If you don't want to think about any of this: OpenRouter auto-routing, or Better Claw at $19/month per agent with BYOK and zero-config deployment.

The AI model market is getting cheaper every quarter. Opus 4.5 at $5/$25 is 67% cheaper than Opus 4.1 was at $15/$75. The trend is clear. But until prices hit zero (they won't), smart provider selection and model routing are the most impactful cost levers you have.

Stop paying GPT-4o prices for calendar checks. Your agent will work just as well. Your wallet will thank you.

If you've been wrestling with API costs, config files, and model routing, and you'd rather just deploy an agent that works, give Better Claw a try. It's $19/month per agent, BYOK with any of the providers above, and your first agent deploys in about 60 seconds. We handle the infrastructure, the model routing, and the cost monitoring. You focus on building workflows.

Frequently Asked Questions

What are the cheapest AI providers for OpenClaw agents?

The cheapest cloud providers for OpenClaw in 2026 are DeepSeek V3.2 at $0.28/$0.42 per million tokens and Google Gemini 2.5 Flash at $0.075/$0.30 (with a free tier offering 1,500 requests per day). For zero-cost operation, Ollama lets you run local models like Llama 3.3 and Mistral with no API charges. Claude Haiku 4.5 at $1/$5 offers the best balance of low cost and agent-specific reliability.

How does Claude compare to GPT-4o for OpenClaw?

Claude models (particularly Sonnet and Haiku) consistently outperform GPT-4o on the tasks that matter most for OpenClaw: tool calling reliability, long-context accuracy, and prompt injection resistance. GPT-4o is faster on simple tasks and has broader community support. Claude Sonnet 4.6 at $3/$15 is more expensive per output token than GPT-4o at $2.50/$10, but the improved agent performance often means fewer retries and lower total cost.

How do I switch AI providers in OpenClaw?

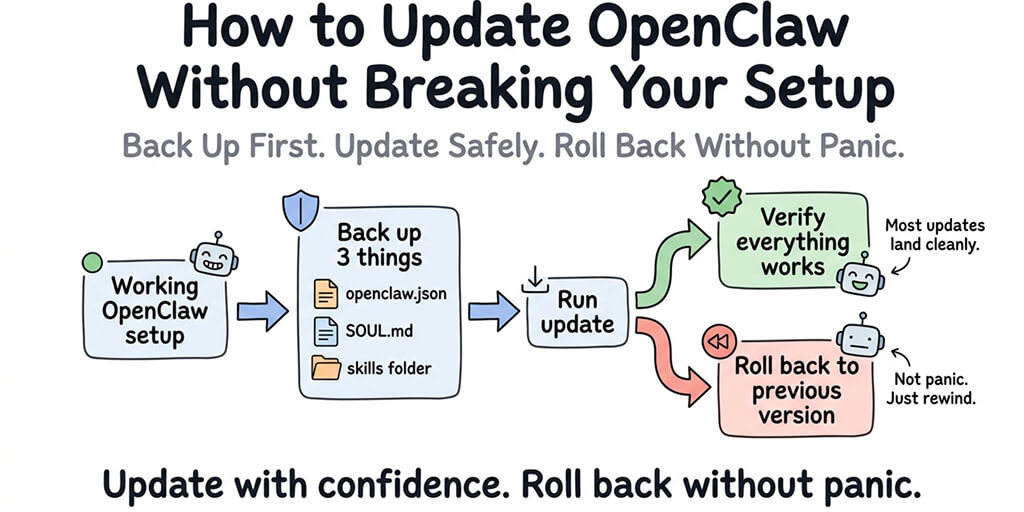

Edit your ~/.openclaw/openclaw.json file to change the model provider and API key, then restart your gateway. For quick switching mid-conversation, use the /model command (for example, /model anthropic/claude-sonnet-4-6). OpenRouter simplifies this further by giving you one API key for 200+ models. The switch takes seconds and doesn't require reinstallation.

How much does it cost to run an OpenClaw agent per month?

Monthly costs vary by provider and usage: $100-200 with GPT-4o for everything, $40-70 with Claude Sonnet plus Haiku routing, $15-30 with DeepSeek for most tasks, or $0-5 with Gemini free tier or local models. These are API costs only. Hosting adds $5-29/month depending on whether you self-host on a VPS or use a managed platform like Better Claw. BYOK means you control the API spend regardless of hosting.

Is DeepSeek reliable enough for production OpenClaw agents?

DeepSeek V3.2 is reliable for most standard agent tasks and excels at code generation. Community reports confirm it works well for daily operations. The tradeoffs: tool calling can be less precise than Claude on complex multi-step chains, and data routes through Chinese infrastructure, which matters for sensitive workloads. For heartbeats, sub-agents, and non-sensitive tasks, it's a solid budget choice. For critical workflows, pair it with a more capable model as your primary.