OpenClaw is a brilliant reactive agent. But "go do this complex thing while I sleep" is where it falls apart. Here's why, and what to do about it.

I asked my OpenClaw agent to research three competitor products, compile the findings into a comparison table, and email the result to my team.

It researched the first product. Then stopped. Waited for me to say something. I prompted it to continue. It researched the second product. Stopped again. I nudged it once more. It finished the research, but never created the table. I asked for the table. It generated one. Then asked me where to send the email.

Five prompts to complete a three-step task.

The same week, I gave the identical assignment to Manus through Telegram. One message. It planned the research, executed all three searches in parallel, built the table, formatted an email, and sent it. Zero additional prompts. Took about four minutes.

That moment crystallized something I'd been sensing for weeks: OpenClaw is not an autonomous agent. It's a brilliant, capable, incredibly flexible reactive agent. And there's a crucial difference.

The reactive vs autonomous gap (and why it matters)

OpenClaw does what you tell it, when you tell it. You message it on Telegram. It responds. You ask it to check your calendar. It checks. You tell it to draft an email. It drafts. Each interaction is a prompt-response cycle.

This works beautifully for roughly 80% of agent use cases, in my experience. Morning briefings. Quick lookups. Drafting messages. Scheduling reminders. The stuff you'd normally do yourself but faster.

But autonomous tasks in OpenClaw (the "go handle this complex project end-to-end while I do something else" kind) are where the architecture shows its seams.

OpenClaw doesn't natively plan. It doesn't decompose a complex goal into sub-tasks, sequence them, and execute without intervention. It processes one instruction at a time within a conversation context. If a task requires multiple steps, you either provide each step explicitly or write an AGENTS.md workflow file that scripts the sequence in advance.

Manus, by contrast, was built for autonomy from the ground up. You assign a task. It spins up a sandboxed environment, creates an execution plan, browses the web, writes code, processes data, and delivers results. Meta paid $2 billion for that capability.

OpenClaw is "AI that responds." Manus is "AI that plans." Both are useful. They're solving different problems.
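To make the distinction concrete, here is a toy sketch of the two control flows. It is not how either product is implemented: `handle`, `toy_decompose`, and both agent functions are hypothetical stand-ins I made up for illustration. The only point is where the loop lives. In the reactive case the user drives each step; in the planning case the agent decomposes the goal and iterates on its own.

```python
from typing import Callable, List


def handle(instruction: str) -> str:
    # Hypothetical stand-in for a model call that handles exactly one instruction.
    return f"done: {instruction}"


def reactive_agent(instruction: str) -> List[str]:
    """One prompt in, one response out. Anything further needs another prompt."""
    return [handle(instruction)]


def planning_agent(goal: str, decompose: Callable[[str], List[str]]) -> List[str]:
    """Decompose the goal first, then run every sub-task without waiting for the user."""
    return [handle(step) for step in decompose(goal)]


def toy_decompose(goal: str) -> List[str]:
    # Illustrative only: a real planner would ask a model to produce this list.
    return [
        f"research {goal}",
        f"build comparison table for {goal}",
        f"email results for {goal}",
    ]


if __name__ == "__main__":
    print(reactive_agent("research competitors"))
    print(planning_agent("competitors", toy_decompose))
```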

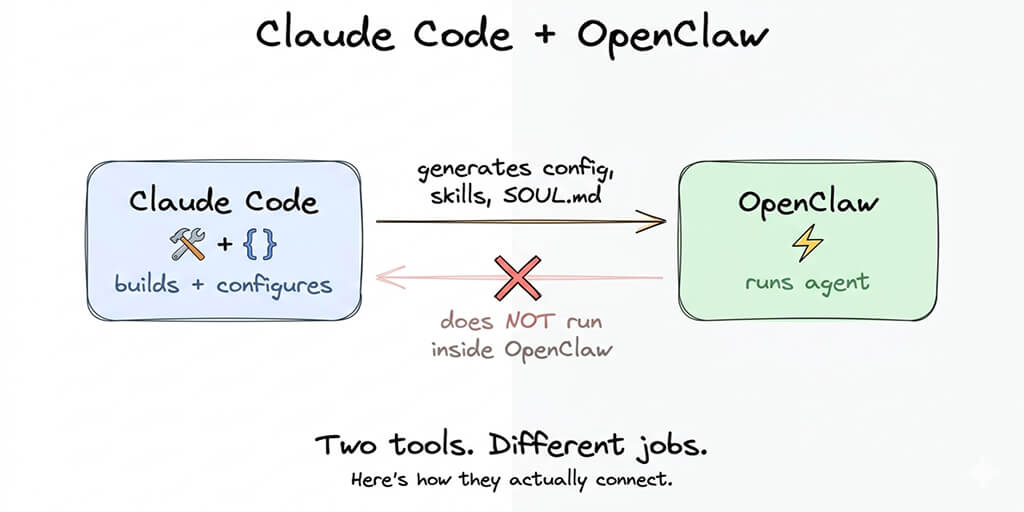

For a deeper look at how OpenClaw's agent architecture actually works under the hood, our explainer covers the gateway model, the agent loop, and where the reactive pattern comes from.

Where OpenClaw actually wins (and it's a lot)

Before this turns into a Manus ad, let me be clear: OpenClaw wins on nearly everything else.

Privacy. OpenClaw runs on your machine. Your data stays on your hardware. Manus runs in Meta's cloud. Every task you assign passes through Meta's infrastructure. For anyone handling sensitive information, that's not a tradeoff. It's a dealbreaker.

Cost control. OpenClaw uses BYOK (bring your own API keys). You control exactly what you spend. A well-optimized agent runs $15-50/month. Manus uses opaque credit pricing ($20-200/month) where a single complex task can burn 900+ credits with no cost estimation before execution. Users describe it as "playing credit roulette."

Customization. OpenClaw has 230,000+ GitHub stars, 850+ contributors, and a skill ecosystem (despite the security concerns with ClawHub). You can build anything. Manus gives you what Meta decides to ship.

Multi-platform presence. One OpenClaw agent connects to 15+ chat platforms simultaneously. Manus recently added Telegram, but the multi-platform story is still early.

Multi-agent power. OpenClaw lets you spin up multiple independent agents with separate memory and contexts. Manus doesn't offer this yet (sub-agents are on their roadmap but not shipped).

The honest comparison: Manus is better at going away and doing complex tasks autonomously. OpenClaw is better at everything you do with your agent in real-time. For the full picture of what OpenClaw agents can actually do well, our use case guide covers the workflows where it genuinely excels.

Why OpenClaw struggles with long-form autonomous tasks

The architectural limitations are specific and worth understanding.

No native task planning

When you give OpenClaw a complex instruction, it processes it as a single conversational turn. The model generates a response that may include tool calls. If the task requires more steps than the model can fit in one generation, you get partial execution.

The AGENTS.md file is the community's workaround. You script workflows as structured instructions that the agent follows step-by-step. But this is manual choreography, not autonomous planning. You're doing the planning. The agent is following your script.

Manus has a dedicated planning layer that decomposes tasks automatically. OpenClaw doesn't. This is an architectural decision, not a bug.

Context window limitations

OpenClaw agents operate within the context window of their primary model. A complex autonomous task (research, analyze, compare, generate, deliver) can easily exceed the effective context capacity, especially when tool call outputs are included.
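A rough back-of-the-envelope sketch shows how quickly tool output eats the budget. Every number below is an assumption chosen for illustration (a 200,000-token window, a 5,000-token starting prompt, 15,000 tokens added per step), not a measurement from OpenClaw or any specific model.

```python
def steps_until_overflow(context_limit: int, prompt_tokens: int, tokens_per_step: int) -> int:
    """Return how many whole steps fit before accumulated context exceeds the limit."""
    if tokens_per_step <= 0:
        raise ValueError("tokens_per_step must be positive")
    remaining = context_limit - prompt_tokens
    # If the prompt alone is already over the limit, no steps fit.
    return max(remaining // tokens_per_step, 0)


if __name__ == "__main__":
    # Assumed figures: 200k window, 5k prompt, 15k tokens of tool output per step.
    print(steps_until_overflow(200_000, 5_000, 15_000))
```

Under those assumptions the task has room for 13 steps. A single research step that pulls in several full web pages can add far more than 15,000 tokens, which is why long chains tend to hit the wall sooner than expected.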

The memory compaction issues in OpenClaw compound this. Context compaction can kill active work mid-session, and cron jobs accumulate context indefinitely without proper limits. For a task that needs to maintain state across many steps, this creates reliability problems.

Sub-agent limitations

OpenClaw's sub-agents are temporary workers that share the parent's context. They're useful for parallel lookups but can't independently plan or maintain their own state across complex workflows. They're workers, not planners.

Manus's sub-agents (while still limited) operate in dedicated sandboxed environments with their own execution context. The difference matters for tasks like "research this topic deeply, then write a report" where multiple stages need independent processing space.
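The difference between a shared and a dedicated context can be sketched with a plain dictionary. This is a conceptual toy, not either product's internals: `run_shared` and `run_isolated` are hypothetical names, and the "context" here is just a list of notes.

```python
import copy
from typing import Dict, List

Context = Dict[str, List[str]]


def run_shared(parent: Context, note: str) -> None:
    """Worker writes straight into the parent's context object."""
    parent["notes"].append(note)


def run_isolated(parent: Context, note: str) -> Context:
    """Worker gets its own deep copy, so the parent is left untouched."""
    own = copy.deepcopy(parent)
    own["notes"].append(note)
    return own


if __name__ == "__main__":
    parent: Context = {"notes": []}
    run_shared(parent, "lookup result")
    child = run_isolated(parent, "draft section")
    print(parent["notes"])  # only the shared worker's note
    print(child["notes"])   # both notes, held separately from the parent
```

With a shared context, every worker's output competes for the same space. With an isolated one, each stage can fill its own space and hand back only what the next stage needs.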

No execution sandboxing by default

When OpenClaw executes code or runs shell commands, it does so on your actual machine (unless you've set up Docker isolation). Autonomous tasks that involve code execution carry real risk. Meta researcher Summer Yue's agent mass-deleted her emails while ignoring stop commands. That's what uncontrolled autonomous execution looks like.

Manus runs everything in sandboxed cloud environments where a failure can't damage your local system. For autonomous operation, that isolation isn't a luxury. It's a safety requirement.
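If you run OpenClaw yourself, the closest equivalent is to wrap code execution in a locked-down container. The sketch below only builds a `docker run` command line using standard Docker CLI flags. It is not OpenClaw's own Docker configuration, and the image name, mount path, and memory cap are placeholder assumptions.

```python
import shlex
from typing import List


def sandboxed_command(image: str, command: List[str], workdir: str) -> List[str]:
    """Build a `docker run` argv that isolates a command from the host."""
    return [
        "docker", "run",
        "--rm",                         # remove the container when it exits
        "--network", "none",            # no network access from inside
        "--read-only",                  # read-only root filesystem
        "--memory", "512m",             # cap memory use (assumed value)
        "-v", f"{workdir}:/workspace",  # only this directory is shared with the host
        "-w", "/workspace",             # run the command from the shared directory
        image, *command,
    ]


if __name__ == "__main__":
    argv = sandboxed_command("python:3.12-slim", ["python", "task.py"], "/tmp/agent-work")
    print(shlex.join(argv))
```

Note the tradeoff: `--network none` also blocks web research, so a task that needs browsing would have to relax that flag or do its fetching outside the container.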

Watch: OpenClaw vs Manus Autonomous Agent Comparison. If you want to see how these two approaches handle the same task differently, this community comparison covers real-world autonomous task execution with an honest assessment of where each excels and where each falls short.

How to make OpenClaw more autonomous (the workarounds)

OpenClaw's reactive nature doesn't mean you can't build autonomous-style workflows. It means you need to explicitly design them. Here's how the community does it.

AGENTS.md workflow scripting

The AGENTS.md file in your workspace defines structured task sequences. Instead of hoping the model will figure out the steps, you script them:

Research Workflow

- Search for topic using web_search skill

- Extract key findings and save to /workspace/research.md

- Compare findings across sources

- Generate summary table

- Draft email to recipient with table attached

- Send via email skill

This turns OpenClaw into a workflow executor rather than relying on autonomous planning. It's more work upfront, but it's reliable.
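The "workflow executor" idea from the scripted steps above can be sketched in a few lines. This is not OpenClaw's implementation of AGENTS.md (which is a prose file the model reads). `run_workflow` and the step functions are hypothetical, and the sketch only illustrates the behavior you want from a script: fixed order, shared state, and a hard stop on the first failure.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, str]
Step = Tuple[str, Callable[[State], None]]


def run_workflow(steps: List[Step], state: State) -> List[str]:
    """Run steps in order; stop at the first failure and report what completed."""
    completed: List[str] = []
    for name, action in steps:
        try:
            action(state)
        except Exception as exc:
            # Record which step failed so a human (or a retry) can pick up from there.
            state["error"] = f"{name}: {exc}"
            break
        completed.append(name)
    return completed


if __name__ == "__main__":
    state: State = {}
    steps: List[Step] = [
        ("search", lambda s: s.update(findings="raw notes")),
        ("summarize", lambda s: s.update(table="| Product | Finding |")),
    ]
    print(run_workflow(steps, state), state)
```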

Cron jobs for proactive behavior

Scheduled tasks give OpenClaw a form of autonomy: it acts without being prompted. Morning briefings, hourly inbox checks, daily report generation. Each cron job runs as an independent conversation, executing a predefined task.

The limitation: cron jobs are repetitive, not adaptive. They do the same thing every time (or a variation based on the prompt). They don't dynamically plan based on new information.
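A minimal sketch of that pattern, assuming a daily schedule and a fixed prompt. The function names are hypothetical and the "agent output" is a placeholder string. What it shows is the shape of the limitation: the schedule and the prompt are fixed in advance, and each run starts from an empty context.

```python
from datetime import datetime, timedelta
from typing import List


def next_daily_run(now: datetime, hour: int, minute: int = 0) -> datetime:
    """Next occurrence of hour:minute strictly after `now`."""
    candidate = now.replace(hour=hour, minute=minute, second=0, microsecond=0)
    if candidate <= now:
        candidate += timedelta(days=1)
    return candidate


def run_job(prompt: str) -> List[str]:
    """Each run builds a fresh context, so nothing carries over between runs."""
    context: List[str] = [prompt]
    context.append(f"result for: {prompt}")  # placeholder for the agent's output
    return context


if __name__ == "__main__":
    print(next_daily_run(datetime(2026, 3, 1, 6, 30), hour=7))
    print(run_job("Summarize my unread email"))
```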

Skills chaining

Individual skills can be composed into multi-step operations. A "competitor analysis" skill might internally call web_search, then data_extraction, then document_generation. This pushes the planning logic into the skill itself rather than relying on the model to orchestrate.
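Here is what that composition might look like, sketched in Python with stubbed-out steps. The three inner functions borrow the names mentioned above, but their signatures and bodies are my assumptions, not the real skill interfaces. The search step returns canned strings instead of calling an API.

```python
from typing import Dict, List


def web_search(query: str) -> List[str]:
    # Stub: a real skill would call a search API here.
    return [f"{query}: result A", f"{query}: result B"]


def data_extraction(pages: List[str]) -> List[Dict[str, str]]:
    rows = []
    for page in pages:
        product, _, finding = page.partition(": ")
        rows.append({"product": product, "finding": finding})
    return rows


def document_generation(rows: List[Dict[str, str]]) -> str:
    lines = ["| Product | Finding |", "| --- | --- |"]
    lines += [f"| {r['product']} | {r['finding']} |" for r in rows]
    return "\n".join(lines)


def competitor_analysis(products: List[str]) -> str:
    """The skill owns the sequence, so the model only has to invoke one skill."""
    pages: List[str] = []
    for product in products:
        pages.extend(web_search(product))
    return document_generation(data_extraction(pages))


if __name__ == "__main__":
    print(competitor_analysis(["Acme", "Globex"]))
```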

For the best community-vetted skills that support complex workflows, our guide to the best OpenClaw skills covers the options.

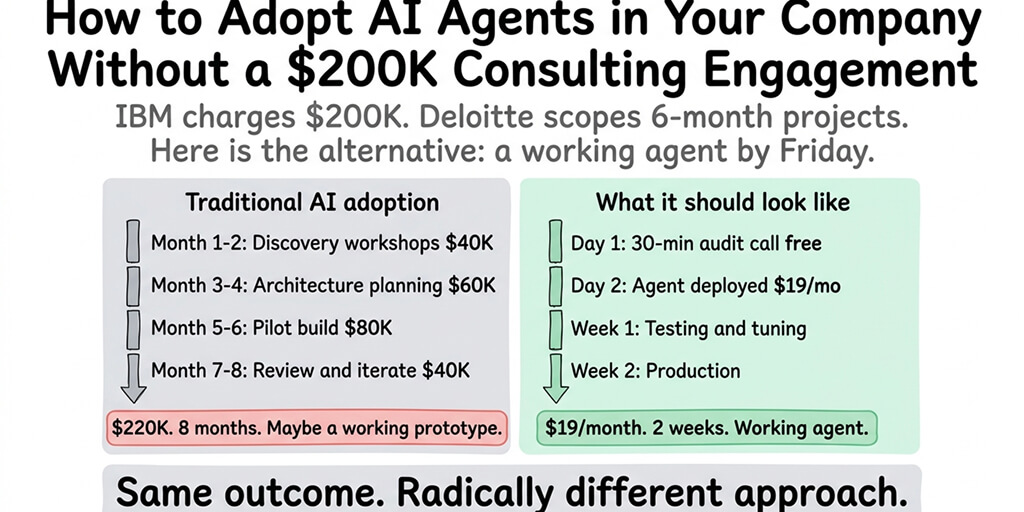

If you want OpenClaw running with proper cron jobs, skill chains, and workflow execution without managing the VPS, Docker, and security yourself, Better Claw handles all of the infrastructure at $19/month per agent. BYOK, 60-second deploy, built-in anomaly detection that auto-pauses if something goes wrong. You focus on building workflows, not babysitting servers.

The honest trade-off matrix

Here's the framework I use when someone asks me "should I use OpenClaw or Manus?"

Choose OpenClaw when:

- You want privacy (data stays local)

- You want cost control (BYOK, no opaque credits)

- You want multi-platform presence (15+ chat channels from one agent)

- You're building a personal assistant for daily reactive tasks

- You have technical capability to configure and maintain it

- You want an open ecosystem with community skills and full customization

Choose Manus when:

- You need true fire-and-forget autonomous execution

- You're non-technical and want zero setup

- You're willing to pay premium pricing for convenience

- You don't need multi-platform chat integration

- You trust Meta with your data

Choose both when:

- You use OpenClaw for daily reactive automation (email, calendar, quick tasks) and Manus for occasional complex autonomous projects (deep research, report generation, multi-step analysis). This is actually how many power users operate.

Where this is heading

OpenClaw's creator Peter Steinberger joined OpenAI in February 2026. The project is transitioning to an open-source foundation. The GitHub feature request for task planning (Issue #6421, "Two-Tier Model Routing for Task-Based Intelligence Delegation") signals that the community recognizes the autonomy gap.

The models themselves are getting better at multi-step planning. Claude Opus 4.6 and GPT-4o handle longer instruction chains more reliably than their predecessors. As model capabilities improve, the gap between reactive and autonomous will narrow even within OpenClaw's current architecture.

But architecture matters. Manus was designed for autonomous execution from day one. OpenClaw was designed for conversational interaction. Bolting planning onto a reactive system is possible (the workarounds above prove it) but it's never as clean as native support.

The most likely outcome: OpenClaw gets better at autonomy while Manus gets better at real-time interaction. They converge. But as of March 2026, they serve different needs.

The AI agent space is figuring out the same thing every software category eventually figures out: there's no single tool that does everything well. Use the right tool for the right job. And if your job is "help me throughout my day across all my chat apps," OpenClaw is still the best option available.

If you want that daily assistant running reliably without the infrastructure overhead, give Better Claw a try. $19/month per agent, BYOK, zero config, and your first deploy takes 60 seconds. We handle the Docker, the security, the monitoring. You build the workflows that make your agent genuinely useful.

Frequently Asked Questions

Can OpenClaw handle autonomous tasks without user intervention?

Partially. OpenClaw can execute scheduled cron jobs and follow scripted AGENTS.md workflows without prompting. But it doesn't natively plan or decompose complex goals into sub-tasks the way Manus does. For multi-step autonomous projects, you need to provide the planning logic through workflow files or skill chains. OpenClaw is strongest as a reactive agent that responds to real-time instructions.

How does OpenClaw compare to Manus for autonomous agent work?

Manus excels at fire-and-forget autonomous execution: you assign a complex task and it plans, executes, and delivers without intervention. OpenClaw excels at real-time reactive tasks across 15+ chat platforms, and it wins on privacy (local execution), cost (BYOK vs opaque credits), and customization (open source, 230K+ stars). Manus wins on autonomous planning and zero-setup convenience.

How do I set up OpenClaw for autonomous workflows?

Use three approaches: AGENTS.md files for scripted multi-step sequences, cron jobs for scheduled proactive tasks (morning briefings, inbox monitoring), and skills chaining for embedded multi-step logic within individual skills. Set maxIterations and maxContextTokens limits to prevent runaway execution. For the most reliable autonomous behavior, combine all three with a capable model like Claude Sonnet 4.6 as your primary.
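To show what iteration and context limits buy you, here is a toy guard loop. The field names mirror the maxIterations and maxContextTokens settings mentioned above, but how OpenClaw enforces them internally is not shown in this article, so treat `Limits` and `run_with_limits` as a hypothetical illustration of the idea rather than the real mechanism.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class Limits:
    max_iterations: int      # mirrors the maxIterations setting
    max_context_tokens: int  # mirrors the maxContextTokens setting


def run_with_limits(step: Callable[[int], Tuple[bool, int]], limits: Limits) -> str:
    """Call `step` until it reports done or a limit is hit.

    `step(i)` returns (done, tokens_added) for iteration i.
    """
    tokens = 0
    for i in range(limits.max_iterations):
        done, added = step(i)
        tokens += added
        if done:
            return "completed"
        if tokens > limits.max_context_tokens:
            return "stopped: context limit"
    return "stopped: iteration limit"


if __name__ == "__main__":
    # A step that never finishes: the guard halts it after five iterations.
    print(run_with_limits(lambda i: (False, 100), Limits(5, 10_000)))
```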

How much does OpenClaw cost compared to Manus for autonomous tasks?

OpenClaw is BYOK: a well-optimized agent runs $15-50/month in API costs depending on usage and model choice. Manus uses credit-based pricing: $20/month (4,000 credits) to $200/month (40,000 credits), but complex autonomous tasks can burn 900+ credits each with no cost estimation. Users report credit loops where Manus consumes an entire daily allowance on a single task. OpenClaw's costs are more predictable and controllable.
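The arithmetic behind those Manus figures is worth spelling out. This sketch uses only the numbers quoted above and assumes each complex task costs a flat 900 credits, which is the low end of "900+".

```python
def tasks_per_month(monthly_credits: int, credits_per_task: int) -> int:
    """Whole tasks that fit in a monthly credit allowance."""
    return monthly_credits // credits_per_task


def cost_per_task(monthly_price: float, monthly_credits: int, credits_per_task: int) -> float:
    """Effective dollar cost of one task at a given plan."""
    return monthly_price * credits_per_task / monthly_credits


if __name__ == "__main__":
    for price, credits in [(20, 4_000), (200, 40_000)]:
        print(price, tasks_per_month(credits, 900), cost_per_task(price, credits, 900))
```

At 900 credits per task, the $20 plan covers 4 complex tasks a month and the $200 plan covers 44, which works out to $4.50 per task on either plan. Any task that runs past 900 credits costs more, and you don't find out by how much until it finishes.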

Is OpenClaw safe for autonomous agent execution?

OpenClaw requires careful security configuration for autonomous operations. Without Docker sandboxing, autonomous tasks execute directly on your machine with full system access. Meta researcher Summer Yue's agent deleted her emails while ignoring stop commands. Set iteration limits, use Docker isolation, and run OpenClaw on a dedicated machine or VPS. Managed platforms like Better Claw include Docker sandboxing, AES-256 encryption, and anomaly detection that auto-pauses agents before damage occurs.

Related Reading

- OpenClaw vs Claude Cowork: Full Comparison — How OpenClaw stacks up against Anthropic's native agent tool

- OpenClaw vs Accomplish: Which AI Agent Wins? — Another head-to-head comparison for choosing your AI agent

- OpenClaw for Autonomous Trading — Real-world autonomous task example: crypto trading agents

- Best OpenClaw Use Cases — Full list of tasks where OpenClaw outperforms alternatives