We tested every recommended local model. Some chat fine. None reliably call tools. Here's the full picture.

I spent a Saturday afternoon trying to get Qwen3 8B running through Ollama as my OpenClaw agent's primary model. Zero API costs. Full privacy. The dream setup.

The model loaded. The gateway started. I typed "hello." It responded instantly. This is going to work.

Then I asked it to check my calendar. The agent generated a narrative essay about how it would check my calendar if it could, instead of actually calling the calendar tool. I asked it to search the web. Same thing. Beautiful prose about web searching. Zero actual web searches.

Three hours later, I'd tried four different models, two different API configurations, and one custom provider workaround from a GitHub issue. The chat worked perfectly every time. The tool calling failed silently every time.

Here's what nobody tells you about the OpenClaw Ollama setup: chat and tool calling are completely different capabilities. As of 2026, local models running through Ollama handle the first one well and the second one poorly.

This guide covers exactly what works, exactly what doesn't, and the specific scenarios where Ollama with OpenClaw is genuinely worth the effort.

The fundamental problem: streaming breaks tool calling

This is the root cause of most OpenClaw Ollama failures, and it's documented in GitHub Issue #5769.

OpenClaw sends stream: true on every model request. This is fine for cloud providers like Anthropic and OpenAI, whose streaming implementations properly emit tool call responses. But Ollama's streaming implementation doesn't correctly return tool_calls delta chunks.

What happens: your local model decides to call a tool (web_search, exec, browser). It generates the tool call in its response. But the streaming protocol drops it. OpenClaw receives empty content with finish_reason: "stop" instead of the tool call. The tool never executes.
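To make "tool_calls delta chunks" concrete, here is a minimal sketch of how a client reassembles a streamed tool call, assuming the OpenAI-style streaming format in which each chunk carries a fragment keyed by `index`. The chunk shape is flattened for brevity, and the type names, `assembleToolCalls`, and the sample data are illustrative, not OpenClaw's actual code.

```typescript
// Simplified shape of one streamed chunk (assumption: OpenAI-style deltas,
// flattened from choices[0].delta for readability).
interface ToolCallDelta {
  index: number;
  id?: string;
  function?: { name?: string; arguments?: string };
}

interface StreamChunk {
  content?: string;
  tool_calls?: ToolCallDelta[];
  finish_reason?: "stop" | "tool_calls" | null;
}

interface AssembledToolCall {
  id: string;
  name: string;
  arguments: string; // JSON text, concatenated from the streamed fragments
}

// Merge streamed fragments into complete tool calls, keyed by `index`.
function assembleToolCalls(chunks: StreamChunk[]): AssembledToolCall[] {
  const byIndex = new Map<number, AssembledToolCall>();
  for (const chunk of chunks) {
    for (const delta of chunk.tool_calls ?? []) {
      let call = byIndex.get(delta.index);
      if (!call) {
        call = { id: "", name: "", arguments: "" };
        byIndex.set(delta.index, call);
      }
      if (delta.id) call.id = delta.id;
      if (delta.function?.name) call.name = delta.function.name;
      if (delta.function?.arguments) call.arguments += delta.function.arguments;
    }
  }
  return Array.from(byIndex.entries())
    .sort((a, b) => a[0] - b[0])
    .map((entry) => entry[1]);
}

// A healthy stream: the tool call arrives in pieces.
const healthyStream: StreamChunk[] = [
  { tool_calls: [{ index: 0, id: "call_1", function: { name: "web_search", arguments: "" } }] },
  { tool_calls: [{ index: 0, function: { arguments: '{"query":' } }] },
  { tool_calls: [{ index: 0, function: { arguments: '"weather"}' } }] },
  { finish_reason: "tool_calls" },
];

// The failure described above: empty content, finish_reason "stop", no deltas.
const droppedStream: StreamChunk[] = [{ content: "", finish_reason: "stop" }];
```

With the healthy stream the function returns one complete `web_search` call. With the dropped stream it returns an empty array, which is why the tool never executes.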

The result: your agent can have conversations but can't perform actions. No file operations. No web searches. No shell commands. No skill execution. The model writes about what it would do instead of doing it.

This affects every Ollama model configured through OpenClaw. Mistral, Qwen, Llama, DeepSeek local variants. All of them.

OpenClaw + Ollama = chat works. Tool calling doesn't. This isn't a config problem. It's an architectural mismatch between OpenClaw's streaming requirement and Ollama's tool call implementation.

The community has proposed a fix: a per-provider config option to disable streaming when tools are present. The suggested code is straightforward:

const shouldStream = !(context.tools?.length && isOllamaProvider(model));

As of March 2026, this hasn't been merged into a release. Until it is, local models through Ollama are limited to chat-only interactions.
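For readers who want to see that one-liner in context, here is a self-contained sketch. The `ModelRef` and `RequestContext` types and the body of `isOllamaProvider` are assumptions made for illustration; the actual patch may define them differently.

```typescript
// Hypothetical types: the real OpenClaw structures are not shown in the issue.
interface ModelRef {
  provider: string; // e.g. "ollama" or "anthropic"
  id: string;       // e.g. "qwen3:8b"
}

interface RequestContext {
  tools?: unknown[];
}

// Assumed implementation: treat any model whose provider is "ollama" as local.
function isOllamaProvider(model: ModelRef): boolean {
  return model.provider === "ollama";
}

// Stream by default; fall back to a non-streaming request only when
// tools are present AND the provider is Ollama.
function shouldStream(context: RequestContext, model: ModelRef): boolean {
  const hasTools = (context.tools?.length ?? 0) > 0;
  return !(hasTools && isOllamaProvider(model));
}

const localModel: ModelRef = { provider: "ollama", id: "qwen3:8b" };
const cloudModel: ModelRef = { provider: "anthropic", id: "claude-sonnet-4-6" };
```

Plain chat against Ollama keeps streaming, cloud providers are untouched, and only the Ollama-plus-tools combination switches to a single non-streamed response.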

For a detailed breakdown of all five ways local models fail in OpenClaw (including discovery timeouts, WSL2 networking, and the CLI vs API confusion), our troubleshooting guide covers each failure mode.

What actually works with OpenClaw + Ollama

The streaming bug kills tool calling. But not everything in OpenClaw requires tools. Here's what genuinely works.

Basic conversation

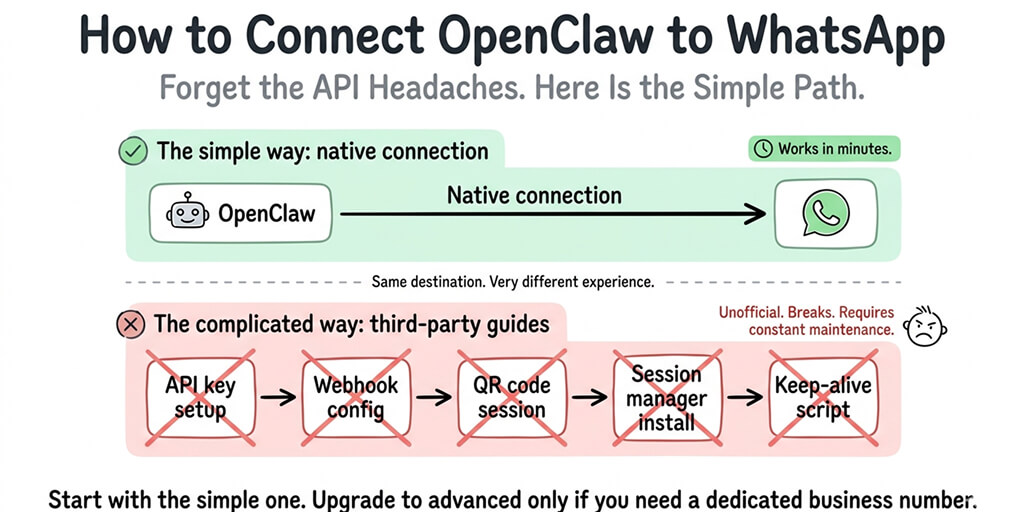

This works perfectly. Ask questions. Get answers. Have discussions. The agent responds through whatever chat platform you've connected (Telegram, WhatsApp, Slack). If all you want is a private chatbot that runs on your hardware, Ollama delivers.

Memory and context

Ollama models maintain conversation context through OpenClaw's memory system. The agent remembers previous messages, stores preferences, and builds context over time. This works the same as cloud models for conversational interactions.

SOUL.md personality

Your agent's personality configuration works normally with local models. Customize tone, behavior rules, and working context. The model follows the system prompt instructions.

Model switching mid-conversation

The /model command works with Ollama models. You can switch between local and cloud providers on the fly. Type /model ollama/qwen3:8b for a quick local response, then /model anthropic/claude-sonnet-4-6 when you need tool execution.

This hybrid approach is actually the best use of Ollama in OpenClaw: local for chat, cloud for actions.
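The hybrid rule is simple enough to write down. This is a sketch of the decision only, not an OpenClaw API: `TaskKind`, `modelFor`, and `modelCommand` are hypothetical names, and the two model IDs are the ones used in the example above.

```typescript
// Hypothetical task categories; only "chat" is safe on a local model today.
type TaskKind = "chat" | "web_search" | "file_io" | "shell" | "browser";

const LOCAL_CHAT_MODEL = "ollama/qwen3:8b";
const CLOUD_TOOL_MODEL = "anthropic/claude-sonnet-4-6";

// Local for conversation, cloud for anything that needs a tool call.
function modelFor(task: TaskKind): string {
  return task === "chat" ? LOCAL_CHAT_MODEL : CLOUD_TOOL_MODEL;
}

// Build the /model command you would type before the request.
function modelCommand(task: TaskKind): string {
  return `/model ${modelFor(task)}`;
}
```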

What breaks (and why you can't config your way around it)

Tool calling (the big one)

Every skill that requires the agent to call a tool fails silently. This includes: web search, file read/write, shell command execution, browser automation, email skills, calendar skills, and essentially every skill that makes an agent more than a chatbot.

The model generates the intent to call the tool. The streaming protocol loses it. OpenClaw never receives the instruction. No error message appears. The agent just produces text instead of action.
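Because nothing errors, the failure has to be inferred from the shape of the reply. Here is a rough heuristic, assuming you can see how many tools were offered and what came back. `ModelReply` and `looksLikeSilentToolFailure` are hypothetical names, and a model can legitimately answer without tools, so treat a positive result as a hint rather than proof.

```typescript
// Hypothetical summary of one model reply.
interface ModelReply {
  content: string;
  toolCalls: unknown[];
  finishReason: string;
}

// Flags the pattern described above: tools were offered, the request clearly
// needed an action, yet the reply ended with "stop" and no tool calls.
function looksLikeSilentToolFailure(
  reply: ModelReply,
  toolsOffered: number,
  actionExpected: boolean,
): boolean {
  return (
    actionExpected &&
    toolsOffered > 0 &&
    reply.toolCalls.length === 0 &&
    reply.finishReason === "stop"
  );
}

const narrativeReply: ModelReply = {
  content: "I would check your calendar by calling the calendar tool...",
  toolCalls: [],
  finishReason: "stop",
};
```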

Cron jobs that require actions

Scheduled tasks that involve tool use (morning briefings that check your calendar, email triage that reads your inbox) fail for the same reason. The cron fires. The model responds. But no tools execute. You get a narrative about what the agent would do, not an actual result.

Sub-agent parallel processing

Sub-agents inherit the tool calling limitation. If your main agent spawns workers for parallel tasks, those workers can't execute tools either. The parallelism works. The execution doesn't.

Browser relay

OpenClaw's browser automation requires precise tool calling to click elements, fill forms, and navigate pages. Because of the streaming issue, those structured tool calls never arrive from a local model. Browser relay with Ollama simply doesn't function.

The models the community actually recommends

Despite the tool calling limitation, some local models work noticeably better than others for the chat-only use case.

glm-4.7-flash (~25GB VRAM): The community favorite. Multiple users in GitHub Discussion #2936 call it "huge bang for the buck." Strong reasoning and code generation. Runs on an RTX 4090, though not entirely in VRAM, since the card has 24GB.

qwen3-coder-30b: Good for code-heavy conversations. Requires significant hardware (24GB+ RAM for quantized versions).

hermes-2-pro and mistral:7b: Ollama's official recommendations for tool calling. These are the models most likely to work when the streaming fix eventually lands, since they have native tool calling support in non-streaming mode.

Models under 8B parameters: Frequent failures on agent tasks even in chat-only mode. Context tracking degrades quickly. Instructions get ignored or misinterpreted. Not recommended for anything beyond basic Q&A.

For local models, plan for 30B+ parameters with at least 64K context window. Anything smaller struggles with OpenClaw's system prompts and multi-turn conversations.

Ollama's own OpenClaw integration docs recommend 64K minimum context. Many popular models default to much less. Set it explicitly in your config:

    {
      "models": {
        "providers": {
          "ollama": {
            "baseUrl": "http://127.0.0.1:11434",
            "apiKey": "ollama-local",
            "api": "ollama",
            "models": [{
              "id": "qwen3:8b",
              "contextWindow": 65536
            }]
          }
        }
      }
    }
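A quick way to catch a missing or undersized context window is to scan the config before starting the gateway. This sketch assumes the config shape shown above. `modelsBelowMinContext` and the type names are hypothetical, and a model with no `contextWindow` is treated as failing the check, since the effective default is not known from the config alone.

```typescript
// Types mirror the config fragment above (assumed, not an official schema).
interface ModelEntry {
  id: string;
  contextWindow?: number;
}

interface ProviderEntry {
  baseUrl: string;
  apiKey?: string;
  api: string;
  models: ModelEntry[];
}

interface OpenClawConfig {
  models: { providers: { [name: string]: ProviderEntry } };
}

const MIN_CONTEXT_WINDOW = 65536; // 64K tokens

// Return "provider/model" for every model below the minimum (or unset).
function modelsBelowMinContext(
  config: OpenClawConfig,
  min: number = MIN_CONTEXT_WINDOW,
): string[] {
  const offenders: string[] = [];
  for (const name of Object.keys(config.models.providers)) {
    const provider = config.models.providers[name];
    for (const model of provider.models) {
      if ((model.contextWindow ?? 0) < min) {
        offenders.push(`${name}/${model.id}`);
      }
    }
  }
  return offenders;
}
```

An empty result means every listed model declares at least 64K of context.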

For guidance on choosing the right model for your specific use case, our model comparison covers cost-per-task data across local and cloud providers.

The three Ollama gotchas that waste hours

Beyond the tool calling bug, three configuration issues eat the most time.

Gotcha 1: Model discovery timeout

When OpenClaw starts, it tries to auto-discover Ollama models. If Ollama is slow (common when the model isn't pre-loaded), discovery times out silently. Your gateway starts. Your model is listed. But requests fail.

Fix: Pre-load the model before starting OpenClaw:

    ollama run qwen3:8b
    # Wait for the >>> prompt, then type /bye (the model stays loaded for about 5 minutes by default)
    openclaw gateway start

Or define models explicitly in your config to skip discovery entirely (shown above).
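If you would rather pre-load from a script than from an interactive terminal, Ollama's HTTP API loads a model into memory when `/api/generate` receives a model name with no prompt. The sketch below only builds that request so it stays runtime-agnostic. `buildPreloadRequest` and `PreloadRequest` are hypothetical names, and the base URL is the one from the config above.

```typescript
interface PreloadRequest {
  url: string;
  method: "POST";
  headers: { "Content-Type": string };
  body: string;
}

// Build a request that asks Ollama to load `model` without generating text.
function buildPreloadRequest(baseUrl: string, model: string): PreloadRequest {
  return {
    url: `${baseUrl.replace(/\/+$/, "")}/api/generate`, // tolerate a trailing slash
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ model }),
  };
}

const preload = buildPreloadRequest("http://127.0.0.1:11434", "qwen3:8b");
// In a runtime with fetch available:
//   await fetch(preload.url, { method: preload.method, headers: preload.headers, body: preload.body });
```

Send it once, wait for the response, then start the gateway while the model is still resident.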

Gotcha 2: WSL2 networking

If you're running OpenClaw in WSL2 and Ollama on the Windows host (or vice versa), 127.0.0.1 doesn't reliably reach across the boundary, because each side has its own loopback interface. Your config says localhost. Your curl works from the side where Ollama runs. But OpenClaw can't reach Ollama.

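Fix: Bind Ollama to all interfaces with OLLAMA_HOST=0.0.0.0:11434 ollama serve, then point baseUrl at the address of the side running Ollama instead of 127.0.0.1. If Ollama runs inside WSL2, that is the WSL2 IP from hostname -I. If Ollama runs on the Windows host, it is the host's address as seen from WSL2, which in the default NAT mode is usually the default gateway shown by ip route show default.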
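Two small checks help here. This is a sketch under assumptions: when OpenClaw runs inside WSL2 with the default NAT networking and Ollama runs on the Windows host, the host is usually reachable at the default gateway reported by `ip route show default`. Both function names are hypothetical, and the sample route line shows only the typical format.

```typescript
// True when a baseUrl points at the local loopback interface.
function isLoopbackBaseUrl(baseUrl: string): boolean {
  return /^https?:\/\/(localhost|127(?:\.\d{1,3}){3}|\[::1\])(?::\d+)?(?:\/|$)/i.test(baseUrl);
}

// Extract the gateway IPv4 address from `ip route show default` output,
// e.g. "default via 172.28.96.1 dev eth0 proto kernel".
function parseDefaultGateway(ipRouteOutput: string): string | null {
  const match = /^default via (\d{1,3}(?:\.\d{1,3}){3})\b/m.exec(ipRouteOutput);
  return match ? match[1] : null;
}

// Example: rewrite a loopback baseUrl to the gateway address (port kept as 11434).
const sampleRoute = "default via 172.28.96.1 dev eth0 proto kernel\n";
const gateway = parseDefaultGateway(sampleRoute);
const suggestedBaseUrl = gateway ? `http://${gateway}:11434` : null;
```

If `isLoopbackBaseUrl` returns true for your configured `baseUrl` and the two programs live on different sides of the WSL2 boundary, that is the first thing to change.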

Gotcha 3: The CLI vs API confusion

GitHub Issue #11283 documents this bizarre behavior: you configure Ollama as a remote API provider with a baseUrl. OpenClaw should make HTTP API calls. Instead, it tries to execute ollama run as a shell command on your local machine. This happens when OpenClaw's model routing falls back to a cloud model that then tries to "help" by calling Ollama via CLI.

Fix: Make sure your Ollama model is explicitly defined in the models.providers section with api: "ollama" and is listed in the models array. Don't rely on auto-discovery for remote Ollama.
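The condition in that fix can be checked mechanically. A minimal sketch, assuming the config shape used earlier in this guide and model references written as `provider/model-id`. `isExplicitOllamaModel`, the type names, and the remote address in the example are hypothetical.

```typescript
// Assumed config shape, matching the provider block shown earlier.
interface ProviderDef {
  baseUrl: string;
  api: string;
  models: { id: string }[];
}

interface RoutingConfig {
  models: { providers: { [name: string]: ProviderDef } };
}

// True only if the referenced model is listed under a provider with api "ollama".
function isExplicitOllamaModel(config: RoutingConfig, ref: string): boolean {
  const slash = ref.indexOf("/");
  if (slash < 0) return false;
  const providerName = ref.slice(0, slash);
  const modelId = ref.slice(slash + 1);
  const provider = config.models.providers[providerName];
  if (!provider || provider.api !== "ollama") return false;
  return provider.models.some((m) => m.id === modelId);
}

// Example: a remote Ollama box with one explicitly listed model.
const remoteConfig: RoutingConfig = {
  models: {
    providers: {
      ollama: {
        baseUrl: "http://192.168.1.50:11434", // hypothetical LAN address
        api: "ollama",
        models: [{ id: "qwen3:8b" }],
      },
    },
  },
};
```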

The honest cost comparison: Ollama vs cheap cloud providers

The appeal of Ollama is zero API costs. But "zero API costs" and "zero cost" are different things.

Running Ollama on hardware you own means electricity, hardware depreciation, and your time debugging issues. A Mac Mini M4 running 24/7 costs roughly $3-5/month in electricity. The machine itself costs $600+ and depreciates.

Meanwhile, cloud providers in 2026 are absurdly cheap:

- DeepSeek V3.2: $0.28/$0.42 per million input/output tokens. A full month of moderate agent usage: $3-8/month.

- Gemini 2.5 Flash free tier: 1,500 requests/day. $0/month for personal use.

- Claude Haiku 4.5: $1/$5 per million input/output tokens. Moderate usage: $5-10/month.

And critically: these cloud providers have working tool calling. Your agent can actually do things.
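To sanity-check figures like these against your own usage, the arithmetic is just tokens times price. A small sketch: the DeepSeek prices come from the list above, while the monthly workload of 10 million input and 2 million output tokens is a made-up example, not measured data.

```typescript
interface Pricing {
  inputPerMillion: number;  // USD per 1M input tokens
  outputPerMillion: number; // USD per 1M output tokens
}

// Monthly API cost in USD for a given token volume.
function monthlyTokenCost(inputTokens: number, outputTokens: number, p: Pricing): number {
  return (inputTokens / 1e6) * p.inputPerMillion + (outputTokens / 1e6) * p.outputPerMillion;
}

const deepseekV32: Pricing = { inputPerMillion: 0.28, outputPerMillion: 0.42 };

// Hypothetical workload: 10M input + 2M output tokens per month.
const deepseekMonthly = monthlyTokenCost(10_000_000, 2_000_000, deepseekV32);
console.log(deepseekMonthly.toFixed(2)); // about 3.64
```

Plug in your own token counts. The result is only as good as the usage estimate behind it.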

For the full breakdown of which cloud providers cost what for OpenClaw, our provider comparison covers five alternatives that cost 90% less than most people expect.

The cheapest model isn't the one with the lowest per-token price. It's the one that can do the job. An Ollama model that can chat but can't call tools isn't a cheaper agent. It's a more expensive chatbot.

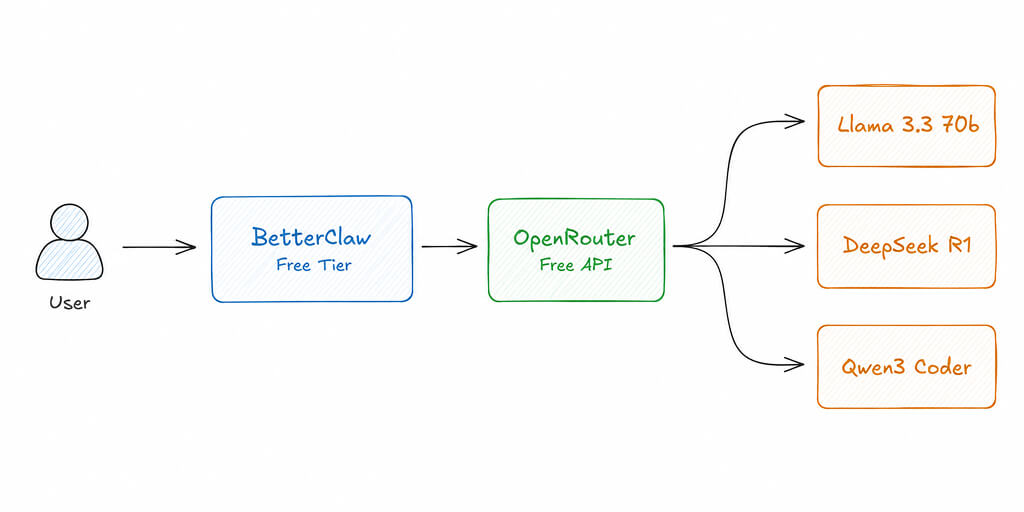

If you want tool calling that works, multi-channel support, and zero Ollama debugging, BetterClaw supports 28+ cloud providers with BYOK and zero configuration. $19/month per agent. 60-second deploy. Every model routes correctly because the streaming issue doesn't exist with cloud APIs.

When Ollama with OpenClaw genuinely makes sense

I'm not going to pretend Ollama is never the right choice. Three scenarios justify the setup.

Privacy-first deployments

If your data absolutely cannot leave your network, local models are the only option. Government, healthcare, legal, defense: these environments often have compliance requirements that rule out sending data to a third-party API. The tool calling limitation is real, but for conversational interaction with sensitive data, Ollama delivers complete data sovereignty.

Offline and air-gapped environments

No internet? No API calls. Ollama runs entirely locally. If you need an AI assistant in an environment without reliable connectivity, local models are it.

Hybrid heartbeat routing

Use Ollama for heartbeats (the 48 daily status checks that cost tokens on cloud providers) and a cloud model for everything else. Heartbeats don't require tool calling. They're simple status checks. Running them locally saves $4-15/month depending on your cloud model pricing.

    {
      "agent": {
        "model": {
          "primary": "anthropic/claude-sonnet-4-6",
          "heartbeat": "ollama/hermes-2-pro:latest"
        }
      }
    }
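The savings estimate depends heavily on how many tokens each heartbeat sends. A rough sketch of the arithmetic: the 48 checks per day come from the text above and $3 per million input tokens is the Claude Sonnet price quoted in the FAQ below, but the 3,000 input tokens per heartbeat is an assumption, and output tokens are ignored for simplicity.

```typescript
// Monthly cost in USD of running heartbeats on a cloud model (input tokens only).
function heartbeatMonthlyCost(
  heartbeatsPerDay: number,
  inputTokensEach: number,
  inputPricePerMillion: number,
  daysPerMonth: number = 30,
): number {
  const totalTokens = heartbeatsPerDay * daysPerMonth * inputTokensEach;
  return (totalTokens / 1e6) * inputPricePerMillion;
}

// 48 heartbeats/day, assumed 3,000 input tokens each, $3 per 1M input tokens.
const heartbeatEstimate = heartbeatMonthlyCost(48, 3000, 3);
console.log(heartbeatEstimate.toFixed(2)); // about 12.96
```

Under those assumptions the cloud heartbeats cost about $13 a month, which is what moving them to a local model would save.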

For the full model routing setup, our intelligent provider switching guide covers the config patterns.

Where this is heading

The streaming + tool calling bug will get fixed eventually. The proposed patch is clean. The community wants it. It's a matter of when, not if.

When it lands, the best local models (glm-4.7-flash, qwen3-coder-30b) will become genuinely useful for agent tasks. Tool calling will work. Skills will execute. The gap between local and cloud will narrow significantly for the subset of tasks that don't require frontier-level reasoning.

But "narrowing" isn't "closing." Cloud models like Claude Sonnet and GPT-4o will still outperform local models on complex multi-step reasoning, long-context accuracy, and prompt injection resistance for the foreseeable future. The hardware requirements for running competitive local models (25GB+ VRAM, 64GB+ RAM for larger models) put them out of reach for most users.

The practical future is hybrid. Cloud for the tasks that need it. Local for the tasks that don't. OpenClaw's model routing architecture already supports this. The tooling just needs to catch up.

For now, if you need an agent that can act (not just talk), cloud providers are the reliable path. If you need complete privacy for conversational AI, Ollama works today.

If you want an agent that works with any provider without debugging streaming protocols, give BetterClaw a try. $19/month per agent, BYOK with any cloud provider or combination. 60-second deploy. The tool calling just works because we handle the model integration layer. You build workflows instead of workarounds.

Frequently Asked Questions

Does OpenClaw work with Ollama local models?

Partially. Chat and conversation work correctly with Ollama models through OpenClaw. Tool calling (web search, file operations, shell commands, browser automation, skills) does not work due to a streaming protocol bug documented in GitHub Issue #5769. OpenClaw sends stream: true on all requests, but Ollama's streaming implementation drops tool call responses. Until this is patched, local models are limited to chat-only interactions.

How does Ollama compare to cloud providers for OpenClaw?

Ollama offers zero API costs and complete data privacy but lacks working tool calling in OpenClaw. Cloud providers (Claude Sonnet at $3/$15 and DeepSeek at $0.28/$0.42 per million input/output tokens, Gemini Flash on its free tier) have reliable tool calling, larger context windows, and better multi-step reasoning. For agent tasks that require actions (email, calendar, web search), cloud providers are significantly more capable. For private conversational AI, Ollama works well.

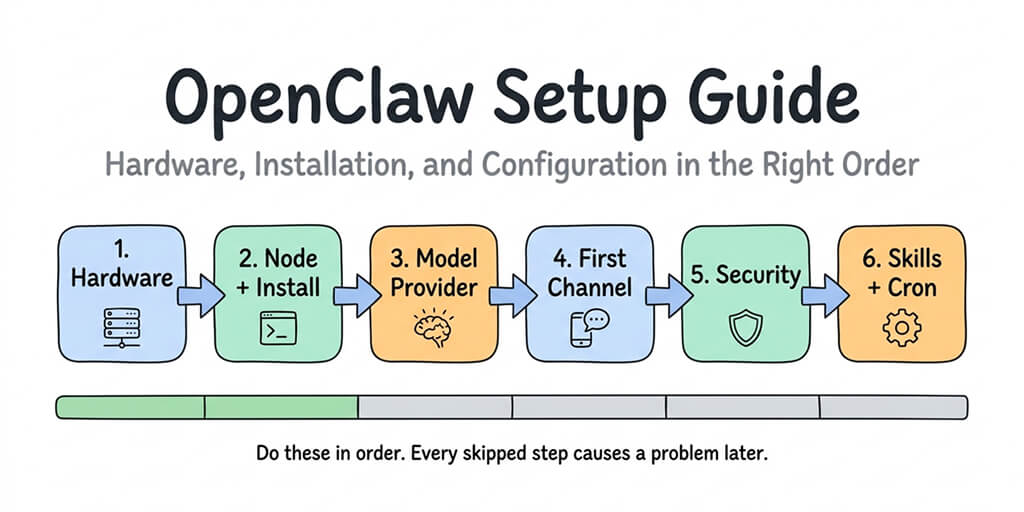

How do I set up Ollama with OpenClaw?

Install Ollama and pull your model (ollama pull qwen3:8b). Pre-load the model before starting OpenClaw to avoid discovery timeouts. Configure your ~/.openclaw/openclaw.json with the Ollama provider, setting contextWindow to at least 65536. Start the gateway and test. If on WSL2, use the actual network IP instead of 127.0.0.1. Expect chat to work and tool calling to fail.

Is running OpenClaw with Ollama cheaper than cloud APIs?

Not always. Ollama has zero token costs but requires dedicated hardware ($600+ Mac Mini or GPU-capable machine) and electricity ($3-5/month). DeepSeek V3.2 runs a full agent for $3-8/month via API. Gemini Flash has a free tier. When you factor in hardware cost, electricity, and the time debugging Ollama issues, cheap cloud providers often cost less overall. The exception: if you already have capable hardware and need complete data privacy.

Which Ollama models work best with OpenClaw?

For chat-only use: glm-4.7-flash (best quality, needs ~25GB VRAM), qwen3-coder-30b (strong for code, needs 24GB+ RAM), and hermes-2-pro or mistral:7b (Ollama's recommended tool calling models, will be first to work when the streaming fix lands). Avoid models under 8B parameters for agent tasks. Set context window to 64K+ minimum in your config.