I went from "this is the future" to "why is my bill $140" to "okay, now I get it." Here's what nobody tells you about living with an AI agent.

On day three, my OpenClaw agent told a customer that our return policy was 90 days. It's 30 days. The customer quoted the agent in a support ticket. My co-founder sent me a screenshot with the message: "Is this your AI?"

That was the moment I realized the difference between "my agent is running" and "my agent is running correctly." They're separated by about two weeks of SOUL.md refinement, three API bill shocks, and one security scare that made me seriously consider shutting the whole thing down.

Two months later, the agent handles roughly 80% of our customer support inquiries on WhatsApp. It checks order status, answers product questions, walks people through returns, and escalates anything it can't handle. It costs us about $28/month total (platform plus API). Before the agent, we were spending 15-20 hours per week on the same support volume.

Is OpenClaw worth it? Yes. But the path from "installed" to "actually useful" is rougher than the YouTube tutorials suggest. Here's the week-by-week reality.

Week 1: The excitement (and the first bill shock)

The initial setup took about four hours. VPS provisioning, OpenClaw installation, WhatsApp connection, and writing a basic SOUL.md that described our store's personality and policies. The agent was live and responding to real customers by the end of the afternoon.

The responses were... okay. Grammatically correct, generally accurate, but generic. The agent didn't know our specific policies because I'd written a vague SOUL.md ("be helpful, answer questions about our products"). It improvised. And improvised AI sometimes invents policies.

Here's the part that hurt: the first week's API bill was $47. I was running Claude Opus on every request because the default config doesn't optimize for cost. I didn't know about model routing. I didn't know heartbeats alone cost $4.32/month on Opus. I didn't know that maxContextTokens existed.

Our guide to cutting OpenClaw API costs by 80% covers the five changes that matter most. I wish I'd read it before week one.

Week 2: The SOUL.md crisis

The return policy incident forced me to rewrite the SOUL.md from scratch. The vague version was dangerous. The specific version took 45 minutes and included sections for: product knowledge boundaries (what the agent can and can't answer), escalation rules (when to stop trying and hand off to a human), financial guardrails (never promise refunds without human approval), conversation boundaries (when to end circular discussions), and error behavior (what to say when a tool fails).
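Here's a rough sketch of a check you could run on a draft before it goes live. It assumes the SOUL.md is plain markdown with one heading per section, and the section titles are hypothetical names lifted from the list above. OpenClaw doesn't mandate any of them, so treat this as a checklist helper rather than anything official.

```python
import re

# Hypothetical section titles, taken from the list above. OpenClaw does not
# require these names, so rename them to match your own SOUL.md.
REQUIRED_SECTIONS = [
    "Product knowledge boundaries",
    "Escalation rules",
    "Financial guardrails",
    "Conversation boundaries",
    "Error behavior",
]


def missing_sections(soul_md_text: str) -> list[str]:
    """Return the required sections that have no matching markdown heading."""
    headings = {
        match.group(1).strip().lower()
        for match in re.finditer(r"^#+\s+(.+)$", soul_md_text, flags=re.MULTILINE)
    }
    return [name for name in REQUIRED_SECTIONS if name.lower() not in headings]


# A deliberately incomplete draft, for illustration only.
draft = """# Store assistant
## Escalation rules
Hand off to a human after two failed attempts.
## Financial guardrails
Never promise a refund without human approval.
"""

print(missing_sections(draft))
```

A heading check only tells you a section exists. Whether the rules inside it are specific enough is still something you find out from real conversations.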

The difference was immediate. The agent went from confidently wrong to honestly limited. When it didn't know something, it said so and offered to connect the customer with a team member. That's not as impressive as a perfect answer, but it's infinitely better than a wrong one.

The lesson: a five-word SOUL.md produces a five-word-quality agent. A structured SOUL.md with specific behavioral rules produces an agent you can trust with customers. The 45 minutes spent on this document paid for itself within two days.

The SOUL.md isn't documentation. It's your agent's operating manual. Every minute you invest in it reduces the risk of the agent saying something you'll spend an hour fixing.

Week 3: The security scare

I installed a Shopify integration skill from ClawHub without reading the source code. It worked perfectly for three days. Then I noticed unfamiliar API calls on my Anthropic dashboard.

The skill was reading my config file (where API keys live in plaintext) and sending the data to an external server. It functioned as advertised while simultaneously exfiltrating my credentials.

I rotated all API keys immediately. Removed the skill. Spent an anxious evening checking every provider dashboard for unauthorized usage.

This was my introduction to the ClawHavoc reality: 824+ malicious skills were found on ClawHub, roughly 20% of the entire registry. Cisco independently discovered a skill performing data exfiltration without user awareness. CrowdStrike published a full enterprise security advisory. The security ecosystem around OpenClaw is genuinely concerning.

After that incident, I implemented a strict skill vetting process: check the publisher, read the source code, test in a sandbox for 48 hours, monitor API dashboards after installation. It adds 10-15 minutes per skill but prevents the kind of damage I narrowly avoided.
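For the "read the source code" step, a crude first pass can point you at the lines worth reading first. The sketch below is a heuristic scanner, not a security tool: the patterns are illustrative guesses, the directory layout of a skill is an assumption, and a clean result does not mean a skill is safe.

```python
import re
from pathlib import Path

# Heuristic patterns only. They flag lines worth reading, nothing more.
SUSPICIOUS_PATTERNS = {
    "outbound request": re.compile(r"https?://|\bfetch\(|\brequests\.|\bcurl\b"),
    "credential access": re.compile(r"api[_-]?key|\.env\b|secret|token", re.IGNORECASE),
    "dynamic execution": re.compile(r"\beval\(|\bexec\(|child_process"),
}


def scan_skill(skill_dir: str) -> list[tuple[str, int, str]]:
    """Return (file, line number, reason) for every line matching a pattern."""
    findings = []
    for path in sorted(Path(skill_dir).rglob("*")):
        if not path.is_file():
            continue
        text = path.read_text(encoding="utf-8", errors="ignore")
        for line_no, line in enumerate(text.splitlines(), start=1):
            for reason, pattern in SUSPICIOUS_PATTERNS.items():
                if pattern.search(line):
                    findings.append((str(path), line_no, reason))
    return findings


if __name__ == "__main__":
    import sys

    # Usage: python scan_skill.py path/to/downloaded-skill
    for file_name, line_no, reason in scan_skill(sys.argv[1]):
        print(f"{file_name}:{line_no}: {reason}")
```

A skill that reads a config file and makes an outbound request in the same module is exactly the combination that bit me, so those two findings together deserve a close look.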

Our security guide has the full OpenClaw incident timeline and mitigation checklist, covering everything from ClawHavoc to the CVE-2026-25253 vulnerability.

Week 4: The cost breakthrough

By the end of week three, I'd spent roughly $140 on API costs. Unsustainable for a small operation.

Here's what nobody tells you about OpenClaw costs: the default configuration is the most expensive configuration. Every tutorial gets you to a working agent. None of them optimize the agent's cost structure.

Three changes cut my weekly API bill by nearly 80%.

I switched the primary model from Opus ($15/$75 per million tokens) to Sonnet ($3/$15 per million tokens). The response quality was indistinguishable for 90% of customer interactions. Only complex research queries showed a difference.

I routed heartbeats to Haiku ($1/$5 per million tokens). These 48 daily status checks don't need a powerful model. They need a model that can say "I'm alive." Savings: $4+/month.
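The heartbeat arithmetic is easy to reproduce. The sketch below uses the per-million-token prices quoted in this post and 48 heartbeats a day. The token counts per heartbeat (100 in, 20 out) and the 30-day month are assumptions picked for illustration, so plug in your own numbers from the provider dashboard.

```python
# Prices in USD per million tokens (input, output), as quoted in this post.
PRICES = {
    "opus": (15.00, 75.00),
    "sonnet": (3.00, 15.00),
    "haiku": (1.00, 5.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request at the listed per-million-token prices."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Assumptions: 48 heartbeats a day, a 30-day month, and about 100 input plus
# 20 output tokens per heartbeat. The token counts are illustrative guesses.
HEARTBEATS_PER_MONTH = 48 * 30

for model in ("opus", "haiku"):
    monthly = HEARTBEATS_PER_MONTH * request_cost(model, 100, 20)
    print(f"{model}: ${monthly:.2f}/month")
```

Under those assumptions the Opus figure lands on $4.32/month and Haiku comes in under 30 cents, which is where the $4+ saving comes from.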

I set maxContextTokens to 6,000. This stopped the conversation buffer from growing indefinitely and sending the entire chat history with every request. Input token costs dropped by roughly 50%.
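To see why a context cap matters, here's the general idea in a few lines. This is not how OpenClaw implements maxContextTokens (the post doesn't cover the internals). It's a generic sketch of keeping only the most recent messages that fit a token budget, with a rough four-characters-per-token estimate standing in for a real tokenizer.

```python
def trim_history(messages: list[dict], max_tokens: int) -> list[dict]:
    """Keep the most recent messages whose combined size fits in max_tokens."""
    kept = []
    used = 0
    for message in reversed(messages):
        # Rough estimate: about 4 characters per token. A real tokenizer
        # would give exact counts; this is only an approximation.
        size = max(1, len(message["content"]) // 4)
        if used + size > max_tokens:
            break
        kept.append(message)
        used += size
    kept.reverse()  # restore chronological order
    return kept


# 100 messages of 400 characters each, so roughly 100 tokens per message.
history = [{"role": "user", "content": f"{i:03d}" + "x" * 397} for i in range(100)]
trimmed = trim_history(history, max_tokens=6000)
print(len(history), "->", len(trimmed), "messages sent with the next request")
```

Without a cap, every request carries the whole history, so input costs climb with each turn of a long conversation.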

Week 4 API cost: $9.80. Down from $47 in week one. Same agent. Same quality. Same customer satisfaction. About 79% less money.

Our model-by-model cost comparison covers seven common agent tasks with actual dollar figures across providers.

Month 2: When it actually started working

Something shifted around day 35. The SOUL.md was refined through dozens of real conversations. The model routing was optimized. The skill set was vetted and stable. The agent's persistent memory had accumulated enough context about our products, our customers, and our communication style to produce responses that felt genuinely on-brand.

Customers stopped asking "am I talking to a bot?" Not because the agent was pretending to be human (the SOUL.md explicitly tells it to identify itself as an AI assistant), but because the responses were specific, accurate, and helpful enough that the distinction stopped mattering.

The numbers at the end of month two:

The agent handled roughly 80% of incoming support queries without human intervention. Average response time: 8 seconds (compared to 15-30 minutes for human response during work hours, and no response outside work hours).

Three customers specifically complimented the "fast support." One wrote a positive review mentioning the instant WhatsApp response. Revenue attribution is fuzzy, but the midnight support conversations (which didn't exist before the agent) include at least $400 in orders that probably wouldn't have happened.

Monthly cost: $28 total ($19 managed platform, $9 API with routing). Monthly value: 15-20 hours of support work displaced, plus whatever the after-hours sales are worth.

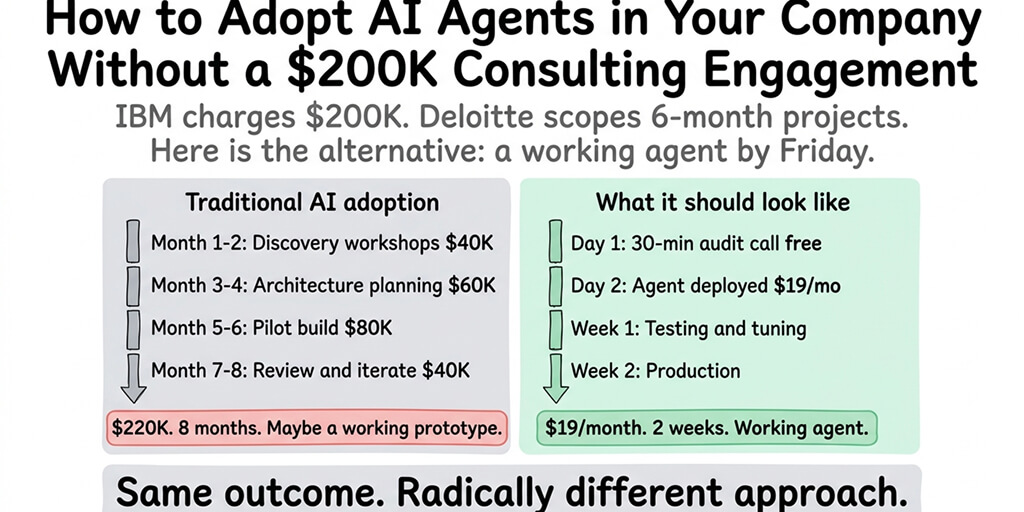

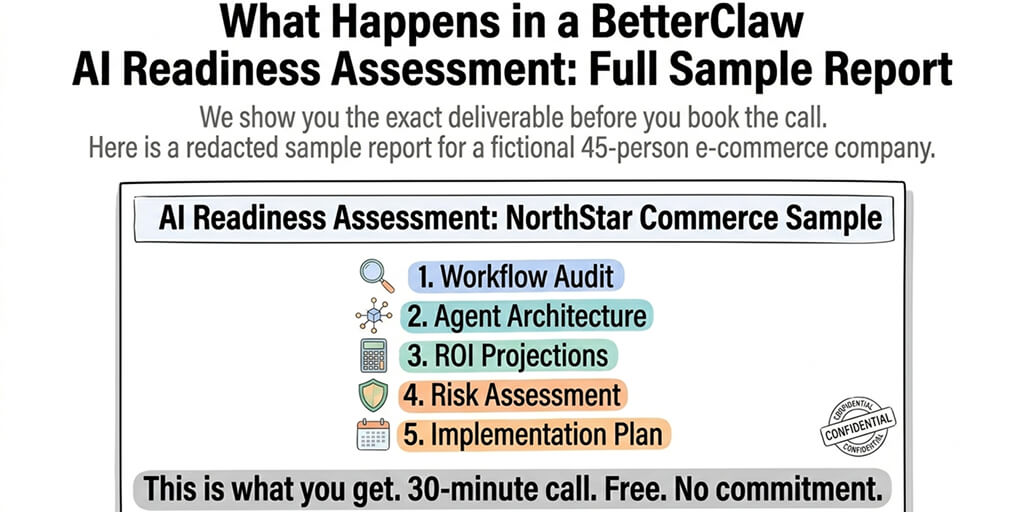

If managing the VPS, Docker, security, and updates feels like more time than you want to spend on infrastructure, Better Claw handles the deployment layer with zero configuration. $19/month per agent, BYOK. The model routing, security sandboxing, and health monitoring are built in. That's what I eventually switched to because I wanted to refine the agent's personality, not debug container networking.

What I'd do differently if I started over

Write the SOUL.md first. Before installation. Before the VPS. Before anything. Spend an hour defining your agent's personality, knowledge boundaries, escalation rules, and financial guardrails. This document determines everything.

Set up model routing on day one. Not after the first bill shock. Before the first conversation. Sonnet as primary, Haiku for heartbeats, DeepSeek as fallback. The cheapest provider options for OpenClaw include combinations that cost under $10/month.
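Conceptually, routing is just a lookup with a fallback. The sketch below shows the shape of the decision, not OpenClaw's actual config format: the task kinds, model labels, and function name are all hypothetical placeholders.

```python
# Hypothetical model labels and task kinds. Substitute the identifiers your
# providers and config actually use.
ROUTES = {
    "heartbeat": "haiku",
    "chat": "sonnet",
}
DEFAULT_MODEL = "sonnet"
FALLBACK_MODEL = "deepseek"


def pick_model(task_kind: str, unavailable: frozenset[str] = frozenset()) -> str:
    """Choose the routed model for a task, or the fallback if it is down."""
    model = ROUTES.get(task_kind, DEFAULT_MODEL)
    return FALLBACK_MODEL if model in unavailable else model


print(pick_model("heartbeat"))
print(pick_model("chat"))
print(pick_model("chat", unavailable=frozenset({"sonnet"})))
```

The point is that the expensive model is never the default for cheap work, and an outage degrades to a cheaper provider instead of a dead agent.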

Never install a ClawHub skill without reading the source code. Not once. Not even for popular skills with high download counts. The most-downloaded malicious skill had 14,285 downloads before removal. Popularity is not safety.

Start with one channel. I connected WhatsApp on day one and left it as the only channel for six weeks. This let me refine the agent based on real conversations without the complexity of managing multiple platforms simultaneously.

Set spending caps immediately. On every provider dashboard. At 2-3x expected usage. And set maxIterations to 10-15 in the config to prevent runaway loops that burn tokens.
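Both limits do the same job: they stop a loop before it gets expensive. Here's a generic sketch of that guard. It doesn't use OpenClaw's API. The `step` callable, the default limits, and the per-call cost are stand-ins for illustration.

```python
from typing import Callable, Optional


def run_with_limits(
    step: Callable[[], tuple[Optional[str], float]],
    max_iterations: int = 12,
    budget_usd: float = 0.50,
) -> tuple[Optional[str], int, float]:
    """Call step() until it returns an answer or a limit is reached.

    step() returns (answer or None, cost in USD of that call).
    The result is (answer or None, iterations used, total spent).
    """
    spent = 0.0
    iteration = 0
    for iteration in range(1, max_iterations + 1):
        answer, cost = step()
        spent += cost
        if answer is not None:
            return answer, iteration, spent
        if spent >= budget_usd:
            break  # budget exhausted before an answer arrived
    return None, iteration, spent


def never_finishes() -> tuple[Optional[str], float]:
    # Simulates a loop that keeps calling tools without reaching an answer.
    return None, 0.01


answer, iterations, spent = run_with_limits(never_finishes)
print(answer, iterations, round(spent, 2))
```

With a cap of 12, a stuck loop costs you twelve calls instead of however many it takes you to notice.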

The things that still frustrate me

OpenClaw isn't perfect. Here's what still bothers me after two months.

Updates break things regularly. OpenClaw releases multiple updates per week. Most are fine. Some change config behavior without clear documentation. I've had two instances where an update broke a cron job that had been working for weeks.

The 7,900+ open issues on GitHub are real. Some are feature requests. Many are bugs. The community is active, but the backlog is enormous, especially now that Peter Steinberger has left for OpenAI and the project is transitioning to an open-source foundation.

Memory management is fragile. The persistent memory system works well for the first few hundred conversations. After that, memory files grow, context gets noisy, and the agent occasionally surfaces irrelevant information from old conversations. Manual memory cleanup every few weeks helps but shouldn't be necessary. Our memory troubleshooting guide covers the specific fixes.

Security is a constant concern. The OpenClaw maintainer Shadow warned that "if you can't understand how to run a command line, this is far too dangerous of a project for you to use safely." Two months in, I agree. The framework is powerful. The security surface is wide. You need to actively maintain it.

The honest verdict: is OpenClaw worth it?

Yes, with conditions.

OpenClaw is worth it if you're willing to invest 2-3 weeks in configuration, refinement, and optimization before expecting reliable results. It's worth it if you set up model routing on day one (saves 70-80% on API costs). It's worth it if you take security seriously (skill vetting, spending caps, regular updates). And it's worth it if you write a real SOUL.md, not a five-word placeholder.

OpenClaw is not worth it if you expect a plug-and-play experience. It's not worth it if you install it, connect a model, and expect it to handle customer interactions without specific behavioral guidelines. And it's not worth it if you skip security configuration and treat ClawHub like a trusted app store.

The framework with 230,000+ GitHub stars earned those stars for a reason. It's genuinely capable of running an autonomous agent that handles real business tasks across real communication platforms. But the gap between "installed" and "useful" is wider than the hype suggests.

Two months in, my agent saves me 15-20 hours per week. It costs $28/month. It answers customers at 3 AM. It never takes a sick day. It gets better every week as I refine the SOUL.md based on real conversations.

Was it worth the rough first three weeks? Absolutely. Would I do it again? Yes, but I'd skip straight to the configuration I have now instead of learning every lesson the hard way.

If you want to skip the infrastructure lessons and get straight to the SOUL.md refinement (which is the part that actually matters), give Better Claw a try. $19/month per agent, BYOK with 28+ providers. 60-second deploy. Docker-sandboxed execution and AES-256 encryption included. You bring the SOUL.md. We bring the infrastructure. Your agent is live before you finish your coffee.

Frequently Asked Questions

Is OpenClaw worth it in 2026?

Yes, with proper configuration. OpenClaw (230K+ GitHub stars) is genuinely capable of running autonomous agents that handle customer support, scheduling, research, and other real business tasks across 15+ chat platforms. The value depends entirely on your configuration: model routing cuts API costs by 70-80%, a structured SOUL.md prevents embarrassing errors, and security practices protect against the 824+ malicious skills found on ClawHub. Expect 2-3 weeks of refinement before the agent is reliably useful.

How does OpenClaw compare to hiring a virtual assistant?

For routine tasks (order status, product questions, FAQ responses), OpenClaw costs $30-60/month total (platform plus API) versus $800-2,000/month for a part-time VA. OpenClaw operates 24/7 with 8-second response times. A VA works set hours with 15-30 minute response times. OpenClaw handles 70-80% of routine inquiries autonomously and escalates the rest to humans. The trade-off: OpenClaw requires 2-3 weeks of setup and ongoing SOUL.md refinement, while a VA works from day one with minimal training.

How long does it take for OpenClaw to become useful?

Expect 2-3 weeks from installation to reliable autonomous operation. Week one: basic setup, first bill shock, initial SOUL.md (4-6 hours of active work). Week two: SOUL.md refinement based on real conversations, model routing configuration (2-3 hours). Week three: skill vetting, security hardening, spending cap setup (2-3 hours). By week four, most agents handle 70-80% of their designated tasks autonomously with minimal intervention.

How much does OpenClaw actually cost per month?

With default configuration (Opus model, no routing): $80-150/month in API costs plus $12-29/month hosting. With optimized configuration (Sonnet primary, Haiku heartbeats, DeepSeek fallback, context limits): $8-20/month API plus $12-29/month hosting. Total optimized cost: $20-49/month. The viral "I Spent $178 on AI Agents in a Week" Medium post happened because of default settings and missing spending caps. Proper configuration prevents this entirely.

Is OpenClaw safe enough for customer-facing use?

With proper security configuration, yes. Without it, definitively no. Required protections: gateway bound to loopback, skill vetting before every installation (824+ malicious skills were found on ClawHub), spending caps on all providers, maxIterations limits (10-15), and regular updates (CVE-2026-25253 was a CVSS 8.8 vulnerability). CrowdStrike's advisory focuses on unprotected deployments. A properly secured agent with a well-structured SOUL.md handles customer interactions safely. Always include escalation rules for situations the agent shouldn't handle alone.