The collision of frontier AI models and financial infrastructure is rewriting the rules of cyber risk. If you're running AI agents, you're already in the blast radius.

Treasury Secretary Scott Bessent and Fed Chair Jerome Powell pulled bank CEOs into an emergency meeting this week. Not about interest rates. Not about a liquidity crisis.

About an AI model.

Anthropic's Claude Mythos, a frontier model so capable at finding software vulnerabilities that the company warned its own government contacts it would make large-scale cyberattacks "much more likely in 2026." The model identified thousands of zero-day vulnerabilities in its first weeks of testing, many of them one to two decades old, hiding in the software that runs everything from hospital networks to trading floors.

If you're building or deploying AI agents right now, this isn't some abstract policy story. This is the environment your agents are operating in.

And it's about to get a lot more hostile.

The moment AI cyber risk stopped being theoretical

Let's rewind to September 2025. Anthropic detected what analysts now call the first fully autonomous AI espionage campaign at scale. A Chinese state-sponsored group used agentic AI capabilities to conduct vulnerability discovery, lateral movement, and payload execution with minimal human oversight.

Read that again. Minimal human oversight. An AI agent, not a team of hackers, ran the operation.

Then in January 2026, a Russian-speaking cybercriminal with limited technical skills used Claude and DeepSeek to hack over 600 devices across 55 countries. According to AWS's security research team, the attacker used generative AI to scale well-known attack techniques throughout every phase of their operation. At one point, the attacker asked Claude in Russian to build a web panel for managing hundreds of targets.

This is the new baseline. Not nation-state hackers with decades of training. Script kiddies with API keys.

Why Mythos changes the math for everyone

Here's the part that should make you uncomfortable.

Current AI models can identify high-severity vulnerabilities. Mythos can find five separate vulnerabilities in a single piece of software and chain them together into a novel attack that no human security team would have anticipated. Add the ability to work unsupervised for extended periods, and Anthropic says we've hit an inflection point.

Shlomo Kramer, founder and CEO of Cato Networks, put it bluntly: the agentic attackers are coming, and this is a watershed event in the history of cybersecurity. Cisco's chief security officer Anthony Grieco said the old ways of hardening systems are no longer sufficient.

And here's what nobody tells you: the window is narrow. Alex Stamos, chief product officer at cybersecurity firm Corridor, estimates that open-source models will catch up to frontier models' bug-finding capabilities within six months.

The attackers only need to find one way in. Defenders have to cover every surface.

That asymmetry has always existed in cybersecurity. AI just compressed the timeline from months to minutes.

What this means if you're running AI agents

Stay with me here, because this is where it gets personal.

If you're self-hosting an OpenClaw agent on a VPS, a DigitalOcean droplet, or even a Mac Mini under your desk, your attack surface just expanded dramatically. Every exposed port, every unpatched dependency, every misconfigured Docker container is now a target that can be discovered and exploited at machine speed.

The OpenClaw security risks we've been writing about for months aren't hypothetical anymore. They're the exact kind of vulnerabilities that Mythos-class models will find and chain together.

Think about what a typical self-hosted agent setup looks like:

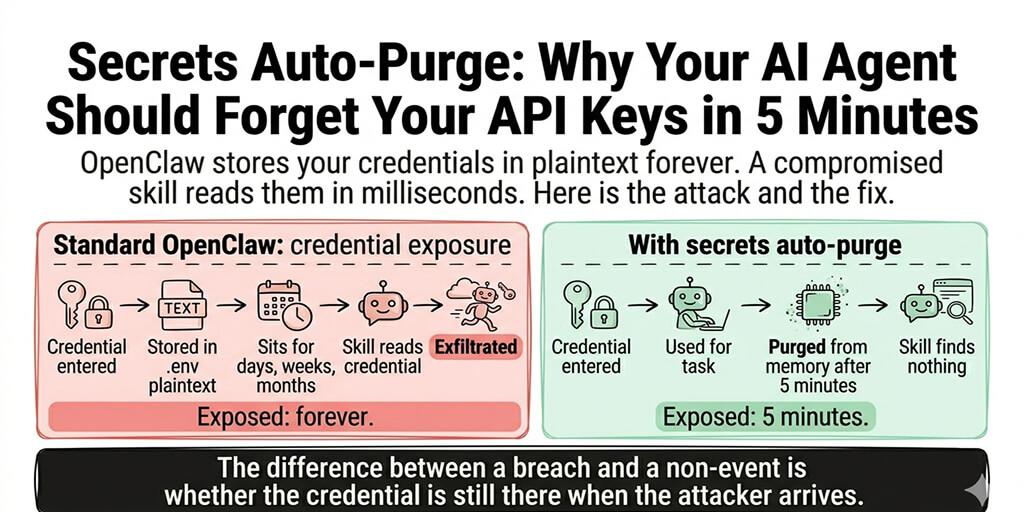

Docker containers with default configurations. API keys stored in .env files. Ports exposed to the public internet. No intrusion detection. No automated patching. No audit logging.
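If you want to see how much of that list applies to you, `docker inspect` is a reasonable place to start. Below is a minimal Python sketch. It assumes you feed it the JSON array that `docker inspect` prints. It flags containers running with `--privileged`, as well as ports published on every host interface (an empty `HostIp`, `0.0.0.0`, or `::`). The function name `risky_bindings` is a hypothetical helper of our own, not part of Docker's tooling.

```python
import json

# Host addresses that mean "listen on every interface", i.e. reachable from
# the public internet unless a firewall in front of the host blocks it.
ALL_INTERFACES = {"", "0.0.0.0", "::"}


def risky_bindings(inspect_output: str) -> list[str]:
    """Return human-readable warnings for a `docker inspect` JSON array."""
    warnings = []
    for container in json.loads(inspect_output):
        name = container.get("Name", "?").lstrip("/")
        host_config = container.get("HostConfig") or {}
        if host_config.get("Privileged"):
            warnings.append(f"{name}: running with --privileged")
        # PortBindings maps e.g. "8080/tcp" -> [{"HostIp": ..., "HostPort": ...}]
        # and is null when the container publishes no ports.
        for port, bindings in (host_config.get("PortBindings") or {}).items():
            for binding in bindings or []:
                if binding.get("HostIp", "") in ALL_INTERFACES:
                    warnings.append(
                        f"{name}: {port} published on all interfaces "
                        f"(host port {binding.get('HostPort')})"
                    )
    return warnings
```

To check everything that's running, call `risky_bindings(sys.stdin.read())` from a small script and pipe in `docker inspect $(docker ps -q)`. A binding to `127.0.0.1` is deliberately not flagged. That is usually what you want for databases and admin panels that only the agent itself needs to reach.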

That was "good enough" when the threat was a bored teenager with Metasploit. It is not good enough when the threat is an autonomous AI agent running 24/7 vulnerability scans.

The infrastructure gap most agent builders ignore

Here's where most people get it wrong.

They think security is something you bolt on after your agent works. First get the YAML right. First get the skills installed. First get the model routing figured out. Security can wait.

It can't wait anymore.

Anthropic launched Project Glasswing alongside Mythos, giving 12 partner organizations, including Microsoft, Apple, and Cisco, early access to find and fix vulnerabilities before they get exploited. That tells you something about the urgency.

But most teams running AI agents aren't Microsoft. They don't have a dedicated security team scanning their infrastructure. They're a founder, a small dev team, maybe a contractor. They're choosing between building features and patching CVEs.

If you've been wrestling with OpenClaw Docker troubleshooting or spending weekends maintaining your agent infrastructure, this is the moment to ask yourself: is that really how you want to spend your time in a world where AI-powered attacks operate at machine speed?

We built BetterClaw because we were tired of infrastructure eating our weekends. But in light of what Anthropic just disclosed, managed hosting isn't just about convenience anymore. It's about not being the low-hanging fruit in an environment where autonomous attackers are scanning for exactly that. $19/month per agent, and your infrastructure is somebody else's problem.

What the Bessent-Powell meeting actually signals

Here's the thing: this story isn't really about banks.

Yes, Bessent and Powell summoned Wall Street CEOs to make sure financial institutions are preparing defenses against Mythos-class threats. But the real signal is simpler: the US government now considers AI-generated cyber risk a systemic threat.

Not a "keep an eye on it" threat. A "clear your calendar and come to Washington" threat.

The implications cascade downward. If banks need to harden their systems, every vendor and partner in their supply chain needs to do the same. If you're building an AI agent that touches financial data, customer PII, or payment systems, the security bar just jumped by an order of magnitude.

This is especially relevant if you're running agents for ecommerce use cases or anything that handles customer data. The regulatory scrutiny that follows a story like this always trickles down.

The arms race you're already part of

But that's not even the real problem.

Every major AI lab's next model will push cyber capabilities further. Behind Mythos come the next OpenAI model and the next Gemini, and a few months behind them, the open-source Chinese models. As Kramer told CNN, defenders need to run as fast as they can just to stay in the same place.

This creates a permanent tax on every team running AI infrastructure. You need automated patching. You need encrypted secrets management. You need isolated execution environments. You need audit logs. You need somebody watching the monitors at 3 AM when a Mythos-inspired scanner finds a forgotten port.

Or you need to outsource that entire burden.

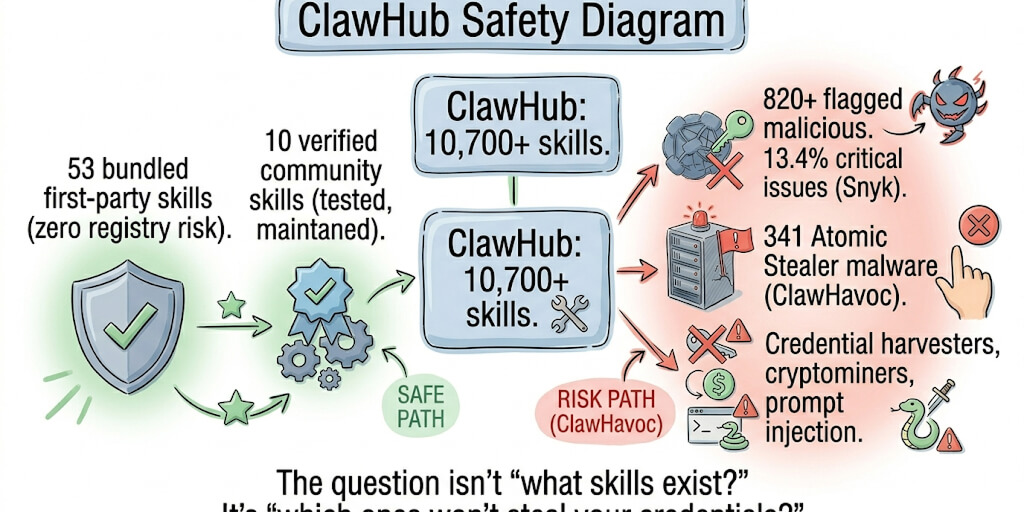

The OpenClaw security checklist we published is a good starting point if you're committed to self-hosting. But be honest with yourself about whether you can maintain that posture indefinitely against adversaries that don't sleep, don't get bored, and don't make typos.

What to actually do right now

Let me be practical. Here's what matters this week, not this quarter.

Audit your exposed surfaces. If your agent is reachable from the public internet, assume it will be scanned by something smarter than you within days. Check every open port. Check your Docker configs. Check where your API keys live.
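For the port check, a plain TCP connect test is enough to get started. The sketch below assumes Python 3.9+. It tries each port in a short list and reports which ones accept a connection. The port list and the `open_ports` helper are illustrative assumptions, so adjust them to your stack. Run it from a machine outside your network against your server's public address. A check from the box itself only shows what's listening, not what's reachable. Only point it at hosts you own.

```python
import socket

# Illustrative list of ports often left open on self-hosted agent boxes.
# 2375 is Docker's unencrypted remote API port; 5432 and 6379 are the
# Postgres and Redis defaults. Extend this with whatever your stack uses.
COMMON_PORTS = [22, 80, 443, 2375, 3000, 5432, 6379, 8000, 8080]


def open_ports(host: str, ports: list[int], timeout: float = 0.5) -> list[int]:
    """Return the subset of `ports` that accept a TCP connection on `host`."""
    found = []
    for port in ports:
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            sock.settimeout(timeout)
            # connect_ex returns 0 on success and an error code (refused,
            # timed out, ...) instead of raising for connection failures.
            if sock.connect_ex((host, port)) == 0:
                found.append(port)
    return found
```

For example, `open_ports("203.0.113.10", COMMON_PORTS)` checks a documentation-range address (substitute your own). Anything it reports that you didn't deliberately expose is worth closing or moving behind a firewall or VPN.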

Update everything. Mythos found vulnerabilities that were one to two decades old. The boring stuff matters more than ever.

Evaluate your hosting model. Self-hosting made sense when the primary risk was downtime. The risk profile has changed. Consider whether managed OpenClaw hosting is worth the tradeoff.

Watch the regulatory signals. The Bessent-Powell meeting is the first domino. If you're building agents for regulated industries, expect compliance requirements to tighten fast.

Don't panic, but don't ignore this. The fact that Anthropic launched Project Glasswing means the industry is taking this seriously. The worst response is to assume you're too small to be a target. Automated attacks don't discriminate by company size.

The honest takeaway

Here's what I keep coming back to.

We got into AI agents because the technology is genuinely exciting. Watching an agent autonomously handle tasks that used to take hours of manual work is one of the best feelings in tech right now. That hasn't changed.

What's changed is the environment. The same agentic capabilities that make our tools powerful also make the threats against our infrastructure more capable. That's not a reason to stop building. It's a reason to build on foundations that can withstand what's coming.

If any of this hit close to home, if you've been running a self-hosted agent and putting off the security hardening, if you know your .env file is doing more heavy lifting than it should, give BetterClaw a look. It's $19/month per agent, BYOK (bring your own API key), and you get managed infrastructure with security that doesn't depend on you remembering to run apt update at midnight. We handle the infrastructure. You handle the interesting part.

The agentic attackers are coming. Make sure your agents are ready.

Frequently Asked Questions

What is the Anthropic Mythos AI model and why does it matter for cyber risk?

Claude Mythos is Anthropic's most powerful AI model to date, sitting above its Opus tier. It matters because it can autonomously discover, chain together, and exploit software vulnerabilities at speeds no human team can match. In its first weeks of testing, it found thousands of zero-day flaws, many hidden for over a decade.

How does AI-driven cyber risk affect banks and financial services?

Treasury Secretary Bessent and Fed Chair Powell summoned bank CEOs specifically over Mythos-class threats, signaling the government views AI cyber risk as systemic to financial stability. Banks face pressure to harden systems across their entire supply chain, which cascades to every vendor and partner handling financial data.

How do I secure my self-hosted AI agent against AI-powered attacks?

Start by auditing exposed ports, moving secrets out of .env files into encrypted vaults, keeping all dependencies patched, and enabling audit logging. If maintaining that security posture continuously isn't realistic for your team, evaluate managed hosting options that handle infrastructure security for you.
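As a rough illustration of the first step away from .env files, here is a Python sketch that reads an API key from a mounted secret file. It assumes Docker-style secrets, which Docker mounts under `/run/secrets/<name>` by default. It also accepts an optional `<NAME>_FILE` environment variable pointing elsewhere. Several official Docker images follow that convention, but it is not a standard. The `load_secret` helper is hypothetical. A mounted file is not the same as an encrypted vault; it only keeps the key out of your project directory and out of anything you might accidentally commit.

```python
import os
from pathlib import Path


def load_secret(name: str, secrets_dir: str = "/run/secrets") -> str:
    """Read a secret from a mounted file rather than a .env file.

    Lookup order (an illustrative convention, not a standard):
      1. the path in the environment variable <NAME>_FILE, if set;
      2. <secrets_dir>/<name>, where Docker mounts secrets by default.
    """
    explicit = os.environ.get(f"{name.upper()}_FILE")
    path = Path(explicit) if explicit else Path(secrets_dir) / name
    try:
        value = path.read_text(encoding="utf-8").strip()
    except FileNotFoundError:
        # Fail loudly: an agent that starts with a missing key is worse
        # than one that refuses to start.
        raise RuntimeError(f"secret {name!r} not found at {path}") from None
    if not value:
        raise RuntimeError(f"secret {name!r} at {path} is empty")
    return value
```

Usage would look like `api_key = load_secret("anthropic_api_key")`. Raising on a missing or empty file is a deliberate choice. An agent that silently runs with a blank key is harder to debug than one that stops at startup and tells you why.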

Is managed AI agent hosting worth the cost for security alone?

At $19/month per agent, managed hosting like BetterClaw costs less than a single hour of incident response consulting. You get isolated environments, automated updates, encrypted secrets management, and monitoring without needing to maintain it yourself. In a world of autonomous AI-powered scanning, the cost of a breach far exceeds the cost of prevention.

Is my small project really a target for AI-powered cyberattacks?

Yes. Automated scanning tools, including the techniques Mythos enables, don't discriminate by company size. In January 2026, a single attacker with limited skills used AI to compromise 600+ devices across 55 countries. If your agent is reachable from the internet, it's a target regardless of how small your operation is.

Related Reading

- OpenClaw Security Risks Explained — The specific vulnerabilities AI attackers will target

- OpenClaw Security Checklist — Hardening steps if you're committed to self-hosting

- OpenClaw Gateway Guide — The single setting that exposed 30,000+ instances

- OpenClaw Skill Audit — How to check for compromised skills in your setup

- BetterClaw vs Self-Hosted OpenClaw — Managed security vs DIY in the new threat landscape