DeepSeek V4 on OpenClaw is a minefield of compatibility bugs right now. Here are the five errors you'll hit, sourced from GitHub issues filed between March and May 2026, and how to fix each one.

A user on the OpenClaw GitHub filed issue #77350 four days ago. After upgrading to version 2026.5.3-1, every request to DeepSeek V4 Pro through OpenRouter failed with:

400 reasoning_effort: Invalid option: expected one of "xhigh"|"high"|"medium"|"low"|"minimal"|"none"

The agent worked fine on 2026.4.10. Same config. Same model. Same API key. The upgrade broke it. And the error message pointed in the wrong direction: the user's config said "high" (a valid option), but the gateway was silently mapping it to "max" (an option OpenRouter rejects).

The config said one thing. The gateway sent another. The error blamed the user.

This is the current state of DeepSeek V4 on OpenClaw. The model works. The integration doesn't. Here are the five errors you'll hit, what actually causes them, and how to fix each one.

Error 1: "Provider Rejected the Request Schema or Tool Payload" (reasoning_effort Mismatch)

GitHub issues: #77350 (filed May 4, 2026) and #71160 (filed April 22, 2026).

What happens: OpenClaw 2026.5.3-1 introduced a new thinking-policy.ts for OpenRouter that exposes a "max" reasoning level for DeepSeek V4 Pro. The problem: OpenRouter doesn't accept "max." It accepts "xhigh," "high," "medium," "low," "minimal," and "none." The gateway maps your config value to "max" internally and sends it to OpenRouter. OpenRouter rejects it.

The error in your logs:

[agent/embedded] embedded run agent end: isError=true error=LLM request failed: provider rejected the request schema or tool payload. rawError=400 reasoning_effort: Invalid option

The fix: Downgrade to OpenClaw 2026.4.10 (where this worked), or wait for the patch. The issue author confirmed: "Switching to a non-DeepSeek model (or downgrading to 2026.4.10) makes it work again." If you must stay on 2026.5.3-1, set thinkingDefault to "none" in your config to bypass the reasoning_effort parameter entirely.
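To make the mismatch concrete, here is a minimal sketch of the check that would catch it before the request leaves your side, for example in a small proxy you control. The function name `clamp_reasoning_effort` is hypothetical, and mapping "max" down to "xhigh" is our assumption about the nearest accepted level. This is not OpenClaw's code.

```python
# Values OpenRouter accepts for reasoning_effort, taken from the 400 error text.
OPENROUTER_EFFORTS = ("none", "minimal", "low", "medium", "high", "xhigh")


def clamp_reasoning_effort(value: str) -> str:
    """Return a reasoning_effort value that OpenRouter will accept.

    Hypothetical helper: "max" is mapped to "xhigh" (an assumption, not a
    documented equivalence); anything else unknown is rejected loudly.
    """
    if value in OPENROUTER_EFFORTS:
        return value
    if value == "max":
        return "xhigh"
    raise ValueError(f"unsupported reasoning_effort: {value!r}")


if __name__ == "__main__":
    print(clamp_reasoning_effort("high"))
    print(clamp_reasoning_effort("max"))
```

Failing loudly on unknown values is deliberate: a silent remap is what made the original bug hard to diagnose.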

The pattern: OpenClaw ships updates that break specific model/provider combinations without testing all permutations. DeepSeek V4 through OpenRouter is the latest casualty. The fix is always the same: downgrade or wait.

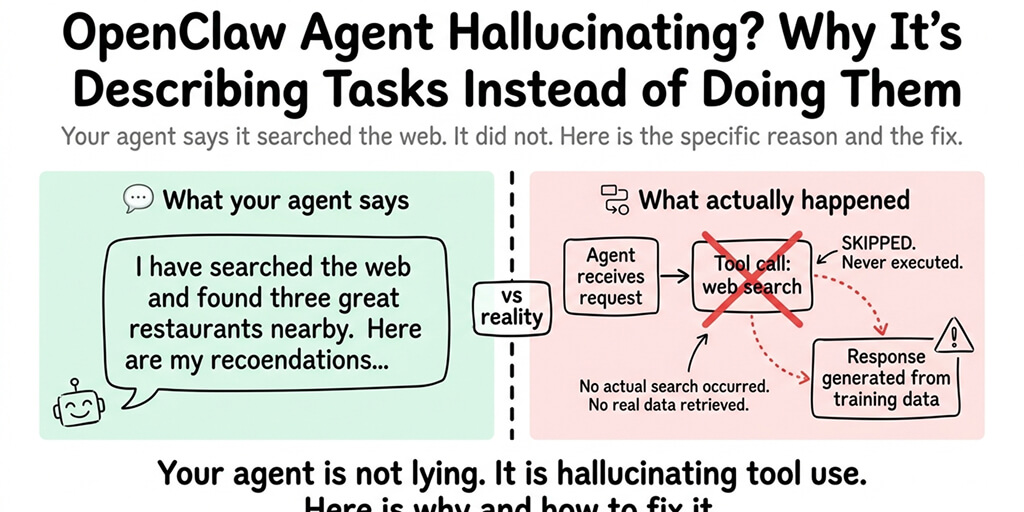

Error 2: "reasoning_content Must Be Passed Back" (Tool-Call Continuation Failure)

GitHub issue: #71160 (filed April 22, 2026).

What happens: When DeepSeek V4 Pro makes a tool call and the tool returns results, OpenClaw needs to pass the reasoning_content back in the continuation request. It doesn't. OpenRouter rejects the follow-up request with a 400 error.

The error in your logs:

rawError=400 The reasoning_content in the thinking mode must be passed back to the API

What this means practically: Your agent can call tools on the first turn. But any multi-step workflow (call tool, process result, call another tool) fails on the second step. The first message works. Follow-ups don't.

The fix: Set thinkingLevel to "off" for DeepSeek V4 Pro in your config. When thinking is disabled, the reasoning_content passback isn't required. You lose the reasoning chain visibility but the tool-calling workflow works.
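For readers who want to see what "passing reasoning_content back" looks like, the sketch below builds the follow-up message list for a tool-call continuation. It assumes an OpenAI-style chat message shape (`role`, `content`, `tool_calls`, `tool_call_id`), and the helper name `build_continuation` is hypothetical. The only field taken from the error message itself is `reasoning_content`.

```python
from typing import Any, Dict, List

Message = Dict[str, Any]


def build_continuation(
    history: List[Message],
    assistant_msg: Message,
    tool_results: Dict[str, str],
) -> List[Message]:
    """Return the message list for the request that follows a tool call.

    The assistant turn is copied with its reasoning_content intact, then one
    "tool" message is appended per tool call, keyed by the call id.
    """
    turn: Message = {
        "role": "assistant",
        "content": assistant_msg.get("content"),
        "tool_calls": assistant_msg["tool_calls"],
    }
    # This is the field the provider complains about when it is dropped.
    if assistant_msg.get("reasoning_content") is not None:
        turn["reasoning_content"] = assistant_msg["reasoning_content"]

    messages = list(history) + [turn]
    for call in assistant_msg["tool_calls"]:
        messages.append(
            {
                "role": "tool",
                "tool_call_id": call["id"],
                "content": tool_results[call["id"]],
            }
        )
    return messages
```

If a gateway rebuilds the assistant turn from only `content` and `tool_calls`, the first request succeeds and the continuation fails, which matches the symptom described above.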

For errors that aren't DeepSeek-specific, our openclaw-not-working guide covers the broader diagnosis.

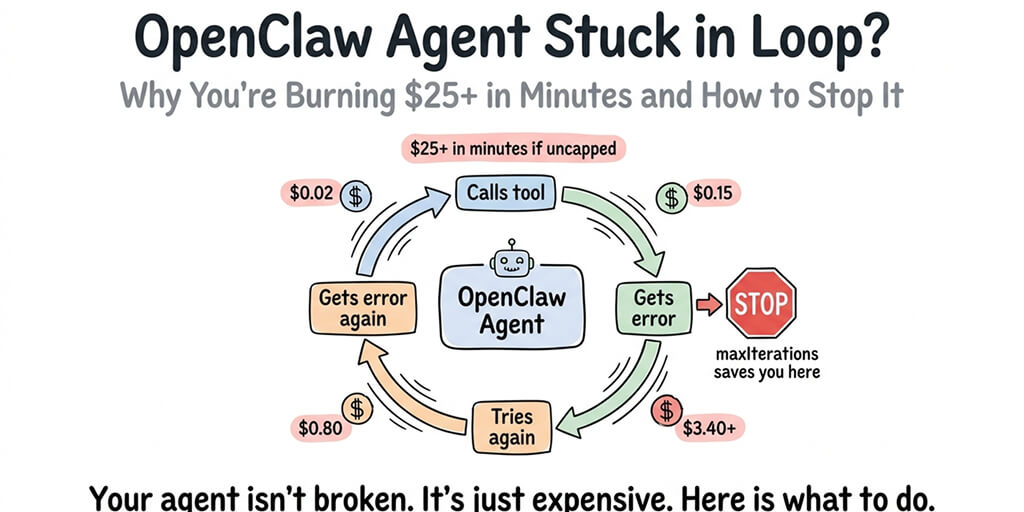

Error 3: DeepSeek 503 "Service Unavailable" (Provider Overload)

What happens: DeepSeek's API returns 503 during peak usage hours. This is a well-documented pattern going back to DeepSeek V3. The community describes it as "the DeepSeek tax": the cheapest frontier model comes with the least reliable uptime.

But that's not even the real problem.

The real problem (GitHub issues #20999 and #8112): OpenClaw doesn't treat 503 as a failover-eligible error. When your primary model returns 503, OpenClaw should switch to your fallback model. It doesn't. It retries the same model repeatedly and eventually stalls. The agent goes silent.

From the GitHub issue: "503 (and 502/504) are not treated as failover-eligible, so they fall through to message-based classification and often end up as 'other' -> no fallback."

The source code confirms it. In classifyFailoverReason(), there's no branch for server errors. The function checks for rate limits, billing, timeouts, and auth errors. 500/502/503/529 all return null, meaning "don't failover."

The fix: There isn't a clean fix in the current codebase. The workarounds: add a reverse proxy (like nginx) in front of your gateway that detects 503s and routes to a fallback endpoint, or manually switch models when you see repeated 503s in logs.
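If you script the fallback yourself, the logic is short. The sketch below shows the behavior the issues ask for: server errors count as failover-eligible, so the next model is tried instead of retrying the same one. Every name here (`ProviderError`, `is_failover_eligible`, `call_with_fallback`, the `send` callback) is hypothetical, and the status set is our assumption. It is not OpenClaw's classifyFailoverReason().

```python
from typing import Callable, Optional, Sequence

# Assumption: the server-side statuses worth failing over on.
FAILOVER_STATUSES = frozenset({500, 502, 503, 504, 529})


class ProviderError(Exception):
    """Hypothetical error carrying the HTTP status returned by a provider."""

    def __init__(self, status: int, message: str = "") -> None:
        super().__init__(f"{status} {message}".strip())
        self.status = status


def is_failover_eligible(status: int) -> bool:
    """Rate limits and server errors should move on to the next model."""
    return status == 429 or status in FAILOVER_STATUSES


def call_with_fallback(models: Sequence[str], send: Callable[[str], str]) -> str:
    """Try each model in order; switch models on failover-eligible errors."""
    last_error: Optional[ProviderError] = None
    for model in models:
        try:
            return send(model)
        except ProviderError as err:
            if not is_failover_eligible(err.status):
                raise  # e.g. a 400 is a request bug, not an outage
            last_error = err
    if last_error is None:
        raise ValueError("no models configured")
    raise last_error
```

A 400 is re-raised immediately on purpose: switching models would only hide a malformed request like the ones in Errors 1 and 2.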

Error 4: "LLM Request Timed Out" (DeepSeek Thinking Takes Too Long)

GitHub issues: #46049 (filed March 14, 2026) and #44936 (filed March 13, 2026).

What happens: DeepSeek V4 in thinking mode can take 30-90 seconds to start streaming a response. OpenClaw's internal HTTP timeout kills the request before DeepSeek finishes thinking. Your configured timeout values (even 86400 seconds) are ignored.

From issue #46049: "The request fails with a timeout error even though all configured timeout values are extremely large. OpenClaw appears to ignore configured timeout settings for LLM requests."

The fix: Set timeoutSeconds to at least 120 in agents.defaults. Some users report that the internal HTTP client has a hardcoded timeout that overrides the config value. If requests still time out after you raise the setting, you are hitting that bug, and the only workaround is to disable thinking mode.
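As a rough illustration of where that setting lives, the sketch below raises agents.defaults.timeoutSeconds to a floor of 120 on a config loaded as a plain dictionary. The helper name `ensure_min_timeout` is hypothetical, and we assume the config maps directly onto nested keys named `agents`, `defaults`, and `timeoutSeconds`.

```python
import copy
import json
from typing import Any, Dict


def ensure_min_timeout(config: Dict[str, Any], minimum: int = 120) -> Dict[str, Any]:
    """Return a copy of config with agents.defaults.timeoutSeconds >= minimum.

    Larger existing values are left alone; missing or non-numeric values are
    replaced with the minimum. The input dictionary is not modified.
    """
    updated = copy.deepcopy(config)
    defaults = updated.setdefault("agents", {}).setdefault("defaults", {})
    current = defaults.get("timeoutSeconds")
    if not isinstance(current, (int, float)) or current < minimum:
        defaults["timeoutSeconds"] = minimum
    return updated


if __name__ == "__main__":
    print(json.dumps(ensure_min_timeout({"agents": {"defaults": {"timeoutSeconds": 30}}}), indent=2))
```

This only covers the configured value. It does nothing about a hardcoded client timeout, which is the part users report as ignoring the config.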

If managing reasoning_effort mismatches, tool-call passback failures, 503 failover gaps, and timeout bugs sounds like more OpenClaw debugging than you signed up for, BetterClaw handles provider compatibility at the platform level. DeepSeek V4 (Flash and Pro) works from a dropdown. No reasoning_effort configuration. No passback bugs. No 503 stalls. Provider failover actually triggers on 503s. Free tier with 1 agent and BYOK. $19/month per agent for Pro.

Error 5: "deepseek-chat" Alias Routing to V4 Flash (Silent Model Change)

What happens: If your config still uses "deepseek-chat" as the model name, it now silently routes to DeepSeek V4 Flash (since April 24, 2026). V4 Flash has a different encoding format, different thinking mode behavior, and different response characteristics than the V3.2 you were using. The "deepseek-chat" alias will be deprecated entirely on July 24, 2026.

The fix: Update your model config to explicitly use "deepseek-v4-flash" or "deepseek-v4-pro". Stop using the legacy alias. The behavior is already different even though the name is the same. For best practices on model configuration, our model comparison guide covers explicit model naming.
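A quick way to find stale references is to scan the config for the alias. The sketch below walks a config loaded as nested dictionaries and lists, and reports every path whose value is "deepseek-chat", bare or with a provider prefix such as "deepseek/deepseek-chat". The function name and the example keys (`model`, `fallbacks`) are hypothetical, not OpenClaw's documented schema.

```python
from typing import Any, Iterator

LEGACY_ALIAS = "deepseek-chat"


def find_legacy_alias(node: Any, path: str = "") -> Iterator[str]:
    """Yield the dotted path of every string value that uses the legacy alias."""
    if isinstance(node, dict):
        for key, value in node.items():
            child = f"{path}.{key}" if path else str(key)
            yield from find_legacy_alias(value, child)
    elif isinstance(node, list):
        for index, value in enumerate(node):
            yield from find_legacy_alias(value, f"{path}[{index}]")
    elif isinstance(node, str):
        # Match the bare alias or a provider-prefixed form like "x/deepseek-chat".
        if node == LEGACY_ALIAS or node.endswith("/" + LEGACY_ALIAS):
            yield path


if __name__ == "__main__":
    example = {
        "agents": {
            "defaults": {
                "model": "deepseek/deepseek-chat",
                "fallbacks": ["deepseek-v4-pro", "deepseek-chat"],
            }
        }
    }
    for hit in find_legacy_alias(example):
        print(hit)
```

Replacing each hit with "deepseek-v4-flash" keeps the behavior you already have today, since that is where the alias now routes.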

The Pattern Behind All Five Errors

Here's the honest take.

DeepSeek V4 launched April 24. OpenClaw's compatibility layer is still catching up. Every error above is a mismatch between what OpenClaw sends and what DeepSeek (or OpenRouter) expects. The model works fine. The integration doesn't.

This is the hidden cost of self-hosted OpenClaw. Not the server. Not the Docker. The ongoing compatibility work when providers change their API contracts and OpenClaw's gateway hasn't been updated to match. Every new model release is a potential breaking change. Every OpenClaw update is a potential regression. The 7,900+ open issues on GitHub aren't all bugs, but they include dozens of provider-specific compatibility failures like these.

If you want DeepSeek V4 without the compatibility layer problems, give BetterClaw a try. Free tier with 1 agent and BYOK. $19/month per agent for Pro. 28+ providers. Each one tested on the platform before it's available. The provider compatibility is our problem. The conversations are yours.

Frequently Asked Questions

Why does OpenClaw keep getting 503 errors with DeepSeek?

DeepSeek's API returns 503 during peak usage hours (this has been documented since V3). The additional problem: OpenClaw doesn't treat 503 as a failover-eligible error (GitHub issues #20999 and #8112). Instead of switching to your fallback model, OpenClaw retries the same failing model and stalls. The fix: manually switch models, add a reverse proxy that handles 503 failover, or use a managed platform that implements proper failover.

What causes "provider rejected the request schema or tool payload" with DeepSeek V4?

Two causes. First (issue #77350): OpenClaw 2026.5.3-1 sends an invalid "max" reasoning_effort value to OpenRouter for DeepSeek V4 Pro. Fix: downgrade to 2026.4.10 or set thinkingDefault to "none". Second (issue #71160): OpenClaw doesn't pass reasoning_content back after tool calls, causing 400 errors on continuation requests. Fix: set thinkingLevel to "off" for tool-heavy workflows.

Why does DeepSeek V4 keep timing out on OpenClaw?

DeepSeek V4 in thinking mode can take 30-90 seconds before streaming starts. OpenClaw's internal HTTP timeout kills the request early. Even large configured timeout values (86400 seconds) are ignored due to a confirmed bug (issue #46049). Fix: set timeoutSeconds to 120+ and disable thinking mode if timeouts persist.

Is DeepSeek V4 reliable enough for production OpenClaw agents?

V4 Flash is more reliable than V4 Pro (lower latency, fewer 503s). Use V4 Flash for routine tasks and reserve V4 Pro for complex reasoning. Always configure a fallback model (Claude Sonnet, GPT-5.5) because 503s will happen during peak hours and OpenClaw's failover doesn't catch them. On BetterClaw, provider failover is automatic.

Should I use DeepSeek through OpenRouter or direct API with OpenClaw?

Direct API (api.deepseek.com) avoids the OpenRouter-specific reasoning_effort and reasoning_content passback bugs (issues #77350, #71160). OpenRouter adds compatibility issues on top of DeepSeek's own 503 problems. If you must use OpenRouter, stay on OpenClaw 2026.4.10 until the reasoning_effort regression is patched. On BetterClaw, the provider routing is handled by the platform.