Three frontier models launched within eight days of each other. All claim agent supremacy. We tested them on real OpenClaw workflows so you don't have to burn $200 finding out.

Between April 16 and April 24, 2026, three frontier AI models dropped within eight days of each other. Claude Opus 4.7 on April 16. GPT-5.5 "Spud" on April 23. DeepSeek V4 Preview on April 24.

The OpenClaw Discord went from "which model should I use" to "which THREE models should I use" overnight. Community members started reporting wildly different results depending on which model they tested, which tasks they ran, and whether they'd configured their agents for the new tokenizers and pricing structures.

Here's what we found after testing all three on real agent workflows: customer support, email drafting, web research, multi-step task planning, and tool calling. Not benchmarks. Real work.

The Pricing Reality (This Is Where It Gets Interesting)

Before anything else, the money.

DeepSeek V4 Pro: $1.74/$3.48 per million input/output tokens at list price. Currently 75% off until May 31, 2026: $0.435/$0.87 per million tokens. That's roughly 11x cheaper than Claude Opus 4.7 on input and 29x cheaper on output during the promo.

DeepSeek V4 Flash: $0.14/$0.28 per million tokens. That's 35x cheaper than Opus 4.7 on input. Not a typo.

Claude Opus 4.7: $5/$25 per million tokens. Same list price as Opus 4.6, but the new tokenizer counts up to 35% more tokens for the same text. Effective cost increase: 12-35% depending on content.
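The tokenizer effect is easy to work out by hand. Here is a minimal sketch, assuming the inflation applies evenly to input and output; the 12% and 35% figures are the ones quoted above, and the function name is purely illustrative.

```python
def effective_price(list_price_per_m: float, token_inflation: float) -> float:
    """Price per million old-tokenizer tokens once the extra tokens are counted."""
    return list_price_per_m * (1.0 + token_inflation)

# List prices quoted in the article, in $ per million tokens.
OPUS_INPUT, OPUS_OUTPUT = 5.00, 25.00

for inflation in (0.12, 0.35):
    eff_in = effective_price(OPUS_INPUT, inflation)
    eff_out = effective_price(OPUS_OUTPUT, inflation)
    print(f"+{inflation:.0%} tokens: ${eff_in:.2f} in / ${eff_out:.2f} out")
```

At the top of the range, Opus 4.7 behaves like a $6.75/$33.75 model for the same text.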

GPT-5.5: $5/$30 per million tokens. Doubled from GPT-5.4's $2.50/$15. OpenAI claims the model uses fewer tokens per task, but for OpenClaw agents where the framework controls the prompt structure, the per-token pricing is what matters.

| Model | Input ($/M tokens) | Output ($/M tokens) | Monthly est. (50 msgs/day, optimized) |
|---|---|---|---|
| DeepSeek V4 Flash | $0.14 | $0.28 | $1-3 |
| DeepSeek V4 Pro (promo) | $0.44 | $0.87 | $3-8 |
| Claude Opus 4.7 | $5.00 | $25.00 | $20-35 |
| GPT-5.5 | $5.00 | $30.00 | $25-40 |
| Claude Sonnet 4.6 | $3.00 | $15.00 | $10-20 |

The pricing gap is not incremental. It's structural. DeepSeek V4 Flash costs roughly 100x less per output token than GPT-5.5 ($0.28 vs $30). Even V4 Pro at list price costs about 7x less than Opus 4.7 on output ($3.48 vs $25). The question isn't whether DeepSeek is cheaper. It's whether the quality difference justifies the 10-100x price premium.
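If you want to check the multiples yourself, here is a small sketch using the prices quoted in this article. The dictionary keys are illustrative labels, not provider model IDs.

```python
# (input, output) prices in $ per million tokens, as quoted in the article.
PRICES = {
    "deepseek-v4-flash": (0.14, 0.28),
    "deepseek-v4-pro-promo": (0.435, 0.87),
    "deepseek-v4-pro-list": (1.74, 3.48),
    "claude-opus-4.7": (5.00, 25.00),
    "gpt-5.5": (5.00, 30.00),
}

def price_ratio(expensive: str, cheap: str) -> tuple[float, float]:
    """Return (input multiple, output multiple) of `expensive` over `cheap`."""
    exp_in, exp_out = PRICES[expensive]
    cheap_in, cheap_out = PRICES[cheap]
    return exp_in / cheap_in, exp_out / cheap_out

for expensive, cheap in [
    ("claude-opus-4.7", "deepseek-v4-pro-promo"),
    ("claude-opus-4.7", "deepseek-v4-pro-list"),
    ("gpt-5.5", "deepseek-v4-flash"),
]:
    in_x, out_x = price_ratio(expensive, cheap)
    print(f"{expensive} vs {cheap}: {in_x:.1f}x input, {out_x:.1f}x output")
```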

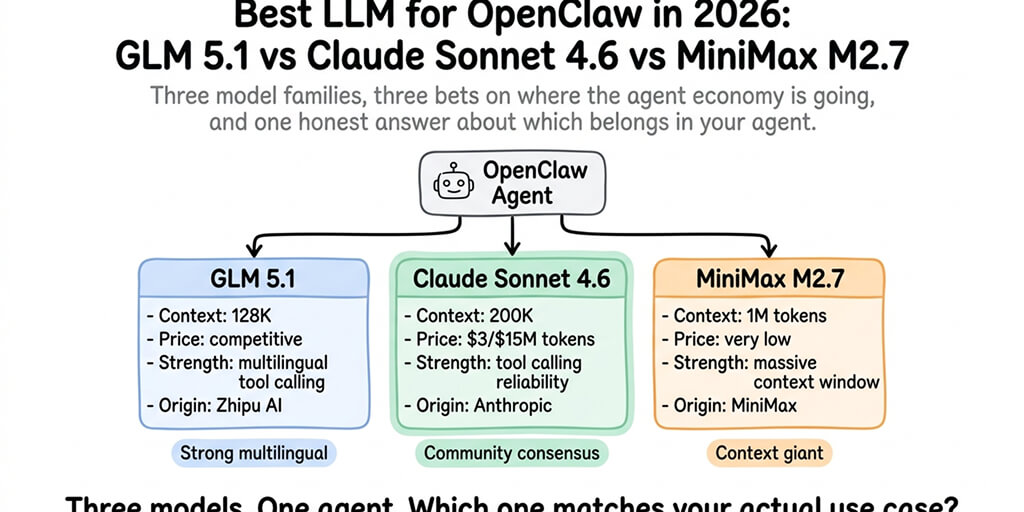

For the complete model comparison with provider options, our model comparison guide covers each model by task type and cost tier.

Claude Opus 4.7: Still the Quality Leader (With a Tax)

Where it wins: Instruction following and self-verification.

Opus 4.7 does something the other two models don't: it verifies its own outputs before reporting back. Vercel reports it "does proofs on systems code before starting work." On multi-step agent tasks where the agent needs to plan, execute, check, and correct, Opus 4.7 catches its own mistakes at a rate previous models didn't.

On real agent workflows: Customer support responses were the most accurate of the three. Email drafts required the least editing. Research tasks produced better-organized output with clearer source attribution. The quality lead is consistent, not dramatic, but measurable.

The catch: The new tokenizer adds 12-35% more tokens for the same text. And requests that set temperature, top_p, or top_k to non-default values now return 400 errors. If your OpenClaw config sets any of these parameters, Opus 4.7 breaks your agent until you remove them.
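One way to guard against the 400s is to strip the sampling parameters before a request goes out. This is a rough sketch, not OpenClaw's actual config schema: the request dict shape, the model-name check, and the function name are all assumptions for illustration.

```python
# Parameters the article says Opus 4.7 rejects when set to non-default values.
REJECTED_SAMPLING_PARAMS = ("temperature", "top_p", "top_k")

def strip_sampling_params(request: dict, model: str) -> dict:
    """Return a copy of `request` without sampling params when targeting Opus 4.7."""
    if "opus-4.7" not in model:  # hypothetical model-name check
        return dict(request)
    return {k: v for k, v in request.items() if k not in REJECTED_SAMPLING_PARAMS}

# Hypothetical request settings carried over from an older config.
request = {"max_tokens": 1024, "temperature": 0.2, "top_p": 0.9}
print(strip_sampling_params(request, "claude-opus-4.7"))  # {'max_tokens': 1024}
```

Requests for other models pass through unchanged, so the same config can serve a multi-model setup.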

Best for: Agents handling complex, open-ended tasks where getting it right on the first try saves time and money. Legal review, technical writing, research synthesis, high-stakes customer interactions.

GPT-5.5 "Spud": Strongest Tool Calling, Highest Cost

Where it wins: Multi-tool orchestration.

GPT-5.5 handles complex tool chains better than the other two. When an agent needs to call a web search tool, process the results, call a calendar API, format the output, and send it to Slack, GPT-5.5 manages the sequence more reliably. OpenAI has invested years in structured function calling, and it shows.

On real agent workflows: Tool-heavy tasks (calendar management, multi-API data aggregation, file processing pipelines) ran with fewer errors. The model is better at deciding which tool to call next without explicit routing instructions.

The catch: Doubled pricing ($5/$30 vs GPT-5.4's $2.50/$15). The output cost is the highest of all three models. For agents that generate long responses (support conversations, report generation), the output token cost adds up fast. Also: the model has a documented fixation on inserting fantasy creatures (goblins, gremlins, trolls) into some responses, traced to a reinforcement learning bug that OpenAI is still patching.

Best for: Agents that rely heavily on multi-tool workflows. CRM integrations, multi-API data collection, complex scheduling, file processing chains.

DeepSeek V4: The Open-Weight Disruptor

Where it wins: Cost-per-quality ratio. By a wide margin.

DeepSeek V4 Pro posts 80.6% on SWE-bench Verified. That's below Opus 4.7's 87.6% but above GPT-5.4's scores and competitive with Sonnet 4.6. At $0.44/$0.87 per million tokens (promo pricing), the quality-adjusted cost is the best available.
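One crude way to put a number on "quality-adjusted cost" is output price divided by benchmark score. This metric is our own illustration, not an industry standard, and a single benchmark is a narrow proxy for agent quality. The figures are the ones quoted in this article.

```python
# label: (output $ per million tokens, SWE-bench Verified %), from the article.
MODELS = {
    "deepseek-v4-pro-promo": (0.87, 80.6),
    "claude-opus-4.7": (25.00, 87.6),
}

def dollars_per_point(output_price: float, score_pct: float) -> float:
    """Output price divided by benchmark score; lower means more benchmark per dollar."""
    return output_price / score_pct

for label, (price, score) in MODELS.items():
    print(f"{label}: ${dollars_per_point(price, score):.4f} per SWE-bench point")
```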

On real agent workflows: Routine tasks (support Q&A, email drafting, calendar management, daily briefings) were indistinguishable from Claude in output quality. The 90% quality at 10% cost rule from DeepSeek V3 still holds with V4. Complex multi-step reasoning showed a noticeable gap versus Opus 4.7, but the gap has narrowed significantly from V3.

V4 Flash at $0.14/$0.28 is the community's default for heartbeat routing, simple Q&A, and high-volume tasks where cost matters more than peak quality.

The catch: DeepSeek is a Chinese company. Data processed through DeepSeek's direct API is subject to Chinese data governance. If that's a concern, V4 Pro is also available from providers running the open weights on non-Chinese infrastructure: OpenRouter ($0.435/$0.87), Together.ai, Fireworks (132.8 tokens/s), and others.

The context window: 1 million tokens native on both V4 Flash and V4 Pro. Same as Opus 4.7 and GPT-5.5. Context window parity means the model choice is now about quality and cost, not capacity.

Best for: Routine agent tasks at scale. Budget-conscious deployments. Teams running 5+ agents where API costs need to stay under $50/month total. Heartbeat routing. Fallback model when primary providers hit rate limits.
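The fallback pattern can be sketched in a few lines. Everything here is hypothetical: `RateLimitError` stands in for whatever your provider client raises on an HTTP 429, and the two provider functions are stubs for demonstration.

```python
class RateLimitError(Exception):
    """Stand-in for the exception a provider client raises on HTTP 429."""

def call_with_fallback(prompt, providers):
    """Try each (name, call) pair in order; move on only when one is rate limited."""
    last_error = None
    for name, call in providers:
        try:
            return name, call(prompt)
        except RateLimitError as err:
            last_error = err
    raise RuntimeError("all providers were rate limited") from last_error

# Hypothetical provider stubs, for demonstration only.
def primary(prompt):
    raise RateLimitError("429 from primary")

def deepseek_fallback(prompt):
    return f"[fallback] {prompt}"

chain = [("primary", primary), ("deepseek-v4-flash", deepseek_fallback)]
print(call_with_fallback("Summarize today's inbox", chain))
```

Only rate-limit errors trigger the fallback here; other failures still surface, which is usually what you want while debugging an agent.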

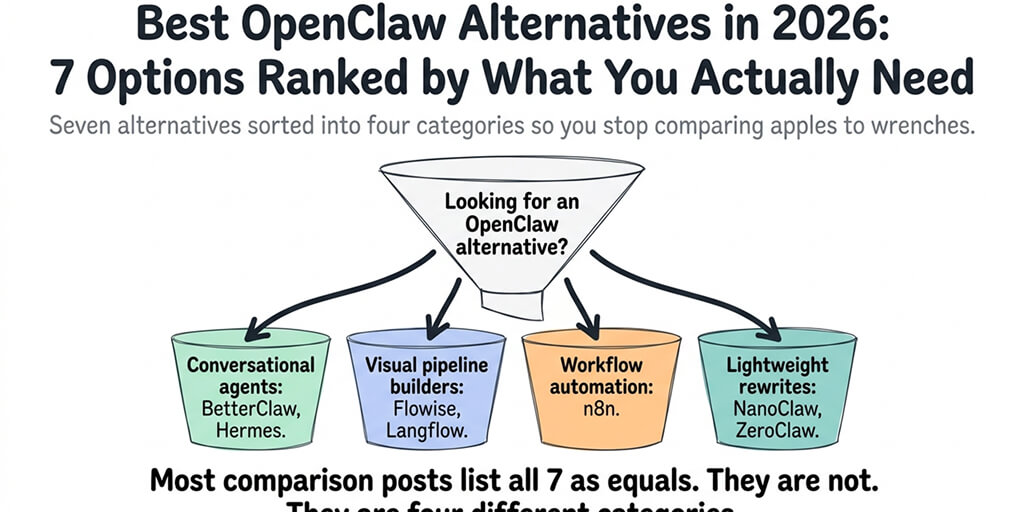

If managing three different model providers, API keys, tokenizer differences, and pricing tiers sounds like more configuration than you want, BetterClaw supports all three from a dropdown. Switch between DeepSeek V4, Opus 4.7, GPT-5.5, and 25+ other providers in 10 seconds. Smart context management reduces token costs on every model. Model routing by task type is configured in the dashboard, not in YAML files. Free tier with 1 agent and BYOK. $19/month per agent for Pro.

The Model Routing Strategy That Wins (Use All Three)

Here's what nobody tells you about choosing between these three models.

You don't choose one. You use all three.

The smartest configuration routes different task types to different models:

- Claude Opus 4.7 for complex reasoning, research synthesis, and high-stakes customer interactions. Quality matters most here. Cost is secondary.

- GPT-5.5 for tool-heavy workflows that chain multiple APIs. Function calling reliability matters more than per-token cost.

- DeepSeek V4 Flash for heartbeats, routine Q&A, FAQ responses, and any task where the response follows a predictable pattern.

Monthly cost with routing: $8-15/month for a moderate-use agent. Compared to $25-40/month on GPT-5.5-only or $20-35/month on Opus 4.7-only.
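The routing above boils down to a lookup table. Here is a minimal sketch; the task-type names, model labels, and the cheapest-model default are assumptions for illustration, not OpenClaw or BetterClaw configuration keys.

```python
# Task type -> model label (labels are illustrative, not provider model IDs).
ROUTES = {
    "complex_reasoning": "claude-opus-4.7",
    "research_synthesis": "claude-opus-4.7",
    "high_stakes_support": "claude-opus-4.7",
    "tool_chain": "gpt-5.5",
    "heartbeat": "deepseek-v4-flash",
    "routine_qa": "deepseek-v4-flash",
    "faq": "deepseek-v4-flash",
}

# Assumption: unknown task types go to the cheapest model.
DEFAULT_MODEL = "deepseek-v4-flash"

def route(task_type: str) -> str:
    """Pick a model label for a task type, falling back to the default."""
    return ROUTES.get(task_type, DEFAULT_MODEL)

for task in ("tool_chain", "heartbeat", "research_synthesis", "unknown_task"):
    print(f"{task} -> {route(task)}")
```

Whether unknown tasks should default to the cheapest or the strongest model is a judgment call; defaulting cheap keeps costs predictable, while defaulting premium protects quality.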

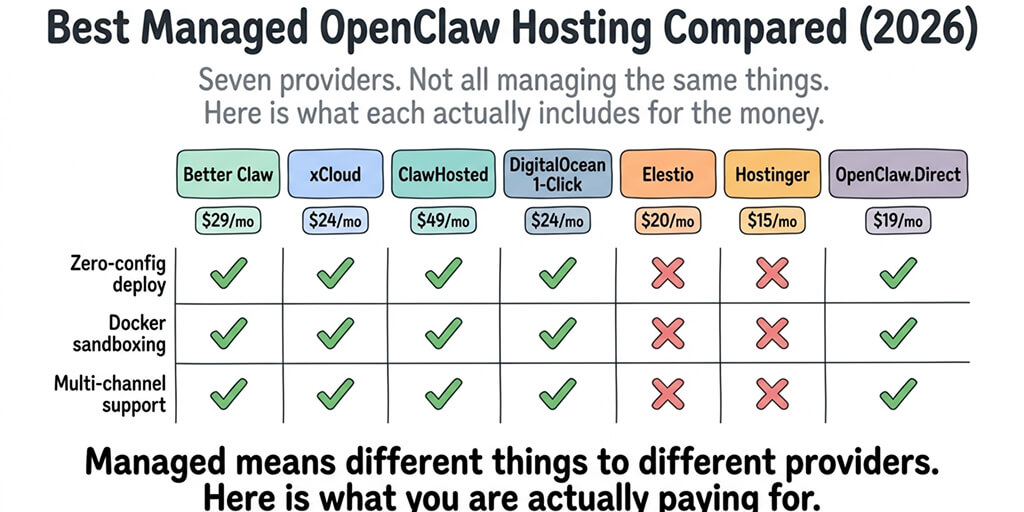

For the cheapest provider configurations, our provider guide covers the exact routing setup.

The Benchmark Summary (For the Number Crunchers)

| Benchmark | Claude Opus 4.7 | GPT-5.5 | DeepSeek V4 Pro |
|---|---|---|---|
| SWE-bench Verified | 87.6% | ~85% | 80.6% |
| Terminal-Bench 2.0 | 69.4% | 82.7% | ~65% |
| GPQA Diamond | 94.2% | ~92% | 90.1% |
| Finance Agent | 64.4% | ~60% | 62.0% |
| Context window | 1M | 1M | 1M |
| Open weight | No | No | Yes (MIT) |

The pattern: Opus 4.7 leads on coding and reasoning. GPT-5.5 leads on terminal/computer use. DeepSeek V4 Pro is competitive on everything at a fraction of the cost. All three have 1M context windows. Only DeepSeek is open-weight.

The Real Takeaway (What Changed in April 2026)

Here's the honest take.

April 2026 was the month the AI model market split into two tiers.

- Tier 1 (Opus 4.7, GPT-5.5): $5+ per million input tokens. Best quality. Closed-weight.

- Tier 2 (DeepSeek V4): $0.14-1.74 per million input tokens. 85-95% of the quality. Open-weight. Self-hostable.

For most OpenClaw agent tasks, the quality gap between tiers doesn't justify the 10-100x price gap. For the 20% of tasks where quality is critical (legal, medical, high-stakes customer-facing), the premium models are worth the premium. For everything else, they're not.
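To see what a two-tier split does to the average price, here is a back-of-the-envelope sketch. It assumes the 20% of critical tasks also account for 20% of output tokens, which may not hold for your workload. The prices are the output rates quoted in this article.

```python
def blended_price(premium_price: float, budget_price: float, premium_share: float) -> float:
    """Average $ per million tokens when `premium_share` of tokens go to the premium model."""
    if not 0.0 <= premium_share <= 1.0:
        raise ValueError("premium_share must be between 0 and 1")
    return premium_share * premium_price + (1.0 - premium_share) * budget_price

# Output prices from the article: Opus 4.7 at $25/M, DeepSeek V4 Flash at $0.28/M.
blended = blended_price(25.00, 0.28, premium_share=0.20)
print(f"Blended output price: ${blended:.2f} per million tokens")
```

Under that assumption, almost all of the blended cost comes from the premium share, so the routing threshold matters more than which budget model you pick.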

The winners are the teams that use both tiers, routing tasks to the right model instead of paying premium prices for routine work.

If you want multi-model routing across all three (plus 25+ others) without managing separate API configurations, give BetterClaw a try. Free tier with 1 agent and BYOK. $19/month per agent for Pro. 60-second deploy. Switch models from a dropdown. Smart context management keeps costs low on every model. The model market split into two tiers. Your agent should use both.

Frequently Asked Questions

What is the best AI model for autonomous agents in 2026?

It depends on the task. Claude Opus 4.7 for complex reasoning and self-verification ($5/$25/M tokens). GPT-5.5 for multi-tool orchestration ($5/$30/M). DeepSeek V4 Flash for routine tasks and cost efficiency ($0.14/$0.28/M). The best strategy uses all three with model routing: premium models for complex tasks, budget models for routine work.

How does DeepSeek V4 compare to Claude Opus 4.7?

DeepSeek V4 Pro scores 80.6% on SWE-bench vs Opus 4.7's 87.6%. Quality gap is real but narrowing. Cost gap is massive: V4 Pro (promo) costs $0.44/$0.87/M vs Opus 4.7's $5/$25/M. For routine agent tasks, the quality difference is minimal. For complex reasoning, Opus 4.7 is measurably better. V4 is open-weight (MIT license) and self-hostable. Opus 4.7 is not.

How much does it cost to run an AI agent with each model?

Monthly estimates at 50 messages/day, optimized: DeepSeek V4 Flash ($1-3), V4 Pro promo ($3-8), Claude Sonnet 4.6 ($10-20), Claude Opus 4.7 ($20-35), GPT-5.5 ($25-40). Multi-model routing (all three) costs $8-15/month. BetterClaw platform fee: $0 free tier or $19/month Pro, on top of API costs. BYOK with zero markup.

Is DeepSeek V4 safe for production agents?

The model itself is open-weight and available through US providers (OpenRouter, Together.ai, Fireworks) if Chinese data governance is a concern. V4 Pro and Flash perform well on agent benchmarks and are already used in production by many teams. The same OpenClaw security risks (138+ CVEs, credential exposure, supply chain) apply regardless of which model you use. BetterClaw's managed security (sandboxed execution, verified skills, secrets auto-purge) applies to all models.

When does the DeepSeek V4 Pro discount end?

The 75% promotional pricing ($0.435/$0.87/M vs list $1.74/$3.48/M) runs until May 31, 2026 at 15:59 UTC. After that, V4 Pro reverts to list pricing. V4 Flash pricing ($0.14/$0.28/M) is not promotional. For long-term budget planning, use V4 Flash rates as the baseline and treat V4 Pro promo as temporary.
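If your budgeting script needs to handle the cutover, a date check is enough. This sketch uses the end time and prices quoted above and treats the deadline as inclusive, which is an assumption; the function name is illustrative.

```python
from datetime import datetime, timezone

# End of the promo as quoted in the article: May 31, 2026 at 15:59 UTC.
PROMO_END = datetime(2026, 5, 31, 15, 59, tzinfo=timezone.utc)
PROMO_PRICE = (0.435, 0.87)  # $ per million tokens (input, output)
LIST_PRICE = (1.74, 3.48)

def v4_pro_price(now: datetime) -> tuple[float, float]:
    """Return the V4 Pro (input, output) price in effect at `now` (timezone-aware)."""
    return PROMO_PRICE if now <= PROMO_END else LIST_PRICE

print(v4_pro_price(datetime.now(timezone.utc)))
```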