Anthropic killed your subscription access. Here are 7 models that work with OpenClaw, ranked by quality, cost, and what the community actually recommends.

The morning after the Anthropic ban hit on April 4, 2026, the OpenClaw subreddit had 47 posts asking the same question: "What model should I switch to?"

The answers ranged from "DeepSeek is basically Claude for free" to "GLM-5.1 is the hidden gem" to "just pay for the API, it's not that bad." All of them were partly right. None of them gave the complete picture.

We've tested every major Claude alternative for OpenClaw agent tasks. Not benchmarks. Not synthetic tests. Real agent workflows: customer support, task delegation, research, multi-step reasoning, and tool calling. Here's what actually works, ranked by a combination of quality, cost, and community recommendation.

1. Claude Sonnet API (still the best, just costs more now)

The uncomfortable truth: Claude Sonnet is still the best model for OpenClaw agent tasks. The ban didn't change that. It changed the billing from a flat $20/month to per-token pricing: $3 per million input tokens and $15 per million output tokens.

Monthly cost: $10-20/month with optimization (model routing, session resets, context limits). $40-87/month on default settings without it.
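To see how per-token pricing turns into a monthly bill, here is a minimal sketch of the arithmetic. It uses the $3/$15 Sonnet rates quoted above; the message volume and token counts are illustrative assumptions, not measurements from a real agent, and `monthly_cost` is a hypothetical helper written for this example.

```python
def monthly_cost(messages_per_day, input_tokens, output_tokens,
                 input_price, output_price, days=30):
    """Estimate monthly API spend in dollars.

    Prices are dollars per million tokens; token counts are per message.
    """
    total_in = messages_per_day * days * input_tokens
    total_out = messages_per_day * days * output_tokens
    return (total_in * input_price + total_out * output_price) / 1_000_000


# Assumed workload: 30 messages/day, 300 output tokens per reply.
# Only the amount of context sent with each message differs.
capped = monthly_cost(30, 6_000, 300, input_price=3.00, output_price=15.00)
uncapped = monthly_cost(30, 20_000, 300, input_price=3.00, output_price=15.00)

print(f"context capped at 6,000 tokens: ${capped:.2f}/month")
print(f"context grown to 20,000 tokens: ${uncapped:.2f}/month")
```

The point of the comparison: with these assumed numbers, input tokens dominate the bill, which is why context size matters more than reply length.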

Why it's still #1: Instruction following is the best on the market for agent tasks. Multi-step reasoning is consistently strong. Tool calling is reliable. The community consensus hasn't shifted despite the ban. Users who can afford the API still prefer Claude.

The catch: You need optimization. Without model routing (Haiku for heartbeats, Sonnet for conversation), session management (/new every 20-25 messages), and context limits (maxContextTokens at 6,000-8,000), API costs spiral fast. Our model comparison guide covers pricing and each model's strengths by task type.
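If it's unclear what a context limit actually does, the idea can be sketched in a few lines. This is a generic illustration, not OpenClaw's implementation: `trim_history` and `rough_token_count` are hypothetical helpers, and the 4-characters-per-token estimate is a crude assumption that real tokenizers won't match exactly.

```python
def rough_token_count(text):
    # Crude assumption: about 4 characters per token for English text.
    return max(1, len(text) // 4)


def trim_history(messages, max_tokens, count_tokens=rough_token_count):
    """Keep the most recent messages that fit within max_tokens."""
    kept = []
    used = 0
    # Walk backwards so the newest messages are kept first.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = ["a" * 400, "b" * 400, "c" * 400]   # roughly 100 tokens each
trimmed = trim_history(history, max_tokens=250)
print(len(trimmed), "of", len(history), "messages kept")
```

Every message that gets dropped is input you stop paying for on each later turn, which is also why resetting a long session saves money.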

2. DeepSeek V3 (the community's favorite budget pick)

What the community says: "90% of Claude for 10% of the price." This quote appears in some form in almost every post-ban discussion thread.

Monthly cost: $5-15/month for moderate use ($0.27 input / $1.10 output per million tokens).
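The "10% of the price" half of that quote is easy to check against the listed rates. A quick sketch, using only the per-million-token prices quoted in this post:

```python
# Dollars per million tokens, as quoted in this post.
sonnet = {"input": 3.00, "output": 15.00}
deepseek = {"input": 0.27, "output": 1.10}

for kind in ("input", "output"):
    share = deepseek[kind] / sonnet[kind]
    print(f"{kind}: DeepSeek costs {share:.1%} of the Sonnet rate")
```

On these rates DeepSeek comes out at about 9% of Sonnet's input price and about 7% of its output price, so the price half of the claim holds up. The "90% of Claude" half is a judgment about quality, not something arithmetic can confirm.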

Why it's #2: Genuinely strong at routine agent tasks. Customer support, Q&A, summarization, email drafting. The quality gap with Claude is small for predictable, pattern-based work. Tool calling works. Instruction following is solid.

Where it falls short: Complex multi-step reasoning with ambiguous instructions. Claude handles "figure out what I mean and do it" better than DeepSeek handles the same prompt. If your agent tasks are well-defined (most are), you won't notice the difference. If they're open-ended, you will.

Data privacy note: DeepSeek is a Chinese company. Data processing occurs under Chinese data governance regulations. For some users and organizations, this is a non-issue. For others, it's a dealbreaker. Know your requirements.

3. GPT-5 / GPT-5 Mini (strong tool calling, higher cost)

Monthly cost: $15-25/month for moderate use (varies by tier).

Why it's #3: Tool calling is excellent. OpenAI has invested heavily in structured function calling, and it shows. If your agent uses many tools and complex skill chains, GPT-5 handles the orchestration well.

Where it falls short: More verbose than Claude. Responses tend to be longer, which means more output tokens, which means higher cost per response. For agents where response length matters (customer support on messaging apps), this adds up.

The top 3 rule: Claude Sonnet for quality, DeepSeek for budget, GPT-5 for tool-heavy workflows. 80% of users should choose one of these three. The remaining four are for specific situations.

4. Gemini 2.5 Flash (free tier, good enough for testing)

Monthly cost: $0 (1,500 free requests per day).

Why it's #4: Free. Genuinely free. No credit card. 1,500 requests/day covers personal use and testing entirely. If you're experimenting with OpenClaw and don't want to spend money yet, Gemini Flash is the answer.

Where it falls short: Quality is noticeably below the top 3 for complex agent tasks. Instruction following is less precise. Multi-turn conversations sometimes lose context. For production agents handling customer-facing conversations, the quality gap matters.

Our free model post ranks the five cheapest free and budget options in detail.

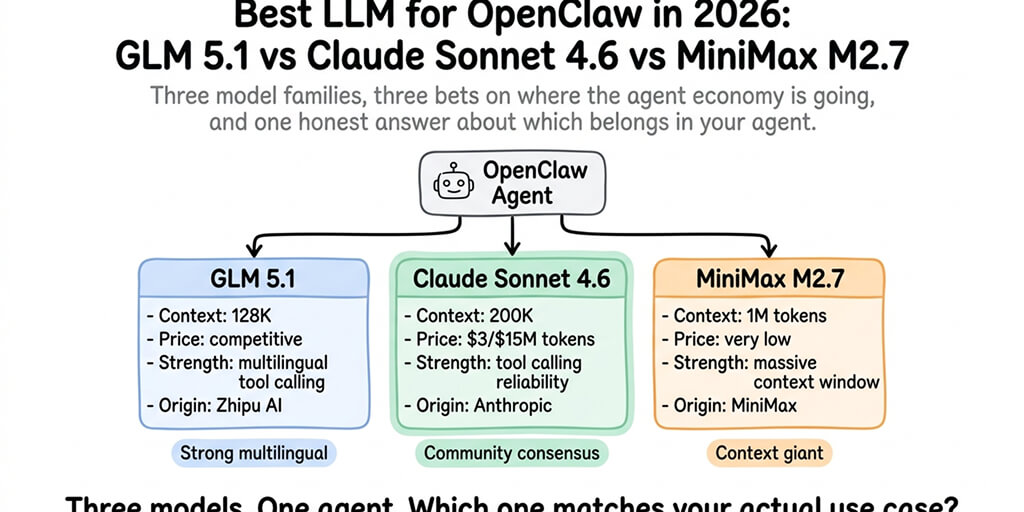

5. GLM-5.1 (cheapest paid option with surprisingly good quality)

Monthly cost: $3-8/month for moderate use.

Why it's #5: The price-to-quality ratio is remarkable. GLM-5.1 (from Zhipu AI) costs less than DeepSeek and performs well for routine agent tasks. The OpenClaw community has been recommending it increasingly since the ban.

Where it falls short: Smaller context window than Claude or DeepSeek. English-language performance is slightly behind Chinese-language performance (it's optimized for Chinese first). Tool calling support is functional but less mature than Claude or GPT-5.

6. Qwen3 via Ollama (free, local, hardware-dependent)

Monthly cost: $0 (runs on your hardware).

Why it's #6: Completely private. No data leaves your machine. No API costs. If you have a machine with 16GB+ RAM (32GB+ for the 32B model), Qwen3 runs locally via Ollama and delivers reasonable quality for agent tasks.

Where it falls short: Speed depends entirely on your hardware. On a MacBook with 16GB RAM, expect 10-15 tokens/second for the 8B model. On a desktop with 32GB RAM, the 32B model runs at usable speeds. On anything less, it's too slow for conversational agents.
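To put tokens per second in terms a user would feel, here is a small sketch that converts generation speed into wait time. The 300-token reply length is an assumption chosen for illustration, and the estimate ignores prompt processing time, which adds to the wait.

```python
def response_seconds(response_tokens, tokens_per_second):
    """Time to generate a reply, ignoring prompt processing."""
    return response_tokens / tokens_per_second


# The 10-15 tokens/second range quoted above for the 8B model.
for speed in (10, 15):
    wait = response_seconds(300, speed)
    print(f"{speed} tokens/s: about {wait:.0f}s for a 300-token reply")
```

At those speeds a medium-length reply takes 20 to 30 seconds, which is tolerable for background tasks and noticeably slow for live chat.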

7. Mistral Large (European alternative, good for EU compliance)

Monthly cost: $8-15/month for moderate use.

Why it's #7: European company. Data processed under EU regulations. GDPR compliance built into the service. If your organization requires European data sovereignty, Mistral is the only European provider on this list.

Where it falls short: Smaller community of OpenClaw users compared to Claude, DeepSeek, or GPT. Fewer community-tested configurations. Tool calling is functional but less documented for OpenClaw specifically.

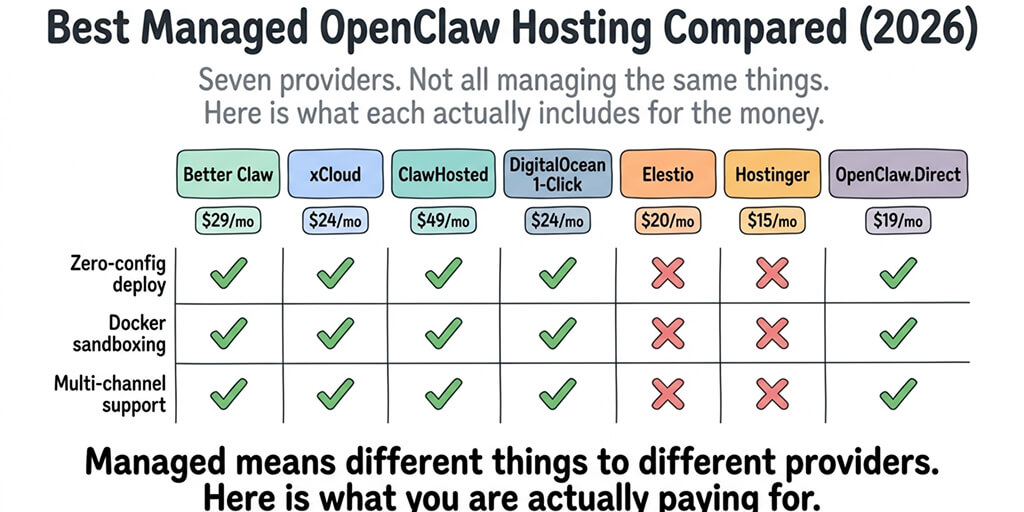

If switching models, configuring API keys, and optimizing token costs across multiple providers sounds like more configuration than you want to manage, BetterClaw supports all 28+ providers from a dropdown. Switch models in 10 seconds. No config files. Smart context management reduces token costs regardless of which model you choose. Free tier with 1 agent and BYOK. $19/month per agent for Pro. You pick the model. We handle the optimization.

The model routing strategy most experienced users are choosing

Here's what nobody tells you about choosing a Claude alternative.

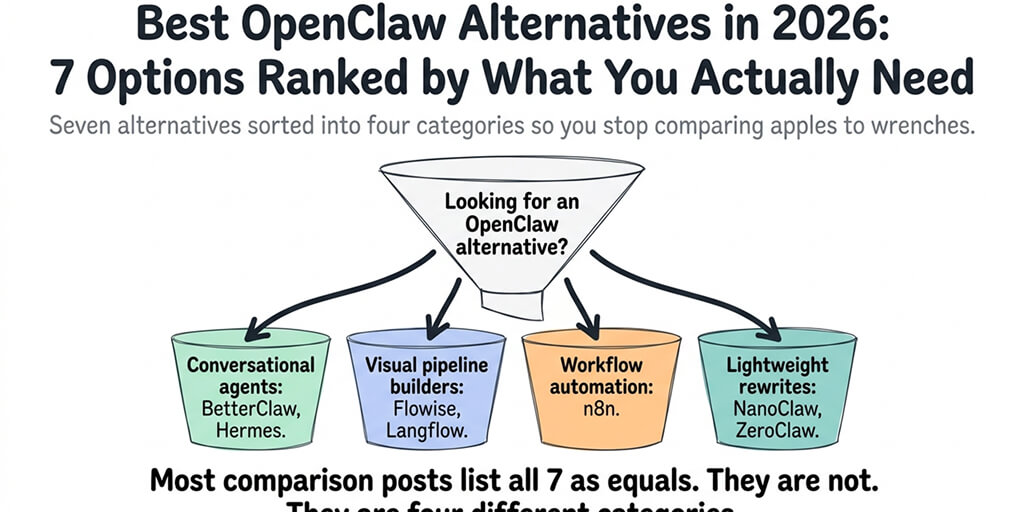

You don't have to choose just one. The smartest post-ban setup uses model routing: Claude Sonnet for complex, high-value tasks. DeepSeek or GLM-5.1 for routine tasks and heartbeats. Gemini Flash as a free fallback when other providers hit rate limits.

Total monthly cost with routing: $8-15/month. Claude quality where it matters. Near-free models everywhere else. Our cheapest providers guide covers the specific routing setup.
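The routing idea itself is simple enough to sketch. The code below is a generic illustration under stated assumptions, not OpenClaw's configuration format or API: the route table, the `RateLimited` exception, and the `call_model` callback are all hypothetical, and the model names are plain labels rather than real provider identifiers.

```python
class RateLimited(Exception):
    """Raised by a provider call when the provider is throttling."""


# Hypothetical route table: task type -> models to try, in order.
ROUTES = {
    "complex": ["claude-sonnet", "gemini-flash"],
    "routine": ["deepseek", "gemini-flash"],
    "heartbeat": ["glm-5.1", "gemini-flash"],
}


def route(task_type, prompt, call_model):
    """Try each model configured for task_type until one answers.

    call_model(model, prompt) is supplied by the caller and should
    raise RateLimited when the provider refuses the request.
    """
    candidates = ROUTES.get(task_type, ROUTES["routine"])
    for model in candidates:
        try:
            return model, call_model(model, prompt)
        except RateLimited:
            continue  # fall through to the next model in the list
    raise RuntimeError(f"all models rate limited for {task_type!r}")


# Stand-in provider call that simulates DeepSeek being throttled.
def fake_call(model, prompt):
    if model == "deepseek":
        raise RateLimited()
    return f"[{model}] reply to: {prompt}"


print(route("routine", "Summarize this ticket", fake_call))
print(route("complex", "Plan the migration", fake_call))
```

Keeping the free model last in every list means it only absorbs traffic when the preferred model is unavailable, which matches the fallback role described above.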

The Anthropic ban forced a decision that made everyone's setup better. Single-model dependency was always a risk. Multi-model routing is more resilient, cheaper, and often produces better results because each model gets the tasks it's best at.

The ban was painful. What came after was an improvement.

If you want multi-model routing without configuring it in YAML files, give BetterClaw a try. Free tier with 1 agent and BYOK. $19/month per agent for Pro. 28+ providers. Model switching from a dropdown. Smart context management keeps costs low on every model. 60-second deploy.

Frequently Asked Questions

What is the best Claude alternative for OpenClaw?

DeepSeek V3 is the most recommended by the community. It delivers roughly 90% of Claude's quality for agent tasks at $0.27/$1.10 per million tokens ($5-15/month for moderate use). For free options, Gemini 2.5 Flash provides 1,500 requests/day at $0. For maximum quality, Claude Sonnet API remains the best but costs $10-20/month with optimization.

Can I still use Claude with OpenClaw after the Anthropic ban?

Yes. The ban only affected subscription-based OAuth access. You can use Claude with an API key from console.anthropic.com. Billing changes from a flat-rate subscription to per-token pricing ($3 input / $15 output per million tokens for Sonnet). Anthropic offered a $200 credit to affected users. With optimization, moderate use costs $10-20/month.

Is DeepSeek as good as Claude for OpenClaw?

For routine agent tasks (support, Q&A, summarization, email): very close, roughly 90% of Claude quality. For complex multi-step reasoning with ambiguous instructions: Claude is noticeably better. For most users, the quality difference doesn't justify the roughly 11-14x difference in per-token price ($3/$15 versus $0.27/$1.10 per million tokens). Model routing (Claude for complex, DeepSeek for routine) is the pragmatic answer.

What is the cheapest model for OpenClaw?

Gemini 2.5 Flash at $0/month (1,500 free requests/day) for cloud models. Qwen3 via Ollama at $0/month for local models (requires a machine with 16GB+ RAM). GLM-5.1 at $3-8/month is the cheapest paid option. DeepSeek V3 at $5-15/month offers the best quality-to-cost ratio among paid models.

Can I use multiple models with OpenClaw?

Yes. Model routing lets you assign different models to different tasks. Common setup: Claude Sonnet for complex tasks, DeepSeek or GLM-5.1 for routine tasks, Haiku for heartbeats, Gemini Flash as fallback. Total cost: $8-15/month. BetterClaw supports all 28+ providers with model switching from a dropdown menu.