Your agent is probably running Opus on tasks that Sonnet handles identically. Here's how to tell the difference and configure accordingly.

I ran two OpenClaw agents, identical except for the model, for a week. Same SOUL.md. Same skills. Same Telegram channel. Same types of questions from the same test users.

One agent ran on Claude Opus at $15/$75 per million tokens (input/output). The other ran on Claude Sonnet at $3/$15 per million tokens.

At the end of the week, the Opus agent had cost $47.20 in API fees. The Sonnet agent had cost $9.80. Both agents answered every question. Both completed every scheduled task. Both used tools correctly. The test users couldn't reliably tell which agent was which.

That $37.40 weekly difference is $162 per month. For a single agent.
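If you want to check the extrapolation, here is the arithmetic. It assumes an average month of 52/12 (about 4.33) weeks, which is one reasonable way to turn a weekly figure into a monthly one.

```python
# Weekly API fees from the one-week side-by-side test described above.
OPUS_WEEK = 47.20
SONNET_WEEK = 9.80

WEEKS_PER_MONTH = 52 / 12  # about 4.33 weeks in an average month

weekly_diff = OPUS_WEEK - SONNET_WEEK
monthly_diff = weekly_diff * WEEKS_PER_MONTH

print(f"Weekly difference:  ${weekly_diff:.2f}")
print(f"Monthly difference: ${monthly_diff:.2f}")
print(f"Opus cost ratio:    {OPUS_WEEK / SONNET_WEEK:.1f}x")
```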

The viral Medium post "I Spent $178 on AI Agents in a Week" tells the same story at a larger scale. The author wasn't doing anything exotic. They were running OpenClaw with the most expensive model because the default configuration doesn't optimize for cost. It optimizes for capability.

Here's the thing about OpenClaw model configuration: the default settings assume you want the most powerful model available, and most agent tasks don't need it. Choosing correctly between Sonnet and Opus is the single highest-impact change you can make to your setup.

Why Opus is overkill for 80% of agent tasks

Opus is Anthropic's most capable model. It excels at complex multi-step reasoning, nuanced creative writing, and tasks requiring deep contextual understanding across long documents.

Your OpenClaw agent spends most of its time doing none of those things.

Here's what a typical agent day looks like: 48 heartbeat checks (simple status pings), 15-30 conversational responses to user messages, 2-5 tool calls (web search, calendar check, file read), and maybe 1-2 genuinely complex tasks (research synthesis, multi-step planning).

The heartbeats are status checks. They need a model that can say "I'm alive" and process a minimal system prompt. Using Opus for this is like hiring a neurosurgeon to take your blood pressure.

The conversational responses are mostly straightforward. "What time is my meeting?" "Summarize this article." "Draft a quick email." Sonnet handles these as well as Opus does; in my test, the responses were indistinguishable.

The tool calls require the model to generate a structured function call. Both Opus and Sonnet do this reliably. Sonnet's tool-calling accuracy matches Opus for standard OpenClaw skills.

The only tasks where Opus meaningfully outperforms Sonnet: complex multi-step research with 5+ sequential tool calls, creative writing with specific stylistic constraints, and reasoning tasks that require holding 50,000+ tokens of context while making nuanced judgments. These represent maybe 10-20% of a typical agent's workload.

You're paying Opus prices for Sonnet-level tasks 80% of the time. The fix is model routing, and it takes about 10 minutes to configure.

The OpenClaw Sonnet vs Opus decision matrix

Let me be specific about which tasks belong on which model. These are the patterns we've observed across hundreds of deployments.

Tasks where Sonnet matches Opus

Question answering from context. When your agent has the relevant information in its system prompt or conversation history, Sonnet answers just as accurately as Opus. Customer support queries, FAQ responses, schedule lookups.

Single-step tool calls. "Search the web for X." "Check my calendar for today." "Read this file." Sonnet generates identical tool call syntax. The results are the same because the tool does the work, not the model.

Conversation management. Greetings, clarifying questions, follow-ups, acknowledging requests. Sonnet's conversational quality is excellent.

Structured output generation. JSON, summaries, list formatting, email drafts with clear templates. Sonnet follows formatting instructions with the same precision.

Tasks where Opus genuinely earns its price

Multi-step research synthesis. When the agent needs to search for information, evaluate multiple sources, compare findings, and produce a coherent summary that weighs conflicting data. Opus handles the complexity of holding multiple threads simultaneously better than Sonnet.

Complex planning with dependencies. "Plan a trip to Tokyo that accounts for my dietary restrictions, budget, travel dates, and the fact that my partner doesn't like crowds." The interconnected constraint satisfaction is where Opus's additional reasoning power shows up.

Long-context analysis. When your agent needs to process a 30,000+ token document and answer nuanced questions about relationships between sections. Sonnet's accuracy degrades faster on very long contexts.

Ambiguous instructions. When user intent is unclear and the agent needs to make sophisticated judgment calls about what the person probably means. Opus handles ambiguity more gracefully.
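If you want to encode this decision matrix in your own tooling (say, to decide when a task is worth escalating to Opus), a few rules are enough. This is a hypothetical helper, not an OpenClaw feature: the `Task` fields, how you would populate them, and the thresholds (5+ chained tool calls, 30,000+ tokens of context) are assumptions lifted from the rules of thumb in this article.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical summary of an incoming request, filled in by your own code."""
    sequential_tool_calls: int = 0      # planned tool calls that depend on each other
    context_tokens: int = 0             # tokens the model must reason over
    multi_source_synthesis: bool = False
    interdependent_constraints: bool = False
    ambiguous_intent: bool = False


# Thresholds taken from this article's rules of thumb, not from OpenClaw.
MAX_SONNET_TOOL_CHAIN = 4
MAX_SONNET_CONTEXT = 30_000


def pick_model(task: Task) -> str:
    """Return "opus" only for the task shapes where it tends to earn its price."""
    if task.sequential_tool_calls > MAX_SONNET_TOOL_CHAIN:
        return "opus"  # multi-step research with 5+ chained tool calls
    if task.context_tokens >= MAX_SONNET_CONTEXT:
        return "opus"  # long-context analysis
    if task.multi_source_synthesis or task.interdependent_constraints or task.ambiguous_intent:
        return "opus"  # synthesis, constraint planning, or unclear intent
    return "sonnet"    # Q&A, single-step tool calls, chat, structured output


print(pick_model(Task(sequential_tool_calls=1)))          # sonnet
print(pick_model(Task(context_tokens=42_000)))            # opus
print(pick_model(Task(interdependent_constraints=True)))  # opus
```

The rules are deliberately biased toward Sonnet: anything that doesn't clearly match an Opus pattern falls through to the cheaper model.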

For the full cost-per-task data across all major providers including DeepSeek and Gemini, our model comparison covers seven common agent tasks with actual dollar figures.

How to configure model routing in OpenClaw (the 10-minute version)

The OpenClaw configuration file controls which model handles which type of request. The key is the model routing section, where you specify a primary model for general tasks and a separate model for heartbeats.

Step 1: Set Sonnet as your primary model. In your config file, change the primary model from Opus to Sonnet. This immediately cuts your per-token cost by 80% ($3/$15 instead of $15/$75 per million tokens) for all regular conversations and tool calls. The field is nested under the agent model section, and you specify the full model identifier (for example, anthropic/claude-sonnet-4-6).

Step 2: Set Haiku as your heartbeat model. Heartbeats are simple status checks that run every 30 minutes by default. That's 48 checks per day. On Opus, heartbeats cost roughly $4.32/month. On Haiku ($1/$5 per million tokens, one-fifteenth of Opus's rates), the same heartbeats cost about $0.29/month. Same function, roughly $4/month saved. Set the heartbeat model field separately from the primary model.

Step 3: Set a fallback provider. If Anthropic's API goes down (it happens), you want your agent to automatically switch to an alternative. DeepSeek at $0.28/$0.42 per million tokens is a popular fallback. Gemini Flash with its free tier works for lower-traffic agents. Configure this in the provider fallback section.

Step 4: Set spending caps and limits. Set maxIterations to 10-15 to prevent runaway loops. Set maxContextTokens to 4,000-8,000 to prevent ballooning input costs on long conversations. Set monthly spending caps on your Anthropic dashboard at 2-3x your expected usage.
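The savings arithmetic behind Steps 1 and 2 can be checked with a small cost function. The prices are the ones quoted in this article. The per-heartbeat token counts (150 input, 10 output) are an assumption, chosen so the Opus figure lands on the $4.32/month above; your real heartbeat prompt is probably larger, but because Haiku is exactly 15x cheaper than Opus on both input and output, the ratio holds for any token mix.

```python
# Prices in USD per million tokens (input, output), as quoted in this article.
PRICES = {
    "opus": (15.00, 75.00),
    "sonnet": (3.00, 15.00),
    "haiku": (1.00, 5.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of a single request at the list prices above."""
    price_in, price_out = PRICES[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000


# Step 1: Sonnet vs Opus on an arbitrary example request.
opus = request_cost("opus", 2_000, 500)
sonnet = request_cost("sonnet", 2_000, 500)
print(f"Sonnet saves {1 - sonnet / opus:.0%} per request")

# Step 2: heartbeats every 30 minutes over a 30-day month.
# ASSUMPTION: 150 input + 10 output tokens per heartbeat.
HEARTBEATS_PER_MONTH = 48 * 30
for model in ("opus", "sonnet", "haiku"):
    monthly = HEARTBEATS_PER_MONTH * request_cost(model, 150, 10)
    print(f"{model:>6} heartbeats: ${monthly:.2f}/month")
```

Note that the heartbeat savings are mostly an Opus problem: moving heartbeats from Sonnet to Haiku saves well under a dollar a month at these token counts.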

That's it. Four changes. Ten minutes. Monthly savings of 70-80% compared to running everything on Opus.

For the detailed model routing configuration and provider switching setup, our routing guide covers the specific config fields and fallback logic.
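Conceptually, Step 3's fallback behavior boils down to trying providers in order until one answers. The sketch below illustrates that idea only; it is not OpenClaw's implementation, and `complete_with_fallback`, `ProviderError`, and the stand-in provider functions are hypothetical names.

```python
from typing import Callable, List, Tuple


class ProviderError(Exception):
    """Raised by a provider call when its API is unavailable."""


def complete_with_fallback(
    prompt: str, providers: List[Tuple[str, Callable[[str], str]]]
) -> Tuple[str, str]:
    """Try each (name, call) pair in order; return the first successful reply."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")  # remember why it failed, try the next one
    raise ProviderError("all providers failed: " + "; ".join(errors))


# Stand-in providers for demonstration; real ones would call each provider's API.
def anthropic_down(prompt: str) -> str:
    raise ProviderError("503 service unavailable")


def deepseek_ok(prompt: str) -> str:
    return f"(deepseek) answer to: {prompt}"


used, reply = complete_with_fallback(
    "What time is my meeting?",
    [("anthropic", anthropic_down), ("deepseek", deepseek_ok)],
)
print(used)   # deepseek
print(reply)
```

Only availability errors trigger the fallback here; a real setup would also need to decide whether rate limits and timeouts count as "down".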

Models even cheaper than Sonnet

Sonnet is the sweet spot for most agent tasks. But it's not the cheapest option. Here's the full pricing ladder.

Claude Haiku ($1/$5 per million tokens). Good for heartbeats and very simple conversations. Struggles with multi-step tool calling and complex instructions. Don't use it as your primary model unless your agent handles only basic Q&A.

DeepSeek V3.2 ($0.28/$0.42 per million tokens). Roughly 90% cheaper than Sonnet on input tokens and about 97% cheaper on output. Excellent for straightforward tasks. Tool calling works reliably. The main trade-off is slower response times and slightly less nuanced reasoning. Some users run DeepSeek as their primary model and only escalate to Sonnet for complex tasks.

Gemini 2.5 Flash (free tier: 1,500 requests/day). Zero cost for personal use. Capable enough for simple agent tasks. The rate limit makes it impractical for high-volume agents, but for a personal assistant that handles 20-50 messages daily, it works.

GPT-4o ($2.50/$10 per million tokens). Comparable to Sonnet in price and capability for most agent tasks. Available through OpenClaw's ChatGPT OAuth integration, which lets you use your ChatGPT Plus subscription instead of paying per-token API prices.
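To compare the paid rungs of this ladder on a single request, here is a quick calculation using the prices listed above. The request size (4,000 input and 300 output tokens) is an assumption; output-heavy workloads will shift the percentages. Gemini Flash's free tier and ChatGPT OAuth are left out because they aren't billed per token.

```python
# (input, output) USD per million tokens, as listed in this article.
LADDER = {
    "Claude Sonnet": (3.00, 15.00),
    "GPT-4o": (2.50, 10.00),
    "Claude Haiku": (1.00, 5.00),
    "DeepSeek V3.2": (0.28, 0.42),
}

# ASSUMPTION: a typical agent request of 4,000 input and 300 output tokens.
IN_TOK, OUT_TOK = 4_000, 300


def cost(prices):
    """Dollar cost of the assumed request at the given (input, output) prices."""
    p_in, p_out = prices
    return (IN_TOK * p_in + OUT_TOK * p_out) / 1_000_000


baseline = cost(LADDER["Claude Sonnet"])
for name, prices in LADDER.items():
    c = cost(prices)
    print(f"{name:<14} ${c:.5f}/request  {1 - c / baseline:>4.0%} cheaper than Sonnet")
```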

For the complete comparison of which providers cost what and how they perform, our provider guide covers five alternatives that cut costs by 80-90%.

The cheapest OpenClaw configuration that still handles real agent tasks well: DeepSeek as primary, Haiku for heartbeats, and Sonnet reserved for complex reasoning. Total API cost for moderate usage: $8-15/month.

If configuring model routing, context windows, and spending caps sounds like more JSON editing than you want, BetterClaw supports all 28+ providers with model selection through the dashboard. Pick your primary, heartbeat, and fallback models from a dropdown. Set spending alerts. $19/month per agent, BYOK. The model routing just works because we've already optimized the configuration layer.

The ChatGPT OAuth trick most people miss

OpenClaw supports ChatGPT OAuth, which means you can authenticate with your ChatGPT Plus subscription ($20/month) and use GPT-4o through your ChatGPT account instead of paying per-token API prices.

Here's why this matters: ChatGPT Plus gives you a fixed monthly rate with generous usage caps. If you're already paying for ChatGPT Plus, you can route your OpenClaw agent's GPT-4o requests through OAuth at effectively zero additional cost.

The limitation: ChatGPT OAuth has stricter rate limits than the API. For agents handling more than a few dozen messages per hour, the API route is more reliable. But for personal agents or low-to-moderate traffic use cases, OAuth turns your existing subscription into free model access for your agent (hosting is still a separate cost).

This is one of the more underappreciated OpenClaw cost reduction strategies. Combined with Sonnet as your primary Anthropic model and Haiku for heartbeats, your total monthly spend can drop below $20 even with multiple model providers configured.

The config mistake that costs the most money

Here's where most people get it wrong.

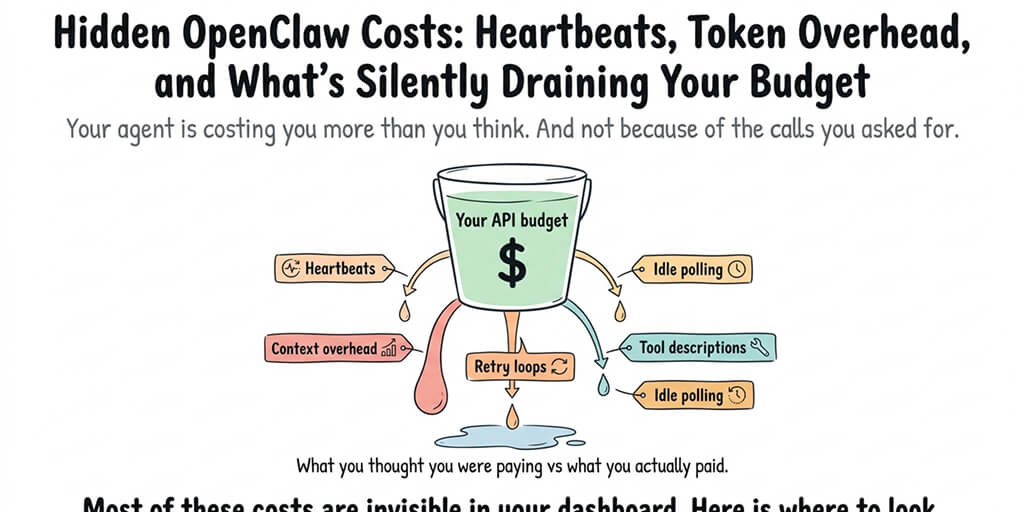

They configure Sonnet as their primary model. Good. They set Haiku for heartbeats. Good. Then they forget about the context window setting.

OpenClaw's default context window sends the full conversation history with every request. For a model charged per input token, this means every new message includes every previous message as context. By message 30 in a conversation, you're sending 30 messages' worth of tokens as input just to get a one-line response.
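Because each request resends the whole history, total input tokens grow roughly quadratically with conversation length. Here is a rough model of that, assuming a fixed 200 tokens per message and ignoring the system prompt and tool output; the cap is modeled as simple truncation, which may not match exactly how OpenClaw applies maxContextTokens.

```python
from typing import Optional


def total_input_tokens(n_messages: int, tokens_per_message: int,
                       cap: Optional[int] = None) -> int:
    """Sum the input tokens sent across a whole conversation.

    Each new message resends the accumulated history; with a cap, only the
    most recent `cap` tokens of history are sent.
    """
    total = 0
    history = 0
    for _ in range(n_messages):
        history += tokens_per_message
        total += history if cap is None else min(history, cap)
    return total


# ASSUMPTION: 200 tokens per message, no system prompt or tool output counted.
full = total_input_tokens(60, 200)
capped = total_input_tokens(60, 200, cap=4_000)
print(f"full history: {full:,} input tokens")
print(f"4k cap:       {capped:,} input tokens ({1 - capped / full:.0%} fewer)")
```

Under the same assumptions a 30-message conversation drops only from 93,000 to 82,000 input tokens, because the history doesn't exceed 4,000 tokens until message 20. That's why the savings depend so heavily on how long your conversations run.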

Set maxContextTokens to a reasonable limit. For most agent tasks, 4,000-8,000 tokens of context is sufficient. The agent has persistent memory for longer-term recall. It doesn't need to send the entire conversation on every request.
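The idea behind a context cap can be sketched as "keep the newest messages that fit the budget." This is an illustration, not OpenClaw's code: `trim_history` is a hypothetical helper, and `approx_tokens` uses a crude four-characters-per-token heuristic where a real implementation would use the provider's tokenizer.

```python
def approx_tokens(text: str) -> int:
    """Very rough token estimate (~4 characters per token for English).

    A real implementation should use the provider's tokenizer or token-counting API.
    """
    return max(1, len(text) // 4)


def trim_history(messages: list, max_context_tokens: int) -> list:
    """Keep the newest messages whose combined estimated size fits the budget."""
    kept = []
    used = 0
    for msg in reversed(messages):  # walk from newest to oldest
        size = approx_tokens(msg)
        if used + size > max_context_tokens:
            break
        kept.append(msg)
        used += size
    kept.reverse()  # restore chronological order
    return kept


# 30 messages of 800 characters each (~200 estimated tokens apiece).
history = [f"msg {i:02d} " + "x" * 793 for i in range(1, 31)]
recent = trim_history(history, max_context_tokens=4_000)
print(len(recent), recent[0][:6])
```

Anything trimmed away here is what the agent's persistent memory is for; the request itself only carries the recent window.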

This single setting can cut your input token costs by 40-60%, depending on average conversation length. Combined with model routing, you're looking at total savings of 80-90% compared to an unconfigured Opus setup.

When to actually use Opus

I've spent this entire article telling you to switch away from Opus. Let me be fair about when it genuinely matters.

If your agent is a research assistant that handles complex, multi-source synthesis daily, Opus's reasoning quality difference is noticeable. Not for every query. But for the 2-3 complex research tasks per day where accuracy on nuanced, ambiguous questions matters, Opus produces better results.

If your agent handles high-stakes communication (investor updates, legal summaries, medical information triage), the marginal quality improvement in Opus's language precision can justify the 5x cost.

If your agent processes very long documents (contracts, technical specifications, research papers over 50 pages), Opus maintains coherence over longer contexts more reliably.

The smart configuration isn't "never use Opus." It's "use Opus only for the tasks that need it." That's what model routing solves. Sonnet handles 80% of the volume at 80% less cost. Opus handles the 20% that justifies the premium.

The recommended starting configuration

After configuring hundreds of agents, here's the model configuration I'd recommend as a starting point.

Primary model: Claude Sonnet. Handles all regular conversations, single-step tool calls, and standard agent tasks. $3/$15 per million tokens.

Heartbeat model: Claude Haiku. Handles the 48 daily status checks. $1/$5 per million tokens. Saves $4+/month compared to running heartbeats on Opus.

Fallback provider: DeepSeek V3.2. If Anthropic goes down, your agent continues at $0.28/$0.42 per million tokens instead of going offline.

Context window: 4,000-8,000 tokens max. Prevents ballooning input costs on long conversations.

MaxIterations: 10-15. Prevents runaway loops from eating your budget.

Spending cap: 2-3x expected monthly usage on every provider dashboard.
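The MaxIterations setting is a hard stop on the agent's think-act loop. The sketch below shows the general idea only; `run_agent`, `step`, and `IterationLimitReached` are hypothetical names rather than OpenClaw internals, and 12 is simply a value from the 10-15 range recommended above.

```python
from typing import Callable, Optional


class IterationLimitReached(RuntimeError):
    """Raised when the agent exhausts its iteration budget without finishing."""


def run_agent(step: Callable[[int], Optional[str]], max_iterations: int = 12) -> str:
    """Call `step` until it returns a final answer or the budget runs out.

    `step(i)` stands in for one model call plus any tool execution. It returns
    the final answer, or None if the agent wants another iteration.
    """
    for i in range(max_iterations):
        answer = step(i)
        if answer is not None:
            return answer
    raise IterationLimitReached(f"stopped after {max_iterations} iterations")


# A well-behaved task finishes on its third iteration.
print(run_agent(lambda i: "done" if i == 2 else None))

# A runaway task that never finishes is cut off instead of burning tokens.
try:
    run_agent(lambda i: None, max_iterations=12)
except IterationLimitReached as exc:
    print(exc)
```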

Expected monthly cost for moderate usage: $10-25/month in API fees. Compare that to the $80-150/month Opus-for-everything setup that most new users start with.

The managed vs self-hosted comparison covers how these configurations translate across different deployment options, including what BetterClaw handles automatically.

If you want model routing, spending alerts, and multi-provider support without editing config files, give BetterClaw a try. $19/month per agent, BYOK with 28+ providers. Pick your models from a dashboard. Set your limits. Deploy in 60 seconds. The config optimization is built in so you can focus on what your agent actually does instead of how much it costs.

Frequently Asked Questions

What is OpenClaw model configuration?

OpenClaw model configuration is the process of setting which AI model handles which type of request in your agent. This includes choosing a primary model for conversations, a heartbeat model for status checks, a fallback provider for downtime, and parameters like context window size and iteration limits. Proper configuration typically reduces API costs by 70-80% compared to default settings.

How does Claude Sonnet compare to Opus for OpenClaw agents?

Sonnet handles 80% of typical agent tasks (conversations, single-step tool calls, structured output, Q&A) with quality indistinguishable from Opus at 80% less cost ($3/$15 vs $15/$75 per million tokens). Opus outperforms Sonnet on complex multi-step research, long-context analysis over 30,000+ tokens, and ambiguous instructions requiring sophisticated judgment. For most agents, Sonnet as primary with Opus reserved for complex tasks is the optimal configuration.

How do I reduce my OpenClaw API costs?

Four changes deliver the biggest savings: switch your primary model from Opus to Sonnet (80% per-token reduction), set Haiku as your heartbeat model ($4+/month savings), set maxContextTokens to 4,000-8,000 (40-60% input cost reduction), and configure spending caps at 2-3x expected usage (prevents runaway costs). Combined, these changes typically reduce monthly API spend from $80-150 to $10-25 for moderate usage.

How much does it cost to run an OpenClaw agent monthly?

With default settings (Opus for everything): $80-150/month in API fees plus hosting costs ($5-25/month VPS or $19/month managed platform). With optimized model configuration (Sonnet primary, Haiku heartbeats, DeepSeek fallback): $10-25/month in API fees plus hosting. Total optimized cost: $15-50/month depending on hosting choice. The cheapest viable setup uses Gemini Flash free tier with DeepSeek fallback: under $10/month total API cost.

Is Claude Sonnet reliable enough to replace Opus as the primary OpenClaw model?

Yes, for most agent use cases. Sonnet's tool calling accuracy matches Opus for standard OpenClaw skills. Conversational quality is excellent for customer support, scheduling, Q&A, and email tasks. Test users in our comparison couldn't reliably distinguish Sonnet responses from Opus responses on routine agent tasks. The cases where Sonnet falls short (complex multi-step reasoning, very long context analysis, highly ambiguous instructions) represent roughly 10-20% of typical agent workload.