29 models claim to be "free." Seven actually work for agent tasks. Here's the ranked list with daily limits, quality assessments, and the catch for each one.

After the Anthropic ban on April 4, the OpenClaw Discord lit up with one question: "What free model actually works?"

The answers were a mess. People recommended models that require a credit card ("Gemini is free!" ... with a payment method on file). People recommended local models without mentioning you need $800 in hardware. People recommended OpenRouter free tiers without mentioning the 200 requests/day cap.

Not all free is the same kind of free.

Here are seven models that genuinely work with OpenClaw at zero cost, ranked by the combination of quality, daily capacity, and how "free" they actually are.

1. Google Gemini 2.5 Flash (The Undisputed Free Champion)

Daily capacity: 1,500 requests/day. 15 requests/minute. 1 million tokens per minute.

How to get it: Sign up at ai.google.dev. No credit card. API key is instant.

Why it's #1: Nothing else comes close on volume. 1,500 requests/day covers a moderate-use personal agent entirely. The quality competes with GPT-5.4 Mini on most tasks. The 1M token context window is tied for the largest free context available. Multimodal support (images, audio, video) is included.

The catch: Google's terms allow using free-tier prompts for model training. If data privacy matters, this is a real trade-off. Quality is adequate for routine tasks but noticeably below Claude and GPT-5.5 for complex reasoning.

For OpenClaw: Set your provider to Google AI and model to gemini-2.5-flash. Works out of the box. For the complete model configuration guide, our model comparison covers how to set up each provider.
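The per-minute and per-day caps quoted above can also be enforced on the client side, so the agent backs off before the provider starts rejecting requests. The following is a minimal sketch, not part of OpenClaw: `FreeTierLimiter` is a hypothetical helper, and the rolling 24-hour window is an assumption (the provider's actual daily reset time may differ).

```python
import time
from collections import deque


class FreeTierLimiter:
    """Client-side guard for a per-minute and a per-day request cap."""

    def __init__(self, per_minute=15, per_day=1500, clock=time.monotonic):
        self.per_minute = per_minute
        self.per_day = per_day
        self.clock = clock            # injectable so the limiter can be tested
        self.minute_window = deque()  # timestamps of requests in the last 60 s
        self.day_window = deque()     # timestamps of requests in the last 24 h

    def _expire(self, now):
        # Drop timestamps that have aged out of each rolling window.
        while self.minute_window and now - self.minute_window[0] >= 60:
            self.minute_window.popleft()
        while self.day_window and now - self.day_window[0] >= 86_400:
            self.day_window.popleft()

    def try_acquire(self):
        """Record a request and return True, or return False if a cap is hit."""
        now = self.clock()
        self._expire(now)
        if len(self.minute_window) >= self.per_minute:
            return False
        if len(self.day_window) >= self.per_day:
            return False
        self.minute_window.append(now)
        self.day_window.append(now)
        return True
```

Call `try_acquire()` before each request. When it returns `False`, either wait or hand the request to the next provider in your stack.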

2. DeepSeek V4 Flash via OpenRouter

Daily capacity: Approximately 200 requests/day. 20 requests/minute.

How to get it: Sign up at openrouter.ai. No credit card. Use model ID deepseek/deepseek-v4-flash:free.

Why it's #2: DeepSeek V4 Flash is genuinely good. 284B params (13B active), 1M context, competitive with Claude Sonnet on routine tasks. Through OpenRouter's free tier, you get it at zero cost.

The catch: 200 requests/day is enough for light personal use only. Free requests are deprioritized during peak traffic, so latency can spike unpredictably. The :free tier could change without notice.
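To confirm the key and model ID work before wiring them into an agent, a quick request against OpenRouter's OpenAI-compatible chat endpoint is enough. This is a sketch using only the standard library. The model ID is the one given above; the function names are illustrative, and nothing here reflects how OpenClaw itself talks to the provider.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
MODEL_ID = "deepseek/deepseek-v4-flash:free"  # model ID as given in the article


def build_request(prompt, api_key, model=MODEL_ID):
    """Build (but do not send) a chat-completions request for OpenRouter."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt, api_key, timeout=60):
    """Send the request and return the text of the first choice."""
    request = build_request(prompt, api_key)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]


# Usage (needs a real key and network access):
#   print(ask("Reply with the single word: ok", "<your OpenRouter key>"))
```

A generous timeout is deliberate: free requests can be slow during peak traffic.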

3. Llama 3.3 70B via Groq (Fastest Free Inference)

Daily capacity: 1,000 requests/day. 30 requests/minute.

How to get it: Sign up at console.groq.com. No credit card. Instant API key.

Why it's #3: Speed. Groq's LPU hardware delivers 300+ tokens per second. The agent responds before you finish reading the previous message. Llama 3.3 70B is a strong open-weight model with good instruction following.

The catch: 6,000 tokens per minute limit (total across all requests). This is tight for agents that send long system prompts. You'll hit the TPM limit before the RPM limit on most OpenClaw configurations. Keep your SOUL.md short.
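The arithmetic behind that warning is simple: with 6,000 tokens per minute and 30 requests per minute, the token cap becomes the binding limit as soon as a request averages more than 200 tokens. A small sketch, where the request sizes are hypothetical examples rather than measured OpenClaw values:

```python
def max_requests_per_minute(tokens_per_request, tpm_limit=6_000, rpm_limit=30):
    """Return how many requests fit in one minute under both caps."""
    by_tokens = tpm_limit // tokens_per_request
    return min(rpm_limit, by_tokens)


# Hypothetical request sizes: system prompt + history + reply, in tokens.
for size in (200, 1_500, 3_000):
    print(size, max_requests_per_minute(size))
# 200 30
# 1500 4
# 3000 2
```

A 3,000-token request (easy to reach with a long system prompt) leaves room for only two requests per minute.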

The top 3 rule: Gemini 2.5 Flash for volume (1,500/day). DeepSeek V4 Flash for quality (best model available free). Groq Llama for speed (300+ t/s). Stack all three as primary, fallback, and heartbeat models for the most resilient free setup.

4. Qwen3 32B via Groq (Highest Daily Capacity)

Daily capacity: 14,400 requests/day. 60 requests/minute.

How to get it: Same Groq account. Use model qwen3-32b.

Why it's #4: 14,400 requests/day is the highest free capacity of any model. Good for high-volume heartbeats and simple tasks. Qwen3 handles FAQ, classification, and routing well.

The catch: Quality is below the top 3 on complex reasoning. The 32B model is smaller than Llama 70B. Best used for heartbeat routing (48/day at zero cost) and simple tasks, not as a primary conversational model.
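To put the heartbeat figure in perspective, 48 heartbeats a day is one every 30 minutes and uses well under 1% of the 14,400/day cap. A quick check, using only the numbers quoted above:

```python
def heartbeat_budget(heartbeats_per_day=48, daily_cap=14_400):
    """Return (minutes between heartbeats, fraction of the daily cap used)."""
    interval_minutes = 24 * 60 / heartbeats_per_day
    share_of_cap = heartbeats_per_day / daily_cap
    return interval_minutes, share_of_cap


interval, share = heartbeat_budget()
print(f"one heartbeat every {interval:.0f} min, {share:.2%} of the daily cap")
# one heartbeat every 30 min, 0.33% of the daily cap
```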

5. DeepSeek V4 Flash (5M Token Grant, Direct API)

Capacity: 5 million tokens total (one-time grant on signup).

How to get it: Sign up at platform.deepseek.com. No credit card.

Why it's #5: Same excellent V4 Flash model as #2, but through DeepSeek's direct API with better reliability (no OpenRouter deprioritization). 5M tokens covers 2-11 months of light use depending on message volume.
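How long the grant lasts depends on two things: messages per day and tokens per message. The sketch below uses the 20-50 messages/day range for a typical personal agent; the per-message token counts are illustrative assumptions, not measurements.

```python
def grant_runway_days(messages_per_day, tokens_per_message, grant_tokens=5_000_000):
    """Days until a one-time token grant is used up at a steady rate."""
    return grant_tokens / (messages_per_day * tokens_per_message)


# Hypothetical usage profiles; tokens_per_message counts prompt plus reply.
light = grant_runway_days(messages_per_day=20, tokens_per_message=750)
heavy = grant_runway_days(messages_per_day=50, tokens_per_message=1_650)
print(f"{light / 30:.1f} months, {heavy / 30:.1f} months")
# 11.1 months, 2.0 months
```

Long system prompts raise tokens per message, so trimming them is the cheapest way to extend the grant.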

The catch: One-time grant, not renewable. When it runs out, V4 Flash costs $0.14/$0.28 per million tokens (still nearly free, but not zero). For the complete guide to running a $0/month agent, our free agent setup post covers how to stretch the grant.
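For a sense of what "nearly free" means once the grant is gone, here is a rough monthly estimate. It assumes the two prices are input and output rates per million tokens (the usual convention, not stated explicitly above), and the message sizes are hypothetical.

```python
def monthly_cost_usd(input_tokens, output_tokens, in_price=0.14, out_price=0.28):
    """Cost in USD, with prices quoted per million tokens."""
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


# Hypothetical month: 30 messages/day, 1,000 input and 300 output tokens each.
messages = 30 * 30
cost = monthly_cost_usd(messages * 1_000, messages * 300)
print(f"${cost:.2f} per month")
# $0.20 per month
```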

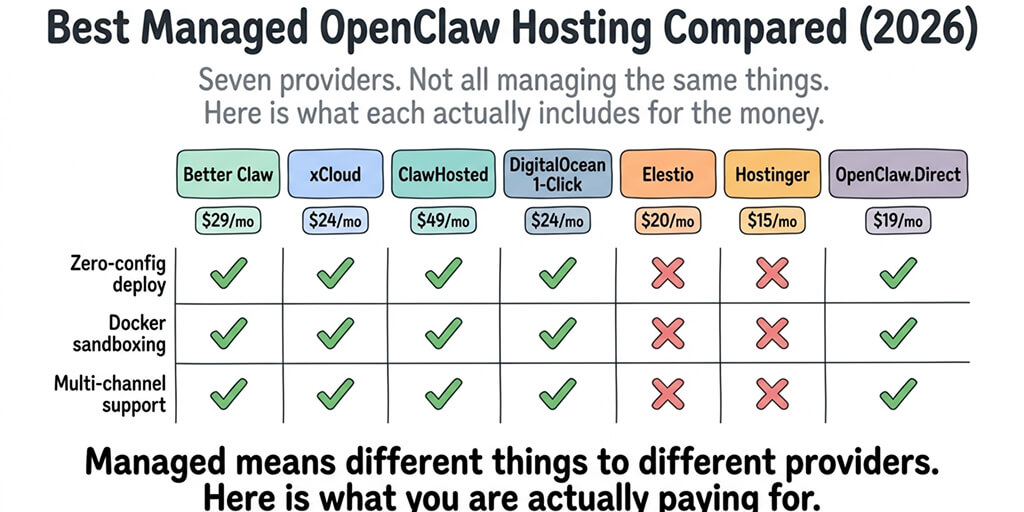

If configuring multiple free providers, managing fallback chains, and debugging rate limits across Gemini, Groq, OpenRouter, and DeepSeek sounds like more API juggling than you want, BetterClaw supports all of them from a dropdown. Paste one API key. Select the model. The platform handles routing and fallback. Free tier with 1 agent and BYOK. $19/month per agent for Pro.

6. Qwen3 via Ollama (Unlimited, Local, Hardware-Dependent)

Capacity: Unlimited. Runs on your hardware.

How to get it: Install Ollama (ollama.com). Run ollama pull qwen3. No API key. No account. No cost beyond electricity.

Why it's #6: Completely private. No data leaves your machine. No rate limits. No daily caps. The 8B model runs on 8GB RAM. The 32B model needs 16-32GB.

The catch: Speed depends entirely on your hardware. MacBook with 16GB RAM: 10-15 tokens/second (8B model). Desktop with 32GB RAM and a GPU: usable for the 32B model. Without a GPU, larger models are too slow for conversational agents. Also: you still need a VPS or local server running 24/7 for the agent to be always-on.
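To see where your own hardware lands, you can measure generation speed directly. Ollama's local chat endpoint reports `eval_count` (tokens generated) and `eval_duration` (nanoseconds) in a non-streaming response. The sketch below assumes Ollama is running on its default port with `qwen3` already pulled; the function names are illustrative.

```python
import json
import urllib.request

OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"  # Ollama's default local port


def tokens_per_second(response):
    """Generation speed from the eval_count / eval_duration (ns) fields."""
    return response["eval_count"] / response["eval_duration"] * 1e9


def chat_once(prompt, model="qwen3", timeout=300):
    """Send one non-streaming chat request to a local Ollama server."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }
    req = urllib.request.Request(
        OLLAMA_CHAT_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)


# Example with a made-up response: 240 tokens generated in 20 seconds.
sample = {"eval_count": 240, "eval_duration": 20_000_000_000}
print(f"{tokens_per_second(sample):.1f} tokens/s")
# 12.0 tokens/s
```

With a live server, `tokens_per_second(chat_once("Say hello"))` gives the real figure for your machine.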

7. Gemma 3 27B via OpenRouter

Daily capacity: Approximately 200 requests/day. 20 requests/minute.

How to get it: Same OpenRouter account as #2. Use model google/gemma-3-27b:free.

Why it's #7: Google's open-weight model. Good for classification, extraction, and structured tasks. Smaller than Llama 70B but faster on the free tier.

The catch: Same OpenRouter free tier limitations (deprioritized, variable latency, 200/day). Quality is below the top 3 for conversational tasks. Best as a fallback model, not a primary.

The Free Model Strategy That Actually Works

Here's what nobody tells you about free models for OpenClaw.

Don't pick one. Stack three.

- Primary: Gemini 2.5 Flash (1,500 requests/day, good quality).

- Fallback: DeepSeek V4 Flash via OpenRouter (kicks in when Gemini is rate-limited).

- Heartbeat: Qwen3 32B via Groq (48 heartbeats/day at zero cost from a 14,400/day cap).

Total daily capacity: 1,700+ conversational requests (Gemini 1,500 + OpenRouter 200), with heartbeats handled separately on Groq. Monthly cost: $0. No credit card on any of them.
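The primary-then-fallback part of the stack boils down to "try each provider in order until one answers." Here is a minimal sketch of that logic. The provider functions are stand-ins, `RateLimited` is a hypothetical exception, and none of this describes how OpenClaw implements fallback internally.

```python
class RateLimited(Exception):
    """Raised by a provider call when the provider rejects for rate limits."""


def ask_with_fallback(prompt, providers):
    """Try each (name, call) pair in order; return (name, reply) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except RateLimited as exc:
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers rate-limited: " + "; ".join(errors))


# Stand-in provider functions; real ones would call each provider's API
# and raise RateLimited on an HTTP 429 response.
def gemini(prompt):
    raise RateLimited("daily cap reached")


def deepseek_openrouter(prompt):
    return f"reply to: {prompt}"


chain = [
    ("gemini-2.5-flash", gemini),
    ("deepseek/deepseek-v4-flash:free", deepseek_openrouter),
]
print(ask_with_fallback("draft an email", chain))
# ('deepseek/deepseek-v4-flash:free', 'reply to: draft an email')
```

Only rate-limit errors trigger the fallback here. Other failures (bad key, malformed request) propagate immediately, so they are not silently masked by the next provider.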

The quality trade-off is real. Claude Opus 4.7 and GPT-5.5 are measurably better on complex tasks. But for personal agents handling Q&A, email drafts, scheduling, and FAQ, the free stack is adequate. The community consensus since the Anthropic ban: "free models are 80-85% of Claude quality for the 80% of tasks that don't need Claude quality."

If you want the free models plus managed hosting, verified skills, smart context management, and zero infrastructure, give BetterClaw a try. Free tier with 1 agent and BYOK. Use any of these seven models at $0. $19/month per agent for Pro when you need more. The platform handles the provider routing. You handle the conversations.

Frequently Asked Questions

What is the best free model for OpenClaw in 2026?

Google Gemini 2.5 Flash is the best overall free model for OpenClaw. It offers 1,500 requests/day with no credit card, a 1M token context window, and quality competitive with GPT-5.4 Mini. For higher quality at lower daily volume, DeepSeek V4 Flash via OpenRouter's free tier (:free endpoint) provides about 200 requests/day with better reasoning capability.

Can I run OpenClaw for free without a credit card?

Yes. Three providers offer free API access with no credit card: Google AI Studio (Gemini 2.5 Flash, 1,500/day), Groq (Llama 3.3 70B, 1,000/day), and OpenRouter (29+ free models, ~200/day). DeepSeek also gives 5M free tokens on signup without a credit card. Combined with BetterClaw's free tier (1 agent, hosting included, BYOK), you can run a complete agent at $0/month.

How many messages can a free OpenClaw agent handle per day?

With stacked free tiers: 1,700+ messages/day (Gemini 1,500 + OpenRouter 200). With a single provider: 200-1,500/day depending on which free tier you use. Groq's Qwen3 32B offers 14,400/day but with lower quality. For comparison, a typical personal agent processes 20-50 messages/day, well within any single free tier.

Are free models good enough for real agent tasks?

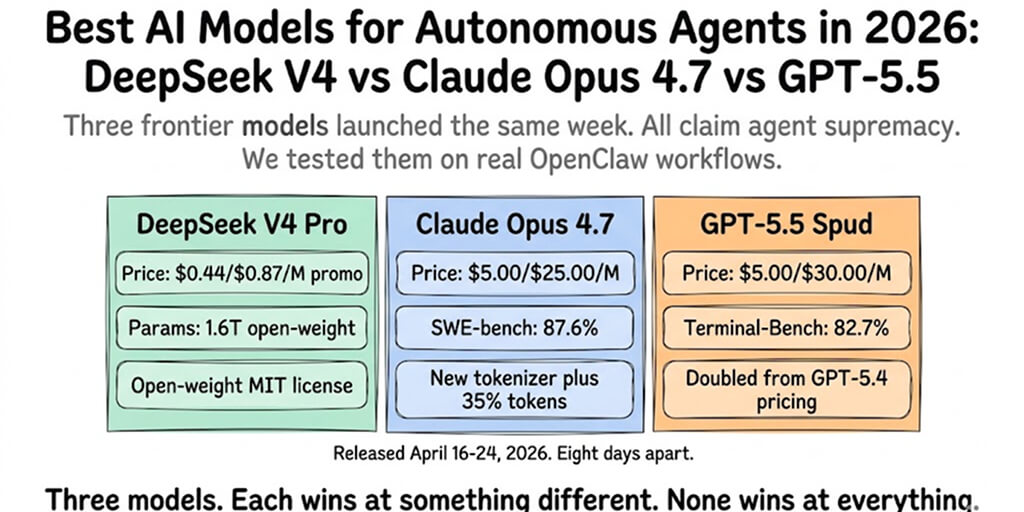

For routine tasks (Q&A, FAQ, email drafts, scheduling): yes. Free models deliver 80-85% of Claude quality on predictable, well-defined tasks. For complex reasoning, creative writing, and multi-step research: no. Claude Opus 4.7 ($5/$25/M) and GPT-5.5 ($5/$30/M) are measurably better. Most personal agents handle routine tasks 80%+ of the time.

What's the catch with free AI models?

Three catches: daily rate limits (200-1,500 requests/day), data privacy (Google AI Studio may use your prompts for training), and latency (OpenRouter free tiers are deprioritized during peak hours). Local models via Ollama avoid all three catches but require $400-2,000+ in hardware. The cheapest paid option after free tiers is DeepSeek V4 Flash at $0.14/$0.28/M tokens.