They share a name, a UI, and almost nothing else. Here's what each one actually remembers, where it forgets, and why most people are using the wrong one.

I was 30 minutes into a Cowork session, watching it slowly retrace context I'd already covered in Chat the day before, when it hit me.

These two things do not talk to each other.

I'd told Claude in Chat about our pricing tiers, our positioning, the exact subject line formula we use for cold outreach. Then I opened Cowork to actually produce a deck. And it asked me what we sell.

If you've used both, you've felt this. The naming makes it sound like one product with two modes. It is not. Chat Memory and Cowork Memory are two different memory systems with two different philosophies, two different scopes, and two different sets of tradeoffs. And most people pick the wrong one for the job.

Here's the breakdown that should have been in the docs.

What Chat Memory Actually Does

Chat Memory is the layer you get when you talk to Claude at claude.ai or in the mobile app. As of March 2026, it's available on every plan, including the free tier. Before that, it required a paid plan.

The mechanic is two-part. First, Claude runs an automatic synthesis roughly every 24 hours, scanning your standalone conversations and building a compressed profile of you: your name, your role, what you're working on, how you like things explained. Second, you can manually pin facts. Tell Claude "remember that I prefer Postgres" and it'll store that explicitly.

Then, at the start of every new chat outside of a project, that synthesis gets injected as context.
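
To make the two-part mechanic concrete, here's a minimal sketch of a profile-style memory. It is a toy model, not Anthropic's implementation: the `ChatMemory` class, its method names, and the "first sentence of the three most recent conversations" digest are all assumptions made for illustration. A real system would summarize with a model.

```python
from dataclasses import dataclass, field


@dataclass
class ChatMemory:
    """Toy model of a profile-style memory (hypothetical, for illustration)."""

    synthesis: str = ""                               # compressed profile, rebuilt periodically
    pinned: list[str] = field(default_factory=list)   # facts the user asked to keep

    def pin(self, fact: str) -> None:
        """Explicit 'remember that ...' request; duplicates are ignored."""
        if fact not in self.pinned:
            self.pinned.append(fact)

    def resynthesize(self, conversations: list[str]) -> None:
        """Stand-in for the periodic synthesis.

        Keeps only the first sentence of each of the three most recent
        conversations, to show that a digest drops detail.
        """
        recent = conversations[-3:]
        self.synthesis = " ".join(c.split(".")[0].strip() + "." for c in recent)

    def context_for_new_chat(self, in_project: bool) -> str:
        """The profile is injected into standalone chats only, not project chats."""
        if in_project:
            return ""
        lines = [self.synthesis] + [f"Pinned: {p}" for p in self.pinned]
        return "\n".join(line for line in lines if line)
```

Feed it a conversation that says "User is a founder building a B2B SaaS. They chose Postgres after comparing it with MySQL." and the injected context keeps the first sentence and drops the second. That's the profile-versus-record distinction in miniature.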

A few things to know about it:

- It's a profile, not a record. Claude knows you're a founder building a B2B SaaS. It does not know why you chose Postgres over MySQL last Tuesday, what schema you settled on, or what tradeoffs you weighed.

- It's scoped. Memory from your standalone chats does not leak into your Projects. Each Project has its own isolated memory pool. The Claude in your meal-planning project has no idea what the Claude in your content-strategy project knows. This is correct behavior, but it surprises people the first time.

- It has recency bias. The synthesis is a living summary. Old conversations fade. If you discussed something six months ago and haven't mentioned it since, don't count on Claude remembering.

- It's transparent. When Claude references a memory, it tells you. ChatGPT does the same thing silently. This is the single biggest functional difference between Claude's memory and ChatGPT's.

Chat Memory is a profile of who you are. It is not a record of what you've done.

What Cowork Memory Actually Does

Cowork is Anthropic's autonomous desktop agent. It reads and writes files on your machine, runs multi-step tasks in a sandboxed VM, and delivers finished artifacts to a folder. It launched as a research preview on January 12, 2026.

And its memory system is built for a completely different job.

Cowork Memory lives inside Projects. Per Anthropic's own docs, memory is supported within projects but is not retained across standalone Cowork sessions. That's the official line. You set up a Cowork Project, point it at a folder, give it standing instructions, and across runs inside that project, it carries forward the relevant context.

There's also an enterprise layer some people conflate with this. Cowork Customize lets workspace administrators set persistent memory at the workspace level. Personas, brand voice, terminology, policy rules. That's a separate product layer, mostly for teams.

Here's the part nobody tells you: Cowork's memory is artifact-aware in a way Chat Memory will never be. Because Cowork operates over files, the context isn't just "what you told me." It's "what's in the folder, what got written last run, what's in the manifest." That's a fundamentally different kind of memory.
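
Here's a rough sketch of what "artifact-aware" context could look like. Everything in it is an assumption for illustration: the `manifest.json` file name, its `written` key, and both function names are hypothetical, and this is not how Cowork actually stores state. The point is only that context gets rebuilt from the folder itself rather than from a chat transcript.

```python
import json
from pathlib import Path

MANIFEST_NAME = "manifest.json"  # hypothetical file name, not a Cowork convention


def build_project_context(folder: Path, instructions: str) -> dict:
    """Assemble context from standing instructions, current files, and the last run."""
    manifest_path = folder / MANIFEST_NAME
    last_run = json.loads(manifest_path.read_text()) if manifest_path.exists() else {}
    files = sorted(
        p.relative_to(folder).as_posix()
        for p in folder.rglob("*")
        if p.is_file() and p != manifest_path
    )
    return {
        "instructions": instructions,                       # what you told the agent
        "files": files,                                     # what's in the folder now
        "written_last_run": last_run.get("written", []),    # what the previous run produced
    }


def record_run(folder: Path, written: list[str]) -> None:
    """Persist what this run produced so the next run can pick it up."""
    (folder / MANIFEST_NAME).write_text(json.dumps({"written": written}))
```

Call `record_run` at the end of one run and `build_project_context` at the start of the next, and the second run knows what the first one wrote without anyone re-explaining it.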

But it's also brittle in its own ways. Cowork activity is not captured in audit logs, the Compliance API, or data exports, and Anthropic explicitly says not to use Cowork for regulated workloads. And, as noted above, memory does not persist across standalone sessions outside of projects.

Here's Where It Gets Messy

Let me show you what trips everyone up.

You can have Chat Memory enabled, Project Memory enabled, and Cowork Memory enabled simultaneously, and they will all coexist as separate, isolated pools.

So if you're me, three weeks ago:

- Chat (standalone) knew my name, my company, my preferences.

- Chat Project "Q2 Strategy" had detailed context on our roadmap.

- Cowork Project "Quarterly Deck" had access to the folder of source files.

- None of these three knew what the other two knew.

The result: I'd brief Cowork on the strategy, then go back to standalone Chat to write a follow-up post, and standalone Chat would ask me what the strategy was.

This isn't a bug. This is intentional design. Anthropic explicitly siloed these to prevent client data from one project bleeding into another. But if you don't know it's happening, Claude will seem to have selective amnesia, and you'll waste hours re-explaining yourself.
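
If it helps to see the silo pattern in code, here's a minimal sketch. The `ScopedMemory` class and the scope names are made up for this example. It mirrors the behavior described above, not any actual Anthropic code.

```python
from collections import defaultdict


class ScopedMemory:
    """Each scope gets its own pool; reads never cross scopes (illustrative only)."""

    def __init__(self) -> None:
        self._pools: dict[str, list[str]] = defaultdict(list)

    def remember(self, scope: str, fact: str) -> None:
        self._pools[scope].append(fact)

    def recall(self, scope: str) -> list[str]:
        # .get avoids creating an empty pool as a side effect of reading
        return list(self._pools.get(scope, []))


memory = ScopedMemory()
memory.remember("chat:standalone", "name, company, preferences")
memory.remember("chat-project:Q2 Strategy", "roadmap details")
memory.remember("cowork-project:Quarterly Deck", "folder of source files")
```

Ask `memory.recall("chat:standalone")` about the roadmap and it comes back empty-handed, because there is no lookup path from one scope into another. The isolation comes from how the data is keyed, not from a setting you forgot to flip.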

Why This Matters More Than It Looks

Step back and think about what's actually happening here.

Anthropic is building memory as a series of carefully scoped, opt-in containers. Each scope has different retention rules, different access patterns, different export controls. Compare this to ChatGPT, which builds a single global memory that follows you everywhere.

The Anthropic approach is more annoying day-to-day. It's also more defensible if you ever need to delete something, isolate client work, or comply with an audit.

But for builders, this scoping has real consequences:

- If you're a developer iterating on a project, you want Cowork Project Memory. The agent can read files, modify code, remember what it did last run, and pick up where it left off.

- If you're a founder doing strategy work, you want a Chat Project. Memory is scoped to the project, doesn't leak into your personal chats, and the synthesis is dense enough to give Claude real context.

- If you want general "Claude knows me," that's standalone Chat Memory. Don't expect it to know about your specific Project work, though.

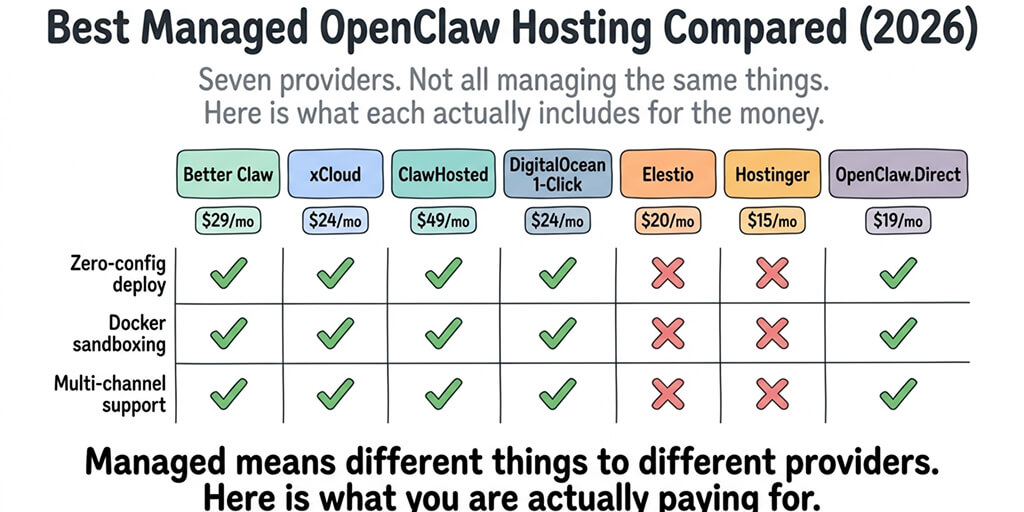

If you've been using BetterClaw or building agents on OpenClaw, this scoping will feel familiar. It's the same pattern as workspace isolation in agent architecture. Each scope is sandboxed. Memory is durable but constrained. That's the right design when you're building production systems, even if it's clunky for casual use.

The Agent Memory Problem Nobody Is Solving

Here's where this comparison gets interesting for anyone building actual agents.

Chat Memory and Cowork Memory are both built around the same assumption: a single human user with a coherent set of preferences and ongoing projects. That works for personal productivity. It does not work for autonomous agents that run 24/7, talk to dozens of people, execute tasks across multiple channels, and need to maintain state for weeks at a time.

If you've tried to give an OpenClaw agent real persistent memory, you know what I mean. The default system writes to MEMORY.md files, splits them into ~400-token chunks, and uses semantic search to retrieve them. Community benchmarks show this hitting roughly 45% recall accuracy on complex queries. Old context drifts. The agent forgets what it knew about a customer two weeks ago. People have built elaborate three-layer memory systems just to get reliable retention.
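
For readers who haven't seen the chunk-and-retrieve pattern, here's a simplified sketch of it. This is not OpenClaw's code. It assumes whitespace-separated words as a stand-in for tokens and a bag-of-words cosine similarity as a stand-in for real embeddings, and all function names are hypothetical.

```python
import math
import re
from collections import Counter


def chunk_words(text: str, max_tokens: int = 400) -> list[str]:
    """Split text into chunks of at most max_tokens words.

    Words approximate tokens here; a real tokenizer would count differently.
    """
    words = text.split()
    return [" ".join(words[i:i + max_tokens]) for i in range(0, len(words), max_tokens)]


def bag_of_words(text: str) -> Counter:
    """Crude stand-in for an embedding: lowercase term counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(chunks: list[str], query: str, k: int = 3) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = bag_of_words(query)
    ranked = sorted(chunks, key=lambda c: cosine(bag_of_words(c), q), reverse=True)
    return ranked[:k]
```

Even in this toy version you can see where things can go wrong: a fact that straddles a chunk boundary gets split in two, and a query only finds what it happens to resemble.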

This is the gap. Claude's first-party memory is for humans using Claude. Agent memory, for systems that run autonomously, is still mostly DIY.

We built BetterClaw because we were tired of this DIY tax. Smart context management that doesn't burn tokens on housekeeping. Persistent memory with hybrid vector plus keyword search. Workspace isolation by default. Free tier with one agent (bring your own key), Pro at $19/agent/month. Your agent gets the memory architecture Anthropic built into Cowork Customize, but for autonomous, multi-channel use.
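
"Hybrid vector plus keyword search" is a general retrieval technique, so here's a generic sketch of the idea. It is not BetterClaw's implementation. The `alpha` weighting, the function names, and the assumption that vector scores arrive already normalized to the 0 to 1 range are all choices made for this example.

```python
import re


def keyword_score(query: str, text: str) -> float:
    """Fraction of distinct query terms that appear verbatim in the text."""
    q_terms = set(re.findall(r"[a-z0-9]+", query.lower()))
    t_terms = set(re.findall(r"[a-z0-9]+", text.lower()))
    return len(q_terms & t_terms) / len(q_terms) if q_terms else 0.0


def hybrid_rank(query: str, candidates: list[tuple[str, float]], alpha: float = 0.5) -> list[str]:
    """Rank candidates by a weighted blend of vector and keyword scores.

    candidates: (text, vector_score) pairs, with vector_score assumed to be in [0, 1]
    alpha:      weight on the vector score; (1 - alpha) goes to the keyword score
    """
    scored = [
        (alpha * vec + (1 - alpha) * keyword_score(query, text), text)
        for text, vec in candidates
    ]
    return [text for _, text in sorted(scored, key=lambda s: s[0], reverse=True)]
```

The keyword half earns its keep on exact identifiers like order numbers, SKUs, and names, which embedding similarity tends to blur. With `alpha=1.0` the function reduces to pure vector ranking.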

When Cowork Memory Is the Right Tool

Use Cowork Memory when:

- You're working over a folder of files across multiple sessions. Cowork can see the artifacts, remember its previous output, and iterate.

- You want delegation, not conversation. Set up the project, point at the folder, leave standing instructions. Come back to finished work.

- You're producing artifacts: spreadsheets, decks, structured outputs. The memory of "what's in the folder right now" is more useful than the memory of "what we discussed."

Don't use Cowork Memory when:

- You want continuity across many small ad-hoc questions. That's Chat.

- You need compliance, audit logs, or regulated data handling. Anthropic explicitly told you not to.

- You're trying to give an agent persistent memory across users or channels. That's a different architecture entirely.

When Chat Memory Is the Right Tool

Use Chat Memory when:

- You want Claude to know who you are across conversations. Job, projects, preferences, communication style.

- You want to scope context to a specific workstream. Use a Project for that. Memory stays inside.

- You're doing thinking, writing, coding-by-conversation. Anything where the artifact is the chat itself, not a file on disk.

Don't use Chat Memory when:

- You need Claude to remember exact details, decisions, or reasoning chains. The synthesis compresses too much. Save the chat URL or paste the relevant parts into a Project's instructions.

- You're working in regulated contexts. Use Temporary Chat (the ghost icon) for client-sensitive work. No memory created, nothing persisted.
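
If you want the two checklists above as a single decision rule, here's one way to write it down. The `Task` fields and the order of the checks are my own reading of the lists, not anything official.

```python
from dataclasses import dataclass


@dataclass
class Task:
    regulated: bool = False            # compliance, audit, or regulated data
    multi_user_agent: bool = False     # autonomous agent across users or channels
    produces_files: bool = False       # decks, spreadsheets, structured outputs
    ongoing_workstream: bool = False   # context worth scoping to one project


def pick_memory(task: Task) -> str:
    """Return the memory scope that fits the task, checking hard constraints first."""
    if task.regulated:
        return "Temporary Chat (no memory)"
    if task.multi_user_agent:
        return "custom agent memory"
    if task.produces_files:
        return "Cowork Project"
    if task.ongoing_workstream:
        return "Chat Project"
    return "standalone Chat Memory"
```

The ordering is the design choice here. Regulated work and multi-user agents are ruled out before convenience is considered, so a task that both produces files and touches regulated data never lands in Cowork.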

What I Actually Do Now

After a few months of bouncing between these, here's my setup:

- Standalone Chat: minimal memory. Just enough to know I'm a founder so I get the right register of response.

- One Chat Project per ongoing workstream. Each one has dense standing instructions, and the project memory accumulates over time.

- Cowork only when I need files produced. Project-scoped. Standing instructions on the folder. I treat it like a coworker, not a chatbot.

For the actual agent that runs 24/7 across Slack, WhatsApp, and email? Different system entirely. Anthropic doesn't have a product for that. They have building blocks; you assemble them. Or you use a platform built for it.

Where This Is Headed

Anthropic's "Dreaming" feature, announced in May 2026, is the early signal of where this is going. Agents review past sessions, extract patterns, and self-improve between runs. It's in research preview for Managed Agents on the API.

That's the future. Not memory as a profile. Not memory as a project folder. Memory as something the agent actively curates, prunes, and reasons over.

Chat Memory will look quaint in two years. Cowork Memory will get absorbed into something bigger. The interesting work is happening in the agent layer, where memory has to be a first-class system, not a feature toggle.

If you're building anything autonomous, start there. Don't wait for the chat product to grow into an agent platform. The shape of those problems is different.

Frequently Asked Questions

What is the difference between Cowork Memory and Claude Chat Memory?

Chat Memory is a profile of you that gets carried into standalone Claude conversations at claude.ai. Cowork Memory is project-scoped context inside the Cowork desktop agent, tied to a specific folder of files. They do not share data. Chat Memory is about who you are. Cowork Memory is about what's in the project.

Does Cowork Memory carry over between sessions like Chat Memory does?

Only inside Projects. Per Anthropic's documentation, Cowork Memory is supported within projects but is not retained across standalone Cowork sessions. If you start a fresh Cowork session outside of a project, it has no prior context.

How do I make Claude remember context across both Chat and Cowork?

You can't, directly. They're isolated by design. The workaround is to put shared context into a Chat Project's standing instructions and into a Cowork Project's setup separately. Some teams export their Chat Memory and paste the key facts into Cowork's project instructions to bridge the gap.
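
One low-tech way to keep the two copies in sync is to hold the shared facts in one place and generate the text you paste into each project. A minimal sketch follows. The function name, the fact list, and the output layout are placeholders, and nothing here calls a Claude or Cowork API. You still paste the result in by hand.

```python
def render_instructions(project_name: str, shared_facts: list[str], extra: str = "") -> str:
    """Build a plain-text instruction block to paste into a project's setup."""
    lines = [f"Project: {project_name}", "Shared context:"]
    lines += [f"- {fact}" for fact in shared_facts]
    if extra:
        lines += ["", extra]
    return "\n".join(lines)


# Placeholder facts; replace with your own. Edit here, then re-render both blocks.
SHARED_FACTS = [
    "Pricing tiers: <fill in>",
    "Positioning: <fill in>",
    "Cold outreach subject line formula: <fill in>",
]

chat_block = render_instructions("Q2 Strategy", SHARED_FACTS)
cowork_block = render_instructions(
    "Quarterly Deck", SHARED_FACTS, extra="Write finished files to the project folder."
)
```

Both blocks come from the same list, so when pricing changes you update one line and re-paste, instead of hunting down which project still has the old number.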

Is Cowork Memory available on the free Claude plan?

Cowork itself requires a paid Claude plan (Pro, Max, Team, or Enterprise). Chat Memory, on the other hand, has been available to all users, including the free tier, since March 2026. So you can have Chat Memory without Cowork, but not the reverse.

Is Claude's memory system safe for client or regulated data?

Chat Memory is reasonable for general professional work but not for regulated data. Cowork is explicitly not safe for regulated workloads. Anthropic states that Cowork activity is not captured in audit logs, the Compliance API, or data exports. For attorney-client material, HIPAA-covered information, or SEC-regulated data, use Temporary Chat mode, which creates no persistent memory.